In mathematics, specifically in linear algebra, matrix multiplication is a binary operation that produces a matrix from two matrices. For matrix multiplication, the number of columns in the first matrix must be equal to the number of rows in the second matrix. The resulting matrix, known as the matrix product, has the number of rows of the first and the number of columns of the second matrix. The product of matrices $\mathbf{A}$ and $\mathbf{B}$ is denoted as $\mathbf{AB}$.[1]

Matrix multiplication was first described by the French mathematician Jacques Philippe Marie Binet in 1812,[2] to represent the composition of linear maps that are represented by matrices. Matrix multiplication is thus a basic tool of linear algebra, and as such has numerous applications in many areas of mathematics, as well as in applied mathematics, statistics, physics, economics, and engineering.[3][4] Computing matrix products is a central operation in all computational applications of linear algebra.

Notation

This article will use the following notational conventions: matrices are represented by capital letters in bold, e.g. $\mathbf{A}$; vectors in lowercase bold, e.g. $\mathbf{a}$; and entries of vectors and matrices are italic (they are numbers from a field), e.g. $A$ and $a$. Index notation is often the clearest way to express definitions, and is used as standard in the literature. The entry in row $i$, column $j$ of matrix $\mathbf{A}$ is indicated by $(\mathbf{A})_{ij}$, $A_{ij}$ or $a_{ij}$. In contrast, a single subscript, e.g. $\mathbf{A}_1$, $\mathbf{A}_2$, is used to select a matrix (not a matrix entry) from a collection of matrices.

Definitions

Matrix times matrix

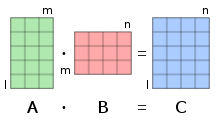

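If $\mathbf{A}$ is an $m \times n$ matrix and $\mathbf{B}$ is an $n \times p$ matrix, with entries $a_{ij}$ and $b_{ij}$ respectively, the matrix product $\mathbf{C} = \mathbf{AB}$ (denoted without multiplication signs or dots) is defined to be the $m \times p$ matrix[5][6][7][8] with entries $c_{ij}$ such that

$$c_{ij} = a_{i1} b_{1j} + a_{i2} b_{2j} + \cdots + a_{in} b_{nj} = \sum_{k=1}^{n} a_{ik} b_{kj}$$

for $i = 1, \ldots, m$ and $j = 1, \ldots, p$.

That is, the entry $c_{ij}$ of the product is obtained by multiplying term-by-term the entries of the $i$th row of $\mathbf{A}$ and the $j$th column of $\mathbf{B}$, and summing these $n$ products. In other words, $c_{ij}$ is the dot product of the $i$th row of $\mathbf{A}$ and the $j$th column of $\mathbf{B}$.

Therefore, $\mathbf{AB}$ can also be written as

$$\mathbf{C} = \begin{pmatrix} a_{11}b_{11} + \cdots + a_{1n}b_{n1} & \cdots & a_{11}b_{1p} + \cdots + a_{1n}b_{np} \\ \vdots & \ddots & \vdots \\ a_{m1}b_{11} + \cdots + a_{mn}b_{n1} & \cdots & a_{m1}b_{1p} + \cdots + a_{mn}b_{np} \end{pmatrix}$$

Thus the product $\mathbf{AB}$ is defined if and only if the number of columns in $\mathbf{A}$ equals the number of rows in $\mathbf{B}$,[1] in this case $n$.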
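The definition above translates directly into a triple loop. The following is a minimal sketch in plain Python, assuming matrices are stored as lists of rows; the function name `matmul` and the sample matrices are illustrative choices, not part of any particular library.

```python
def matmul(a, b):
    """Return the product of a (m x n) and b (n x p), both given as lists of rows."""
    m, n, p = len(a), len(b), len(b[0])
    # The product is defined only if every row of a has as many entries as b has rows.
    if any(len(row) != n for row in a):
        raise ValueError("number of columns of a must equal number of rows of b")
    # Entry (i, j) is the dot product of row i of a and column j of b.
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(p)]
            for i in range(m)]


a = [[1, 2, 3],
     [4, 5, 6]]        # 2 x 3
b = [[7, 8],
     [9, 10],
     [11, 12]]         # 3 x 2
print(matmul(a, b))    # [[58, 64], [139, 154]]
```

The result has the number of rows of the first factor (2) and the number of columns of the second (2), as stated above.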

In most scenarios, the entries are numbers, but they may be any kind of mathematical objects for which an addition and a multiplication are defined, that are associative, and such that the addition is commutative, and the multiplication is distributive with respect to the addition. In particular, the entries may be matrices themselves (see block matrix).

Matrix times vector

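A vector $\mathbf{x}$ of length $n$ can be viewed as a column vector, corresponding to an $n \times 1$ matrix $\mathbf{X}$ whose entries are given by $\mathbf{X}_{i1} = \mathbf{x}_i$. If $\mathbf{A}$ is an $m \times n$ matrix, the matrix-times-vector product denoted by $\mathbf{Ax}$ is then the vector $\mathbf{y}$ that, viewed as a column vector, is equal to the $m \times 1$ matrix $\mathbf{AX}$. In index notation, this amounts to:

$$y_i = \sum_{j=1}^{n} a_{ij} x_j$$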

One way of looking at this is that the changes from "plain" vector to column vector and back are assumed and left implicit.

Vector times matrix

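Similarly, a vector $\mathbf{x}$ of length $n$ can be viewed as a row vector, corresponding to a $1 \times n$ matrix. To make it clear that a row vector is meant, it is customary in this context to represent it as the transpose of a column vector; thus, one will see notations such as $\mathbf{x}^{\mathsf{T}}\mathbf{A}$. The identity $\mathbf{x}^{\mathsf{T}}\mathbf{A} = (\mathbf{A}^{\mathsf{T}}\mathbf{x})^{\mathsf{T}}$ holds. In index notation, if $\mathbf{A}$ is an $n \times p$ matrix, $\mathbf{x}^{\mathsf{T}}\mathbf{A} = \mathbf{y}^{\mathsf{T}}$ amounts to:

$$y_k = \sum_{j=1}^{n} x_j a_{jk}$$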

Vector times vector

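The dot product $\mathbf{a} \cdot \mathbf{b}$ of two vectors $\mathbf{a}$ and $\mathbf{b}$ of equal length is equal to the single entry of the $1 \times 1$ matrix resulting from multiplying these vectors as a row and a column vector, thus: $\mathbf{a}^{\mathsf{T}}\mathbf{b}$ (or $\mathbf{b}^{\mathsf{T}}\mathbf{a}$, which results in the same $1 \times 1$ matrix).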
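The three vector cases can be sketched in a few lines of plain Python. The helper names `matvec`, `vecmat`, `dot` and `transpose` below are hypothetical, and vectors are assumed to be plain lists, so the switch between "plain", row and column vectors stays implicit.

```python
def matvec(a, x):
    """Matrix times vector: y[i] = sum over j of a[i][j] * x[j]."""
    return [sum(a_ij * x_j for a_ij, x_j in zip(row, x)) for row in a]


def vecmat(x, a):
    """Vector times matrix: y[k] = sum over j of x[j] * a[j][k]."""
    return [sum(x[j] * a[j][k] for j in range(len(x))) for k in range(len(a[0]))]


def dot(u, v):
    """Dot product of two vectors of equal length."""
    return sum(u_i * v_i for u_i, v_i in zip(u, v))


def transpose(a):
    """Interchange rows and columns."""
    return [list(col) for col in zip(*a)]


a = [[1, 2],
     [3, 4],
     [5, 6]]                        # 3 x 2
print(matvec(a, [1, 1]))            # [3, 7, 11]
print(vecmat([1, 0, 2], a))         # [11, 14]
print(dot([1, 2, 3], [4, 5, 6]))    # 32
```

Multiplying a vector on the left of a matrix gives the same numbers as multiplying the transposed matrix by that vector, so `vecmat(x, a)` and `matvec(transpose(a), x)` agree.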

Illustration

The figure to the right illustrates diagrammatically the product of two matrices $\mathbf{A}$ and $\mathbf{B}$, showing how each intersection in the product matrix corresponds to a row of $\mathbf{A}$ and a column of $\mathbf{B}$.

The values at the intersections, marked with circles in the figure to the right, are:

Fundamental applications

Historically, matrix multiplication has been introduced for facilitating and clarifying computations in linear algebra. This strong relationship between matrix multiplication and linear algebra remains fundamental in all mathematics, as well as in physics, chemistry, engineering and computer science.

Linear maps

If a vector space has a finite basis, its vectors are each uniquely represented by a finite sequence of scalars, called a coordinate vector, whose elements are the coordinates of the vector on the basis. These coordinate vectors form another vector space, which is isomorphic to the original vector space. A coordinate vector is commonly organized as a column matrix (also called a column vector), which is a matrix with only one column. So, a column vector represents both a coordinate vector, and a vector of the original vector space.

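A linear map $A$ from a vector space of dimension $n$ into a vector space of dimension $m$ maps a column vector

$$\mathbf{x} = \begin{pmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{pmatrix}$$

onto the column vector

$$\mathbf{y} = A(\mathbf{x}) = \begin{pmatrix} a_{11}x_1 + \cdots + a_{1n}x_n \\ a_{21}x_1 + \cdots + a_{2n}x_n \\ \vdots \\ a_{m1}x_1 + \cdots + a_{mn}x_n \end{pmatrix}.$$

The linear map $A$ is thus defined by the matrix

$$\mathbf{A} = \begin{pmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ a_{21} & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{m1} & a_{m2} & \cdots & a_{mn} \end{pmatrix},$$

and maps the column vector $\mathbf{x}$ to the matrix product

$$\mathbf{y} = \mathbf{A}\mathbf{x}.$$

If $B$ is another linear map from the preceding vector space of dimension $m$ into a vector space of dimension $p$, it is represented by a $p \times m$ matrix $\mathbf{B}$. A straightforward computation shows that the matrix of the composite map $B \circ A$ is the matrix product $\mathbf{BA}$. The general formula $(B \circ A)(\mathbf{x}) = B(A(\mathbf{x}))$ that defines the function composition is instanced here as a specific case of associativity of matrix product (see § Associativity below):

$$(\mathbf{BA})\mathbf{x} = \mathbf{B}(\mathbf{A}\mathbf{x}).$$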

Geometric rotations

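Using a Cartesian coordinate system in a Euclidean plane, the rotation by an angle $\alpha$ around the origin is a linear map. More precisely,

$$\begin{pmatrix} x' \\ y' \end{pmatrix} = \begin{pmatrix} \cos\alpha & -\sin\alpha \\ \sin\alpha & \cos\alpha \end{pmatrix} \begin{pmatrix} x \\ y \end{pmatrix},$$

where the source point $(x, y)$ and its image $(x', y')$ are written as column vectors.

The composition of the rotation by $\alpha$ and that by $\beta$ then corresponds to the matrix product

$$\begin{pmatrix} \cos\beta & -\sin\beta \\ \sin\beta & \cos\beta \end{pmatrix} \begin{pmatrix} \cos\alpha & -\sin\alpha \\ \sin\alpha & \cos\alpha \end{pmatrix} = \begin{pmatrix} \cos\beta\cos\alpha - \sin\beta\sin\alpha & -\cos\beta\sin\alpha - \sin\beta\cos\alpha \\ \sin\beta\cos\alpha + \cos\beta\sin\alpha & -\sin\beta\sin\alpha + \cos\beta\cos\alpha \end{pmatrix} = \begin{pmatrix} \cos(\alpha+\beta) & -\sin(\alpha+\beta) \\ \sin(\alpha+\beta) & \cos(\alpha+\beta) \end{pmatrix},$$

where appropriate trigonometric identities are employed for the second equality. That is, the composition corresponds to the rotation by angle $\alpha + \beta$, as expected.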
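The claim that composing two rotations gives the rotation by the sum of the angles can be checked numerically. This sketch assumes the standard counterclockwise rotation matrix with rows (cos θ, −sin θ) and (sin θ, cos θ); the helper names and the two sample angles are arbitrary illustrative choices.

```python
import math


def rotation(theta):
    """2 x 2 matrix of the counterclockwise rotation by theta (radians)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s],
            [s, c]]


def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


alpha, beta = 0.3, 1.1
composed = matmul(rotation(beta), rotation(alpha))   # rotate by alpha, then by beta
direct = rotation(alpha + beta)

# Largest entry-wise difference; only floating-point rounding is expected.
max_err = max(abs(composed[i][j] - direct[i][j])
              for i in range(2) for j in range(2))
print(max_err < 1e-12)   # True
```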

Resource allocation in economics

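As an example, a fictitious factory uses 4 kinds of basic commodities, $b_1, b_2, b_3, b_4$, to produce 3 kinds of intermediate goods, $m_1, m_2, m_3$, which in turn are used to produce 3 kinds of final products, $f_1, f_2, f_3$. The matrices

$$\mathbf{A} = \begin{pmatrix} 1 & 0 & 1 \\ 2 & 1 & 1 \\ 0 & 1 & 1 \\ 1 & 1 & 2 \end{pmatrix} \quad\text{and}\quad \mathbf{B} = \begin{pmatrix} 1 & 2 & 1 \\ 2 & 3 & 1 \\ 4 & 2 & 2 \end{pmatrix}$$

provide the amount of basic commodities needed for a given amount of intermediate goods, and the amount of intermediate goods needed for a given amount of final products, respectively. For example, to produce one unit of intermediate good $m_1$, one unit of basic commodity $b_1$, two units of $b_2$, no units of $b_3$, and one unit of $b_4$ are needed, corresponding to the first column of $\mathbf{A}$.

Using matrix multiplication, compute

$$\mathbf{AB} = \begin{pmatrix} 5 & 4 & 3 \\ 8 & 9 & 5 \\ 6 & 5 & 3 \\ 11 & 9 & 6 \end{pmatrix};$$

this matrix directly provides the amounts of basic commodities needed for given amounts of final goods. For example, the bottom left entry of $\mathbf{AB}$ is computed as $1 \cdot 1 + 1 \cdot 2 + 2 \cdot 4 = 11$, reflecting that $11$ units of $b_4$ are needed to produce one unit of $f_1$. Indeed, one $b_4$ unit is needed for $m_1$, one for each of two $m_2$, and $2$ for each of the four $m_3$ units that go into the $f_1$ unit, see picture.

In order to produce e.g. 100 units of the final product $f_1$, 80 units of $f_2$, and 60 units of $f_3$, the necessary amounts of basic goods can be computed as

$$(\mathbf{AB}) \begin{pmatrix} 100 \\ 80 \\ 60 \end{pmatrix} = \begin{pmatrix} 1000 \\ 1820 \\ 1180 \\ 2180 \end{pmatrix},$$

that is, $1000$ units of $b_1$, $1820$ units of $b_2$, $1180$ units of $b_3$, $2180$ units of $b_4$ are needed. Similarly, the product matrix $\mathbf{AB}$ can be used to compute the needed amounts of basic goods for other final-good amount data.[9]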
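The two-stage computation can be reproduced in a few lines. In this sketch the matrix entries are chosen to be consistent with the description above (first column of the commodities matrix 1, 2, 0, 1; bottom-left entry of the product 1·1 + 1·2 + 2·4); any entry not stated in the text should be treated as an illustrative assumption, and the variable names are hypothetical.

```python
def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


# Rows: basic commodities b1..b4; columns: intermediate goods m1..m3.
commodities_per_intermediate = [[1, 0, 1],
                                [2, 1, 1],
                                [0, 1, 1],
                                [1, 1, 2]]
# Rows: intermediate goods m1..m3; columns: final products f1..f3.
intermediate_per_final = [[1, 2, 1],
                          [2, 3, 1],
                          [4, 2, 2]]

# Rows: basic commodities; columns: final products.
commodities_per_final = matmul(commodities_per_intermediate, intermediate_per_final)
print(commodities_per_final)   # [[5, 4, 3], [8, 9, 5], [6, 5, 3], [11, 9, 6]]

# Demand for f1, f2, f3 written as a 3 x 1 column matrix.
demand = [[100], [80], [60]]
needs = [row[0] for row in matmul(commodities_per_final, demand)]
print(needs)                   # [1000, 1820, 1180, 2180]
```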

System of linear equations

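The general form of a system of linear equations is

$$\begin{matrix} a_{11}x_1 + \cdots + a_{1n}x_n = b_1, \\ a_{21}x_1 + \cdots + a_{2n}x_n = b_2, \\ \vdots \\ a_{m1}x_1 + \cdots + a_{mn}x_n = b_m. \end{matrix}$$

Using the same notation as above, such a system is equivalent to the single matrix equation

$$\mathbf{A}\mathbf{x} = \mathbf{b}.$$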

Dot product, bilinear form and sesquilinear form

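The dot product of two column vectors $\mathbf{x}$ and $\mathbf{y}$ is the unique entry of the matrix product

$$\mathbf{x}^{\mathsf{T}}\mathbf{y},$$

where $\mathbf{x}^{\mathsf{T}}$ is the row vector obtained by transposing $\mathbf{x}$. (As usual, a 1×1 matrix is identified with its unique entry.)

More generally, any bilinear form over a vector space of finite dimension may be expressed as a matrix product

$$\mathbf{x}^{\mathsf{T}}\mathbf{A}\mathbf{y},$$

and any sesquilinear form may be expressed as

$$\mathbf{x}^{\dagger}\mathbf{A}\mathbf{y},$$

where $\mathbf{x}^{\dagger}$ denotes the conjugate transpose of $\mathbf{x}$ (conjugate of the transpose, or equivalently transpose of the conjugate).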

General properties

Matrix multiplication shares some properties with usual multiplication. However, matrix multiplication is not defined if the number of columns of the first factor differs from the number of rows of the second factor, and it is non-commutative,[10] even when the product remains defined after changing the order of the factors.[11][12]

Non-commutativity

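An operation is commutative if, given two elements $\mathbf{A}$ and $\mathbf{B}$ such that the product $\mathbf{AB}$ is defined, then $\mathbf{BA}$ is also defined, and $\mathbf{AB} = \mathbf{BA}$.

If $\mathbf{A}$ and $\mathbf{B}$ are matrices of respective sizes $m \times n$ and $p \times q$, then $\mathbf{AB}$ is defined if $n = p$, and $\mathbf{BA}$ is defined if $m = q$. Therefore, if one of the products is defined, the other one need not be defined. If $m = q \neq n = p$, the two products are defined, but have different sizes; thus they cannot be equal. Only if $m = q = n = p$, that is, if $\mathbf{A}$ and $\mathbf{B}$ are square matrices of the same size, are both products defined and of the same size. Even in this case, one has in general

$$\mathbf{AB} \neq \mathbf{BA}.$$

For example

$$\begin{pmatrix} 0 & 1 \\ 0 & 0 \end{pmatrix} \begin{pmatrix} 0 & 0 \\ 1 & 0 \end{pmatrix} = \begin{pmatrix} 1 & 0 \\ 0 & 0 \end{pmatrix},$$

but

$$\begin{pmatrix} 0 & 0 \\ 1 & 0 \end{pmatrix} \begin{pmatrix} 0 & 1 \\ 0 & 0 \end{pmatrix} = \begin{pmatrix} 0 & 0 \\ 0 & 1 \end{pmatrix}.$$

This example may be expanded for showing that, if $\mathbf{A}$ is an $n \times n$ matrix with entries in a field $F$, then $\mathbf{AB} = \mathbf{BA}$ for every $n \times n$ matrix $\mathbf{B}$ with entries in $F$, if and only if $\mathbf{A} = c\,\mathbf{I}$ where $c \in F$, and $\mathbf{I}$ is the $n \times n$ identity matrix. If, instead of a field, the entries are supposed to belong to a ring, then one must add the condition that $c$ belongs to the center of the ring.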

One special case where commutativity does occur is when $\mathbf{D}$ and $\mathbf{E}$ are two (square) diagonal matrices (of the same size); then $\mathbf{DE} = \mathbf{ED}$.[10] Again, if the matrices are over a general ring rather than a field, the corresponding entries in each must also commute with each other for this to hold.
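Both behaviours are easy to observe on small matrices. The sketch below uses a pair of 2×2 matrices that do not commute and a pair of diagonal matrices that do; the specific matrices and the helper name `matmul` are illustrative choices.

```python
def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


A = [[0, 1],
     [0, 0]]
B = [[0, 0],
     [1, 0]]
print(matmul(A, B))   # [[1, 0], [0, 0]]
print(matmul(B, A))   # [[0, 0], [0, 1]]

# Diagonal matrices of the same size commute (integer entries commute).
D = [[2, 0],
     [0, 3]]
E = [[5, 0],
     [0, 7]]
print(matmul(D, E) == matmul(E, D))   # True

# A scalar multiple of the identity commutes with every matrix of that size.
cI = [[4, 0],
      [0, 4]]
M = [[1, 2],
     [3, 4]]
print(matmul(cI, M) == matmul(M, cI))   # True
```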

Distributivity

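The matrix product is distributive with respect to matrix addition. That is, if $\mathbf{A}, \mathbf{B}, \mathbf{C}, \mathbf{D}$ are matrices of respective sizes $m \times n$, $n \times p$, $n \times p$, and $p \times q$, one has (left distributivity)

$$\mathbf{A}(\mathbf{B} + \mathbf{C}) = \mathbf{AB} + \mathbf{AC},$$

and (right distributivity)

$$(\mathbf{B} + \mathbf{C})\mathbf{D} = \mathbf{BD} + \mathbf{CD}.$$

This results from the distributivity for coefficients by

$$\sum_k a_{ik}(b_{kj} + c_{kj}) = \sum_k a_{ik}b_{kj} + \sum_k a_{ik}c_{kj}$$

$$\sum_k (b_{ik} + c_{ik})\,d_{kj} = \sum_k b_{ik}d_{kj} + \sum_k c_{ik}d_{kj}.$$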

Product with a scalar

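If $\mathbf{A}$ is a matrix and $c$ a scalar, then the matrices $c\mathbf{A}$ and $\mathbf{A}c$ are obtained by left or right multiplying all entries of $\mathbf{A}$ by $c$. If the scalars have the commutative property, then $c\mathbf{A} = \mathbf{A}c$.

If the product $\mathbf{AB}$ is defined (that is, the number of columns of $\mathbf{A}$ equals the number of rows of $\mathbf{B}$), then

$$c(\mathbf{AB}) = (c\mathbf{A})\mathbf{B} \quad\text{and}\quad (\mathbf{AB})c = \mathbf{A}(\mathbf{B}c).$$

If the scalars have the commutative property, then all four matrices are equal. More generally, all four are equal if $c$ belongs to the center of a ring containing the entries of the matrices, because in this case, $c\mathbf{X} = \mathbf{X}c$ for all matrices $\mathbf{X}$.

These properties result from the bilinearity of the product of scalars:

$$c\left(\sum_k a_{ik}b_{kj}\right) = \sum_k (c\,a_{ik})\,b_{kj}$$

$$\left(\sum_k a_{ik}b_{kj}\right)c = \sum_k a_{ik}\,(b_{kj}\,c).$$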

Transpose

If the scalars have the commutative property, the transpose of a product of matrices is the product, in the reverse order, of the transposes of the factors. That is

$$(\mathbf{AB})^{\mathsf{T}} = \mathbf{B}^{\mathsf{T}}\mathbf{A}^{\mathsf{T}},$$

where $\mathsf{T}$ denotes the transpose, that is the interchange of rows and columns.

This identity does not hold for noncommutative entries, since the order between the entries of $\mathbf{A}$ and $\mathbf{B}$ is reversed, when one expands the definition of the matrix product.

Complex conjugate

If $\mathbf{A}$ and $\mathbf{B}$ have complex entries, then

$$(\mathbf{AB})^{*} = \mathbf{A}^{*}\mathbf{B}^{*},$$

where $*$ denotes the entry-wise complex conjugate of a matrix.

This results from applying to the definition of matrix product the fact that the conjugate of a sum is the sum of the conjugates of the summands and the conjugate of a product is the product of the conjugates of the factors.

Transposition acts on the indices of the entries, while conjugation acts independently on the entries themselves. It results that, if $\mathbf{A}$ and $\mathbf{B}$ have complex entries, one has

$$(\mathbf{AB})^{\dagger} = \mathbf{B}^{\dagger}\mathbf{A}^{\dagger},$$

where $\dagger$ denotes the conjugate transpose (conjugate of the transpose, or equivalently transpose of the conjugate).
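The transpose, conjugate and conjugate-transpose rules can be checked on a small complex example. This is a sketch with hypothetical helper names (`transpose`, `conj`, `dagger`); the entries are small Gaussian integers so that the floating-point arithmetic behind Python's `complex` type is exact and `==` comparisons are safe.

```python
def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    """Interchange rows and columns."""
    return [list(col) for col in zip(*a)]


def conj(a):
    """Entry-wise complex conjugate."""
    return [[complex(z).conjugate() for z in row] for row in a]


def dagger(a):
    """Conjugate transpose."""
    return transpose(conj(a))


A = [[1 + 2j, 3],
     [0, 1j]]
B = [[2, 1 - 1j],
     [1j, 4]]
AB = matmul(A, B)

print(transpose(AB) == matmul(transpose(B), transpose(A)))   # True
print(conj(AB) == matmul(conj(A), conj(B)))                  # True
print(dagger(AB) == matmul(dagger(B), dagger(A)))            # True
```

Note the reversed order of the factors for the transpose and the conjugate transpose, and the unchanged order for the entry-wise conjugate.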

Associativity

Given three matrices $\mathbf{A}$, $\mathbf{B}$ and $\mathbf{C}$, the products $(\mathbf{AB})\mathbf{C}$ and $\mathbf{A}(\mathbf{BC})$ are defined if and only if the number of columns of $\mathbf{A}$ equals the number of rows of $\mathbf{B}$, and the number of columns of $\mathbf{B}$ equals the number of rows of $\mathbf{C}$ (in particular, if one of the products is defined, then the other is also defined). In this case, one has the associative property

$$(\mathbf{AB})\mathbf{C} = \mathbf{A}(\mathbf{BC}).$$

As for any associative operation, this allows omitting parentheses, and writing the above products as $\mathbf{ABC}$.

This extends naturally to the product of any number of matrices provided that the dimensions match. That is, if $\mathbf{A}_1, \mathbf{A}_2, \ldots, \mathbf{A}_n$ are matrices such that the number of columns of $\mathbf{A}_i$ equals the number of rows of $\mathbf{A}_{i+1}$ for $i = 1, \ldots, n - 1$, then the product

$$\prod_{i=1}^{n} \mathbf{A}_i = \mathbf{A}_1\mathbf{A}_2\cdots\mathbf{A}_n$$

is defined and does not depend on the order of the multiplications, if the order of the matrices is kept fixed.

These properties may be proved by straightforward but complicated summation manipulations. This result also follows from the fact that matrices represent linear maps. Therefore, the associative property of matrices is simply a specific case of the associative property of function composition.

Computational complexity depends on parenthesization

Although the result of a sequence of matrix products does not depend on the order of operation (provided that the order of the matrices is not changed), the computational complexity may depend dramatically on this order.

For example, if $\mathbf{A}$, $\mathbf{B}$ and $\mathbf{C}$ are matrices of respective sizes 10×30, 30×5, 5×60, computing $(\mathbf{AB})\mathbf{C}$ needs 10×30×5 + 10×5×60 = 4,500 multiplications, while computing $\mathbf{A}(\mathbf{BC})$ needs 30×5×60 + 10×30×60 = 27,000 multiplications.

Algorithms have been designed for choosing the best order of products; see Matrix chain multiplication. When the number $n$ of matrices increases, it has been shown that the choice of the best order has a complexity of $O(n \log n)$.[13][14]
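The two costs in the example, and the cheapest parenthesization in general, can be computed with a short program. The sketch below uses the textbook dynamic-programming recurrence, which takes cubic time in the number of matrices; it is not the faster algorithm referred to above. The function name `chain_cost` and the convention that matrix number i has size `dims[i]` × `dims[i + 1]` are assumptions made for this illustration.

```python
from functools import lru_cache


def chain_cost(dims):
    """Minimum number of scalar multiplications needed to multiply a chain
    of matrices, where matrix i has size dims[i] x dims[i + 1]."""
    @lru_cache(maxsize=None)
    def best(i, j):
        # Cheapest way to multiply matrices i..j (inclusive).
        if i == j:
            return 0
        # Try every position k for the last multiplication: (i..k) times (k+1..j).
        return min(best(i, k) + best(k + 1, j) + dims[i] * dims[k + 1] * dims[j + 1]
                   for k in range(i, j))

    return best(0, len(dims) - 2)


dims = [10, 30, 5, 60]                        # A: 10x30, B: 30x5, C: 5x60
cost_left = 10 * 30 * 5 + 10 * 5 * 60         # (AB)C
cost_right = 30 * 5 * 60 + 10 * 30 * 60       # A(BC)
print(cost_left, cost_right)                  # 4500 27000
print(chain_cost(dims))                       # 4500
```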

Application to similarity

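Any invertible matrix $\mathbf{P}$ defines a similarity transformation (on square matrices of the same size as $\mathbf{P}$)

$$S_{\mathbf{P}}(\mathbf{A}) = \mathbf{P}^{-1}\mathbf{A}\mathbf{P}.$$

Similarity transformations map products to products, that is

$$S_{\mathbf{P}}(\mathbf{AB}) = S_{\mathbf{P}}(\mathbf{A})\,S_{\mathbf{P}}(\mathbf{B}).$$

In fact, one has

$$\mathbf{P}^{-1}(\mathbf{AB})\mathbf{P} = \mathbf{P}^{-1}\mathbf{A}(\mathbf{P}\mathbf{P}^{-1})\mathbf{B}\mathbf{P} = (\mathbf{P}^{-1}\mathbf{A}\mathbf{P})(\mathbf{P}^{-1}\mathbf{B}\mathbf{P}).$$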

Square matrices

Let us denote $\mathcal{M}_n(R)$ the set of $n \times n$ square matrices with entries in a ring $R$, which, in practice, is often a field.

In $\mathcal{M}_n(R)$, the product is defined for every pair of matrices. This makes $\mathcal{M}_n(R)$ a ring, which has the identity matrix $\mathbf{I}$ as identity element (the matrix whose diagonal entries are equal to 1 and all other entries are 0). This ring is also an associative $R$-algebra.

If $n > 1$, many matrices do not have a multiplicative inverse. For example, a matrix such that all entries of a row (or a column) are 0 does not have an inverse. If it exists, the inverse of a matrix $\mathbf{A}$ is denoted $\mathbf{A}^{-1}$, and thus satisfies

$$\mathbf{A}\mathbf{A}^{-1} = \mathbf{A}^{-1}\mathbf{A} = \mathbf{I}.$$

A matrix that has an inverse is an invertible matrix. Otherwise, it is a singular matrix.

A product of matrices is invertible if and only if each factor is invertible. In this case, one has

$$(\mathbf{AB})^{-1} = \mathbf{B}^{-1}\mathbf{A}^{-1}.$$

When $R$ is commutative, and, in particular, when it is a field, the determinant of a product is the product of the determinants. As determinants are scalars, and scalars commute, one has thus

$$\det(\mathbf{AB}) = \det(\mathbf{BA}) = \det(\mathbf{A})\det(\mathbf{B}).$$

The other matrix invariants do not behave as well with products. Nevertheless, if $R$ is commutative, $\mathbf{AB}$ and $\mathbf{BA}$ have the same trace, the same characteristic polynomial, and the same eigenvalues with the same multiplicities. However, the eigenvectors are generally different if $\mathbf{AB} \neq \mathbf{BA}$.
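The statements about the determinant and the trace can be illustrated on a pair of 2×2 integer matrices that do not commute. This is a small sketch; the helper names `trace` and `det2` are hypothetical, and `det2` only handles the 2×2 case.

```python
def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def trace(a):
    """Sum of the diagonal entries of a square matrix."""
    return sum(a[i][i] for i in range(len(a)))


def det2(a):
    """Determinant of a 2 x 2 matrix."""
    return a[0][0] * a[1][1] - a[0][1] * a[1][0]


A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [5, -2]]
AB, BA = matmul(A, B), matmul(B, A)

print(AB, BA)                              # [[10, -3], [20, -5]] [[3, 4], [-1, 2]]
print(det2(AB), det2(BA), det2(A) * det2(B))   # 10 10 10
print(trace(AB), trace(BA))                # 5 5
```

Although the two products differ, their determinants and traces coincide.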

Powers of a matrix

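One may raise a square matrix to any nonnegative integer power by multiplying it by itself repeatedly in the same way as for ordinary numbers. That is,

$$\mathbf{A}^0 = \mathbf{I}, \qquad \mathbf{A}^1 = \mathbf{A}, \qquad \mathbf{A}^k = \underbrace{\mathbf{A}\mathbf{A}\cdots\mathbf{A}}_{k\text{ times}}.$$

Computing the $k$th power of a matrix needs $k - 1$ times the time of a single matrix multiplication, if it is done with the trivial algorithm (repeated multiplication). As this may be very time consuming, one generally prefers using exponentiation by squaring, which requires less than $2 \log_2 k$ matrix multiplications, and is therefore much more efficient.

An easy case for exponentiation is that of a diagonal matrix. Since the product of diagonal matrices amounts to simply multiplying corresponding diagonal elements together, the $k$th power of a diagonal matrix is obtained by raising the entries to the power $k$:

$$\begin{pmatrix} a_{11} & 0 & \cdots & 0 \\ 0 & a_{22} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & a_{nn} \end{pmatrix}^{k} = \begin{pmatrix} a_{11}^k & 0 & \cdots & 0 \\ 0 & a_{22}^k & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & a_{nn}^k \end{pmatrix}.$$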
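Exponentiation by squaring can be sketched as follows. The function names `identity` and `matpow` are illustrative; the loop walks through the binary digits of the exponent, squaring the base at each step and multiplying it into the result whenever the current digit is 1.

```python
def matmul(a, b):
    """Plain matrix product of two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def identity(n):
    """n x n identity matrix."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]


def matpow(a, k):
    """Raise the square matrix a to the nonnegative integer power k."""
    if k < 0:
        raise ValueError("exponent must be nonnegative")
    result, base = identity(len(a)), a
    while k > 0:
        if k & 1:                 # current binary digit of k is 1
            result = matmul(result, base)
        k >>= 1
        if k:                     # square only if further digits remain
            base = matmul(base, base)
    return result


F = [[1, 1],
     [1, 0]]
print(matpow(F, 10))                    # [[89, 55], [55, 34]]
print(matpow([[2, 0], [0, 3]], 5))      # [[32, 0], [0, 243]]
```

The second call illustrates the diagonal case: each diagonal entry is simply raised to the fifth power.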

Abstract algebra

The definition of matrix product requires that the entries belong to a semiring, and does not require multiplication of elements of the semiring to be commutative. In many applications, the matrix elements belong to a field, although the tropical semiring is also a common choice for graph shortest path problems.[15] Even in the case of matrices over fields, the product is not commutative in general, although it is associative and is distributive over matrix addition. The identity matrices (which are the square matrices whose entries are zero outside of the main diagonal and 1 on the main diagonal) are identity elements of the matrix product. It follows that the $n \times n$ matrices over a ring form a ring, which is noncommutative except if $n = 1$ and the ground ring is commutative.
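As an illustration of a product over a semiring that is not a field, the sketch below uses the tropical (min-plus) semiring, in which "addition" is the minimum and "multiplication" is ordinary addition. The function name `min_plus` and the three-vertex weight matrix are assumptions made for this example; entry (i, j) of the matrix is the weight of the edge from vertex i to vertex j, with infinity meaning "no edge".

```python
INF = float("inf")


def min_plus(a, b):
    """Matrix product over the tropical semiring: sums become minima,
    products become ordinary sums."""
    return [[min(a[i][k] + b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


# Edge weights of a directed graph on 3 vertices (0 on the diagonal).
W = [[0,   4,   INF],
     [INF, 0,   1],
     [2,   INF, 0]]

# Entry (i, j) of the min-plus square is the length of the shortest path
# from i to j that uses at most two edges.
W2 = min_plus(W, W)
print(W2)   # [[0, 4, 5], [3, 0, 1], [2, 6, 0]]
```

With three vertices, a shortest path never needs more than two edges, so `W2` already holds all shortest-path lengths for this graph.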

A square matrix may have a multiplicative inverse, called an inverse matrix. In the common case where the entries belong to a commutative ring $R$, a matrix has an inverse if and only if its determinant has a multiplicative inverse in $R$. The determinant of a product of square matrices is the product of the determinants of the factors. The $n \times n$ matrices that have an inverse form a group under matrix multiplication, the subgroups of which are called matrix groups. Many classical groups (including all finite groups) are isomorphic to matrix groups; this is the starting point of the theory of group representations.

Matrices are the morphisms of a category, the category of matrices. The objects are the natural numbers that measure the size of matrices, and the composition of morphisms is matrix multiplication. The source of a morphism is the number of columns of the corresponding matrix, and the target is the number of rows.

Computational complexity

The matrix multiplication algorithm that results from the definition requires, in the worst case, $n^3$ multiplications and $(n - 1)n^2$ additions of scalars to compute the product of two square $n \times n$ matrices. Its computational complexity is therefore $O(n^3)$, in a model of computation for which the scalar operations take constant time.

Rather surprisingly, this complexity is not optimal, as shown in 1969 by Volker Strassen, who provided an algorithm, now called Strassen's algorithm, with a complexity of $O(n^{\log_2 7}) \approx O(n^{2.8074})$.[16] Strassen's algorithm can be parallelized to further improve the performance.[17] As of January 2024, the best peer-reviewed matrix multiplication algorithm is by Virginia Vassilevska Williams, Yinzhan Xu, Zixuan Xu, and Renfei Zhou and has complexity $O(n^{2.371552})$.[18][19] It is not known whether matrix multiplication can be performed in $n^{2 + o(1)}$ time.[20] This would be optimal, since one must read the $n^2$ elements of a matrix in order to multiply it with another matrix.
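The idea behind Strassen's algorithm is to split each matrix into four blocks and to obtain the four blocks of the product from seven block products instead of eight. The following is a minimal, unoptimized sketch of that recursion, assuming square matrices whose size is a power of two; the function names and the `cutoff` parameter (the size at or below which the definition-based product is used) are illustrative choices, not a reference implementation.

```python
def add(a, b):
    return [[x + y for x, y in zip(r, s)] for r, s in zip(a, b)]


def sub(a, b):
    return [[x - y for x, y in zip(r, s)] for r, s in zip(a, b)]


def naive(a, b):
    """Product computed directly from the definition."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def split(m):
    """Return the four h x h blocks of a 2h x 2h matrix."""
    h = len(m) // 2
    return ([row[:h] for row in m[:h]], [row[h:] for row in m[:h]],
            [row[:h] for row in m[h:]], [row[h:] for row in m[h:]])


def strassen(a, b, cutoff=2):
    """Product of two n x n matrices, n a power of two (assumed, not checked)."""
    if len(a) <= cutoff:
        return naive(a, b)
    a11, a12, a21, a22 = split(a)
    b11, b12, b21, b22 = split(b)
    # Seven recursive block products.
    m1 = strassen(add(a11, a22), add(b11, b22), cutoff)
    m2 = strassen(add(a21, a22), b11, cutoff)
    m3 = strassen(a11, sub(b12, b22), cutoff)
    m4 = strassen(a22, sub(b21, b11), cutoff)
    m5 = strassen(add(a11, a12), b22, cutoff)
    m6 = strassen(sub(a21, a11), add(b11, b12), cutoff)
    m7 = strassen(sub(a12, a22), add(b21, b22), cutoff)
    # Blocks of the product, assembled from the seven products.
    c11 = add(sub(add(m1, m4), m5), m7)
    c12 = add(m3, m5)
    c21 = add(m2, m4)
    c22 = add(add(sub(m1, m2), m3), m6)
    top = [r1 + r2 for r1, r2 in zip(c11, c12)]
    bottom = [r1 + r2 for r1, r2 in zip(c21, c22)]
    return top + bottom


A = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12], [13, 14, 15, 16]]
B = [[1, 0, 2, 0], [0, 1, 0, 2], [3, 0, 1, 0], [0, 3, 0, 1]]
print(strassen(A, B, cutoff=1) == naive(A, B))   # True
```

In practice a larger cutoff is normally chosen, because the extra additions make the recursion slower than the direct product on small blocks.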

Since matrix multiplication forms the basis for many algorithms, and many operations on matrices even have the same complexity as matrix multiplication (up to a multiplicative constant), the computational complexity of matrix multiplication appears throughout numerical linear algebra and theoretical computer science.

Generalizations

Other types of products of matrices include:

- Block matrix operations

- Cracovian product, defined as $\mathbf{A} \wedge \mathbf{B} = \mathbf{B}^{\mathsf{T}}\mathbf{A}$

- Frobenius inner product, the dot product of matrices considered as vectors, or, equivalently the sum of the entries of the Hadamard product

- Hadamard product of two matrices of the same size, resulting in a matrix of the same size, which is the product entry-by-entry

- Kronecker product or tensor product, the generalization to any size of the preceding

- Khatri-Rao product and Face-splitting product

- Outer product, also called dyadic product or tensor product of two column matrices, which is $\mathbf{a}\mathbf{b}^{\mathsf{T}}$

- Scalar multiplication
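Several of the products in this list are short to write down explicitly. The sketch below gives plain-Python versions of the Hadamard, Frobenius, Kronecker and outer products; the function names are illustrative and matrices are assumed to be lists of rows.

```python
def hadamard(a, b):
    """Entry-by-entry product of two matrices of the same size."""
    return [[x * y for x, y in zip(r, s)] for r, s in zip(a, b)]


def frobenius(a, b):
    """Frobenius inner product: sum of the entries of the Hadamard product."""
    return sum(sum(row) for row in hadamard(a, b))


def kronecker(a, b):
    """Kronecker product: every entry of a is replaced by that entry times b."""
    return [[a_ij * b_kl for a_ij in row_a for b_kl in row_b]
            for row_a in a for row_b in b]


def outer(u, v):
    """Outer product of two vectors: entry (i, j) is u[i] * v[j]."""
    return [[u_i * v_j for v_j in v] for u_i in u]


A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]
print(hadamard(A, B))          # [[0, 2], [3, 0]]
print(frobenius(A, B))         # 5
print(kronecker(A, B))         # [[0, 1, 0, 2], [1, 0, 2, 0], [0, 3, 0, 4], [3, 0, 4, 0]]
print(outer([1, 2], [3, 4, 5]))   # [[3, 4, 5], [6, 8, 10]]
```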

See also

- Matrix calculus, for the interaction of matrix multiplication with operations from calculus

Notes

- [1] Nykamp, Duane. "Multiplying matrices and vectors". Math Insight. Retrieved September 6, 2020.

- [2] O'Connor, John J.; Robertson, Edmund F. "Jacques Philippe Marie Binet". MacTutor History of Mathematics Archive. University of St Andrews.

- [3] Lerner, R. G.; Trigg, G. L. (1991). Encyclopaedia of Physics (2nd ed.). VCH Publishers. ISBN 978-3-527-26954-9.

- [4] Parker, C. B. (1994). McGraw Hill Encyclopaedia of Physics (2nd ed.). McGraw-Hill. ISBN 978-0-07-051400-3.

- [5] Lipschutz, S.; Lipson, M. (2009). Linear Algebra. Schaum's Outlines (4th ed.). McGraw Hill (USA). pp. 30–31. ISBN 978-0-07-154352-1.

- [6] Riley, K. F.; Hobson, M. P.; Bence, S. J. (2010). Mathematical methods for physics and engineering. Cambridge University Press. ISBN 978-0-521-86153-3.

- [7] Adams, R. A. (1995). Calculus, A Complete Course (3rd ed.). Addison Wesley. p. 627. ISBN 0-201-82823-5.

- [8] Horn, Johnson (2013). Matrix Analysis (2nd ed.). Cambridge University Press. p. 6. ISBN 978-0-521-54823-6.

- [9] Peter Stingl (1996). Mathematik für Fachhochschulen – Technik und Informatik (in German) (5th ed.). Munich: Carl Hanser Verlag. ISBN 3-446-18668-9. Here: Exm. 5.4.10, pp. 205–206.

- [10] Weisstein, Eric W. "Matrix Multiplication". mathworld.wolfram. Retrieved 2020-09-06.

- [11] Lipschutz, S.; Lipson, M. (2009). "2". Linear Algebra. Schaum's Outlines (4th ed.). McGraw Hill (USA). ISBN 978-0-07-154352-1.

- [12] Horn, Johnson (2013). "Chapter 0". Matrix Analysis (2nd ed.). Cambridge University Press. ISBN 978-0-521-54823-6.

- [13] Hu, T. C.; Shing, M.-T. (1982). "Computation of Matrix Chain Products, Part I" (PDF). SIAM Journal on Computing. 11 (2): 362–373. CiteSeerX 10.1.1.695.2923. doi:10.1137/0211028. ISSN 0097-5397.

- [14] Hu, T. C.; Shing, M.-T. (1984). "Computation of Matrix Chain Products, Part II" (PDF). SIAM Journal on Computing. 13 (2): 228–251. CiteSeerX 10.1.1.695.4875. doi:10.1137/0213017. ISSN 0097-5397.

- [15] Motwani, Rajeev; Raghavan, Prabhakar (1995). Randomized Algorithms. Cambridge University Press. p. 280. ISBN 9780521474658.

- [16] Volker Strassen (Aug 1969). "Gaussian elimination is not optimal". Numerische Mathematik. 13 (4): 354–356. doi:10.1007/BF02165411. S2CID 121656251.

- [17] C.-C. Chou and Y.-F. Deng and G. Li and Y. Wang (1995). "Parallelizing Strassen's Method for Matrix Multiplication on Distributed-Memory MIMD Architectures" (PDF). Computers Math. Applic. 30 (2): 49–69. doi:10.1016/0898-1221(95)00077-C.

- [18] Vassilevska Williams, Virginia; Xu, Yinzhan; Xu, Zixuan; Zhou, Renfei. New Bounds for Matrix Multiplication: from Alpha to Omega. Proceedings of the 2024 Annual ACM-SIAM Symposium on Discrete Algorithms (SODA). pp. 3792–3835. arXiv:2307.07970. doi:10.1137/1.9781611977912.134.

- [19] Nadis, Steve (March 7, 2024). "New Breakthrough Brings Matrix Multiplication Closer to Ideal". Retrieved 2024-03-09.

- [20] That is, in time $n^{2 + f(n)}$, for some function $f$ with $f(n) \to 0$ as $n \to \infty$.
