Video

Video is an electronic medium for the recording, copying, playback, broadcasting, and display of moving visual media.[1] Video was first developed for mechanical television systems, which were quickly replaced by cathode-ray tube (CRT) systems, which, in turn, were replaced by flat-panel displays of several types.

Video systems vary in display resolution, aspect ratio, refresh rate, color capabilities, and other qualities. Analog and digital variants exist and can be carried on a variety of media, including radio broadcasts, magnetic tape, optical discs, computer files, and network streaming.

Etymology

The word video comes from the Latin video (I see).[2]

History

Analog video

Video grew out of facsimile systems developed in the mid-19th century. Early mechanical video scanners, such as the Nipkow disk, were patented as early as 1884, but practical video systems took several more decades to develop and arrived many decades after film. Film records a sequence of miniature photographic images that are visible to the eye when the film is physically examined. Video, by contrast, encodes images electronically, turning them into analog or digital electronic signals for transmission or recording.[3]

Video technology was first developed for mechanical television systems, which were quickly replaced by cathode-ray tube (CRT) television systems. Video was originally exclusively a live technology. Live video cameras used an electron beam which would scan a photoconductive plate with the desired image and produce a voltage signal proportional to the brightness in each part of the image. The signal could then be sent to televisions where another beam would receive and display the image.[4] Charles Ginsburg led an Ampex research team to develop one of the first practical video tape recorders (VTR). In 1951, the first VTR captured live images from television cameras by writing the camera's electrical signal onto magnetic videotape.

Video recorders were sold for US$50,000 in 1956, and videotapes cost US$300 per one-hour reel.[5] However, prices gradually dropped over the years; in 1971, Sony began selling videocassette recorder (VCR) decks and tapes into the consumer market.[6]

Digital video

Digital video is capable of higher quality and, eventually, a much lower cost than earlier analog technology. After the commercial introduction of the DVD in 1997 and later the Blu-ray Disc in 2006, sales of videotape and recording equipment plummeted. Advances in computer technology allow even inexpensive personal computers and smartphones to capture, store, edit, and transmit digital video, further reducing the cost of video production and allowing program-makers and broadcasters to move to tapeless production. The advent of digital broadcasting and the subsequent digital television transition are in the process of relegating analog video to the status of a legacy technology in most parts of the world. The development of high-resolution video cameras with improved dynamic range and color gamuts, along with the introduction of high-dynamic-range digital intermediate data formats with improved color depth, has caused digital video technology to converge with film technology. Since 2013, the use of digital cameras in Hollywood has surpassed the use of film cameras.[7]

Characteristics of video streams

Number of frames per second

Frame rate, the number of still pictures per unit of time of video, ranges from six or eight frames per second (frame/s) for old mechanical cameras to 120 or more frames per second for new professional cameras. PAL standards (Europe, Asia, Australia, etc.) and SECAM (France, Russia, parts of Africa, etc.) specify 25 frame/s, while NTSC standards (United States, Canada, Japan, etc.) specify 29.97 frame/s.[8] Film is shot at a slower frame rate of 24 frames per second, which slightly complicates the process of transferring a cinematic motion picture to video. The minimum frame rate to achieve a comfortable illusion of a moving image is about sixteen frames per second.[9]
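The mismatch between the frame rates above can be made concrete with a short calculation. The following is a minimal sketch in Python; the names `FRAME_RATES`, `frame_duration_ms` and `frames_in` are illustrative, and the exact rational value 30000/1001 for the NTSC rate is an assumption (it is the value commonly quoted for "29.97") rather than something stated in the text.

```python
from fractions import Fraction

# Nominal frame rates named in the text. 30000/1001 is the exact rational
# value commonly quoted for NTSC's "29.97" (assumption, not from the text).
FRAME_RATES = {
    "film": Fraction(24),
    "PAL/SECAM": Fraction(25),
    "NTSC": Fraction(30000, 1001),
}


def frame_duration_ms(rate):
    """Time one frame stays on screen, in milliseconds."""
    return float(1000 / rate)


def frames_in(seconds, rate):
    """Number of complete frames shown in the given number of seconds."""
    return int(seconds * rate)


if __name__ == "__main__":
    for name, rate in FRAME_RATES.items():
        print(name, round(frame_duration_ms(rate), 3), "ms per frame,",
              frames_in(60, rate), "frames per minute")
```

One minute of material holds a different number of frames in each system, which is why a film-to-video transfer has to repeat, drop, or retime frames.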

Interlaced vs. progressive

Video can be interlaced or progressive. In progressive scan systems, each refresh period updates all scan lines in each frame in sequence. When displaying a natively progressive broadcast or recorded signal, the result is the optimum spatial resolution of both the stationary and moving parts of the image. Interlacing was invented as a way to reduce flicker in early mechanical and CRT video displays without increasing the number of complete frames per second. Interlacing retains detail while requiring lower bandwidth compared to progressive scanning.[10][11]

In interlaced video, the horizontal scan lines of each complete frame are treated as if numbered consecutively and captured as two fields: an odd field (upper field) consisting of the odd-numbered lines and an even field (lower field) consisting of the even-numbered lines. Analog display devices reproduce each frame as two successive fields, effectively doubling the frame rate as far as perceptible overall flicker is concerned. When the image capture device acquires the fields one at a time, rather than dividing up a complete frame after it is captured, the frame rate for motion is effectively doubled as well, resulting in smoother, more lifelike reproduction of rapidly moving parts of the image when viewed on an interlaced CRT display.[10][11]
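The division of a frame into two fields can be sketched in a few lines of Python. This is an illustrative model only: a frame is represented as a plain list of scan lines, and the function names `split_fields` and `weave` are hypothetical.

```python
def split_fields(frame):
    """Split a frame (a list of scan lines) into its odd and even fields.

    Lines are numbered from 1, so the odd field holds lines 1, 3, 5, ...
    which are list indices 0, 2, 4, ...
    """
    odd_field = frame[0::2]
    even_field = frame[1::2]
    return odd_field, even_field


def weave(odd_field, even_field):
    """Rebuild a full frame by alternating lines from the two fields."""
    frame = []
    for index in range(len(odd_field) + len(even_field)):
        source = odd_field if index % 2 == 0 else even_field
        frame.append(source[index // 2])
    return frame


if __name__ == "__main__":
    frame = ["line1", "line2", "line3", "line4", "line5", "line6"]
    odd, even = split_fields(frame)
    print(odd)   # lines 1, 3, 5
    print(even)  # lines 2, 4, 6
    print(weave(odd, even) == frame)
```

Weaving restores the original frame exactly only when both fields were taken from the same instant; when the fields are captured one after the other, moving objects differ between them.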

NTSC, PAL, and SECAM are interlaced formats. Abbreviated video resolution specifications often include an i to indicate interlacing. For example, PAL video format is often described as 576i50, where 576 indicates the number of visible horizontal scan lines, i indicates interlacing, and 50 indicates 50 fields (half-frames) per second.[11][12]
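A label such as 576i50 can be taken apart mechanically. The sketch below assumes the convention described above, in which the trailing number counts fields for interlaced video; treating a `p` suffix as progressive with the number counting full frames is an additional assumption, and some sources quote the frame rate for interlaced labels instead. `VideoMode`, `parse_mode` and `frames_per_second` are hypothetical names.

```python
import re
from typing import NamedTuple


class VideoMode(NamedTuple):
    lines: int        # number of scan lines given in the label
    interlaced: bool  # True for "i", False for "p"
    rate: float       # fields per second if interlaced, else frames per second


def parse_mode(label):
    """Parse a label such as '576i50' into its three parts."""
    match = re.fullmatch(r"(\d+)([ip])(\d+(?:\.\d+)?)", label)
    if match is None:
        raise ValueError(f"unrecognized video mode label: {label!r}")
    lines, scan, rate = match.groups()
    return VideoMode(int(lines), scan == "i", float(rate))


def frames_per_second(mode):
    """Complete frames per second; two interlaced fields make one frame."""
    return mode.rate / 2 if mode.interlaced else mode.rate


if __name__ == "__main__":
    pal = parse_mode("576i50")
    print(pal, frames_per_second(pal))
```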

When displaying a natively interlaced signal on a progressive scan device, the overall spatial resolution is degraded by simple line doubling, and artifacts such as flickering or "comb" effects appear in moving parts of the image unless special signal processing eliminates them.[10][13] A procedure known as deinterlacing can optimize the display of an interlaced video signal from an analog, DVD, or satellite source on a progressive scan device such as an LCD television, digital video projector, or plasma panel. Deinterlacing cannot, however, produce video quality that is equivalent to true progressive scan source material.[11][12][13]
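Simple line doubling, and one slightly less crude alternative, can be sketched as follows. This is an illustration rather than a description of what any particular display does: a field is modeled as a list of scan lines, each a list of brightness values, and `line_double` and `interpolate` are hypothetical names. The averaging variant is added here only for comparison.

```python
def line_double(field):
    """Fill the missing lines of a field by repeating every line once."""
    frame = []
    for line in field:
        frame.append(list(line))
        frame.append(list(line))
    return frame


def interpolate(field):
    """Fill each missing line with the average of the lines above and below.

    The last missing line has no line below it, so it repeats the line above.
    """
    frame = []
    for index, line in enumerate(field):
        frame.append(list(line))
        below = field[index + 1] if index + 1 < len(field) else line
        frame.append([(a + b) / 2 for a, b in zip(line, below)])
    return frame


if __name__ == "__main__":
    field = [[0, 0], [100, 100]]
    print(line_double(field))
    print(interpolate(field))
```

Both functions build a full-height frame from a single field, so half of the vertical detail in the original frame is never used, which is one way to see why the result cannot match true progressive source material.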

Aspect ratio[edit]

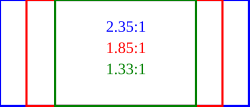

Aspect ratiodescribes the proportional relationship between the width and height of video screens and video picture elements. All popular video formats arerectangular,and this can be described by a ratio between width and height. The ratio of width to height for a traditional television screen is 4:3, or about 1.33:1. High-definition televisions use an aspect ratio of 16:9, or about 1.78:1. The aspect ratio of a full 35 mm film frame with soundtrack (also known as theAcademy ratio) is 1.375:1.[14][15]

Pixels on computer monitors are usually square, but pixels used in digital video often have non-square aspect ratios, such as those used in the PAL and NTSC variants of the CCIR 601 digital video standard and the corresponding anamorphic widescreen formats. The 720 by 480 pixel raster uses thin pixels on a 4:3 aspect ratio display and fat pixels on a 16:9 display.[14][15]
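The "thin" and "fat" pixels follow from dividing the display aspect ratio by the ratio of the pixel grid. The sketch below is a simplified calculation that assumes the full 720-pixel width fills the screen; broadcast practice often quotes slightly different values because not all samples are treated as active picture. The function names are hypothetical.

```python
from fractions import Fraction


def storage_aspect(width, height):
    """Ratio of the pixel grid itself, e.g. 720/480 = 3/2."""
    return Fraction(width, height)


def pixel_aspect(width, height, display_aspect):
    """Width-to-height ratio of one pixel when the grid fills the display."""
    return display_aspect / storage_aspect(width, height)


if __name__ == "__main__":
    for name, display in (("4:3", Fraction(4, 3)), ("16:9", Fraction(16, 9))):
        par = pixel_aspect(720, 480, display)
        shape = "thin" if par < 1 else "fat" if par > 1 else "square"
        print(name, "display:", par, f"({float(par):.3f}, {shape} pixels)")
```

A value below 1 means each pixel is narrower than it is tall, and a value above 1 means it is wider than it is tall.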

The popularity of viewing video on mobile phones has led to the growth of vertical video. Mary Meeker, a partner at Silicon Valley venture capital firm Kleiner Perkins Caufield & Byers, highlighted the growth of vertical video viewing in her 2015 Internet Trends Report, from 5% of video viewing in 2010 to 29% in 2015. Vertical video ads like Snapchat's are watched in their entirety nine times more frequently than landscape video ads.[16]

Color model and depth

The color model defines the video color representation and maps encoded color values to visible colors reproduced by the system. There are several such representations in common use: typically, YIQ is used in NTSC television, YUV is used in PAL television, YDbDr is used by SECAM television, and YCbCr is used for digital video.[17][18]

The number of distinct colors a pixel can represent depends on the color depth expressed in the number of bits per pixel. A common way to reduce the amount of data required in digital video is by chroma subsampling (e.g., 4:4:4, 4:2:2, etc.). Because the human eye is less sensitive to details in color than brightness, the luminance data for all pixels is maintained, while the chrominance data is averaged for a number of pixels in a block, and the same value is used for all of them. For example, this results in a 50% reduction in chrominance data using 2-pixel blocks (4:2:2) or 75% using 4-pixel blocks (4:2:0). This process does not reduce the number of possible color values that can be displayed, but it reduces the number of distinct points at which the color changes.[12][17][18]
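The reductions quoted above can be checked with a small calculation. The sketch below reads a J:a:b label in the usual way, as chroma samples kept in a block J pixels wide and two rows high; that reading, the 8-bit default, and the function names are assumptions added for illustration.

```python
from fractions import Fraction


def chroma_fraction(scheme):
    """Fraction of chroma samples kept under a J:a:b subsampling label.

    The label describes a block J pixels wide and 2 rows high: 'a' chroma
    samples are kept in the first row and 'b' in the second.
    """
    j, a, b = (int(part) for part in scheme.split(":"))
    return Fraction(a + b, 2 * j)


def bits_per_pixel(scheme, bit_depth=8):
    """Average bits per pixel: one luma sample plus two chroma planes."""
    return float(bit_depth * (1 + 2 * chroma_fraction(scheme)))


def average_pairs(chroma_row):
    """Average chroma over 2-pixel blocks and reuse the value for both pixels."""
    averaged = []
    for start in range(0, len(chroma_row), 2):
        block = chroma_row[start:start + 2]
        mean = sum(block) / len(block)
        averaged.extend([mean] * len(block))
    return averaged


if __name__ == "__main__":
    for scheme in ("4:4:4", "4:2:2", "4:2:0"):
        kept = chroma_fraction(scheme)
        print(scheme, "keeps", kept, "of chroma,",
              bits_per_pixel(scheme), "bits per pixel at 8 bits per sample")
    print(average_pairs([10, 20, 30, 50]))
```

The averaged row still uses the full range of values, but the color can now change only at every second pixel, which matches the last sentence of the paragraph above.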

Video quality

Video quality can be measured with formal metrics like peak signal-to-noise ratio (PSNR) or through subjective video quality assessment using expert observation. Many subjective video quality methods are described in the ITU-R recommendation BT.500. One of the standardized methods is the Double Stimulus Impairment Scale (DSIS). In DSIS, each expert views an unimpaired reference video, followed by an impaired version of the same video. The expert then rates the impaired video using a scale ranging from "impairments are imperceptible" to "impairments are very annoying."
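PSNR compares an impaired frame with its reference through the mean squared error of their samples. The following is a minimal sketch that treats a frame as a flat list of sample values and assumes 8-bit samples (peak value 255) by default; the function names are illustrative.

```python
import math


def mean_squared_error(reference, impaired):
    """Average squared difference between corresponding samples."""
    if len(reference) != len(impaired):
        raise ValueError("frames must have the same number of samples")
    total = sum((r - i) ** 2 for r, i in zip(reference, impaired))
    return total / len(reference)


def psnr(reference, impaired, peak=255):
    """Peak signal-to-noise ratio in decibels; infinite for identical frames."""
    error = mean_squared_error(reference, impaired)
    if error == 0:
        return math.inf
    return 10 * math.log10(peak ** 2 / error)


if __name__ == "__main__":
    reference = [100, 100, 100, 100]
    impaired = [101, 99, 101, 99]
    print(round(psnr(reference, impaired), 2), "dB")
```

Higher values mean the impaired frame is numerically closer to the reference, which does not always agree with how viewers rate the same material in a subjective test.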

Video compression method (digital only)

Uncompressed video delivers maximum quality, but at a very high data rate. A variety of methods are used to compress video streams, with the most effective ones using a group of pictures (GOP) to reduce spatial and temporal redundancy. Broadly speaking, spatial redundancy is reduced by registering differences between parts of a single frame; this task is known as intraframe compression and is closely related to image compression. Likewise, temporal redundancy can be reduced by registering differences between frames; this task is known as interframe compression, including motion compensation and other techniques. The most common modern compression standards are MPEG-2, used for DVD, Blu-ray, and satellite television, and MPEG-4, used for AVCHD, mobile phones (3GP) and the Internet.[19][20]
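The idea of registering differences between frames can be illustrated with plain frame differencing. The sketch below is a toy model, not how MPEG-2 or MPEG-4 work internally: it stores the first frame of a group whole and every later frame as sample-by-sample differences from its predecessor, with no motion compensation and no entropy coding. `encode_gop` and `decode_gop` are hypothetical names, and frames are flat lists of sample values.

```python
def encode_gop(frames):
    """Store the first frame whole and later frames as differences."""
    key_frame = list(frames[0])
    deltas = []
    previous = key_frame
    for frame in frames[1:]:
        deltas.append([cur - prev for cur, prev in zip(frame, previous)])
        previous = frame
    return key_frame, deltas


def decode_gop(key_frame, deltas):
    """Rebuild every frame by adding each difference to the previous frame."""
    frames = [list(key_frame)]
    for delta in deltas:
        frames.append([prev + d for prev, d in zip(frames[-1], delta)])
    return frames


if __name__ == "__main__":
    frames = [[10, 10, 10], [10, 11, 10], [10, 11, 12]]
    key_frame, deltas = encode_gop(frames)
    print(deltas)  # mostly zeros when little changes between frames
    print(decode_gop(key_frame, deltas) == frames)
```

When consecutive frames are similar, the differences are mostly zeros, and a later coding stage can represent such data far more compactly than the original samples.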

Stereoscopic

Stereoscopic video for 3D film and other applications can be displayed using several different methods:[21][22]

- Two channels: a right channel for the right eye and a left channel for the left eye. Both channels may be viewed simultaneously by using light-polarizing filters 90 degrees off-axis from each other on two video projectors. These separately polarized channels are viewed wearing eyeglasses with matching polarization filters.

- Anaglyph 3D, where one channel is overlaid with two color-coded layers. This left and right layer technique is occasionally used for network broadcasts or recent anaglyph releases of 3D movies on DVD. Simple red/cyan plastic glasses provide the means to view the images discretely to form a stereoscopic view of the content.

- One channel with alternating left and right frames for the corresponding eye, using LCD shutter glasses that synchronize to the video to alternately block the image for each eye, so the appropriate eye sees the correct frame. This method is most common in computer virtual reality applications, such as in a Cave Automatic Virtual Environment, but reduces effective video frame rate by a factor of two.

Formats

Different layers of video transmission and storage each provide their own set of formats to choose from.

For transmission, there is a physical connector and signal protocol (see List of video connectors). A given physical link can carry certain display standards that specify a particular refresh rate, display resolution, and color space.

Many analog and digital recording formats are in use, and digital video clips can also be stored on a computer file system as files, which have their own formats. In addition to the physical format used by the data storage device or transmission medium, the stream of ones and zeros that is sent must be in a particular digital video coding format, of which a number are available.

Analog video

Analog video is a video signal represented by one or more analog signals. Analog color video signals include luminance (brightness, Y) and chrominance (C). When combined into one channel, as is the case among others with NTSC, PAL, and SECAM, it is called composite video. Analog video may be carried in separate channels, as in two-channel S-Video (YC) and multi-channel component video formats.

Analog video is used in both consumer and professional television production applications.

- Composite video (single-channel RCA)

- S-Video (2-channel YC)

Digital video

Several digital video signal formats have been adopted, including serial digital interface (SDI), Digital Visual Interface (DVI), High-Definition Multimedia Interface (HDMI) and DisplayPort.

Transport medium

Video can be transmitted or transported in a variety of ways, including wireless terrestrial television as an analog or digital signal and coaxial cable in a closed-circuit system as an analog signal. Broadcast or studio cameras use a single or dual coaxial cable system using serial digital interface (SDI). See List of video connectors for information about physical connectors and related signal standards.

Video may be transported over networks and other shared digital communications links using, for instance, MPEG transport stream, SMPTE 2022 and SMPTE 2110.

Display standards

Digital television

Digital television broadcasts use the MPEG-2 and other video coding formats and include:

- ATSC – United States, Canada, Mexico, Korea

- Digital Video Broadcasting (DVB) – Europe

- ISDB – Japan

- Digital Multimedia Broadcasting (DMB) – Korea

Analog television

Analog television broadcast standards include:

- Field-sequential color system (FCS) – US, Russia; obsolete

- Multiplexed Analogue Components (MAC) – Europe; obsolete

- Multiple sub-Nyquist sampling encoding (MUSE) – Japan

- NTSC – United States, Canada, Japan

- EDTV-II "Clear-Vision" – NTSC extension, Japan

- PAL – Europe, Asia, Oceania

- RS-343 (military)

- SECAM – France, former Soviet Union, Central Africa

- CCIR System A

- CCIR System B

- CCIR System G

- CCIR System H

- CCIR System I

- CCIR System M

An analog video format consists of more information than the visible content of the frame. Preceding and following the image are lines and pixels containing metadata and synchronization information. This surrounding margin is known as a blanking interval or blanking region; the horizontal and vertical front porch and back porch are the building blocks of the blanking interval.

Computer displays

Computer display standards specify a combination of aspect ratio, display size, display resolution, color depth, and refresh rate. A list of common resolutions is available.

Recording

Early television was almost exclusively a live medium, with some programs recorded to film for historical purposes using Kinescope. The analog video tape recorder was commercially introduced in 1951. The following list is in rough chronological order. All formats listed were sold to and used by broadcasters, video producers, or consumers; or were important historically.[23][24]

- VERA (BBC experimental format ca. 1952)

- 2" Quadruplex videotape (Ampex, 1956)

- 1" Type A videotape (Ampex)

- 1/2" EIAJ (1969)

- U-matic 3/4" (Sony)

- 1/2" Cartrivision (Avco)

- VCR, VCR-LP, SVR

- 1" Type B videotape (Robert Bosch GmbH)

- 1" Type C videotape (Ampex, Marconi and Sony)

- Betamax (Sony)

- VHS (JVC)

- Video 2000 (Philips)

- 2" Helical Scan Videotape (IVC)

- 1/4" CVC (Funai)

- Betacam (Sony)

- HDVS (Sony)[25]

- Betacam SP (Sony)

- Video8 (Sony) (1986)

- S-VHS (JVC) (1987)

- VHS-C (JVC)

- Pixelvision (Fisher-Price)

- UniHi 1/2" HD (Sony)[25]

- Hi8 (Sony) (mid-1990s)

- W-VHS (JVC) (1994)

Digital video tape recorders offered improved quality compared to analog recorders.[24][26]

Optical storage media offered an alternative, especially in consumer applications, to bulky tape formats.[23][27]

- Blu-ray Disc (Sony)

- China Blue High-definition Disc (CBHD)

- DVD (was Super Density Disc, DVD Forum)

- Professional Disc

- Universal Media Disc (UMD) (Sony)

- Enhanced Versatile Disc (EVD, Chinese government-sponsored)

- HD DVD (NEC and Toshiba)

- HD-VMD

- Capacitance Electronic Disc

- Laserdisc (MCA and Philips)

- Television Electronic Disc (Teldec and Telefunken)

- VHD (JVC)

- Video CD

Digital encoding formats

A video codec is software or hardware that compresses and decompresses digital video. In the context of video compression, codec is a portmanteau of encoder and decoder, while a device that only compresses is typically called an encoder, and one that only decompresses is a decoder. The compressed data format usually conforms to a standard video coding format. The compression is typically lossy, meaning that the compressed video lacks some information present in the original video. A consequence of this is that decompressed video has lower quality than the original, uncompressed video because there is insufficient information to accurately reconstruct the original video.[28]
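Why lossy compression cannot be undone can be shown with quantization, the step in which values are rounded to a coarser scale. The sketch below is a deliberately simplified assumption: real codecs typically quantize transformed data rather than raw samples, and the names `quantize` and `dequantize` are hypothetical.

```python
def quantize(samples, step):
    """Encoder side: keep only the nearest multiple of 'step' (as an index)."""
    return [round(sample / step) for sample in samples]


def dequantize(levels, step):
    """Decoder side: scale the indices back up; the rounding error is gone."""
    return [level * step for level in levels]


if __name__ == "__main__":
    original = [12, 37, 200]
    levels = quantize(original, step=10)
    restored = dequantize(levels, step=10)
    print(levels)    # smaller numbers, cheaper to store
    print(restored)  # close to the original, but not identical
```

The decoder receives only the rounded indices, so nothing in the compressed data tells it whether a restored value of 10 was originally 12 or 8.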

See also

- General

- Video format

- Video usage

- Video screen recording software

References

1. "Video – HiDef Audio and Video". hidefnj.com. Archived from the original on May 14, 2017. Retrieved March 30, 2017.

2. "video", Online Etymology Dictionary.

3. Amidon, Audrey (June 25, 2013). "Film Preservation 101: What's the Difference Between a Film and a Video?". The Unwritten Record. US National Archives.

4. "Vocademy - Learn for Free - Electronics Technology - Analog Circuits - Analog Television". vocademy.net. Retrieved June 29, 2024.

5. Elen, Richard. "TV Technology 10. Roll VTR". Archived from the original on October 27, 2011.

6. "Vintage Umatic VCR – Sony VO-1600. The worlds first VCR. 1971". Rewind Museum. Archived from the original on February 22, 2014. Retrieved February 21, 2014.

7. Follows, Stephen (February 11, 2019). "The use of digital vs celluloid film on Hollywood movies". Archived from the original on April 11, 2022. Retrieved February 19, 2022.

8. Soseman, Ned. "What's the difference between 59.94fps and 60fps?". Archived from the original on June 29, 2017. Retrieved July 12, 2017.

9. Watson, Andrew B. (1986). "Temporal Sensitivity" (PDF). Sensory Processes and Perception. Archived from the original (PDF) on March 8, 2016.

10. Bovik, Alan C. (2005). Handbook of image and video processing (2nd ed.). Amsterdam: Elsevier Academic Press. pp. 14–21. ISBN 978-0-08-053361-2. OCLC 190789775. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

11. Wright, Steve (2002). Digital compositing for film and video. Boston: Focal Press. ISBN 978-0-08-050436-0. OCLC 499054489. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

12. Brown, Blain (2013). Cinematography: Theory and Practice: Image Making for Cinematographers and Directors. Taylor & Francis. pp. 159–166. ISBN 9781136047381.

13. Parker, Michael (2013). Digital Video Processing for Engineers: a Foundation for Embedded Systems Design. Suhel Dhanani. Amsterdam. ISBN 978-0-12-415761-3. OCLC 815408915. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

14. Bing, Benny (2010). 3D and HD broadband video networking. Boston: Artech House. pp. 57–70. ISBN 978-1-60807-052-7. OCLC 672322796. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

15. Stump, David (2022). Digital cinematography: fundamentals, tools, techniques, and workflows (2nd ed.). New York, NY: Routledge. pp. 125–139. ISBN 978-0-429-46885-8. OCLC 1233023513. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

16. Constine, Josh (May 27, 2015). "The Most Important Insights From Mary Meeker's 2015 Internet Trends Report". TechCrunch. Archived from the original on August 4, 2015. Retrieved August 6, 2015.

17. Li, Ze-Nian; Drew, Mark S.; Liu, Jiangchun (2021). Fundamentals of multimedia (3rd ed.). Cham, Switzerland: Springer. pp. 108–117. ISBN 978-3-030-62124-7. OCLC 1243420273. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

18. Banerjee, Sreeparna (2019). "Video in Multimedia". Elements of multimedia. Boca Raton: CRC Press. ISBN 978-0-429-43320-7. OCLC 1098279086. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

19. Beach, Andy (2008). Real World Video Compression. Peachpit Press. ISBN 978-0-13-208951-7. OCLC 1302274863. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

20. Sanz, Jorge L. C. (1996). Image Technology: Advances in Image Processing, Multimedia and Machine Vision. Berlin, Heidelberg: Springer Berlin Heidelberg. ISBN 978-3-642-58288-2. OCLC 840292528. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

21. Ekmekcioglu, Erhan; Fernando, Anil; Worrall, Stewart (2013). 3DTV: processing and transmission of 3D video signals. Chichester, West Sussex, United Kingdom: Wiley & Sons. ISBN 978-1-118-70573-5. OCLC 844775006. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

22. Block, Bruce A.; McNally, Phillip (2013). 3D storytelling: how stereoscopic 3D works and how to use it. Burlington, MA: Taylor & Francis. ISBN 978-1-136-03881-5. OCLC 858027807. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

23. Tozer, E.P.J. (2013). Broadcast engineer's reference book (1st ed.). New York. pp. 470–476. ISBN 978-1-136-02417-7. OCLC 1300579454. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

24. Pizzi, Skip; Jones, Graham (2014). A Broadcast Engineering Tutorial for Non-Engineers (4th ed.). Hoboken: Taylor and Francis. pp. 145–152. ISBN 978-1-317-90683-4. OCLC 879025861. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

25. "Sony HD Formats Guide (2008)" (PDF). pro.sony.com. Archived (PDF) from the original on March 6, 2015. Retrieved November 16, 2014.

26. Ward, Peter (2015). "Video Recording Formats". Multiskilling for television production. Alan Bermingham, Chris Wherry. New York: Focal Press. ISBN 978-0-08-051230-3. OCLC 958102392. Archived from the original on August 25, 2022. Retrieved August 25, 2022.

27. Merskin, Debra L., ed. (2020). The Sage international encyclopedia of mass media and society. Thousand Oaks, California. ISBN 978-1-4833-7551-9. OCLC 1130315057. Archived from the original on June 3, 2020. Retrieved August 25, 2022.

28. Ghanbari, Mohammed (2003). Standard Codecs: Image Compression to Advanced Video Coding. Institution of Engineering and Technology. pp. 1–12. ISBN 9780852967102. Archived from the original on August 8, 2019. Retrieved November 27, 2019.