Big O notation


Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. Big O is a member of a family of notations invented by German mathematicians Paul Bachmann,[1] Edmund Landau,[2] and others, collectively called Bachmann–Landau notation or asymptotic notation. The letter O was chosen by Bachmann to stand for Ordnung, meaning the order of approximation.

In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows.[3] In analytic number theory, big O notation is often used to express a bound on the difference between an arithmetical function and a better understood approximation; a famous example of such a difference is the remainder term in the prime number theorem. Big O notation is also used in many other fields to provide similar estimates.

Big O notation characterizes functions according to their growth rates: different functions with the same asymptotic growth rate may be represented using the same O notation. The letter O is used because the growth rate of a function is also referred to as the order of the function. A description of a function in terms of big O notation usually only provides an upper bound on the growth rate of the function.

Associated with big O notation are several related notations, using the symbols $o$, $\Omega$, $\omega$, and $\Theta$, to describe other kinds of bounds on asymptotic growth rates.

Formal definition

Let $f$, the function to be estimated, be a real or complex valued function, and let $g$, the comparison function, be a real valued function. Let both functions be defined on some unbounded subset of the positive real numbers, and let $g(x)$ be strictly positive for all large enough values of $x$.[4] One writes

$$f(x) = O(g(x)) \quad \text{as } x \to \infty$$

and it is read "$f(x)$ is big O of $g(x)$" if the absolute value of $f(x)$ is at most a positive constant multiple of $g(x)$ for all sufficiently large values of $x$. That is, $f(x) = O(g(x))$ if there exists a positive real number $M$ and a real number $x_0$ such that

$$|f(x)| \le M g(x) \quad \text{for all } x \ge x_0.$$

In many contexts, the assumption that we are interested in the growth rate as the variable $x$ goes to infinity is left unstated, and one writes more simply that

$$f(x) = O(g(x)).$$

The notation can also be used to describe the behavior of $f$ near some real number $a$ (often, $a = 0$): we say

$$f(x) = O(g(x)) \quad \text{as } x \to a$$

if there exist positive numbers $\delta$ and $M$ such that for all defined $x$ with $0 < |x - a| < \delta$,

$$|f(x)| \le M g(x).$$

As $g(x)$ is chosen to be strictly positive for such values of $x$, both of these definitions can be unified using the limit superior:

$$f(x) = O(g(x)) \quad \text{as } x \to a$$

if

$$\limsup_{x \to a} \frac{|f(x)|}{g(x)} < \infty.$$

In both of these definitions the limit point $a$ (whether $\infty$ or not) is a cluster point of the domains of $f$ and $g$, i.e., in every neighbourhood of $a$ there have to be infinitely many points in common. Moreover, as pointed out in the article about the limit inferior and limit superior, the $\limsup_{x \to a}$ (at least on the extended real number line) always exists.

In computer science, a slightly more restrictive definition is common: $f$ and $g$ are both required to be functions from some unbounded subset of the positive integers to the nonnegative real numbers; then $f(x) = O(g(x))$ if there exist positive integer numbers $M$ and $n_0$ such that $f(n) \le M g(n)$ for all $n \ge n_0$.[5]

Example

In typical usage the $O$ notation is asymptotical, that is, it refers to very large $x$. In this setting, the contribution of the terms that grow "most quickly" will eventually make the other ones irrelevant. As a result, the following simplification rules can be applied:

- If $f(x)$ is a sum of several terms, if there is one with largest growth rate, it can be kept, and all others omitted.

- If $f(x)$ is a product of several factors, any constants (factors in the product that do not depend on $x$) can be omitted.

For example, let $f(x) = 6x^4 - 2x^3 + 5$, and suppose we wish to simplify this function, using $O$ notation, to describe its growth rate as $x$ approaches infinity. This function is the sum of three terms: $6x^4$, $-2x^3$, and $5$. Of these three terms, the one with the highest growth rate is the one with the largest exponent as a function of $x$, namely $6x^4$. Now one may apply the second rule: $6x^4$ is a product of $6$ and $x^4$ in which the first factor does not depend on $x$. Omitting this factor results in the simplified form $x^4$. Thus, we say that $f(x)$ is a "big O" of $x^4$. Mathematically, we can write $f(x) = O(x^4)$.

One may confirm this calculation using the formal definition: let $f(x) = 6x^4 - 2x^3 + 5$ and $g(x) = x^4$. Applying the formal definition from above, the statement that $f(x) = O(x^4)$ is equivalent to its expansion,

$$|f(x)| \le M x^4$$

for some suitable choice of a real number $x_0$ and a positive real number $M$ and for all $x > x_0$. To prove this, let $x_0 = 1$ and $M = 13$. Then, for all $x > x_0$:

$$|6x^4 - 2x^3 + 5| \le 6x^4 + |2x^3| + 5 \le 6x^4 + 2x^4 + 5x^4 = 13x^4,$$

so

$$|6x^4 - 2x^3 + 5| \le 13x^4.$$
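The bound with $M = 13$ and $x_0 = 1$ can also be spot-checked numerically. The following is a minimal sketch rather than a proof: it only samples finitely many points, and the helper names `f` and `bound_holds` are chosen here for illustration.

```python
def f(x: float) -> float:
    """The example polynomial f(x) = 6x^4 - 2x^3 + 5."""
    return 6 * x**4 - 2 * x**3 + 5


def bound_holds(x: float, M: float = 13.0) -> bool:
    """Check |f(x)| <= M * x^4 at a single point x."""
    return abs(f(x)) <= M * x**4


# Sample points with x > x0 = 1 (a finite sample, so only a sanity check).
samples = [1.0 + k / 10 for k in range(1, 1000)]
all_ok = all(bound_holds(x) for x in samples)
print(all_ok)
```

Below $x_0$ the inequality is allowed to fail; for instance `bound_holds(0.5)` is false, which is consistent with the definition because only sufficiently large $x$ matter.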

Usage

Big O notation has two main areas of application:

- In mathematics, it is commonly used to describe how closely a finite series approximates a given function, especially in the case of a truncated Taylor series or asymptotic expansion.

- In computer science, it is useful in the analysis of algorithms.

In both applications, the function $g(x)$ appearing within the $O(\cdot)$ is typically chosen to be as simple as possible, omitting constant factors and lower order terms.

There are two formally close, but noticeably different, usages of this notation:[citation needed]

- infinite asymptotics

- infinitesimal asymptotics.

This distinction is only in application and not in principle, however: the formal definition for the "big O" is the same for both cases, only with different limits for the function argument.[original research?]

Infinite asymptotics

Big O notation is useful when analyzing algorithms for efficiency. For example, the time (or the number of steps) it takes to complete a problem of size $n$ might be found to be $T(n) = 4n^2 - 2n + 2$. As $n$ grows large, the $n^2$ term will come to dominate, so that all other terms can be neglected. For instance, when $n = 500$, the term $4n^2$ is 1000 times as large as the $2n$ term. Ignoring the latter would have negligible effect on the expression's value for most purposes. Further, the coefficients become irrelevant if we compare to any other order of expression, such as an expression containing a term $n^3$ or $n^4$. Even if $T(n) = 1{,}000{,}000\,n^2$, if $U(n) = n^3$, the latter will always exceed the former once $n$ grows larger than $1{,}000{,}000$, viz. $T(1{,}000{,}000) = 1{,}000{,}000^3 = U(1{,}000{,}000)$. Additionally, the number of steps depends on the details of the machine model on which the algorithm runs, but different types of machines typically vary by only a constant factor in the number of steps needed to execute an algorithm. So the big O notation captures what remains: we write either

$$T(n) = O(n^2)$$

or

$$T(n) \in O(n^2)$$

and say that the algorithm has order of $n^2$ time complexity. The sign "=" is not meant to express "is equal to" in its normal mathematical sense, but rather a more colloquial "is", so the second expression is sometimes considered more accurate (see the "Equals sign" discussion below) while the first is considered by some as an abuse of notation.[6]
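The dominance of the quadratic term can be made concrete with a few evaluations of $T(n) = 4n^2 - 2n + 2$. This is an illustrative sketch; the function name `T` and the sample sizes are chosen here and are not part of any library.

```python
def T(n: int) -> int:
    """Step count from the example: T(n) = 4n^2 - 2n + 2."""
    return 4 * n**2 - 2 * n + 2


for n in (10, 500, 10_000, 1_000_000):
    # T(n) / n^2 settles near the leading coefficient 4 as n grows,
    # which is why T(n) = O(n^2) with, for example, M = 4 and n0 = 1.
    print(n, T(n) / n**2)

# At n = 500 the 4n^2 term is 1000 times the 2n term.
term_ratio = (4 * 500**2) // (2 * 500)
print(term_ratio)
```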

Infinitesimal asymptotics

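Big O can also be used to describe the error term in an approximation to a mathematical function. The most significant terms are written explicitly, and then the least-significant terms are summarized in a single big O term. Consider, for example, the exponential series and two expressions of it that are valid when $x$ is small:

$$\begin{aligned}
e^x &= 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \dotsb && \text{for all finite } x \\
&= 1 + x + \frac{x^2}{2} + O(x^3) && \text{as } x \to 0 \\
&= 1 + x + O(x^2) && \text{as } x \to 0
\end{aligned}$$

The middle expression (the one with $O(x^3)$) means the absolute value of the error $e^x - (1 + x + x^2/2)$ is at most some constant times $|x^3|$ when $x$ is close enough to 0.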
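A quick numerical look at the error term illustrates what the $O(x^3)$ statement claims. The sketch below uses only the standard `math` module; the helper name `taylor_error` and the sample points are chosen here for illustration. The ratio of the error to $|x|^3$ stays bounded near 0 (it approaches $1/6$, the coefficient of the first omitted term).

```python
import math


def taylor_error(x: float) -> float:
    """Absolute error of the approximation e^x ~ 1 + x + x^2/2."""
    return abs(math.exp(x) - (1 + x + x**2 / 2))


for x in (0.5, 0.1, 0.01, -0.01, -0.1):
    # If the error is O(x^3) as x -> 0, this ratio must stay bounded.
    print(x, taylor_error(x) / abs(x) ** 3)
```

Very small values of $x$ are avoided on purpose, since floating-point cancellation would then dominate the computed error.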

Properties

If the function $f$ can be written as a finite sum of other functions, then the fastest growing one determines the order of $f(n)$. For example,

$$f(n) = 9 \log n + 5 (\log n)^4 + 3n^2 + 2n^3 = O(n^3) \quad \text{as } n \to \infty.$$

In particular, if a function may be bounded by a polynomial in $n$, then as $n$ tends to infinity, one may disregard lower-order terms of the polynomial. The sets $O(n^c)$ and $O(c^n)$ are very different. If $c$ is greater than one, then the latter grows much faster. A function that grows faster than $n^c$ for any $c$ is called superpolynomial. One that grows more slowly than any exponential function of the form $c^n$ is called subexponential. An algorithm can require time that is both superpolynomial and subexponential; examples of this include the fastest known algorithms for integer factorization and the function $n^{\log n}$.

We may ignore any powers of $n$ inside of the logarithms. The set $O(\log n)$ is exactly the same as $O(\log(n^c))$. The logarithms differ only by a constant factor (since $\log(n^c) = c \log n$) and thus the big O notation ignores that. Similarly, logs with different constant bases are equivalent. On the other hand, exponentials with different bases are not of the same order. For example, $2^n$ and $3^n$ are not of the same order.

Changing units may or may not affect the order of the resulting algorithm. Changing units is equivalent to multiplying the appropriate variable by a constant wherever it appears. For example, if an algorithm runs in the order of $n^2$, replacing $n$ by $cn$ means the algorithm runs in the order of $c^2 n^2$, and the big O notation ignores the constant $c^2$. This can be written as $c^2 n^2 = O(n^2)$. If, however, an algorithm runs in the order of $2^n$, replacing $n$ with $cn$ gives $2^{cn} = (2^c)^n$. This is not equivalent to $2^n$ in general. Changing variables may also affect the order of the resulting algorithm. For example, if an algorithm's run time is $O(n)$ when measured in terms of the number $n$ of digits of an input number $x$, then its run time is $O(\log x)$ when measured as a function of the input number $x$ itself, because $n = O(\log x)$.
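The effect of rescaling the variable can be seen by comparing cost ratios before and after the change. In this sketch the conversion factor `C = 3` and the function names are hypothetical, chosen only to illustrate the point: the ratio is constant for a quadratic cost and for logarithms of powers, but unbounded for an exponential cost.

```python
import math

C = 3  # hypothetical unit-conversion factor, chosen only for illustration


def poly_ratio(n: int) -> int:
    """(C*n)^2 / n^2: cost ratio after rescaling a quadratic run time."""
    return (C * n) ** 2 // n**2


def exp_ratio(n: int) -> int:
    """2^(C*n) / 2^n: cost ratio after rescaling an exponential run time."""
    return 2 ** (C * n) // 2**n


def log_ratio(n: int) -> float:
    """log(n^C) / log(n): ratio between the two logarithms."""
    return math.log(n**C) / math.log(n)


for n in (10, 20, 40):
    # poly_ratio stays at C^2 and log_ratio stays at C, so both are absorbed
    # by the big O constant; exp_ratio equals 2^((C-1)*n) and keeps growing.
    print(n, poly_ratio(n), exp_ratio(n), round(log_ratio(n), 6))
```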

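Product

If $f_1 = O(g_1)$ and $f_2 = O(g_2)$ then $f_1 f_2 = O(g_1 g_2)$. Likewise, $f \cdot O(g) = O(fg)$.

Sum

If $f_1 = O(g_1)$ and $f_2 = O(g_2)$ then $f_1 + f_2 = O(\max(g_1, g_2))$. It follows that if $f_1 = O(g)$ and $f_2 = O(g)$ then $f_1 + f_2 \in O(g)$. In other words, this second statement says that $O(g)$ is a convex cone.

Multiplication by a constant

Let $k$ be a nonzero constant. Then $O(|k| \cdot g) = O(g)$. In other words, if $f = O(g)$, then $k \cdot f = O(g)$.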

Multiple variables

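Big $O$ (and little o, Ω, etc.) can also be used with multiple variables. To define big $O$ formally for multiple variables, suppose $f$ and $g$ are two functions defined on some subset of $\mathbb{R}^n$. We say

$$f(\mathbf{x}) \text{ is } O(g(\mathbf{x})) \quad \text{as } \mathbf{x} \to \infty$$

if and only if there exist constants $M$ and $C > 0$ such that $|f(\mathbf{x})| \le C |g(\mathbf{x})|$ for all $\mathbf{x}$ with $x_i \ge M$ for some $i$.[7] Equivalently, the condition that $x_i \ge M$ for some $i$ can be written $\|\mathbf{x}\|_\infty \ge M$, where $\|\mathbf{x}\|_\infty$ denotes the Chebyshev norm. For example, the statement

$$f(n, m) = n^2 + m^3 + O(n + m) \quad \text{as } n, m \to \infty$$

asserts that there exist constants $C$ and $M$ such that

$$|f(n, m) - (n^2 + m^3)| \le C |n + m|$$

whenever either $m \ge M$ or $n \ge M$ holds. This definition allows all of the coordinates of $\mathbf{x}$ to increase to infinity. In particular, the statement

$$f(n, m) = O(n^m) \quad \text{as } n, m \to \infty$$

(i.e., $\exists C \, \exists M \, \forall n \, \forall m \, \cdots$) is quite different from

$$\forall m \colon f(n, m) = O(n^m) \quad \text{as } n \to \infty$$

(i.e., $\forall m \, \exists C \, \exists M \, \forall n \, \cdots$).

Under this definition, the subset on which a function is defined is significant when generalizing statements from the univariate setting to the multivariate setting. For example, if $f(n, m) = 1$ and $g(n, m) = n$, then $f(n, m) = O(g(n, m))$ if we restrict $f$ and $g$ to $[1, \infty)^2$, but not if they are defined on $[0, \infty)^2$.

This is not the only generalization of big O to multivariate functions, and in practice, there is some inconsistency in the choice of definition.[8]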

Matters of notation

Equals sign

The statement "$f(x)$ is $O(g(x))$" as defined above is usually written as $f(x) = O(g(x))$. Some consider this to be an abuse of notation, since the use of the equals sign could be misleading as it suggests a symmetry that this statement does not have. As de Bruijn says, $O(x) = O(x^2)$ is true but $O(x^2) = O(x)$ is not.[9] Knuth describes such statements as "one-way equalities", since if the sides could be reversed, "we could deduce ridiculous things like $n = n^2$ from the identities $n = O(n^2)$ and $n^2 = O(n^2)$."[10] In another letter, Knuth also pointed out that "the equality sign is not symmetric with respect to such notations", as, in this notation, "mathematicians customarily use the = sign as they use the word "is" in English: Aristotle is a man, but a man isn't necessarily Aristotle".[11]

For these reasons, it would be more precise to use set notation and write $f(x) \in O(g(x))$ (read as: "$f(x)$ is an element of $O(g(x))$", or "$f(x)$ is in the set $O(g(x))$"), thinking of $O(g(x))$ as the class of all functions $h(x)$ such that $|h(x)| \le C g(x)$ for some positive real number $C$.[10] However, the use of the equals sign is customary.[9][10]

Other arithmetic operators

Big O notation can also be used in conjunction with other arithmetic operators in more complicated equations. For example, $h(x) + O(f(x))$ denotes the collection of functions having the growth of $h(x)$ plus a part whose growth is limited to that of $f(x)$. Thus,

$$g(x) = h(x) + O(f(x))$$

expresses the same as

$$g(x) - h(x) = O(f(x)).$$

Example

Suppose an algorithm is being developed to operate on a set of $n$ elements. Its developers are interested in finding a function $T(n)$ that will express how long the algorithm will take to run (in some arbitrary measurement of time) in terms of the number of elements in the input set. The algorithm works by first calling a subroutine to sort the elements in the set and then performing its own operations. The sort has a known time complexity of $O(n^2)$, and after the subroutine runs the algorithm must take an additional $55n^3 + 2n + 10$ steps before it terminates. Thus the overall time complexity of the algorithm can be expressed as $T(n) = 55n^3 + O(n^2)$. Here the terms $2n + 10$ are subsumed within the faster-growing $O(n^2)$. Again, this usage disregards some of the formal meaning of the "=" symbol, but it does allow one to use the big O notation as a kind of convenient placeholder.

Multiple uses

In more complicated usage, $O(\cdot)$ can appear in different places in an equation, even several times on each side. For example, the following are true for $n \to \infty$:

$$\begin{aligned}
(n + 1)^2 &= n^2 + O(n), \\
(n + O(n^{1/2})) \cdot (n + O(\log n))^2 &= n^3 + O(n^{5/2}), \\
n^{O(1)} &= O(e^n).
\end{aligned}$$

The meaning of such statements is as follows: for any functions which satisfy each $O(\cdot)$ on the left side, there are some functions satisfying each $O(\cdot)$ on the right side, such that substituting all these functions into the equation makes the two sides equal. For example, the third equation above means: "For any function $f(n) = O(1)$, there is some function $g(n) = O(e^n)$ such that $n^{f(n)} = g(n)$." In terms of the "set notation" above, the meaning is that the class of functions represented by the left side is a subset of the class of functions represented by the right side. In this use the "=" is a formal symbol that, unlike the usual use of "=", is not a symmetric relation. Thus for example $n^{O(1)} = O(e^n)$ does not imply the false statement $O(e^n) = n^{O(1)}$.

Typesetting

Big O is typeset as an italicized uppercase "O", as in the following example: $O(n^2)$.[12][13] In TeX, it is produced by simply typing O inside math mode. Unlike Greek-named Bachmann–Landau notations, it needs no special symbol. However, some authors use the calligraphic variant $\mathcal{O}$ instead.[14][15]

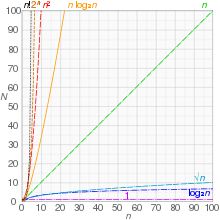

Orders of common functions

Here is a list of classes of functions that are commonly encountered when analyzing the running time of an algorithm. In each case, $c$ is a positive constant and $n$ increases without bound. The slower-growing functions are generally listed first.

| Notation | Name | Example |
|---|---|---|
| $O(1)$ | constant | Finding the median value for a sorted array of numbers; calculating $(-1)^n$; using a constant-size lookup table |
| $O(\alpha(n))$ | inverse Ackermann function | Amortized complexity per operation for the disjoint-set data structure |
| $O(\log \log n)$ | double logarithmic | Average number of comparisons spent finding an item using interpolation search in a sorted array of uniformly distributed values |
| $O(\log n)$ | logarithmic | Finding an item in a sorted array with a binary search or a balanced search tree, as well as all operations in a binomial heap |
| $O((\log n)^c)$, $c > 1$ | polylogarithmic | Matrix chain ordering can be solved in polylogarithmic time on a parallel random-access machine. |
| $O(n^c)$, $0 < c < 1$ | fractional power | Searching in a k-d tree |
| $O(n)$ | linear | Finding an item in an unsorted list or in an unsorted array; adding two $n$-bit integers by ripple carry |
| $O(n \log^* n)$ | $n$ log-star $n$ | Performing triangulation of a simple polygon using Seidel's algorithm, where $\log^*(n) = 0$ if $n \le 1$ and $\log^*(n) = 1 + \log^*(\log n)$ if $n > 1$ |
| $O(n \log n) = O(\log n!)$ | linearithmic, loglinear, quasilinear, or "$n \log n$" | Performing a fast Fourier transform; fastest possible comparison sort; heapsort and merge sort |
| $O(n^2)$ | quadratic | Multiplying two $n$-digit numbers by schoolbook multiplication; simple sorting algorithms, such as bubble sort, selection sort and insertion sort; (worst-case) bound on some usually faster sorting algorithms such as quicksort, Shellsort, and tree sort |
| $O(n^c)$ | polynomial or algebraic | Tree-adjoining grammar parsing; maximum matching for bipartite graphs; finding the determinant with LU decomposition |
| $L_n[\alpha, c] = e^{(c + o(1)) (\ln n)^\alpha (\ln \ln n)^{1 - \alpha}}$, $0 < \alpha < 1$ | L-notation or sub-exponential | Factoring a number using the quadratic sieve or number field sieve |
| $O(c^n)$, $c > 1$ | exponential | Finding the (exact) solution to the travelling salesman problem using dynamic programming; determining if two logical statements are equivalent using brute-force search |
| $O(n!)$ | factorial | Solving the travelling salesman problem via brute-force search; generating all unrestricted permutations of a poset; finding the determinant with Laplace expansion; enumerating all partitions of a set |

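The statement $f(n) = O(n!)$ is sometimes weakened to $f(n) = O(n^n)$ to derive simpler formulas for asymptotic complexity. For any $k > 0$ and $c > 0$, $O(n^c (\log n)^k)$ is a subset of $O(n^{c + \varepsilon})$ for any $\varepsilon > 0$, so it may be considered as a polynomial with some bigger order.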
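To get a feel for how far apart these classes are, one can evaluate a representative function from several of them at a few input sizes. This is an illustrative sketch only: the dictionary `growth` and the sample sizes are chosen here, and the strict ordering shown holds for these samples rather than for every small $n$.

```python
import math

# One representative function per class, listed from slower to faster growth.
growth = {
    "log n": lambda n: math.log2(n),
    "n": lambda n: n,
    "n log n": lambda n: n * math.log2(n),
    "n^2": lambda n: n**2,
    "2^n": lambda n: 2**n,
    "n!": lambda n: math.factorial(n),
}

for n in (8, 16, 32):
    values = [fn(n) for fn in growth.values()]
    print(n, values)
```

Already at $n = 32$ the factorial entry has 36 decimal digits, while the logarithmic entry is 5.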

Related asymptotic notations

Big $O$ is widely used in computer science. Together with some other related notations, it forms the family of Bachmann–Landau notations.[citation needed]

Little-o notation

Intuitively, the assertion "$f(x)$ is $o(g(x))$" (read "$f(x)$ is little-o of $g(x)$") means that $g(x)$ grows much faster than $f(x)$, or equivalently $f(x)$ grows much slower than $g(x)$. As before, let $f$ be a real or complex valued function and $g$ a real valued function, both defined on some unbounded subset of the positive real numbers, such that $g(x)$ is strictly positive for all large enough values of $x$. One writes

$$f(x) = o(g(x)) \quad \text{as } x \to \infty$$

if for every positive constant $\varepsilon$ there exists a constant $x_0$ such that

$$|f(x)| \le \varepsilon g(x) \quad \text{for all } x \ge x_0.$$

For example, one has

- $2x = o(x^2)$ and $1/x = o(1)$, both as $x \to \infty$.

The difference between the definition of the big-O notation and the definition of little-o is that while the former has to be true for at least one constant $M$, the latter must hold for every positive constant $\varepsilon$, however small.[17] In this way, little-o notation makes a stronger statement than the corresponding big-O notation: every function that is little-o of $g$ is also big-O of $g$, but not every function that is big-O of $g$ is little-o of $g$. For example, $2x^2 = O(x^2)$ but $2x^2 \ne o(x^2)$.

If $g(x)$ is nonzero, or at least becomes nonzero beyond a certain point, the relation $f(x) = o(g(x))$ is equivalent to

- $\lim_{x \to \infty} \frac{f(x)}{g(x)} = 0$ (and this is in fact how Landau[16] originally defined the little-o notation).

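Little-o respects a number of arithmetic operations. For example,

- if $c$ is a nonzero constant and $f = o(g)$ then $c \cdot f = o(g)$, and

- if $f = o(F)$ and $g = o(G)$ then $f \cdot g = o(F \cdot G)$.

It also satisfies a transitivity relation:

- if $f = o(g)$ and $g = o(h)$ then $f = o(h)$.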
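The contrast between little-o and big-O can be observed through the ratio $|f(x)|/g(x)$: it tends to 0 for a little-o pair, and merely stays bounded for a pair that is big-O only. The sketch below takes $f(x) = 2x$ against $g(x) = x^2$ and $f(x) = 2x^2$ against $g(x) = x^2$ as illustrative pairs; the helper name `ratio` and the sample points are chosen here.

```python
from typing import Callable


def ratio(f: Callable[[float], float], g: Callable[[float], float], x: float) -> float:
    """|f(x)| / g(x), assuming g(x) > 0 at the sampled point."""
    return abs(f(x)) / g(x)


xs = [10.0**k for k in range(1, 7)]

# 2x versus x^2: the ratio is 2/x, which shrinks towards 0 (little-o).
little_o = [ratio(lambda x: 2 * x, lambda x: x**2, x) for x in xs]

# 2x^2 versus x^2: the ratio is the constant 2 (big-O, but not little-o).
big_o_only = [ratio(lambda x: 2 * x**2, lambda x: x**2, x) for x in xs]

print(little_o)
print(big_o_only)
```

A finite sample cannot establish a limit, so this only illustrates the definitions rather than proving either relation.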

Big Omega notation

Another asymptotic notation is $\Omega$, read "big omega".[18] There are two widespread and incompatible definitions of the statement

- $f(x) = \Omega(g(x))$ as $x \to a$,

where $a$ is some real number, $\infty$, or $-\infty$, where $f$ and $g$ are real functions defined in a neighbourhood of $a$, and where $g$ is positive in this neighbourhood.

The Hardy–Littlewood definition is used mainly inanalytic number theory,and the Knuth definition mainly incomputational complexity theory;the definitions are not equivalent.

The Hardy–Littlewood definition

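In 1914 Godfrey Harold Hardy and John Edensor Littlewood introduced the new symbol $\Omega$,[19] which is defined as follows:

- $f(x) = \Omega(g(x))$ as $x \to \infty$ if $\limsup_{x \to \infty} \left| \frac{f(x)}{g(x)} \right| > 0$.

Thus $f(x) = \Omega(g(x))$ is the negation of $f(x) = o(g(x))$.

In 1916 the same authors introduced the two new symbols $\Omega_R$ and $\Omega_L$, defined as:[20]

- $f(x) = \Omega_R(g(x))$ as $x \to \infty$ if $\limsup_{x \to \infty} \frac{f(x)}{g(x)} > 0$;

- $f(x) = \Omega_L(g(x))$ as $x \to \infty$ if $\liminf_{x \to \infty} \frac{f(x)}{g(x)} < 0$.

These symbols were used by Edmund Landau, with the same meanings, in 1924.[21] After Landau, the notations were never used again exactly thus;[citation needed] $\Omega_R$ became $\Omega_+$ and $\Omega_L$ became $\Omega_-$.

These three symbols $\Omega$, $\Omega_+$, $\Omega_-$, as well as $f(x) = \Omega_\pm(g(x))$ (meaning that $f(x) = \Omega_+(g(x))$ and $f(x) = \Omega_-(g(x))$ are both satisfied), are currently used in analytic number theory.[22][23]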

Simple examples

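We have

- $\sin x = \Omega(1)$ as $x \to \infty$,

and more precisely

- $\sin x = \Omega_\pm(1)$ as $x \to \infty$.

We have

- $1 + \sin x = \Omega(1)$ as $x \to \infty$,

and more precisely

- $1 + \sin x = \Omega_+(1)$ as $x \to \infty$;

however

- $1 + \sin x \ne \Omega_-(1)$ as $x \to \infty$.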

The Knuth definition

In 1976 Donald Knuth published a paper to justify his use of the $\Omega$-symbol to describe a stronger property.[24] Knuth wrote: "For all the applications I have seen so far in computer science, a stronger requirement ... is much more appropriate". He defined

$$f(x) = \Omega(g(x)) \iff g(x) = O(f(x))$$

with the comment: "Although I have changed Hardy and Littlewood's definition of $\Omega$, I feel justified in doing so because their definition is by no means in wide use, and because there are other ways to say what they want to say in the comparatively rare cases when their definition applies."[24]

Family of Bachmann–Landau notations

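| Notation | Name[24] | Description | Formal definition | Limit definition[25][26][27][24][19] |
|---|---|---|---|---|
| $f(n) = o(g(n))$ | Small O; Small Oh; Little O; Little Oh | $f$ is dominated by $g$ asymptotically (for any constant factor $k$) | $\forall k > 0 \; \exists n_0 \; \forall n > n_0 \colon \lvert f(n) \rvert \le k \, g(n)$ | $\lim_{n \to \infty} \frac{f(n)}{g(n)} = 0$ |
| $f(n) = O(g(n))$ | Big O; Big Oh; Big Omicron | $\lvert f \rvert$ is asymptotically bounded above by $g$ (up to constant factor $k$) | $\exists k > 0 \; \exists n_0 \; \forall n > n_0 \colon \lvert f(n) \rvert \le k \, g(n)$ | $\limsup_{n \to \infty} \frac{\lvert f(n) \rvert}{g(n)} < \infty$ |
| $f(n) \asymp g(n)$ (Hardy's notation) or $f(n) = \Theta(g(n))$ (Knuth notation) | Of the same order as (Hardy); Big Theta (Knuth) | $f$ is asymptotically bounded by $g$ both above (with constant factor $k_2$) and below (with constant factor $k_1$) | $\exists k_1 > 0 \; \exists k_2 > 0 \; \exists n_0 \; \forall n > n_0 \colon k_1 \, g(n) \le f(n) \le k_2 \, g(n)$ | $f(n) = O(g(n))$ and $f(n) = \Omega(g(n))$ (Knuth's $\Omega$) |
| $f(n) \sim g(n)$ | Asymptotic equivalence | $f$ is equal to $g$ asymptotically | $\forall \varepsilon > 0 \; \exists n_0 \; \forall n > n_0 \colon \left\lvert \frac{f(n)}{g(n)} - 1 \right\rvert < \varepsilon$ | $\lim_{n \to \infty} \frac{f(n)}{g(n)} = 1$ |
| $f(n) = \Omega(g(n))$ | Big Omega in complexity theory (Knuth) | $f$ is bounded below by $g$ asymptotically | $\exists k > 0 \; \exists n_0 \; \forall n > n_0 \colon f(n) \ge k \, g(n)$ | $\liminf_{n \to \infty} \frac{f(n)}{g(n)} > 0$ |
| $f(n) = \omega(g(n))$ | Small Omega; Little Omega | $f$ dominates $g$ asymptotically | $\forall k > 0 \; \exists n_0 \; \forall n > n_0 \colon f(n) > k \, g(n)$ | $\lim_{n \to \infty} \frac{f(n)}{g(n)} = \infty$ |
| $f(n) = \Omega(g(n))$ | Big Omega in number theory (Hardy–Littlewood) | $\lvert f \rvert$ is not dominated by $g$ asymptotically | $\exists k > 0 \; \forall n_0 \; \exists n > n_0 \colon \lvert f(n) \rvert \ge k \, g(n)$ | $\limsup_{n \to \infty} \frac{\lvert f(n) \rvert}{g(n)} > 0$ |

The limit definitions assume $g(n) > 0$ for sufficiently large $n$. The table is (partly) sorted from smallest to largest, in the sense that $o$, $O$, $\Theta$, $\sim$, (Knuth's version of) $\Omega$, $\omega$ on functions correspond to $<$, $\le$, $\approx$, $=$, $\ge$, $>$ on the real line[27] (the Hardy–Littlewood version of $\Omega$, however, doesn't correspond to any such description).

Computer science uses the big $O$, big Theta $\Theta$, little $o$, little omega $\omega$ and Knuth's big Omega $\Omega$ notations.[28] Analytic number theory often uses the big $O$, small $o$, Hardy's $\asymp$,[29] Hardy–Littlewood's big Omega $\Omega$ (with or without the +, − or ± subscripts) and $\sim$ notations.[22] The small omega $\omega$ notation is not used as often in analysis.[30]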

Use in computer science

Informally, especially in computer science, the big $O$ notation often can be used somewhat differently to describe an asymptotic tight bound where using big Theta Θ notation might be more factually appropriate in a given context.[31] For example, when considering a function $T(n) = 73n^3 + 22n^2 + 58$, all of the following are generally acceptable, but tighter bounds (such as numbers 2 and 3 below) are usually strongly preferred over looser bounds (such as number 1 below).

1. $T(n) = O(n^{100})$

2. $T(n) = O(n^3)$

3. $T(n) = \Theta(n^3)$

The equivalent English statements are respectively:

1. $T(n)$ grows asymptotically no faster than $n^{100}$

2. $T(n)$ grows asymptotically no faster than $n^3$

3. $T(n)$ grows asymptotically as fast as $n^3$.

So while all three statements are true, progressively more information is contained in each. In some fields, however, the big O notation (number 2 in the lists above) would be used more commonly than the big Theta notation (items numbered 3 in the lists above). For example, if $T(n)$ represents the running time of a newly developed algorithm for input size $n$, the inventors and users of the algorithm might be more inclined to put an upper asymptotic bound on how long it will take to run without making an explicit statement about the lower asymptotic bound.

Other notation

In their book Introduction to Algorithms, Cormen, Leiserson, Rivest and Stein consider the set of functions $f$ which satisfy

$$f(n) = O(g(n)) \quad (n \to \infty).$$

In a correct notation this set can, for instance, be called $O(g)$, where

$$O(g) = \{ f : \text{there exist positive constants } c \text{ and } n_0 \text{ such that } f(n) \le c \, g(n) \text{ for all } n \ge n_0 \}.$$

The authors state that the use of the equality operator (=) to denote set membership rather than the set membership operator (∈) is an abuse of notation, but that doing so has advantages.[6] Inside an equation or inequality, the use of asymptotic notation stands for an anonymous function in the set $O(g)$, which eliminates lower-order terms, and helps to reduce inessential clutter in equations, for example:[33]

$$2n^2 + 3n + 1 = 2n^2 + O(n).$$

Extensions to the Bachmann–Landau notations

Another notation sometimes used in computer science is $\tilde{O}$ (read soft-O), which hides polylogarithmic factors. There are two definitions in use: some authors use $f(n) = \tilde{O}(g(n))$ as shorthand for $f(n) = O(g(n) \log^k n)$ for some $k$, while others use it as shorthand for $f(n) = O(g(n) \log^k g(n))$.[34] When $g(n)$ is polynomial in $n$, there is no difference; however, the latter definition allows one to say, e.g. that $n 2^n = \tilde{O}(2^n)$ while the former definition allows for $\log^k n = \tilde{O}(1)$ for any constant $k$. Some authors write $O^*$ for the same purpose as the latter definition.[35] Essentially, it is big $O$ notation, ignoring logarithmic factors because the growth-rate effects of some other super-logarithmic function indicate a growth-rate explosion for large-sized input parameters that is more important to predicting bad run-time performance than the finer-point effects contributed by the logarithmic-growth factor(s). This notation is often used to obviate the "nitpicking" within growth-rates that are stated as too tightly bounded for the matters at hand (since $\log^k n$ is always $o(n^\varepsilon)$ for any constant $k$ and any $\varepsilon > 0$).

Also, the L notation, defined as

$$L_n[\alpha, c] = e^{(c + o(1)) (\ln n)^\alpha (\ln \ln n)^{1 - \alpha}},$$

is convenient for functions that are between polynomial and exponential in terms of $\ln n$.

Generalizations and related usages

The generalization to functions taking values in any normed vector space is straightforward (replacing absolute values by norms), where $f$ and $g$ need not take their values in the same space. A generalization to functions $g$ taking values in any topological group is also possible[citation needed]. The "limiting process" $x \to x_o$ can also be generalized by introducing an arbitrary filter base, i.e. to directed nets $f$ and $g$. The $o$ notation can be used to define derivatives and differentiability in quite general spaces, and also (asymptotical) equivalence of functions,

$$f \sim g \iff (f - g) \in o(g),$$

which is an equivalence relation and a more restrictive notion than the relationship "$f$ is $\Theta(g)$" from above. (It reduces to $\lim f/g = 1$ if $f$ and $g$ are positive real valued functions.) For example, $2x$ is $\Theta(x)$, but $2x - x$ is not $o(x)$.

History (Bachmann–Landau, Hardy, and Vinogradov notations)

The symbol O was first introduced by number theorist Paul Bachmann in 1894, in the second volume of his book Analytische Zahlentheorie ("analytic number theory").[1] The number theorist Edmund Landau adopted it, and was thus inspired to introduce in 1909 the notation o;[2] hence both are now called Landau symbols. These notations were used in applied mathematics during the 1950s for asymptotic analysis.[36] The symbol $\Omega$ (in the sense "is not an $o$ of") was introduced in 1914 by Hardy and Littlewood.[19] Hardy and Littlewood also introduced in 1916 the symbols $\Omega_R$ ("right") and $\Omega_L$ ("left"),[20] precursors of the modern symbols $\Omega_+$ ("is not smaller than a small o of") and $\Omega_-$ ("is not larger than a small o of"). Thus the Omega symbols (with their original meanings) are sometimes also referred to as "Landau symbols". This notation $\Omega$ has been commonly used in number theory since at least the 1950s.[37]

The symbol $\sim$, although it had been used before with different meanings,[27] was given its modern definition by Landau in 1909[38] and by Hardy in 1910.[39] Just above on the same page of his tract Hardy defined the symbol $\asymp$, where $f(x) \asymp g(x)$ means that both $f(x) = O(g(x))$ and $g(x) = O(f(x))$ are satisfied. The notation is still currently used in analytic number theory.[40][29] In his tract Hardy also proposed the symbol $\asymp\!\!\!\!\!\!-$, where $f \asymp\!\!\!\!\!\!- \; g$ means that $f \sim K g$ for some constant $K \ne 0$.

In the 1970s the big O was popularized in computer science by Donald Knuth, who proposed the different notation $f(x) = \Theta(g(x))$ for Hardy's $f(x) \asymp g(x)$, and proposed a different definition for the Hardy and Littlewood Omega notation.[24]

Two other symbols coined by Hardy were (in terms of the modern $O$ notation)

- $f \preccurlyeq g \iff f = O(g)$ and $f \prec g \iff f = o(g)$

(Hardy however never defined or used the notation $\prec\!\!\prec$, nor $\ll$, as it has been sometimes reported). Hardy introduced the symbols $\preccurlyeq$ and $\prec$ (as well as the already mentioned other symbols) in his 1910 tract "Orders of Infinity", and made use of them only in three papers (1910–1913). In his nearly 400 remaining papers and books he consistently used the Landau symbols O and o.

Hardy's symbols $\preccurlyeq$ and $\prec$ (as well as $\asymp\!\!\!\!\!\!-$) are not used anymore. On the other hand, in the 1930s,[41] the Russian number theorist Ivan Matveyevich Vinogradov introduced his notation $\ll$, which has been increasingly used in number theory instead of the $O$ notation. We have

$$f \ll g \iff f = O(g),$$

and frequently both notations are used in the same paper.

The big-O originally stands for "order of" ("Ordnung", Bachmann 1894), and is thus a Latin letter. Neither Bachmann nor Landau ever call it "Omicron". The symbol was much later on (1976) viewed by Knuth as a capital omicron,[24] probably in reference to his definition of the symbol Omega. The digit zero should not be used.

See also

- Asymptotic computational complexity

- Asymptotic expansion: Approximation of functions generalizing Taylor's formula

- Asymptotically optimal algorithm: A phrase frequently used to describe an algorithm that has an upper bound asymptotically within a constant of a lower bound for the problem

- Big O in probability notation: $O_p$, $o_p$

- Limit inferior and limit superior: An explanation of some of the limit notation used in this article

- Master theorem (analysis of algorithms): For analyzing divide-and-conquer recursive algorithms using Big O notation

- Nachbin's theorem: A precise method of bounding complex analytic functions so that the domain of convergence of integral transforms can be stated

- Order of approximation

- Computational complexity of mathematical operations

References and notes

[edit]- ^abBachmann, Paul(1894).Analytische Zahlentheorie[Analytic Number Theory] (in German). Vol. 2. Leipzig: Teubner.

- ^abLandau, Edmund(1909).Handbuch der Lehre von der Verteilung der Primzahlen[Handbook on the theory of the distribution of the primes] (in German). Leipzig: B. G. Teubner. p. 883.

- ^Mohr, Austin."Quantum Computing in Complexity Theory and Theory of Computation"(PDF).p. 2.Archived(PDF)from the original on 8 March 2014.Retrieved7 June2014.

- ^Landau, Edmund(1909).Handbuch der Lehre von der Verteilung der Primzahlen[Handbook on the theory of the distribution of the primes] (in German). Leipzig: B.G. Teubner. p. 31.

- ^Michael Sipser (1997).Introduction to the Theory of Computation.Boston/MA: PWS Publishing Co.Here: Def.7.2, p.227

- ^abCormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L. (2009).Introduction to Algorithms(3rd ed.). Cambridge/MA: MIT Press. p.45.ISBN978-0-262-53305-8.

Becauseθ(g(n)) is a set, we could write "f(n) ∈θ(g(n)) "to indicate thatf(n) is a member ofθ(g(n)). Instead, we will usually writef(n) =θ(g(n)) to express the same notion. You might be confused because we abuse equality in this way, but we shall see later in this section that doing so has its advantages.

- ^Cormen et al. (2009),p. 53

- ^Howell, Rodney."On Asymptotic Notation with Multiple Variables"(PDF).Archived(PDF)from the original on 2015-04-24.Retrieved2015-04-23.

- ^abN. G. de Bruijn(1958).Asymptotic Methods in Analysis.Amsterdam: North-Holland. pp. 5–7.ISBN978-0-486-64221-5.Archivedfrom the original on 2023-01-17.Retrieved2021-09-15.

- ^abcGraham, Ronald;Knuth, Donald;Patashnik, Oren(1994).Concrete Mathematics(2 ed.). Reading, Massachusetts: Addison–Wesley. p. 446.ISBN978-0-201-55802-9.Archivedfrom the original on 2023-01-17.Retrieved2016-09-23.

- ^Donald Knuth (June–July 1998)."Teach Calculus with Big O"(PDF).Notices of the American Mathematical Society.45(6): 687.Archived(PDF)from the original on 2021-10-14.Retrieved2021-09-05.(Unabridged versionArchived2008-05-13 at theWayback Machine)

- ^Donald E. Knuth, The art of computer programming. Vol. 1. Fundamental algorithms, third edition, Addison Wesley Longman, 1997. Section 1.2.11.1.

- ^Ronald L. Graham, Donald E. Knuth, and Oren Patashnik,Concrete Mathematics: A Foundation for Computer Science (2nd ed.),Addison-Wesley, 1994. Section 9.2, p. 443.

- ^Sivaram Ambikasaran and Eric Darve, AnFast Direct Solver for Partial Hierarchically Semi-Separable Matrices,J. Scientific Computing57(2013), no. 3, 477–501.

- ^Saket Saurabh and Meirav Zehavi,-Max-Cut: An-Time Algorithm and a Polynomial Kernel,Algorithmica80(2018), no. 12, 3844–3860.

- Landau, Edmund (1909). Handbuch der Lehre von der Verteilung der Primzahlen [Handbook on the theory of the distribution of the primes] (in German). Leipzig: B. G. Teubner. p. 61.

- Thomas H. Cormen et al., 2001, Introduction to Algorithms, Second Edition, Ch. 3.1. Archived 2009-01-16 at the Wayback Machine.

- Cormen TH, Leiserson CE, Rivest RL, Stein C (2009). Introduction to Algorithms (3rd ed.). Cambridge, Mass.: MIT Press. p. 48. ISBN 978-0-262-27083-0. OCLC 676697295.

- Hardy, G. H.; Littlewood, J. E. (1914). "Some problems of diophantine approximation: Part II. The trigonometrical series associated with the elliptic θ-functions". Acta Mathematica. 37: 225. doi:10.1007/BF02401834. Archived from the original on 2018-12-12. Retrieved 2017-03-14.

- G. H. Hardy and J. E. Littlewood, "Contribution to the theory of the Riemann zeta-function and the theory of the distribution of primes", Acta Mathematica, vol. 41, 1916.

- E. Landau, "Über die Anzahl der Gitterpunkte in gewissen Bereichen. IV." Nachr. Gesell. Wiss. Gött. Math-phys. Kl. 1924, 137–150.

- Aleksandar Ivić. The Riemann zeta-function, chapter 9. John Wiley & Sons 1985.

- Gérald Tenenbaum, Introduction to analytic and probabilistic number theory, Chapter I.5. American Mathematical Society, Providence RI, 2015.

- Knuth, Donald (April–June 1976). "Big Omicron and big Omega and big Theta". SIGACT News. 8 (2): 18–24. doi:10.1145/1008328.1008329. S2CID 5230246.

- Balcázar, José L.; Gabarró, Joaquim. "Nonuniform complexity classes specified by lower and upper bounds" (PDF). RAIRO – Theoretical Informatics and Applications – Informatique Théorique et Applications. 23 (2): 180. ISSN 0988-3754. Archived (PDF) from the original on 14 March 2017. Retrieved 14 March 2017 – via Numdam.

- Cucker, Felipe; Bürgisser, Peter (2013). "A.1 Big Oh, Little Oh, and Other Comparisons". Condition: The Geometry of Numerical Algorithms. Berlin, Heidelberg: Springer. pp. 467–468. doi:10.1007/978-3-642-38896-5. ISBN 978-3-642-38896-5.

- Vitányi, Paul; Meertens, Lambert (April 1985). "Big Omega versus the wild functions" (PDF). ACM SIGACT News. 16 (4): 56–59. CiteSeerX 10.1.1.694.3072. doi:10.1145/382242.382835. S2CID 11700420. Archived (PDF) from the original on 2016-03-10. Retrieved 2017-03-14.

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2001) [1990]. Introduction to Algorithms (2nd ed.). MIT Press and McGraw-Hill. pp. 41–50. ISBN 0-262-03293-7.

- Gérald Tenenbaum, Introduction to analytic and probabilistic number theory, "Notation", page xxiii. American Mathematical Society, Providence RI, 2015.

- For example, it is omitted in: Hildebrand, A. J. "Asymptotic Notations" (PDF). Department of Mathematics. Asymptotic Methods in Analysis. Math 595, Fall 2009. Urbana, IL: University of Illinois. Archived (PDF) from the original on 14 March 2017. Retrieved 14 March 2017.

- Cormen et al. (2009), p. 64: "Many people continue to use the O-notation where the Θ-notation is more technically precise."

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L. (2009). Introduction to Algorithms (3rd ed.). Cambridge/MA: MIT Press. p. 47. ISBN 978-0-262-53305-8.

When we have only an asymptotic upper bound, we use O-notation. For a given function g(n), we denote by O(g(n)) (pronounced "big-oh of g of n" or sometimes just "oh of g of n") the set of functions O(g(n)) = {f(n) : there exist positive constants c and n₀ such that 0 ≤ f(n) ≤ c·g(n) for all n ≥ n₀}

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L. (2009). Introduction to Algorithms (3rd ed.). Cambridge/MA: MIT Press. p. 49. ISBN 978-0-262-53305-8.

When the asymptotic notation stands alone (that is, not within a larger formula) on the right-hand side of an equation (or inequality), as in n = O(n²), we have already defined the equal sign to mean set membership: n ∈ O(n²). In general, however, when asymptotic notation appears in a formula, we interpret it as standing for some anonymous function that we do not care to name. For example, the formula 2n² + 3n + 1 = 2n² + Θ(n) means that 2n² + 3n + 1 = 2n² + f(n), where f(n) is some function in the set Θ(n). In this case, we let f(n) = 3n + 1, which is indeed in Θ(n). Using asymptotic notation in this manner can help eliminate inessential detail and clutter in an equation.

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2022). Introduction to Algorithms (4th ed.). Cambridge, Mass.: The MIT Press. pp. 74–75. ISBN 9780262046305.

- Andreas Björklund and Thore Husfeldt and Mikko Koivisto (2009). "Set partitioning via inclusion-exclusion" (PDF). SIAM Journal on Computing. 39 (2): 546–563. doi:10.1137/070683933. Archived (PDF) from the original on 2022-02-03. Retrieved 2022-02-03. See sect. 2.3, p. 551.

- Erdelyi, A. (1956). Asymptotic Expansions. Courier Corporation. ISBN 978-0-486-60318-6.

- E. C. Titchmarsh, The Theory of the Riemann Zeta-Function (Oxford; Clarendon Press, 1951).

- Landau, Edmund (1909). Handbuch der Lehre von der Verteilung der Primzahlen [Handbook on the theory of the distribution of the primes] (in German). Leipzig: B. G. Teubner. p. 62.

- Hardy, G. H. (1910). Orders of Infinity: The 'Infinitärcalcül' of Paul du Bois-Reymond. Cambridge University Press. p. 2.

- Hardy, G. H.; Wright, E. M. (2008) [1st ed. 1938]. "1.6. Some notations". An Introduction to the Theory of Numbers. Revised by D. R. Heath-Brown and J. H. Silverman, with a foreword by Andrew Wiles (6th ed.). Oxford: Oxford University Press. ISBN 978-0-19-921985-8.

- See for instance "A new estimate for G(n) in Waring's problem" (Russian). Doklady Akademii Nauk SSSR 5, No 5-6 (1934), 249–253. Translated in English in: Selected works / Ivan Matveevič Vinogradov; prepared by the Steklov Mathematical Institute of the Academy of Sciences of the USSR on the occasion of his 90th birthday. Springer-Verlag, 1985.

Further reading

- Hardy, G. H. (1910). Orders of Infinity: The 'Infinitärcalcül' of Paul du Bois-Reymond. Cambridge University Press.

- Knuth, Donald (1997). "1.2.11: Asymptotic Representations". Fundamental Algorithms. The Art of Computer Programming. Vol. 1 (3rd ed.). Addison-Wesley. ISBN 978-0-201-89683-1.

- Cormen, Thomas H.; Leiserson, Charles E.; Rivest, Ronald L.; Stein, Clifford (2001). "3.1: Asymptotic notation". Introduction to Algorithms (2nd ed.). MIT Press and McGraw-Hill. ISBN 978-0-262-03293-3.

- Sipser, Michael (1997). Introduction to the Theory of Computation. PWS Publishing. pp. 226–228. ISBN 978-0-534-94728-6.

- Avigad, Jeremy; Donnelly, Kevin (2004). Formalizing O notation in Isabelle/HOL (PDF). International Joint Conference on Automated Reasoning. doi:10.1007/978-3-540-25984-8_27.

- Black, Paul E. (11 March 2005). Black, Paul E. (ed.). "big-O notation". Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. Retrieved December 16, 2006.

- Black, Paul E. (17 December 2004). Black, Paul E. (ed.). "little-o notation". Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. Retrieved December 16, 2006.

- Black, Paul E. (17 December 2004). Black, Paul E. (ed.). "Ω". Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. Retrieved December 16, 2006.

- Black, Paul E. (17 December 2004). Black, Paul E. (ed.). "ω". Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. Retrieved December 16, 2006.

- Black, Paul E. (17 December 2004). Black, Paul E. (ed.). "Θ". Dictionary of Algorithms and Data Structures. U.S. National Institute of Standards and Technology. Retrieved December 16, 2006.

External links

- Growth of sequences – OEIS (Online Encyclopedia of Integer Sequences) Wiki

- Introduction to Asymptotic Notations

- Big-O Notation – What is it good for

- An example of Big O in accuracy of central divided difference scheme for first derivative. Archived 2018-10-07 at the Wayback Machine.

- A Gentle Introduction to Algorithm Complexity Analysis

$$
\begin{aligned}
e^{x} &= 1 + x + \frac{x^{2}}{2!} + \frac{x^{3}}{3!} + \frac{x^{4}}{4!} + \dotsb && \text{for all } x \\
&= 1 + x + \frac{x^{2}}{2} + O(x^{3}) && \text{as } x \to 0 \\
&= 1 + x + O(x^{2}) && \text{as } x \to 0
\end{aligned}
$$

$$
L_{n}[\alpha, c] = e^{(c + o(1)) (\ln n)^{\alpha} (\ln \ln n)^{1 - \alpha}}
$$
