Probability distribution

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of possible outcomes for an experiment.[1][2] It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space).[3]

For instance, if $X$ is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of $X$ would take the value 0.5 (1 in 2 or 1/2) for $X$ = heads, and 0.5 for $X$ = tails (assuming that the coin is fair). More commonly, probability distributions are used to compare the relative occurrence of many different random values.

Probability distributions can be defined in different ways and for discrete or for continuous variables. Distributions with special properties or for especially important applications are given specific names.

Introduction

A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often denoted by $\Omega$, is the set of all possible outcomes of a random phenomenon being observed. The sample space may be any set: a set of real numbers, a set of descriptive labels, a set of vectors, a set of arbitrary non-numerical values, etc. For example, the sample space of a coin flip could be Ω = {"heads", "tails"}.

To define probability distributions for the specific case of random variables (so the sample space can be seen as a numeric set), it is common to distinguish between discrete and absolutely continuous random variables. In the discrete case, it is sufficient to specify a probability mass function $p$ assigning a probability to each possible outcome (e.g. when throwing a fair die, each of the six digits “1” to “6”, corresponding to the number of dots on the die, has the probability $\tfrac{1}{6}$). The probability of an event is then defined to be the sum of the probabilities of all outcomes that satisfy the event; for example, the probability of the event "the die rolls an even value" is

$$p(2) + p(4) + p(6) = \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} = \tfrac{1}{2}.$$
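The die example can be written as a minimal Python sketch. An event is represented as a predicate on outcomes, and its probability is the sum of the probability mass function over the outcomes that satisfy it. The names `pmf` and `prob` are illustrative, not taken from any library. Exact fractions are used so the sums come out without rounding error.

```python
from fractions import Fraction

# Probability mass function of a fair six-sided die: each face has probability 1/6.
pmf = {face: Fraction(1, 6) for face in range(1, 7)}


def prob(event, pmf):
    """Sum the pmf over all outcomes for which the predicate `event` holds."""
    return sum((p for x, p in pmf.items() if event(x)), Fraction(0))


p_even = prob(lambda x: x % 2 == 0, pmf)
print(p_even)             # 1/2
print(sum(pmf.values()))  # 1
```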

In contrast, when a random variable takes values from a continuum then by convention, any individual outcome is assigned probability zero. For such continuous random variables, only events that include infinitely many outcomes such as intervals have probability greater than 0.

For example, consider measuring the weight of a piece of ham in the supermarket, and assume the scale can provide arbitrarily many digits of precision. Then the probability that it weighs exactly 500 g must be zero: no matter how high the chosen level of precision, it cannot be ruled out that some of the digits beyond that precision are non-zero.

However, for the same use case, it is possible to meet quality control requirements such as that a package of "500 g" of ham must weigh between 490 g and 510 g with at least 98% probability. This is possible because this measurement does not require as much precision from the underlying equipment.

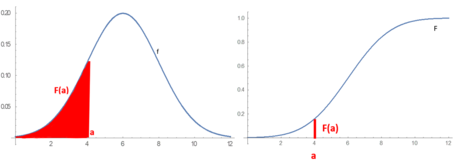

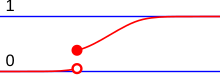

Absolutely continuous probability distributions can be described in several ways. The probability density function describes the infinitesimal probability of any given value, and the probability that the outcome lies in a given interval can be computed by integrating the probability density function over that interval.[4] An alternative description of the distribution is by means of the cumulative distribution function, which describes the probability that the random variable is no larger than a given value (i.e., $P(X \le x)$ for some $x$). The cumulative distribution function is the area under the probability density function from $-\infty$ to $x$, as shown in figure 1.[5]
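The relation between the density and the cumulative distribution function can be checked numerically. This sketch assumes a standard normal distribution purely as an example. The helpers `normal_pdf`, `normal_cdf` and `integrate` are hypothetical names, and a composite trapezoidal rule stands in for exact integration.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of the normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))


def normal_cdf(x, mu=0.0, sigma=1.0):
    """Closed-form normal CDF via the error function."""
    return 0.5 * (1 + math.erf((x - mu) / (sigma * math.sqrt(2))))


def integrate(f, a, b, n=10_000):
    """Composite trapezoidal rule for the integral of f over [a, b]."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


# P(-1 <= X <= 1): integral of the density vs. difference of CDF values.
by_integration = integrate(normal_pdf, -1.0, 1.0)
by_cdf = normal_cdf(1.0) - normal_cdf(-1.0)
print(round(by_integration, 4), round(by_cdf, 4))  # 0.6827 0.6827

# F(0.5) as the area under the density, truncating the lower limit at -10.
tail_approx = integrate(normal_pdf, -10.0, 0.5)
```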

General probability definition

A probability distribution can be described in various forms, such as by a probability mass function or a cumulative distribution function. One of the most general descriptions, which applies for absolutely continuous and discrete variables, is by means of a probability function $P\colon \mathcal{A} \to \mathbb{R}$ whose input space $\mathcal{A}$ is a σ-algebra, and which gives a real number probability as its output, particularly, a number in $[0, 1] \subseteq \mathbb{R}$.

The probability function $P$ can take as argument subsets of the sample space itself, as in the coin toss example, where the function $P$ was defined so that P(heads) = 0.5 and P(tails) = 0.5. However, because of the widespread use of random variables, which transform the sample space into a set of numbers (e.g., $\mathbb{R}$, $\mathbb{N}$), it is more common to study probability distributions whose arguments are subsets of these particular kinds of sets (number sets),[6] and all probability distributions discussed in this article are of this type. It is common to denote as $P(X \in E)$ the probability that a certain value of the variable $X$ belongs to a certain event $E$.[7][8]

The above probability function only characterizes a probability distribution if it satisfies all the Kolmogorov axioms, that is:

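- $P(X \in E) \ge 0 \;\; \forall E \in \mathcal{A}$, so the probability is non-negative

- $P(X \in E) \le 1 \;\; \forall E \in \mathcal{A}$, so no probability exceeds $1$

- $P\left(X \in \bigsqcup_i E_i\right) = \sum_i P(X \in E_i)$ for any countable disjoint family of sets $\{E_i\}$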
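On a finite sample space the three axioms can be checked exhaustively. This sketch uses the fair-coin example and takes the power set of the sample space as the σ-algebra, which is always a valid choice when the space is finite. There, countable additivity follows from additivity over pairs of disjoint events, so only pairs are checked. The names `point_mass` and `P` are illustrative.

```python
from fractions import Fraction
from itertools import combinations

# Fair coin: sample space and the probability of each single outcome.
omega = ["heads", "tails"]
point_mass = {"heads": Fraction(1, 2), "tails": Fraction(1, 2)}


def P(event):
    """Probability of a set of outcomes, obtained by adding point masses."""
    return sum((point_mass[w] for w in event), Fraction(0))


# Every subset of omega; for a finite sample space the power set is a valid sigma-algebra.
events = [frozenset(c) for r in range(len(omega) + 1) for c in combinations(omega, r)]

non_negative = all(P(e) >= 0 for e in events)
bounded = all(P(e) <= 1 for e in events)
# On a finite space, countable additivity follows from additivity over disjoint pairs.
additive = all(P(a | b) == P(a) + P(b) for a in events for b in events if not a & b)
print(non_negative, bounded, additive, P(frozenset(omega)))  # True True True 1
```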

The concept of probability function is made more rigorous by defining it as the element of a probability space $(X, \mathcal{A}, P)$, where $X$ is the set of possible outcomes, $\mathcal{A}$ is the set of all subsets $E \subset X$ whose probability can be measured, and $P$ is the probability function, or probability measure, that assigns a probability to each of these measurable subsets $E \in \mathcal{A}$.[9]

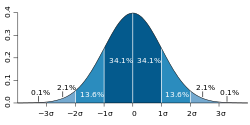

Probability distributions usually belong to one of two classes. A discrete probability distribution is applicable to the scenarios where the set of possible outcomes is discrete (e.g. a coin toss, a roll of a die) and the probabilities are encoded by a discrete list of the probabilities of the outcomes; in this case the discrete probability distribution is described by its probability mass function. On the other hand, absolutely continuous probability distributions are applicable to scenarios where the set of possible outcomes can take on values in a continuous range (e.g. real numbers), such as the temperature on a given day. In the absolutely continuous case, probabilities are described by a probability density function, and the probability of an event is by definition the integral of the probability density function over that event.[7][4][8] The normal distribution is a commonly encountered absolutely continuous probability distribution. More complex experiments, such as those involving stochastic processes defined in continuous time, may demand the use of more general probability measures.

A probability distribution whose sample space is one-dimensional (for example real numbers, list of labels, ordered labels or binary) is called univariate, while a distribution whose sample space is a vector space of dimension 2 or more is called multivariate. A univariate distribution gives the probabilities of a single random variable taking on various different values; a multivariate distribution (a joint probability distribution) gives the probabilities of a random vector (a list of two or more random variables) taking on various combinations of values. Important and commonly encountered univariate probability distributions include the binomial distribution, the hypergeometric distribution, and the normal distribution. A commonly encountered multivariate distribution is the multivariate normal distribution.

Besides the probability function, the cumulative distribution function, the probability mass function and the probability density function, the moment generating function (when it is finite in a neighborhood of zero) and the characteristic function also serve to identify a probability distribution, as they uniquely determine an underlying cumulative distribution function.[10]

Terminology

Some key concepts and terms, widely used in the literature on the topic of probability distributions, are listed below.[1]

Basic terms

- Random variable: takes values from a sample space; probabilities describe which values and sets of values are more likely to be taken.

- Event: set of possible values (outcomes) of a random variable that occurs with a certain probability.

- Probability function or probability measure: describes the probability $P(X \in E)$ that the event $E$ occurs.[11]

- Cumulative distribution function: function evaluating the probability that $X$ will take a value less than or equal to $x$ for a random variable (only for real-valued random variables).

- Quantile function: the inverse of the cumulative distribution function. Gives $x$ such that, with probability $q$, $X$ will not exceed $x$.

Discrete probability distributions

- Discrete probability distribution: for random variables with finitely or countably infinitely many values.

- Probability mass function (pmf): function that gives the probability that a discrete random variable is equal to some value.

- Frequency distribution: a table that displays the frequency of various outcomes in a sample.

- Relative frequency distribution: a frequency distribution where each value has been divided (normalized) by the number of outcomes in a sample (i.e. the sample size).

- Categorical distribution: for discrete random variables with a finite set of values.
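A frequency distribution and its relative version can be computed directly from a sample. The ten die rolls below are made up for illustration only. `Counter` from the standard library does the counting.

```python
from collections import Counter
from fractions import Fraction

# Made-up sample of ten die rolls, for illustration only.
sample = [3, 1, 4, 1, 5, 2, 6, 5, 3, 5]

frequency = Counter(sample)  # frequency distribution: outcome -> count
n = len(sample)              # sample size
relative = {x: Fraction(count, n) for x, count in sorted(frequency.items())}

for x, p in relative.items():
    print(x, frequency[x], p)
```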

Absolutely continuous probability distributions

- Absolutely continuous probability distribution: for random variables with uncountably many values.

- Probability density function (pdf) or probability density: function whose value at any given sample (or point) in the sample space (the set of possible values taken by the random variable) can be interpreted as providing a relative likelihood that the value of the random variable would equal that sample.

Related terms

- Support: set of values that can be assumed with non-zero probability (or probability density in the case of a continuous distribution) by the random variable. For a random variable $X$, it is sometimes denoted as $R_X$.

- Tail:[12] the regions close to the bounds of the random variable, if the pmf or pdf are relatively low therein. Usually has the form $X > a$, $X < b$ or a union thereof.

- Head:[12] the region where the pmf or pdf is relatively high. Usually has the form $a < X < b$.

- Expected value or mean: the weighted average of the possible values, using their probabilities as their weights; or the continuous analog thereof.

- Median: the value such that the set of values less than the median, and the set greater than the median, each have probabilities no greater than one-half.

- Mode: for a discrete random variable, the value with highest probability; for an absolutely continuous random variable, a location at which the probability density function has a local peak.

- Quantile: the q-quantile is the value $x$ such that $P(X \le x) = q$.

- Variance: the second moment of the pmf or pdf about the mean; an important measure of the dispersion of the distribution.

- Standard deviation: the square root of the variance, and hence another measure of dispersion.

- Symmetry: a property of some distributions in which the portion of the distribution to the left of a specific value (usually the median) is a mirror image of the portion to its right.

- Skewness: a measure of the extent to which a pmf or pdf "leans" to one side of its mean. The third standardized moment of the distribution.

- Kurtosis: a measure of the "fatness" of the tails of a pmf or pdf. The fourth standardized moment of the distribution.
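Several of these summary quantities can be computed directly from a probability mass function. This sketch assumes the fair die as the example. Its pmf is symmetric about the mean, so the skewness comes out as zero. `standardized_moment` is a hypothetical helper.

```python
import math
from fractions import Fraction

# Fair die as the example distribution.
pmf = {x: Fraction(1, 6) for x in range(1, 7)}

mean = sum(x * p for x, p in pmf.items())                    # expected value: 7/2
variance = sum((x - mean) ** 2 * p for x, p in pmf.items())  # second central moment: 35/12
std_dev = math.sqrt(variance)


def standardized_moment(k):
    """E[((X - mean) / std_dev) ** k], computed from the pmf."""
    return float(sum((x - mean) ** k * p for x, p in pmf.items())) / std_dev ** k


skewness = standardized_moment(3)  # 0.0 for this symmetric pmf
kurtosis = standardized_moment(4)  # about 1.73
```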

Cumulative distribution function

In the special case of a real-valued random variable, the probability distribution can equivalently be represented by a cumulative distribution function instead of a probability measure. The cumulative distribution function of a random variable $X$ with regard to a probability distribution $p$ is defined as

$$F(x) = P(X \le x).$$

The cumulative distribution function of any real-valued random variable has the properties:

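- $F(x)$ is non-decreasing;

- $F(x)$ is right-continuous;

- $0 \le F(x) \le 1$;

- $\lim_{x \to -\infty} F(x) = 0$ and $\lim_{x \to \infty} F(x) = 1$; and

- $\Pr(a < X \le b) = F(b) - F(a)$.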

Conversely, any function $F\colon \mathbb{R} \to \mathbb{R}$ that satisfies the first four of the properties above is the cumulative distribution function of some probability distribution on the real numbers.[13]

Any probability distribution can be decomposed as the mixture of a discrete, an absolutely continuous and a singular continuous distribution,[14] and thus any cumulative distribution function admits a decomposition as the convex sum of the three corresponding cumulative distribution functions.

Discrete probability distribution


A discrete probability distribution is the probability distribution of a random variable that can take on only a countable number of values[15] (almost surely),[16] which means that the probability of any event $E$ can be expressed as a (finite or countably infinite) sum:

$$P(X \in E) = \sum_{\omega \in A \cap E} P(X = \omega),$$

where $A$ is a countable set with $P(X \in A) = 1$. Thus the discrete random variables (i.e. random variables whose probability distribution is discrete) are exactly those with a probability mass function $p(x) = P(X = x)$. In the case where the range of values is countably infinite, these values have to decline to zero fast enough for the probabilities to add up to 1. For example, if $p(n) = \tfrac{1}{2^n}$ for $n = 1, 2, \dots$, the sum of probabilities would be $\tfrac{1}{2} + \tfrac{1}{4} + \tfrac{1}{8} + \dots = 1$.
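The countably infinite example can be checked with exact arithmetic. The partial sums of $\tfrac{1}{2^n}$ approach 1, and the gap left after $N$ terms is exactly $\tfrac{1}{2^N}$. A short Python sketch, with the cut-off $N = 30$ chosen arbitrarily:

```python
from fractions import Fraction


def p(n):
    """Probability mass p(n) = 1/2**n for n = 1, 2, 3, ..."""
    return Fraction(1, 2 ** n)


# Sum of the first 30 terms; the remaining mass is 1/2**30.
partial = sum(p(n) for n in range(1, 31))
print(partial, 1 - partial)  # 1073741823/1073741824 1/1073741824
```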

Well-known discrete probability distributions used in statistical modeling include the Poisson distribution, the Bernoulli distribution, the binomial distribution, the geometric distribution, the negative binomial distribution and the categorical distribution.[3] When a sample (a set of observations) is drawn from a larger population, the sample points have an empirical distribution that is discrete, and which provides information about the population distribution. Additionally, the discrete uniform distribution is commonly used in computer programs that make equal-probability random selections between a number of choices.

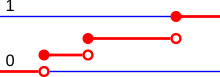

Cumulative distribution function

A real-valued discrete random variable can equivalently be defined as a random variable whose cumulative distribution function increases only by jump discontinuities; that is, its cdf increases only where it "jumps" to a higher value, and is constant in intervals without jumps. The points where jumps occur are precisely the values which the random variable may take. Thus the cumulative distribution function has the form

$$F(x) = P(X \le x) = \sum_{\omega \le x} p(\omega).$$

The points where the cdf jumps always form a countable set; this may be any countable set and thus may even be dense in the real numbers.
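The step-function form of a discrete cdf can be sketched as follows, again assuming the fair die as the example. `cdf` is a hypothetical helper. `bisect_right` counts the support points less than or equal to `x`, which makes the function right-continuous at each jump.

```python
from bisect import bisect_right
from fractions import Fraction
from itertools import accumulate

# Support points of a fair die (sorted) and their probabilities.
values = [1, 2, 3, 4, 5, 6]
probs = [Fraction(1, 6)] * len(values)
cumulative = list(accumulate(probs))  # F evaluated at each jump point


def cdf(x):
    """F(x) = sum of p(w) over support points w <= x; constant between jumps."""
    k = bisect_right(values, x)  # number of support points <= x
    return cumulative[k - 1] if k > 0 else Fraction(0)


print(cdf(0.5), cdf(1), cdf(3.7), cdf(6), cdf(100))  # 0 1/6 1/2 1 1
```

The difference `cdf(b) - cdf(a)` gives $\Pr(a < X \le b)$. For example, `cdf(4) - cdf(1)` is the probability of rolling 2, 3 or 4.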

Dirac delta representation

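A discrete probability distribution is often represented with Dirac measures, the probability distributions of deterministic random variables. For any outcome $\omega$, let $\delta_\omega$ be the Dirac measure concentrated at $\omega$. Given a discrete probability distribution, there is a countable set $A$ with $P(X \in A) = 1$ and a probability mass function $p$. If $E$ is any event, then

$$P(X \in E) = \sum_{\omega \in A} p(\omega)\,\delta_\omega(E),$$

or in short,

$$P_X = \sum_{\omega \in A} p(\omega)\,\delta_\omega.$$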

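Similarly, discrete distributions can be represented with the Dirac delta function as a generalized probability density function $f$, where

$$f(x) = \sum_{\omega \in A} p(\omega)\,\delta(x - \omega),$$

which means

$$P(X \in E) = \int_E f(x)\,dx = \sum_{\omega \in A} p(\omega) \int_E \delta(x - \omega)\,dx = \sum_{\omega \in A \cap E} p(\omega)$$

for any event $E$.[17]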

Indicator-function representation

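For a discrete random variable $X$, let $u_0, u_1, \dots$ be the values it can take with non-zero probability. Denote

$$\Omega_i = X^{-1}(u_i) = \{\omega : X(\omega) = u_i\}, \quad i = 0, 1, 2, \dots$$

These are disjoint sets, and for such sets

$$P\left(\bigcup_i \Omega_i\right) = \sum_i P(\Omega_i) = \sum_i P(X = u_i) = 1.$$

It follows that the probability that $X$ takes any value except for $u_0, u_1, \dots$ is zero, and thus one can write $X$ as

$$X(\omega) = \sum_i u_i \, 1_{\Omega_i}(\omega)$$

except on a set of probability zero, where $1_A$ is the indicator function of $A$. This may serve as an alternative definition of discrete random variables.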

One-point distribution

A special case is the discrete distribution of a random variable that can take on only one fixed value; in other words, it is a deterministic distribution. Expressed formally, the random variable $X$ has a one-point distribution if it has a possible outcome $x$ such that $P(X = x) = 1$.[18] All other possible outcomes then have probability 0. Its cumulative distribution function jumps immediately from 0 to 1.

Absolutely continuous probability distribution

An absolutely continuous probability distribution is a probability distribution on the real numbers with uncountably many possible values, such as a whole interval in the real line, and where the probability of any event can be expressed as an integral.[19] More precisely, a real random variable $X$ has an absolutely continuous probability distribution if there is a function $f\colon \mathbb{R} \to [0, \infty]$ such that for each interval $I = [a, b] \subset \mathbb{R}$ the probability of $X$ belonging to $I$ is given by the integral of $f$ over $I$:[20][21]

$$P(a \le X \le b) = \int_a^b f(x)\,dx.$$

This is the definition of a probability density function, so that absolutely continuous probability distributions are exactly those with a probability density function. In particular, the probability for $X$ to take any single value $a$ (that is, $a \le X \le a$) is zero, because an integral with coinciding upper and lower limits is always equal to zero. If the interval $[a, b]$ is replaced by any measurable set $A$, the corresponding equality still holds:

$$P(X \in A) = \int_A f(x)\,dx.$$

Anabsolutely continuous random variableis a random variable whose probability distribution is absolutely continuous.

There are many examples of absolutely continuous probability distributions: normal, uniform, chi-squared, and others.

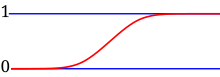

Cumulative distribution function

Absolutely continuous probability distributions as defined above are precisely those with an absolutely continuous cumulative distribution function. In this case, the cumulative distribution function $F$ has the form

$$F(x) = P(X \le x) = \int_{-\infty}^{x} f(t)\,dt,$$

where $f$ is a density of the random variable $X$ with regard to the distribution $P$.

Note on terminology: Absolutely continuous distributions ought to be distinguished from continuous distributions, which are those having a continuous cumulative distribution function. Every absolutely continuous distribution is a continuous distribution, but the converse is not true: there exist singular distributions, which are neither absolutely continuous nor discrete nor a mixture of those, and do not have a density. An example is given by the Cantor distribution. Some authors, however, use the term "continuous distribution" to denote all distributions whose cumulative distribution function is absolutely continuous, i.e. refer to absolutely continuous distributions as continuous distributions.[7]

For a more general definition of density functions and the equivalent absolutely continuous measures, see absolutely continuous measure.

Kolmogorov definition

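In the measure-theoretic formalization of probability theory, a random variable is defined as a measurable function $X$ from a probability space $(\Omega, \mathcal{F}, \mathbb{P})$ to a measurable space $(\mathcal{X}, \mathcal{A})$. Given that probabilities of events of the form $\{\omega \in \Omega \mid X(\omega) \in A\}$ satisfy Kolmogorov's probability axioms, the probability distribution of $X$ is the image measure $X_*\mathbb{P}$ of $X$, which is a probability measure on $(\mathcal{X}, \mathcal{A})$ satisfying $X_*\mathbb{P} = \mathbb{P}X^{-1}$.[22][23][24]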

Other kinds of distributions


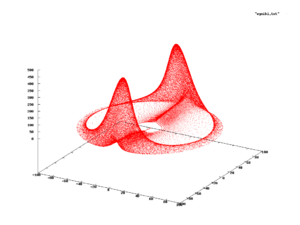

Absolutely continuous and discrete distributions with support on $\mathbb{R}^k$ or $\mathbb{N}^k$ are extremely useful to model a myriad of phenomena,[7][5] since most practical distributions are supported on relatively simple subsets, such as hypercubes or balls. However, this is not always the case, and there exist phenomena with supports that are actually complicated curves $\gamma\colon [a, b] \to \mathbb{R}^n$ within some space $\mathbb{R}^n$ or similar. In these cases, the probability distribution is supported on the image of such a curve, and is likely to be determined empirically, rather than by finding a closed formula for it.[25]

One example is shown in the figure to the right, which displays the evolution of a system of differential equations (commonly known as the Rabinovich–Fabrikant equations) that can be used to model the behaviour of Langmuir waves in plasma.[26] When this phenomenon is studied, the observed states from the subset are as indicated in red. So one could ask what the probability is of observing a state in a certain position of the red subset; if such a probability exists, it is called the probability measure of the system.[27][25]

This kind of complicated support appears quite frequently in dynamical systems. It is not simple to establish that the system has a probability measure, and the main problem is the following. Let $t_1 \ll t_2 \ll t_3$ be instants in time and $O$ a subset of the support; if the probability measure exists for the system, one would expect the frequency of observing states inside the set $O$ to be equal in the intervals $[t_1, t_2]$ and $[t_2, t_3]$, which might not happen; for example, it could oscillate similar to a sine, $\sin(t)$, whose limit as $t \to \infty$ does not exist. Formally, the measure exists only if the limit of the relative frequency converges when the system is observed into the infinite future.[28] The branch of dynamical systems that studies the existence of a probability measure is ergodic theory.

Note that even in these cases, the probability distribution, if it exists, might still be termed "absolutely continuous" or "discrete" depending on whether the support is uncountable or countable, respectively.

Random number generation

Most algorithms are based on a pseudorandom number generator that produces numbers $X$ that are uniformly distributed in the half-open interval [0, 1). These random variates $X$ are then transformed via some algorithm to create a new random variate having the required probability distribution. With this source of uniform pseudo-randomness, realizations of any random variable can be generated.[29]

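For example, suppose $U$ has a uniform distribution between 0 and 1. To construct a random Bernoulli variable for some $0 < p < 1$, we define

$$X = \begin{cases} 1 & \text{if } U < p \\ 0 & \text{if } U \ge p \end{cases}$$

so that

$$\Pr(X = 1) = \Pr(U < p) = p, \qquad \Pr(X = 0) = \Pr(U \ge p) = 1 - p.$$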

This random variable $X$ has a Bernoulli distribution with parameter $p$.[29] This is an example of transforming a uniform variate into a discrete random variable.
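The Bernoulli construction translates directly into code. This minimal sketch assumes Python's standard `random` module as the source of uniform variates on [0, 1). The seed, the value $p = 0.3$ and the sample size are arbitrary choices.

```python
import random


def bernoulli(p, rng):
    """Return 1 if U < p and 0 otherwise, where U is uniform on [0, 1)."""
    u = rng.random()
    return 1 if u < p else 0


rng = random.Random(12345)  # fixed seed so the run is reproducible
draws = [bernoulli(0.3, rng) for _ in range(100_000)]
frequency_of_ones = sum(draws) / len(draws)  # should be close to p = 0.3
```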

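To construct an absolutely continuous random variable with a given distribution function $F$, one can use $F^{\mathit{inv}}$, an inverse function of $F$, which relates to the uniform variable $U$ through

$$\{U \le F(x)\} = \{F^{\mathit{inv}}(U) \le x\},$$

so that $P(F^{\mathit{inv}}(U) \le x) = P(U \le F(x)) = F(x)$.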

For example, suppose a random variable with the exponential distribution $F(x) = 1 - e^{-\lambda x}$ (for $x \ge 0$ and $\lambda > 0$) must be constructed. Then

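$$F(x) = u \Leftrightarrow 1 - e^{-\lambda x} = u \Leftrightarrow e^{-\lambda x} = 1 - u \Leftrightarrow -\lambda x = \ln(1 - u) \Leftrightarrow x = \frac{-1}{\lambda}\ln(1 - u),$$

so $F^{\mathit{inv}}(u) = \frac{-1}{\lambda}\ln(1 - u)$, and if $U$ has a $U(0, 1)$ distribution, then the random variable $X$ is defined by $X = F^{\mathit{inv}}(U) = \frac{-1}{\lambda}\ln(1 - U)$. This has an exponential distribution with parameter $\lambda$.[29]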
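The inverse transform for the exponential distribution can be sketched as below. The sketch assumes `random.Random` as the uniform source and takes λ = 2 as an arbitrary example. Because `random()` returns values in [0, 1), the argument $1 - u$ of the logarithm lies in (0, 1] and is never zero.

```python
import math
import random


def exponential_inverse_cdf(u, lam):
    """F^inv(u) = -ln(1 - u) / lam for F(x) = 1 - exp(-lam * x)."""
    return -math.log(1.0 - u) / lam


rng = random.Random(2024)  # fixed seed for reproducibility
lam = 2.0
xs = [exponential_inverse_cdf(rng.random(), lam) for _ in range(100_000)]

sample_mean = sum(xs) / len(xs)                     # should be near 1/lam = 0.5
frac_below_1 = sum(x <= 1.0 for x in xs) / len(xs)  # should be near F(1) = 1 - e^(-2)
```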

A frequent problem in statistical simulations (the Monte Carlo method) is the generation of pseudo-random numbers that are distributed in a given way.

Common probability distributions and their applications

The concept of the probability distribution and the random variables which they describe underlies the mathematical discipline of probability theory, and the science of statistics. There is spread or variability in almost any value that can be measured in a population (e.g. height of people, durability of a metal, sales growth, traffic flow, etc.); almost all measurements are made with some intrinsic error; in physics, many processes are described probabilistically, from the kinetic properties of gases to the quantum mechanical description of fundamental particles. For these and many other reasons, simple numbers are often inadequate for describing a quantity, while probability distributions are often more appropriate.

The following is a list of some of the most common probability distributions, grouped by the type of process that they are related to. For a more complete list, see list of probability distributions, which groups by the nature of the outcome being considered (discrete, absolutely continuous, multivariate, etc.).

All of the univariate distributions below are singly peaked; that is, it is assumed that the values cluster around a single point. In practice, actually observed quantities may cluster around multiple values. Such quantities can be modeled using a mixture distribution.

Linear growth (e.g. errors, offsets)

- Normal distribution (Gaussian distribution), for a single such quantity; the most commonly used absolutely continuous distribution

Exponential growth (e.g. prices, incomes, populations)

- Log-normal distribution, for a single such quantity whose log is normally distributed

- Pareto distribution, for a single such quantity whose log is exponentially distributed; the prototypical power law distribution

Uniformly distributed quantities

- Discrete uniform distribution, for a finite set of values (e.g. the outcome of a fair die)

- Continuous uniform distribution, for absolutely continuously distributed values

Bernoulli trials (yes/no events, with a given probability)

- Basic distributions:

  - Bernoulli distribution, for the outcome of a single Bernoulli trial (e.g. success/failure, yes/no)

  - Binomial distribution, for the number of "positive occurrences" (e.g. successes, yes votes, etc.) given a fixed total number of independent occurrences

  - Negative binomial distribution, for binomial-type observations but where the quantity of interest is the number of failures before a given number of successes occurs

  - Geometric distribution, for binomial-type observations but where the quantity of interest is the number of failures before the first success; a special case of the negative binomial distribution

- Related to sampling schemes over a finite population:

  - Hypergeometric distribution, for the number of "positive occurrences" (e.g. successes, yes votes, etc.) given a fixed number of total occurrences, using sampling without replacement

  - Beta-binomial distribution, for the number of "positive occurrences" (e.g. successes, yes votes, etc.) given a fixed number of total occurrences, sampling using a Pólya urn model (in some sense, the "opposite" of sampling without replacement)

Categorical outcomes (events with K possible outcomes)

- Categorical distribution, for a single categorical outcome (e.g. yes/no/maybe in a survey); a generalization of the Bernoulli distribution

- Multinomial distribution, for the number of each type of categorical outcome, given a fixed number of total outcomes; a generalization of the binomial distribution

- Multivariate hypergeometric distribution, similar to the multinomial distribution, but using sampling without replacement; a generalization of the hypergeometric distribution

Poisson process (events that occur independently with a given rate)

- Poisson distribution, for the number of occurrences of a Poisson-type event in a given period of time

- Exponential distribution, for the time before the next Poisson-type event occurs

- Gamma distribution, for the time before the next k Poisson-type events occur

Absolute values of vectors with normally distributed components

- Rayleigh distribution, for the distribution of vector magnitudes with Gaussian distributed orthogonal components. Rayleigh distributions are found in RF signals with Gaussian real and imaginary components.

- Rice distribution, a generalization of the Rayleigh distribution for the case where there is a stationary background signal component. Found in Rician fading of radio signals due to multipath propagation and in MR images with noise corruption on non-zero NMR signals.

Normally distributed quantities operated with sum of squares

- Chi-squared distribution, the distribution of a sum of squared standard normal variables; useful e.g. for inference regarding the sample variance of normally distributed samples (see chi-squared test)

- Student's t distribution, the distribution of the ratio of a standard normal variable and the square root of a scaled chi-squared variable; useful for inference regarding the mean of normally distributed samples with unknown variance (see Student's t-test)

- F-distribution, the distribution of the ratio of two scaled chi-squared variables; useful e.g. for inferences that involve comparing variances or involving R-squared (the squared correlation coefficient)

As conjugate prior distributions in Bayesian inference

- Beta distribution, for a single probability (real number between 0 and 1); conjugate to the Bernoulli distribution and binomial distribution

- Gamma distribution, for a non-negative scaling parameter; conjugate to the rate parameter of a Poisson distribution or exponential distribution, the precision (inverse variance) of a normal distribution, etc.

- Dirichlet distribution, for a vector of probabilities that must sum to 1; conjugate to the categorical distribution and multinomial distribution; generalization of the beta distribution

- Wishart distribution, for a symmetric non-negative definite matrix; conjugate to the inverse of the covariance matrix of a multivariate normal distribution; generalization of the gamma distribution[30]

Some specialized applications of probability distributions

- The cache language models and other statistical language models used in natural language processing to assign probabilities to the occurrence of particular words and word sequences do so by means of probability distributions.

- In quantum mechanics, the probability density of finding the particle at a given point is proportional to the square of the magnitude of the particle's wavefunction at that point (see Born rule). Therefore, the probability distribution function of the position of a particle is described by $P_{a \le x \le b}(t) = \int_a^b |\Psi(x, t)|^2\,dx$, the probability that the particle's position $x$ will be in the interval $a \le x \le b$ in dimension one, and a similar triple integral in dimension three. This is a key principle of quantum mechanics.[31]

- Probabilistic load flow in power-flow study models the uncertainties of input variables as probability distributions and expresses the results of the power-flow calculation as probability distributions as well.[32]

- Prediction of the occurrence of natural phenomena such as tropical cyclones and hail, or of the time between events, based on previous frequency distributions.[33]

Fitting

Probability distribution fitting, or simply distribution fitting, is the fitting of a probability distribution to a series of data concerning the repeated measurement of a variable phenomenon. The aim of distribution fitting is to predict the probability or to forecast the frequency of occurrence of the magnitude of the phenomenon in a certain interval.

There are many probability distributions (see list of probability distributions), of which some can be fitted more closely to the observed frequency of the data than others, depending on the characteristics of the phenomenon and of the distribution. The distribution giving a close fit is supposed to lead to good predictions.
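The following sketch illustrates the idea; it is not a recommended procedure. It fits a normal distribution to simulated data by maximum likelihood, using the sample mean and the uncorrected standard deviation, and then compares the predicted and observed frequencies for one interval. The data come from a seeded random number generator rather than real measurements, and the choice of model and of the interval [8, 12] is an assumption.

```python
import math
import random
import statistics

# Illustrative data: simulated with a seeded RNG, not real measurements.
rng = random.Random(7)
data = [rng.gauss(10.0, 2.0) for _ in range(5_000)]

# Candidate model: a normal distribution fitted by maximum likelihood.
mu_hat = statistics.fmean(data)
sigma_hat = statistics.pstdev(data)  # uncorrected (maximum-likelihood) estimate


def normal_cdf(x, mu, sigma):
    """Normal CDF via the error function."""
    return 0.5 * (1 + math.erf((x - mu) / (sigma * math.sqrt(2))))


# Predicted vs. observed frequency of values in the interval [8, 12].
predicted = normal_cdf(12, mu_hat, sigma_hat) - normal_cdf(8, mu_hat, sigma_hat)
observed = sum(8 <= x <= 12 for x in data) / len(data)
```

If a candidate distribution fits poorly, `predicted` and `observed` drift apart, which is one informal sign that another distribution may suit the data better.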

In distribution fitting, therefore, one needs to select a distribution that suits the data well.

See also

- Conditional probability distribution

- Empirical probability distribution

- Histogram

- Joint probability distribution

- Probability measure

- Quasiprobability distribution

- Riemann–Stieltjes integral application to probability theory

Lists

[edit]References

[edit]Citations

[edit]- ^abEveritt, Brian (2006).The Cambridge dictionary of statistics(3rd ed.). Cambridge, UK: Cambridge University Press.ISBN978-0-511-24688-3.OCLC161828328.

- ^Ash, Robert B. (2008).Basic probability theory(Dover ed.). Mineola, N.Y.: Dover Publications. pp. 66–69.ISBN978-0-486-46628-6.OCLC190785258.

- ^abEvans, Michael; Rosenthal, Jeffrey S. (2010).Probability and statistics: the science of uncertainty(2nd ed.). New York: W.H. Freeman and Co. p. 38.ISBN978-1-4292-2462-8.OCLC473463742.

- ^ab"1.3.6.1. What is a Probability Distribution".www.itl.nist.gov.Retrieved2020-09-10.

- ^abDekking, Michel (1946–) (2005).A Modern Introduction to Probability and Statistics: Understanding why and how.London, UK: Springer.ISBN978-1-85233-896-1.OCLC262680588.

{{cite book}}:CS1 maint: numeric names: authors list (link) - ^Walpole, R.E.; Myers, R.H.; Myers, S.L.; Ye, K. (1999).Probability and statistics for engineers.Prentice Hall.

- ^abcdRoss, Sheldon M. (2010).A first course in probability.Pearson.

- ^abDeGroot, Morris H.; Schervish, Mark J. (2002).Probability and Statistics.Addison-Wesley.

- ^Billingsley, P. (1986).Probability and measure.Wiley.ISBN9780471804789.

- ^Shephard, N.G. (1991)."From characteristic function to distribution function: a simple framework for the theory".Econometric Theory.7(4): 519–529.doi:10.1017/S0266466600004746.S2CID14668369.

- ^Chapters 1 and 2 ofVapnik (1998)

- ^abMore information and examples can be found in the articlesHeavy-tailed distribution,Long-tailed distribution,fat-tailed distribution

- ^Erhan, Çınlar (2011).Probability and stochastics.New York: Springer. p. 57.ISBN9780387878584.

- ^seeLebesgue's decomposition theorem

- ^Erhan, Çınlar (2011).Probability and stochastics.New York: Springer. p. 51.ISBN9780387878591.OCLC710149819.

- Cohn, Donald L. (1993). Measure theory. Birkhäuser.

- Khuri, André I. (March 2004). "Applications of Dirac's delta function in statistics". International Journal of Mathematical Education in Science and Technology. 35 (2): 185–195. doi:10.1080/00207390310001638313. ISSN 0020-739X. S2CID 122501973.

- Fisz, Marek (1963). Probability Theory and Mathematical Statistics (3rd ed.). John Wiley & Sons. p. 129. ISBN 0-471-26250-1.

- Rosenthal, Jeffrey Seth (2000). A First Look at Rigorous Probability Theory. World Scientific.

- Chapter 3.2 of DeGroot & Schervish (2002).

- Bourne, Murray. "11. Probability Distributions - Concepts". www.intmath.com. Retrieved 2020-09-10.

- Stroock, Daniel W. (1999). Probability theory: an analytic view (Rev. ed.). Cambridge [England]: Cambridge University Press. p. 11. ISBN 978-0521663496. OCLC 43953136.

- Kolmogorov, Andrey (1950) [1933]. Foundations of the theory of probability. New York, USA: Chelsea Publishing Company. pp. 21–24.

- Joyce, David (2014). "Axioms of Probability" (PDF). Clark University. Retrieved December 5, 2019.

- Alligood, K.T.; Sauer, T.D.; Yorke, J.A. (1996). Chaos: an introduction to dynamical systems. Springer.

- Rabinovich, M.I.; Fabrikant, A.L. (1979). "Stochastic self-modulation of waves in nonequilibrium media". J. Exp. Theor. Phys. 77: 617–629. Bibcode:1979JETP...50..311R.

- Section 1.9 of Ross, S.M.; Peköz, E.A. (2007). A second course in probability (PDF).

- Walters, Peter (2000). An Introduction to Ergodic Theory. Springer.

- Dekking, Frederik Michel; Kraaikamp, Cornelis; Lopuhaä, Hendrik Paul; Meester, Ludolf Erwin (2005), "Why probability and statistics?", A Modern Introduction to Probability and Statistics, Springer London, pp. 1–11, doi:10.1007/1-84628-168-7_1, ISBN 978-1-85233-896-1

- Bishop, Christopher M. (2006). Pattern recognition and machine learning. New York: Springer. ISBN 0-387-31073-8. OCLC 71008143.

- Chang, Raymond; Thoman, John W., Jr. (2014). Physical chemistry for the chemical sciences. [Mill Valley, California]. pp. 403–406. ISBN 978-1-68015-835-9. OCLC 927509011.

- Chen, P.; Chen, Z.; Bak-Jensen, B. (April 2008). "Probabilistic load flow: A review". 2008 Third International Conference on Electric Utility Deregulation and Restructuring and Power Technologies. pp. 1586–1591. doi:10.1109/drpt.2008.4523658. ISBN 978-7-900714-13-8. S2CID 18669309.

- Maity, Rajib (2018-04-30). Statistical methods in hydrology and hydroclimatology. Singapore. ISBN 978-981-10-8779-0. OCLC 1038418263.

Sources

- den Dekker, A. J.; Sijbers, J. (2014). "Data distributions in magnetic resonance images: A review". Physica Medica. 30 (7): 725–741. doi:10.1016/j.ejmp.2014.05.002. PMID 25059432.

- Vapnik, Vladimir Naumovich (1998). Statistical Learning Theory. John Wiley and Sons.

External links

- "Probability distribution", Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- Field Guide to Continuous Probability Distributions, Gavin E. Crooks.

- Distinguishing probability measure, function and distribution, Math Stack Exchange

