Global catastrophic risk

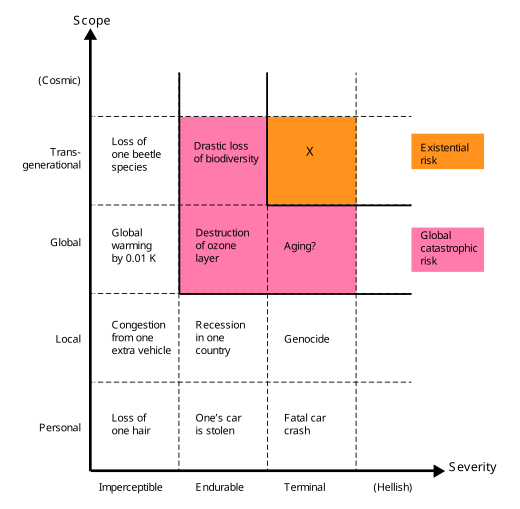

A global catastrophic risk or a doomsday scenario is a hypothetical event that could damage human well-being on a global scale,[2] even endangering or destroying modern civilization.[3] An event that could cause human extinction or permanently and drastically curtail humanity's existence or potential is known as an "existential risk".[4]

In the 21st century, a number of academic and non-profit organizations have been established to research global catastrophic and existential risks, formulate potential mitigation measures and either advocate for or implement these measures.[5][6][7][8]

Definition and classification

Defining global catastrophic risks

The term global catastrophic risk "lacks a sharp definition", and generally refers (loosely) to a risk that could inflict "serious damage to human well-being on a global scale".[10]

Humanity has suffered large catastrophes before. Some of these have caused serious damage but were only local in scope; for example, the Black Death may have resulted in the deaths of a third of Europe's population,[11] 10% of the global population at the time.[12] Some were global, but were not as severe; for example, the 1918 influenza pandemic killed an estimated 3–6% of the world's population.[13] Most global catastrophic risks would not be so intense as to kill the majority of life on Earth, but even if one did, the ecosystem and humanity would eventually recover (in contrast to existential risks).

Similarly, in Catastrophe: Risk and Response, Richard Posner singles out and groups together events that bring about "utter overthrow or ruin" on a global, rather than a "local or regional" scale. Posner highlights such events as worthy of special attention on cost–benefit grounds because they could directly or indirectly jeopardize the survival of the human race as a whole.[14]

Defining existential risks

Existential risks are defined as "risks that threaten the destruction of humanity's long-term potential."[15] The instantiation of an existential risk (an existential catastrophe[16]) would either cause outright human extinction or irreversibly lock in a drastically inferior state of affairs.[9][17] Existential risks are a sub-class of global catastrophic risks, where the damage is not only global but also terminal and permanent, preventing recovery and thereby affecting both current and all future generations.[9]

Non-extinction risks

While extinction is the most obvious way in which humanity's long-term potential could be destroyed, there are others, including unrecoverable collapse and unrecoverable dystopia.[18] A disaster severe enough to cause the permanent, irreversible collapse of human civilisation would constitute an existential catastrophe, even if it fell short of extinction.[18] Similarly, if humanity fell under a totalitarian regime, and there were no chance of recovery, then such a dystopia would also be an existential catastrophe.[19] Bryan Caplan writes that "perhaps an eternity of totalitarianism would be worse than extinction".[19] (George Orwell's novel Nineteen Eighty-Four suggests[20] an example.[21]) A dystopian scenario shares the key features of extinction and unrecoverable collapse of civilization: before the catastrophe humanity faced a vast range of bright futures to choose from; after the catastrophe, humanity is locked forever in a terrible state.[18]

Potential sources of risk

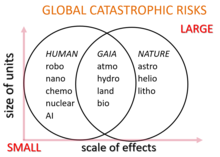

Potential global catastrophic risks are conventionally classified as anthropogenic or non-anthropogenic hazards. Examples of non-anthropogenic risks are an asteroid or comet impact event, a supervolcanic eruption, a natural pandemic, a lethal gamma-ray burst, a geomagnetic storm from a coronal mass ejection destroying electronic equipment, natural long-term climate change, hostile extraterrestrial life, or the Sun transforming into a red giant star and engulfing the Earth billions of years in the future.[22]

Anthropogenic risks are those caused by humans and include those related to technology, governance, and climate change. Technological risks include the creation of artificial intelligence misaligned with human goals, biotechnology, and nanotechnology. Insufficient or malign global governance creates risks in the social and political domain, such as global war and nuclear holocaust,[23] biological warfare and bioterrorism using genetically modified organisms, cyberwarfare and cyberterrorism destroying critical infrastructure like the electrical grid, or radiological warfare using weapons such as large cobalt bombs. Other global catastrophic risks include climate change, environmental degradation, extinction of species, famine as a result of non-equitable resource distribution, human overpopulation or underpopulation, crop failures, and non-sustainable agriculture.

Methodological challenges

Research into the nature and mitigation of global catastrophic risks and existential risks is subject to a unique set of challenges and, as a result, is not easily subjected to the usual standards of scientific rigour.[18] For instance, it is neither feasible nor ethical to study these risks experimentally. Carl Sagan expressed this with regard to nuclear war: "Understanding the long-term consequences of nuclear war is not a problem amenable to experimental verification".[24] Moreover, many catastrophic risks change rapidly as technology advances and as background conditions, such as geopolitical conditions, change. Another challenge is the general difficulty of accurately predicting the future over long timescales, especially for anthropogenic risks which depend on complex human political, economic and social systems.[18] In addition to known and tangible risks, unforeseeable black swan extinction events may occur, presenting an additional methodological problem.[18][25]

Lack of historical precedent

Humanity has never suffered an existential catastrophe and if one were to occur, it would necessarily be unprecedented.[18] Therefore, existential risks pose unique challenges to prediction, even more than other long-term events, because of observation selection effects.[26] Unlike with most events, the fact that no complete extinction event has occurred in the past is not evidence against the likelihood of one in the future, because every world that has experienced such an extinction event has no observers, so regardless of the frequency of such events, no civilization observes one in its own history.[26] These anthropic issues may partly be avoided by looking at evidence that does not have such selection effects, such as asteroid impact craters on the Moon, or by directly evaluating the likely impact of new technology.[9]
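The selection effect described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: the function name `simulate`, the per-period extinction probabilities, and the number of periods and worlds are all hypothetical values chosen for illustration, not figures from the cited sources. Each simulated world faces the same chance of extinction in every period, and only worlds that never go extinct still contain observers at the end.

```python
import random


def simulate(p_extinction: float, periods: int, worlds: int, seed: int = 0):
    """Return (surviving worlds, extinction events found in survivors' histories).

    Every world faces an independent chance `p_extinction` of a complete
    extinction event in each period (a hypothetical, simplified model).
    """
    rng = random.Random(seed)
    survivors = 0
    events_in_survivor_histories = 0
    for _ in range(worlds):
        events = 0
        for _ in range(periods):
            if rng.random() < p_extinction:
                events += 1
                break  # extinction ends this world's history; no observers remain
        if events == 0:
            survivors += 1
            # A surviving world has, by construction, no extinction in its past.
            events_in_survivor_histories += events
    return survivors, events_in_survivor_histories


for p in (0.001, 0.01, 0.05):
    survivors, seen = simulate(p, periods=50, worlds=10_000)
    print(f"p={p}: {survivors} surviving worlds, "
          f"{seen} past extinction events in their histories")
```

The number of surviving worlds falls sharply as the assumed probability rises, yet observers in every surviving world see the same clean record. Their own history therefore cannot distinguish a low-risk world from a high-risk one, which is why evidence free of this selection effect is needed.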

To understand the dynamics of an unprecedented, unrecoverable global civilizational collapse (a type of existential risk), it may be instructive to study the various local civilizational collapses that have occurred throughout human history.[27] For instance, civilizations such as the Roman Empire have ended in a loss of centralized governance and a major civilization-wide loss of infrastructure and advanced technology. However, these examples demonstrate that societies appear to be fairly resilient to catastrophe; for example, Medieval Europe survived the Black Death without suffering anything resembling a civilization collapse despite losing 25 to 50 percent of its population.[28]

Incentives and coordination

There are economic reasons that can explain why so little effort is going into existential risk reduction. It is a global public good, so we should expect it to be undersupplied by markets.[9] Even if a large nation invests in risk mitigation measures, that nation will enjoy only a small fraction of the benefit of doing so. Furthermore, existential risk reduction is an intergenerational global public good, since most of its benefits would be enjoyed by future generations, and though these future people would in theory perhaps be willing to pay substantial sums for existential risk reduction, no mechanism for such a transaction exists.[9]
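The undersupply argument can be made concrete with a short worked example. It is a sketch only: the helper `worth_funding` and all of the figures are hypothetical and chosen purely for illustration. A measure is worth funding for the world as a whole when its total benefit exceeds its cost, but a single funder compares the cost only with the share of the benefit it captures itself.

```python
def worth_funding(cost: float, total_benefit: float, share_captured: float) -> bool:
    """Return True if the funder's own share of the benefit exceeds the cost."""
    return share_captured * total_benefit > cost


# Hypothetical figures in arbitrary units, for illustration only.
cost = 10.0            # cost of a risk mitigation measure
total_benefit = 100.0  # benefit summed over everyone who gains from it

# The world acting as a single decision-maker captures the whole benefit.
print(worth_funding(cost, total_benefit, share_captured=1.0))

# A single nation that captures 5% of the benefit faces a different calculation.
print(worth_funding(cost, total_benefit, share_captured=0.05))
```

With these assumed numbers the measure passes the global test but fails the national one, even though its total benefit is ten times its cost. The intergenerational case is the same calculation with a smaller captured share, since future beneficiaries cannot contribute.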

Cognitive biases

Numerous cognitive biases can influence people's judgment of the importance of existential risks, including scope insensitivity, hyperbolic discounting, the availability heuristic, the conjunction fallacy, the affect heuristic, and the overconfidence effect.[29]

Scope insensitivity influences how bad people consider the extinction of the human race to be. For example, when people are motivated to donate money to altruistic causes, the quantity they are willing to give does not increase linearly with the magnitude of the issue: people are roughly as willing to prevent the deaths of 200,000 birds as the deaths of 2,000.[30] Similarly, people are often more concerned about threats to individuals than to larger groups.[29]

Eliezer Yudkowsky theorizes that scope neglect plays a role in public perception of existential risks:[31][32]

Substantially larger numbers, such as 500 million deaths, and especially qualitatively different scenarios such as the extinction of the entire human species, seem to trigger a different mode of thinking... People who would never dream of hurting a child hear of existential risk, and say, "Well, maybe the human species doesn't really deserve to survive".

All past predictions of human extinction have proven to be false. To some, this makes future warnings seem less credible. Nick Bostrom argues that the absence of human extinction in the past is weak evidence that there will be no human extinction in the future, due to survivor bias and other anthropic effects.[33]

Sociobiologist E. O. Wilson argued that: "The reason for this myopic fog, evolutionary biologists contend, is that it was actually advantageous during all but the last few millennia of the two million years of existence of the genus Homo... A premium was placed on close attention to the near future and early reproduction, and little else. Disasters of a magnitude that occur only once every few centuries were forgotten or transmuted into myth."[34]

Proposed mitigation

Multi-layer defense

Defense in depth is a useful framework for categorizing risk mitigation measures into three layers of defense:[35]

- Prevention: Reducing the probability of a catastrophe occurring in the first place. Example: Measures to prevent outbreaks of new highly infectious diseases.

- Response: Preventing the scaling of a catastrophe to the global level. Example: Measures to prevent escalation of a small-scale nuclear exchange into an all-out nuclear war.

- Resilience: Increasing humanity's resilience (against extinction) when faced with global catastrophes. Example: Measures to increase food security during a nuclear winter.

Human extinction is most likely when all three defenses are weak, that is, "by risks we are unlikely to prevent, unlikely to successfully respond to, and unlikely to be resilient against".[35]
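One way to read the three-layer framework is as a chain of conditional probabilities, sketched below. This is an illustrative model, not a formula quoted from the source: the function name `extinction_probability`, its parameter names, and every number are assumptions. Each argument is the chance that one layer fails, given that the earlier layers have already failed.

```python
def extinction_probability(p_not_prevented: float,
                           p_not_contained: float,
                           p_not_survived: float) -> float:
    """Chance that a risk passes all three layers of defense.

    p_not_prevented: probability the catastrophe begins (prevention fails).
    p_not_contained: probability it then reaches global scale (response fails).
    p_not_survived:  probability humanity then goes extinct (resilience fails).
    """
    for p in (p_not_prevented, p_not_contained, p_not_survived):
        if not 0.0 <= p <= 1.0:
            raise ValueError("each probability must lie between 0 and 1")
    return p_not_prevented * p_not_contained * p_not_survived


# Hypothetical values: every layer is weak.
all_weak = extinction_probability(0.5, 0.5, 0.5)

# Same risk, but with one strong layer (here, resilience).
one_strong = extinction_probability(0.5, 0.5, 0.01)

print(all_weak, one_strong)
```

Because the layers multiply, a single strong layer lowers the overall probability substantially, and the product is largest only when all three failure probabilities are high. That matches the observation that extinction is most likely from risks that are hard to prevent, hard to respond to, and hard to be resilient against.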

The unprecedented nature of existential risks poses a special challenge in designing risk mitigation measures since humanity will not be able to learn from a track record of previous events.[18]

Funding

Some researchers argue that both research and other initiatives relating to existential risk are underfunded. Nick Bostrom states that more research has been done on Star Trek, snowboarding, or dung beetles than on existential risks. Bostrom's comparisons have been criticized as "high-handed".[36][37] As of 2020, the Biological Weapons Convention organization had an annual budget of US$1.4 million.[38]

Survival planning

Some scholars propose the establishment on Earth of one or more self-sufficient, remote, permanently occupied settlements specifically created for the purpose of surviving a global disaster.[39][40][41] Economist Robin Hanson argues that a refuge permanently housing as few as 100 people would significantly improve the chances of human survival during a range of global catastrophes.[39][42]

Food storage has been proposed globally, but the monetary cost would be high. Furthermore, it would likely contribute to the current millions of deaths per year due to malnutrition.[43] In 2022, a team led by David Denkenberger modeled the cost-effectiveness of resilient foods compared with artificial general intelligence (AGI) safety and found "~98-99% confidence" for a higher marginal impact of work on resilient foods.[44] Some survivalists stock survival retreats with multiple-year food supplies.

The Svalbard Global Seed Vault is buried 400 feet (120 m) inside a mountain on an island in the Arctic. It is designed to hold 2.5 billion seeds from more than 100 countries as a precaution to preserve the world's crops. The surrounding rock is −6 °C (21 °F) (as of 2015) but the vault is kept at −18 °C (0 °F) by refrigerators powered by locally sourced coal.[45][46]

More speculatively, if society continues to function and if the biosphere remains habitable, calorie needs for the present human population might in theory be met during an extended absence of sunlight, given sufficient advance planning. Conjectured solutions include growing mushrooms on the dead plant biomass left in the wake of the catastrophe, converting cellulose to sugar, or feeding natural gas to methane-digesting bacteria.[47][48]

Global catastrophic risks and global governance

Insufficient global governance creates risks in the social and political domain, but governance mechanisms develop more slowly than technological and social change. Governments, the private sector and the general public have all raised concerns about the lack of governance mechanisms to deal efficiently with risks and to negotiate and adjudicate between diverse and conflicting interests. This is further underlined by an understanding of the interconnectedness of global systemic risks.[49] In the absence or in anticipation of global governance, national governments can act individually to better understand, mitigate and prepare for global catastrophes.[50]

Climate emergency plans

In 2018, the Club of Rome called for greater climate change action and published its Climate Emergency Plan, which proposes ten action points to limit global average temperature increase to 1.5 degrees Celsius.[51] Further, in 2019, the Club published the more comprehensive Planetary Emergency Plan.[52]

There is evidence to suggest that collectively engaging with the emotional experiences that emerge when contemplating the vulnerability of the human species in the context of climate change allows these experiences to be adaptive. When collective engagement with and processing of these emotional experiences is supportive, it can lead to growth in resilience, psychological flexibility, tolerance of emotional experiences, and community engagement.[53]

Space colonization

Space colonization is a proposed alternative to improve the odds of surviving an extinction scenario.[54] Solutions of this scope may require megascale engineering.

Astrophysicist Stephen Hawking advocated colonizing other planets within the Solar System once technology progresses sufficiently, in order to improve the chance of human survival from planet-wide events such as global thermonuclear war.[55][56]

Billionaire Elon Musk writes that humanity must become a multiplanetary species in order to avoid extinction.[57] Musk is using his company SpaceX to develop technology he hopes will be used in the colonization of Mars.

Skeptics and opponents

Psychologist Steven Pinker has called existential risk a "useless category" that can distract from real threats such as climate change and nuclear war.[36]

Organizations

The Bulletin of the Atomic Scientists (est. 1945) is one of the oldest global risk organizations, founded after the public became alarmed by the potential of atomic warfare in the aftermath of WWII. It studies risks associated with nuclear war and energy and famously maintains the Doomsday Clock established in 1947. The Foresight Institute (est. 1986) examines the risks of nanotechnology and its benefits. It was one of the earliest organizations to study the unintended consequences of otherwise harmless technology gone haywire at a global scale. It was founded by K. Eric Drexler, who postulated "grey goo".[58][59]

Beginning after 2000, a growing number of scientists, philosophers and tech billionaires created organizations devoted to studying global risks both inside and outside of academia.[60]

Independent non-governmental organizations (NGOs) include the Machine Intelligence Research Institute (est. 2000), which aims to reduce the risk of a catastrophe caused by artificial intelligence,[61] with donors including Peter Thiel and Jed McCaleb.[62] The Nuclear Threat Initiative (est. 2001) seeks to reduce global nuclear, biological and chemical threats and to contain damage after an event.[8] It maintains a nuclear material security index.[63] The Lifeboat Foundation (est. 2009) funds research into preventing a technological catastrophe.[64] Most of the research money funds projects at universities.[65] The Global Catastrophic Risk Institute (est. 2011) is a US-based non-profit, non-partisan think tank founded by Seth Baum and Tony Barrett. GCRI does research and policy work across various risks, including artificial intelligence, nuclear war, climate change, and asteroid impacts.[66] The Global Challenges Foundation (est. 2012), based in Stockholm and founded by Laszlo Szombatfalvy, releases a yearly report on the state of global risks.[67][68] The Future of Life Institute (est. 2014) works to reduce extreme, large-scale risks from transformative technologies, as well as steer the development and use of these technologies to benefit all life, through grantmaking, policy advocacy in the United States, European Union and United Nations, and educational outreach.[7] Elon Musk, Vitalik Buterin and Jaan Tallinn are some of its biggest donors.[69] The Center on Long-Term Risk (est. 2016), formerly known as the Foundational Research Institute, is a British organization focused on reducing risks of astronomical suffering (s-risks) from emerging technologies.[70]

University-based organizations included the Future of Humanity Institute (est. 2005), which researched the questions of humanity's long-term future, particularly existential risk.[5] It was founded by Nick Bostrom and was based at Oxford University.[5] The Centre for the Study of Existential Risk (est. 2012) is a Cambridge University-based organization which studies four major technological risks: artificial intelligence, biotechnology, global warming and warfare.[6] All are man-made risks, as Huw Price explained to the AFP news agency, "It seems a reasonable prediction that some time in this or the next century intelligence will escape from the constraints of biology". He added that when this happens "we're no longer the smartest things around," and will risk being at the mercy of "machines that are not malicious, but machines whose interests don't include us."[71] Stephen Hawking was an acting adviser. The Millennium Alliance for Humanity and the Biosphere is a Stanford University-based organization focusing on many issues related to global catastrophe by bringing together members of academia in the humanities.[72][73] It was founded by Paul Ehrlich, among others.[74] Stanford University also has the Center for International Security and Cooperation, which focuses on political cooperation to reduce global catastrophic risk.[75] The Center for Security and Emerging Technology was established in January 2019 at Georgetown's Walsh School of Foreign Service to focus on policy research on emerging technologies, with an initial emphasis on artificial intelligence.[76] It received a grant of US$55 million from Good Ventures as suggested by Open Philanthropy.[76]

Other risk assessment groups are based in or are part of governmental organizations. The World Health Organization (WHO) includes a division called the Global Alert and Response (GAR) which monitors and responds to global epidemic crises.[77] GAR helps member states with training and coordination of response to epidemics.[78] The United States Agency for International Development (USAID) has its Emerging Pandemic Threats Program, which aims to prevent and contain naturally generated pandemics at their source.[79] The Lawrence Livermore National Laboratory has a division called the Global Security Principal Directorate, which researches issues such as bio-security and counter-terrorism on behalf of the government.[80]

See also

- Artificial intelligence arms race

- Community resilience

- Extreme risk

- Fermi paradox

- Foresight (psychology)

- Future of Earth

- Future of the Solar System

- Climate engineering

- Global Risks Report

- Great Filter

- Holocene extinction

- Impact event

- List of global issues

- Nuclear proliferation

- Outside Context Problem

- Planetary boundaries

- Rare events

- The Sixth Extinction: An Unnatural History

- Societal collapse

- Speculative evolution

- Suffering risks

- Survivalism

- Tail risk

- The Precipice: Existential Risk and the Future of Humanity

- Timeline of the far future

- Triple planetary crisis

- World Scientists' Warning to Humanity

References

- Schulte, P.; et al. (March 5, 2010). "The Chicxulub Asteroid Impact and Mass Extinction at the Cretaceous-Paleogene Boundary" (PDF). Science. 327 (5970): 1214–1218. Bibcode:2010Sci...327.1214S. doi:10.1126/science.1177265. PMID 20203042. S2CID 2659741.

- Bostrom, Nick (2008). Global Catastrophic Risks (PDF). Oxford University Press. p. 1.

- Ripple WJ, Wolf C, Newsome TM, Galetti M, Alamgir M, Crist E, Mahmoud MI, Laurance WF (November 13, 2017). "World Scientists' Warning to Humanity: A Second Notice". BioScience. 67 (12): 1026–1028. doi:10.1093/biosci/bix125. hdl:11336/71342.

- Bostrom, Nick (March 2002). "Existential Risks: Analyzing Human Extinction Scenarios and Related Hazards". Journal of Evolution and Technology. 9.

- "About FHI". Future of Humanity Institute. Retrieved August 12, 2021.

- "About us". Centre for the Study of Existential Risk. Retrieved August 12, 2021.

- "The Future of Life Institute". Future of Life Institute. Retrieved May 5, 2014.

- "Nuclear Threat Initiative". Nuclear Threat Initiative. Retrieved June 5, 2015.

- Bostrom, Nick (2013). "Existential Risk Prevention as Global Priority" (PDF). Global Policy. 4 (1): 15–31. doi:10.1111/1758-5899.12002 – via Existential Risk.

- Bostrom, Nick; Cirkovic, Milan (2008). Global Catastrophic Risks. Oxford: Oxford University Press. p. 1. ISBN 978-0-19-857050-9.

- Ziegler, Philip (2012). The Black Death. Faber and Faber. p. 397. ISBN 9780571287116.

- Muehlhauser, Luke (March 15, 2017). "How big a deal was the Industrial Revolution?". lukemuelhauser.com. Retrieved August 3, 2020.

- Taubenberger, Jeffery; Morens, David (2006). "1918 Influenza: the Mother of All Pandemics". Emerging Infectious Diseases. 12 (1): 15–22. doi:10.3201/eid1201.050979. PMC 3291398. PMID 16494711.

- Posner, Richard A. (2006). Catastrophe: Risk and Response. Oxford: Oxford University Press. ISBN 978-0195306477. Introduction, "What is Catastrophe?"

- Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. New York: Hachette. ISBN 9780316484916.

This is an equivalent, though crisper, statement of Nick Bostrom's definition: "An existential risk is one that threatens the premature extinction of Earth-originating intelligent life or the permanent and drastic destruction of its potential for desirable future development." Source: Bostrom, Nick (2013). "Existential Risk Prevention as Global Priority". Global Policy. 4: 15–31.

- Cotton-Barratt, Owen; Ord, Toby (2015), Existential risk and existential hope: Definitions (PDF), Future of Humanity Institute – Technical Report #2015-1, pp. 1–4

- Bostrom, Nick (2009). "Astronomical Waste: The opportunity cost of delayed technological development". Utilitas. 15 (3): 308–314. CiteSeerX 10.1.1.429.2849. doi:10.1017/s0953820800004076. S2CID 15860897.

- Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. New York: Hachette. ISBN 9780316484916.

- Bryan Caplan (2008). "The totalitarian threat". Global Catastrophic Risks, eds. Bostrom & Cirkovic (Oxford University Press): 504–519. ISBN 9780198570509

- Glover, Dennis (June 1, 2017). "Did George Orwell secretly rewrite the end of Nineteen Eighty-Four as he lay dying?". The Sydney Morning Herald. Retrieved November 21, 2021.

Winston's creator, George Orwell, believed that freedom would eventually defeat the truth-twisting totalitarianism portrayed in Nineteen Eighty-Four.

- Orwell, George (1949). Nineteen Eighty-Four. A novel. London: Secker & Warburg. Archived from the original on May 4, 2012. Retrieved August 12, 2021.

- Baum, Seth D. (2023). "Assessing natural global catastrophic risks". Natural Hazards. 115 (3): 2699–2719. Bibcode:2023NatHa.115.2699B. doi:10.1007/s11069-022-05660-w. PMC 9553633. PMID 36245947.

- Scouras, James (2019). "Nuclear War as a Global Catastrophic Risk". Journal of Benefit-Cost Analysis. 10 (2): 274–295. doi:10.1017/bca.2019.16.

- Sagan, Carl (Winter 1983). "Nuclear War and Climatic Catastrophe: Some Policy Implications". Foreign Affairs. Council on Foreign Relations. doi:10.2307/20041818. JSTOR 20041818. Retrieved August 4, 2020.

- Jebari, Karim (2014). "Existential Risks: Exploring a Robust Risk Reduction Strategy" (PDF). Science and Engineering Ethics. 21 (3): 541–54. doi:10.1007/s11948-014-9559-3. PMID 24891130. S2CID 30387504. Retrieved August 26, 2018.

- Cirkovic, Milan M.; Bostrom, Nick; Sandberg, Anders (2010). "Anthropic Shadow: Observation Selection Effects and Human Extinction Risks" (PDF). Risk Analysis. 30 (10): 1495–1506. Bibcode:2010RiskA..30.1495C. doi:10.1111/j.1539-6924.2010.01460.x. PMID 20626690. S2CID 6485564.

- Kemp, Luke (February 2019). "Are we on the road to civilization collapse?". BBC. Retrieved August 12, 2021.

- Ord, Toby (2020). The Precipice: Existential Risk and the Future of Humanity. Hachette Books. ISBN 9780316484893.

Europe survived losing 25 to 50 percent of its population in the Black Death, while keeping civilization firmly intact

- Yudkowsky, Eliezer (2008). "Cognitive Biases Potentially Affecting Judgment of Global Risks" (PDF). Global Catastrophic Risks: 91–119. Bibcode:2008gcr..book...86Y.

- Desvousges, W.H., Johnson, F.R., Dunford, R.W., Boyle, K.J., Hudson, S.P., and Wilson, N. 1993, Measuring natural resource damages with contingent valuation: tests of validity and reliability. In Hausman, J.A. (ed), Contingent Valuation: A Critical Assessment, pp. 91–159 (Amsterdam: North Holland).

- Bostrom 2013.

- Yudkowsky, Eliezer. "Cognitive biases potentially affecting judgment of global risks". Global catastrophic risks 1 (2008): 86. p. 114

- "We're Underestimating the Risk of Human Extinction". The Atlantic. March 6, 2012. Retrieved July 1, 2016.

- Is Humanity Suicidal? The New York Times Magazine (May 30, 1993)

- Cotton-Barratt, Owen; Daniel, Max; Sandberg, Anders (2020). "Defence in Depth Against Human Extinction: Prevention, Response, Resilience, and Why They All Matter". Global Policy. 11 (3): 271–282. doi:10.1111/1758-5899.12786. ISSN 1758-5899. PMC 7228299. PMID 32427180.

- Kupferschmidt, Kai (January 11, 2018). "Could science destroy the world? These scholars want to save us from a modern-day Frankenstein". Science. AAAS. Retrieved April 20, 2020.

- "Oxford Institute Forecasts The Possible Doom Of Humanity". Popular Science. 2013. Retrieved April 20, 2020.

- Toby Ord (2020). The precipice: Existential risk and the future of humanity. Hachette Books. ISBN 9780316484893.

The international body responsible for the continued prohibition of bioweapons (the Biological Weapons Convention) has an annual budget of $1.4 million - less than the average McDonald's restaurant

- Matheny, Jason Gaverick (2007). "Reducing the Risk of Human Extinction" (PDF). Risk Analysis. 27 (5): 1335–1344. Bibcode:2007RiskA..27.1335M. doi:10.1111/j.1539-6924.2007.00960.x. PMID 18076500. S2CID 14265396. Archived from the original (PDF) on August 27, 2014. Retrieved May 16, 2015.

- Wells, Willard. (2009). Apocalypse when?. Praxis. ISBN 978-0387098364.

- Wells, Willard. (2017). Prospects for Human Survival. Lifeboat Foundation. ISBN 978-0998413105.

- Hanson, Robin. "Catastrophe, social collapse, and human extinction". Global catastrophic risks 1 (2008): 357.

- Smil, Vaclav (2003). The Earth's Biosphere: Evolution, Dynamics, and Change. MIT Press. p. 25. ISBN 978-0-262-69298-4.

- Denkenberger, David C.; Sandberg, Anders; Tieman, Ross John; Pearce, Joshua M. (2022). "Long term cost-effectiveness of resilient foods for global catastrophes compared to artificial general intelligence safety". International Journal of Disaster Risk Reduction. 73: 102798. Bibcode:2022IJDRR..7302798D. doi:10.1016/j.ijdrr.2022.102798.

- Lewis Smith (February 27, 2008). "Doomsday vault for world's seeds is opened under Arctic mountain". The Times Online. London. Archived from the original on May 12, 2008.

- Suzanne Goldenberg (May 20, 2015). "The doomsday vault: the seeds that could save a post-apocalyptic world". The Guardian. Retrieved June 30, 2017.

- "Here's how the world could end—and what we can do about it". Science. AAAS. July 8, 2016. Retrieved March 23, 2018.

- Denkenberger, David C.; Pearce, Joshua M. (September 2015). "Feeding everyone: Solving the food crisis in event of global catastrophes that kill crops or obscure the sun" (PDF). Futures. 72: 57–68. doi:10.1016/j.futures.2014.11.008. S2CID 153917693.

- "Global Challenges Foundation | Understanding Global Systemic Risk". globalchallenges.org. Archived from the original on August 16, 2017. Retrieved August 15, 2017.

- "Global Catastrophic Risk Policy". gcrpolicy.com. Archived from the original on August 11, 2019. Retrieved August 11, 2019.

- Club of Rome (2018). "The Climate Emergency Plan". Retrieved August 17, 2020.

- Club of Rome (2019). "The Planetary Emergency Plan". Retrieved August 17, 2020.

- ^Kieft, J.; Bendell, J (2021)."The responsibility of communicating difficult truths about climate influenced societal disruption and collapse: an introduction to psychological research".Institute for Leadership and Sustainability (IFLAS) Occasional Papers.7:1–39.

- ^"Mankind must abandon earth or face extinction: Hawking",physorg.com,August 9, 2010,retrievedJanuary 23,2012

- ^Malik, Tariq (April 13, 2013)."Stephen Hawking: Humanity Must Colonize Space to Survive".Space.com.RetrievedJuly 1,2016.

- ^Shukman, David (January 19, 2016)."Hawking: Humans at risk of lethal 'own goal'".BBC News.RetrievedJuly 1,2016.

- ^Ginsberg, Leah (June 16, 2017)."Elon Musk thinks life on earth will go extinct, and is putting most of his fortune toward colonizing Mars".CNBC.

- ^Fred Hapgood (November 1986)."Nanotechnology: Molecular Machines that Mimic Life"(PDF).Omni.Archived fromthe original(PDF)on July 27, 2013.RetrievedJune 5,2015.

- ^Giles, Jim (2004)."Nanotech takes small step towards burying 'grey goo'".Nature.429(6992): 591.Bibcode:2004Natur.429..591G.doi:10.1038/429591b.PMID15190320.

- ^Sophie McBain (September 25, 2014)."Apocalypse soon: the scientists preparing for the end times".New Statesman.RetrievedJune 5,2015.

- ^"Reducing Long-Term Catastrophic Risks from Artificial Intelligence".Machine Intelligence Research Institute.RetrievedJune 5,2015.

The Machine Intelligence Research Institute aims to reduce the risk of a catastrophe, should such an event eventually occur.

- ^Angela Chen (September 11, 2014)."Is Artificial Intelligence a Threat?".The Chronicle of Higher Education.RetrievedJune 5,2015.

- ^Alexander Sehmar (May 31, 2015)."Isis could obtain nuclear weapon from Pakistan, warns India".The Independent.Archived fromthe originalon June 2, 2015.RetrievedJune 5,2015.

- ^"About the Lifeboat Foundation".The Lifeboat Foundation.RetrievedApril 26,2013.

- ^Ashlee, Vance(July 20, 2010)."The Lifeboat Foundation: Battling Asteroids, Nanobots and A.I."New York Times.RetrievedJune 5,2015.

- "Global Catastrophic Risk Institute". gcrinstitute.org. Retrieved March 22, 2022.

- Meyer, Robinson (April 29, 2016). "Human Extinction Isn't That Unlikely". The Atlantic. Boston, Massachusetts: Emerson Collective. Retrieved April 30, 2016.

- "Global Challenges Foundation website". globalchallenges.org. Retrieved April 30, 2016.

- Nick Bilton (May 28, 2015). "Ava of 'Ex Machina' Is Just Sci-Fi (for Now)". New York Times. Retrieved June 5, 2015.

- "About Us". Center on Long-Term Risk. Retrieved May 17, 2020. "We currently focus on efforts to reduce the worst risks of astronomical suffering (s-risks) from emerging technologies, with a focus on transformative artificial intelligence."

- Hui, Sylvia (November 25, 2012). "Cambridge to study technology's risks to humans". Associated Press. Archived from the original on December 1, 2012. Retrieved January 30, 2012.

- Scott Barrett (2014). Environment and Development Economics: Essays in Honour of Sir Partha Dasgupta. Oxford University Press. p. 112. ISBN 9780199677856. Retrieved June 5, 2015.

- "Millennium Alliance for Humanity & The Biosphere". Millennium Alliance for Humanity & The Biosphere. Retrieved June 5, 2015.

- Guruprasad Madhavan (2012). Practicing Sustainability. Springer Science & Business Media. p. 43. ISBN 9781461443483. Retrieved June 5, 2015.

- "Center for International Security and Cooperation". Center for International Security and Cooperation. Retrieved June 5, 2015.

- Anderson, Nick (February 28, 2019). "Georgetown launches think tank on security and emerging technology". Washington Post. Retrieved March 12, 2019.

- "Global Alert and Response (GAR)". World Health Organization. Archived from the original on February 16, 2003. Retrieved June 5, 2015.

- Kelley Lee (2013). Historical Dictionary of the World Health Organization. Rowman & Littlefield. p. 92. ISBN 9780810878587. Retrieved June 5, 2015.

- "USAID Emerging Pandemic Threats Program". USAID. Archived from the original on October 22, 2014. Retrieved June 5, 2015.

- "Global Security". Lawrence Livermore National Laboratory. Archived from the original on December 27, 2007. Retrieved June 5, 2015.

Further reading

- Avin, Shahar; Wintle, Bonnie C.; Weitzdörfer, Julius; Ó hÉigeartaigh, Seán S.; Sutherland, William J.; Rees, Martin J. (2018). "Classifying global catastrophic risks". Futures. 102: 20–26. doi:10.1016/j.futures.2018.02.001.

- Corey S. Powell (2000) "Twenty ways the world could end suddenly", Discover Magazine

- Currie, Adrian; Ó hÉigeartaigh, Seán (2018). "Working together to face humanity's greatest threats: Introduction to the Future of Research on Catastrophic and Existential Risk". Futures. 102: 1–5. doi:10.1016/j.futures.2018.07.003. hdl:10871/35764.

- Derrick Jensen (2006) Endgame, ISBN 1-58322-730-X.

- Donella Meadows (1972) The Limits to Growth, ISBN 0-87663-165-0.

- Edward O. Wilson (2003) The Future of Life, ISBN 0-679-76811-4.

- Holt, Jim (February 25, 2021). "The Power of Catastrophic Thinking". The New York Review of Books. Vol. LXVIII, no. 3. pp. 26–29. p. 28: "Whether you are searching for a cure for cancer, or pursuing a scholarly or artistic career, or engaged in establishing more just institutions, a threat to the future of humanity is also a threat to the significance of what you do."

- Huesemann, Michael H., and Joyce A. Huesemann (2011) Technofix: Why Technology Won't Save Us or the Environment, Chapter 6, "Sustainability or Collapse", New Society Publishers, Gabriola Island, British Columbia, Canada, 464 pages, ISBN 0865717044.

- Jared Diamond (2005 and 2011) Collapse: How Societies Choose to Fail or Succeed, Penguin Books, ISBN 9780241958681.

- Jean-Francois Rischard (2003) High Noon 20 Global Problems, 20 Years to Solve Them, ISBN 0-465-07010-8.

- Joel Garreau (2005) Radical Evolution, ISBN 978-0385509657.

- John A. Leslie (1996) The End of the World, ISBN 0-415-14043-9.

- Joseph Tainter (1990) The Collapse of Complex Societies, Cambridge University Press, Cambridge, UK, ISBN 9780521386739.

- Marshall Brain (2020) The Doomsday Book: The Science Behind Humanity's Greatest Threats, Union Square, ISBN 9781454939962.

- Martin Rees (2004) Our Final Hour: A Scientist's warning: How Terror, Error, and Environmental Disaster Threaten Humankind's Future in This Century—On Earth and Beyond, ISBN 0-465-06863-4.

- Rhodes, Catherine (2024). Managing Extreme Technological Risk. World Scientific. doi:10.1142/q0438. ISBN 978-1-80061-481-9.

- Roger-Maurice Bonnet and Lodewijk Woltjer (2008) Surviving 1,000 Centuries Can We Do It? Springer-Praxis Books.

- Taggart, Gabel (2023). "Taking stock of systems for organizing existential and global catastrophic risks: Implications for policy". Global Policy. 14 (3): 489–499. doi:10.1111/1758-5899.13230.

- Toby Ord (2020) The Precipice - Existential Risk and the Future of Humanity, Bloomsbury Publishing, ISBN 9781526600219.

- Turchin, Alexey; Denkenberger, David (2018). "Global catastrophic and existential risks communication scale". Futures. 102: 27–38. doi:10.1016/j.futures.2018.01.003.

- Walsh, Bryan (2019). End Times: A Brief Guide to the End of the World. Hachette Books. ISBN 978-0275948023.

External links

- "Are we on the road to civilisation collapse?". BBC. February 19, 2019.

- MacAskill, William (August 5, 2022). "The Case for Longtermism". The New York Times.

- "What a way to go" from The Guardian. Ten scientists name the biggest dangers to Earth and assess the chances they will happen. April 14, 2005.

- Humanity under threat from perfect storm of crises – study. The Guardian. February 6, 2020.

- Annual Reports on Global Risk by the Global Challenges Foundation

- Center on Long-Term Risk

- Global Catastrophic Risk Policy. Archived August 11, 2019, at the Wayback Machine

- Stephen Petranek: 10 ways the world could end, a TED talk