Information retrieval

Information retrieval (IR) in computing and information science is the task of identifying and retrieving information system resources that are relevant to an information need. The information need can be specified in the form of a search query. In the case of document retrieval, queries can be based on full-text or other content-based indexing. Information retrieval is the science[1] of searching for information in a document, searching for documents themselves, and also searching for the metadata that describes data, and for databases of texts, images or sounds.

Automated information retrieval systems are used to reduce what has been called information overload. An IR system is a software system that provides access to books, journals and other documents; it also stores and manages those documents. Web search engines are the most visible IR applications.

Overview

An information retrieval process begins when a user enters a query into the system. Queries are formal statements of information needs, for example search strings in web search engines. In information retrieval, a query does not uniquely identify a single object in the collection. Instead, several objects may match the query, perhaps with different degrees of relevance.

An object is an entity that is represented by information in a content collection or database. User queries are matched against the database information. However, as opposed to classical SQL queries of a database, in information retrieval the results returned may or may not match the query, so results are typically ranked. This ranking of results is a key difference of information retrieval searching compared to database searching.[2]

Depending on the application, the data objects may be, for example, text documents, images,[3] audio,[4] mind maps[5] or videos. Often the documents themselves are not kept or stored directly in the IR system, but are instead represented in the system by document surrogates or metadata.

Most IR systems compute a numeric score on how well each object in the database matches the query, and rank the objects according to this value. The top ranking objects are then shown to the user. The process may then be iterated if the user wishes to refine the query.[6]
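The score-and-rank loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular system: the scoring rule (a count of query-term occurrences), the `score` and `rank` helpers, and the three-document collection are all hypothetical.

```python
from collections import Counter


def score(query: str, document: str) -> int:
    """Toy relevance score: total occurrences of the query terms in the document."""
    term_counts = Counter(document.lower().split())
    return sum(term_counts[term] for term in set(query.lower().split()))


def rank(query: str, collection: dict[str, str], k: int = 3) -> list[tuple[str, int]]:
    """Score every object, drop objects with a zero score, and return the top k."""
    scored = [(doc_id, score(query, text)) for doc_id, text in collection.items()]
    matches = [pair for pair in scored if pair[1] > 0]
    # Sort by descending score; ties are broken by document id for determinism.
    return sorted(matches, key=lambda pair: (-pair[1], pair[0]))[:k]


# Hypothetical three-document collection (assumption for illustration only).
collection = {
    "d1": "information retrieval ranks documents by relevance",
    "d2": "a database query returns exact matches",
    "d3": "retrieval of relevant information from documents",
}

top = rank("information retrieval", collection)
```

Refining the query, as in the iteration step, amounts to calling `rank` again with a different query string against the same collection.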

History

there is... a machine called the Univac... whereby letters and figures are coded as a pattern of magnetic spots on a long steel tape. By this means the text of a document, preceded by its subject code symbol, can be recorded... the machine... automatically selects and types out those references which have been coded in any desired way at a rate of 120 words a minute

— J. E. Holmstrom, 1948

The idea of using computers to search for relevant pieces of information was popularized in the article As We May Think by Vannevar Bush in 1945.[7] It would appear that Bush was inspired by patents for a 'statistical machine' – filed by Emanuel Goldberg in the 1920s and 1930s – that searched for documents stored on film.[8] The first description of a computer searching for information was given by Holmstrom in 1948,[9] in an early mention of the Univac computer. Automated information retrieval systems were introduced in the 1950s: one even featured in the 1957 romantic comedy Desk Set. In the 1960s, the first large information retrieval research group was formed by Gerard Salton at Cornell. By the 1970s several different retrieval techniques had been shown to perform well on small text corpora such as the Cranfield collection (several thousand documents).[7] Large-scale retrieval systems, such as the Lockheed Dialog system, came into use early in the 1970s.

In 1992, the US Department of Defense, along with the National Institute of Standards and Technology (NIST), cosponsored the Text Retrieval Conference (TREC) as part of the TIPSTER text program. Its aim was to support the information retrieval community by supplying the infrastructure needed to evaluate text retrieval methodologies on a very large text collection. This catalyzed research on methods that scale to huge corpora. The introduction of web search engines has boosted the need for very large scale retrieval systems even further.

Applications

Areas where information retrieval techniques are employed include (the entries are in alphabetical order within each category):

General applications

- Digital libraries

- Information filtering

- Media search

- Blog search

- Image retrieval

- 3D retrieval

- Music retrieval

- News search

- Speech retrieval

- Video retrieval

- Search engines

Domain-specific applications

- Expert search finding

- Genomic information retrieval

- Geographic information retrieval

- Information retrieval for chemical structures

- Information retrieval in software engineering

- Legal information retrieval

- Vertical search

Other retrieval methods

Methods/Techniques in which information retrieval techniques are employed include:

- Adversarial information retrieval

- Automatic summarization

- Compound term processing

- Cross-lingual retrieval

- Document classification

- Spam filtering

- Question answering

Model types

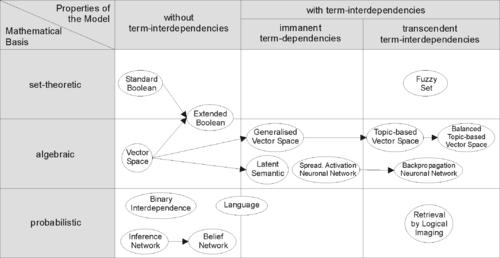

In order to effectively retrieve relevant documents by IR strategies, the documents are typically transformed into a suitable representation. Each retrieval strategy incorporates a specific model for its document representation purposes. The picture on the right illustrates the relationship of some common models. In the picture, the models are categorized according to two dimensions: the mathematical basis and the properties of the model.

First dimension: mathematical basis

- Set-theoretic models represent documents as sets of words or phrases. Similarities are usually derived from set-theoretic operations on those sets.

- Algebraic models represent documents and queries usually as vectors, matrices, or tuples. The similarity of the query vector and document vector is represented as a scalar value.

- Probabilistic models treat the process of document retrieval as a probabilistic inference. Similarities are computed as probabilities that a document is relevant for a given query. Probabilistic theorems like Bayes' theorem are often used in these models.

  - Binary Independence Model

  - Probabilistic relevance model, on which the Okapi (BM25) relevance function is based

  - Uncertain inference

  - Language models

  - Divergence-from-randomness model

  - Latent Dirichlet allocation

- Feature-based retrieval models view documents as vectors of values of feature functions (or just features) and seek the best way to combine these features into a single relevance score, typically by learning to rank methods. Feature functions are arbitrary functions of document and query, and as such can easily incorporate almost any other retrieval model as just another feature.
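To make the contrast between the first two families concrete, the sketch below scores the same query and document pair in two ways: a set-theoretic similarity (the Jaccard coefficient over term sets) and an algebraic one (the cosine of the angle between term-count vectors). Both measures are chosen here purely for illustration; the function names, whitespace tokenization and sample strings are assumptions, and real systems typically add weighting such as tf–idf.

```python
import math
from collections import Counter


def jaccard(query: str, document: str) -> float:
    """Set-theoretic similarity: size of the term-set overlap over the union."""
    q, d = set(query.lower().split()), set(document.lower().split())
    return len(q & d) / len(q | d) if q | d else 0.0


def cosine(query: str, document: str) -> float:
    """Algebraic similarity: cosine between raw term-count vectors."""
    q, d = Counter(query.lower().split()), Counter(document.lower().split())
    dot = sum(q[term] * d[term] for term in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
        sum(v * v for v in d.values())
    )
    return dot / norm if norm else 0.0


# Hypothetical query and document (assumptions for illustration only).
query = "text retrieval"
document = "text retrieval and text indexing"

set_score = jaccard(query, document)    # 2 shared terms out of 4 distinct terms
vector_score = cosine(query, document)  # the repeated "text" affects the vector, not the set
```

Either value is a single scalar per query and document pair, so it could also serve as one feature among many in a feature-based model.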

Second dimension: properties of the model

- Models without term-interdependencies treat different terms/words as independent. This fact is usually represented in vector space models by the orthogonality assumption of term vectors or in probabilistic models by an independency assumption for term variables.

- Models with immanent term interdependencies allow a representation of interdependencies between terms. However, the degree of the interdependency between two terms is defined by the model itself. It is usually directly or indirectly derived (e.g. by dimensional reduction) from the co-occurrence of those terms in the whole set of documents.

- Models with transcendent term interdependencies allow a representation of interdependencies between terms, but they do not specify how the interdependency between two terms is defined. They rely on an external source for the degree of interdependency between two terms (for example, a human or sophisticated algorithms).

Performance and correctness measures

The evaluation of an information retrieval system is the process of assessing how well a system meets the information needs of its users. In general, measurement considers a collection of documents to be searched and a search query. Traditional evaluation metrics, designed for Boolean retrieval or top-k retrieval, include precision and recall. All measures assume a ground truth notion of relevance: every document is known to be either relevant or non-relevant to a particular query. In practice, queries may be ill-posed and there may be different shades of relevance.
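As a minimal sketch of the two metrics named above: precision is the fraction of retrieved documents that are relevant, and recall is the fraction of relevant documents that were retrieved. The helper name `precision_recall`, the document ids and the relevance judgments below are hypothetical, and relevance is treated as binary, matching the ground-truth assumption.

```python
def precision_recall(retrieved: list[str], relevant: set[str]) -> tuple[float, float]:
    """Return (precision, recall) for one query under binary relevance judgments."""
    retrieved_set = set(retrieved)
    hits = len(retrieved_set & relevant)
    precision = hits / len(retrieved_set) if retrieved_set else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical ranked output and ground truth (assumptions for illustration only).
retrieved = ["d3", "d1", "d7", "d4"]
relevant = {"d1", "d3", "d5"}

# Whole result set, as in Boolean retrieval.
precision, recall = precision_recall(retrieved, relevant)

# Top-k retrieval with k = 2: only the two highest-ranked results are judged.
precision_at_2, recall_at_2 = precision_recall(retrieved[:2], relevant)
```

In this toy case, cutting the list to the top two results raises precision without changing recall, because both relevant hits already sit at the top of the ranking.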

Timeline

- Before the 1900s

  - 1801: Joseph Marie Jacquard invents the Jacquard loom, the first machine to use punched cards to control a sequence of operations.

  - 1880s: Herman Hollerith invents an electro-mechanical data tabulator using punch cards as a machine readable medium.

  - 1890: Hollerith cards, keypunches and tabulators used to process the 1890 US Census data.

- 1920s–1930s

  - Emanuel Goldberg submits patents for his "Statistical Machine", a document search engine that used photoelectric cells and pattern recognition to search the metadata on rolls of microfilmed documents.

- 1940s–1950s

  - late 1940s: The US military confronted problems of indexing and retrieval of wartime scientific research documents captured from Germans.

  - 1945: Vannevar Bush's As We May Think appeared in Atlantic Monthly.

  - 1947: Hans Peter Luhn (research engineer at IBM since 1941) began work on a mechanized punch card-based system for searching chemical compounds.

  - 1950s: Growing concern in the US for a "science gap" with the USSR motivated, encouraged funding and provided a backdrop for mechanized literature searching systems (Allen Kent et al.) and the invention of the citation index by Eugene Garfield.

  - 1950: The term "information retrieval" was coined by Calvin Mooers.[10]

  - 1951: Philip Bagley conducted the earliest experiment in computerized document retrieval in a master's thesis at MIT.[11]

  - 1955: Allen Kent joined Case Western Reserve University, and eventually became associate director of the Center for Documentation and Communications Research. That same year, Kent and colleagues published a paper in American Documentation describing the precision and recall measures as well as detailing a proposed "framework" for evaluating an IR system which included statistical sampling methods for determining the number of relevant documents not retrieved.[12]

  - 1958: The International Conference on Scientific Information in Washington, DC, included consideration of IR systems as a solution to problems identified. See: Proceedings of the International Conference on Scientific Information, 1958 (National Academy of Sciences, Washington, DC, 1959).

  - 1959: Hans Peter Luhn published "Auto-encoding of documents for information retrieval".

- 1960s

  - early 1960s: Gerard Salton began work on IR at Harvard, later moved to Cornell.

  - 1960: Melvin Earl Maron and John Lary Kuhns[13] published "On relevance, probabilistic indexing, and information retrieval" in the Journal of the ACM 7(3):216–244, July 1960.

  - 1962:

    - Cyril W. Cleverdon published early findings of the Cranfield studies, developing a model for IR system evaluation. See: Cyril W. Cleverdon, "Report on the Testing and Analysis of an Investigation into the Comparative Efficiency of Indexing Systems". Cranfield Collection of Aeronautics, Cranfield, England, 1962.

    - Kent published Information Analysis and Retrieval.

  - 1963:

    - Weinberg report "Science, Government and Information" gave a full articulation of the idea of a "crisis of scientific information". The report was named after Dr. Alvin Weinberg.

    - Joseph Becker and Robert M. Hayes published text on information retrieval. Becker, Joseph; Hayes, Robert Mayo. Information storage and retrieval: tools, elements, theories. New York, Wiley (1963).

  - 1964:

    - Karen Spärck Jones finished her thesis at Cambridge, Synonymy and Semantic Classification, and continued work on computational linguistics as it applies to IR.

    - The National Bureau of Standards sponsored a symposium titled "Statistical Association Methods for Mechanized Documentation". Several highly significant papers were presented, including what is believed to be G. Salton's first published reference to the SMART system.

  - mid-1960s:

    - National Library of Medicine developed MEDLARS (Medical Literature Analysis and Retrieval System), the first major machine-readable database and batch-retrieval system.

    - Project Intrex at MIT.

  - 1965: J. C. R. Licklider published Libraries of the Future.

  - 1966: Don Swanson was involved in studies at University of Chicago on Requirements for Future Catalogs.

  - late 1960s: F. Wilfrid Lancaster completed evaluation studies of the MEDLARS system and published the first edition of his text on information retrieval.

  - 1968:

    - Gerard Salton published Automatic Information Organization and Retrieval.

    - John W. Sammon, Jr.'s RADC Tech report "Some Mathematics of Information Storage and Retrieval..." outlined the vector model.

  - 1969: Sammon's "A nonlinear mapping for data structure analysis" (IEEE Transactions on Computers) was the first proposal for visualization interface to an IR system.

- 1970s

  - early 1970s:

    - First online systems: NLM's AIM-TWX, MEDLINE; Lockheed's Dialog; SDC's ORBIT.

    - Theodor Nelson, promoting the concept of hypertext, published Computer Lib/Dream Machines.

  - 1971: Nicholas Jardine and Cornelis J. van Rijsbergen published "The use of hierarchic clustering in information retrieval", which articulated the "cluster hypothesis".[14]

  - 1975: Three highly influential publications by Salton fully articulated his vector processing framework and term discrimination model.

  - 1978: The first ACM SIGIR conference.

  - 1979: C. J. van Rijsbergen published Information Retrieval (Butterworths). Heavy emphasis on probabilistic models.

  - 1979: Tamas Doszkocs implemented the CITE natural language user interface for MEDLINE at the National Library of Medicine. The CITE system supported free form query input, ranked output and relevance feedback.[15]

- 1980s

  - 1980: First international ACM SIGIR conference, joint with British Computer Society IR group in Cambridge.

  - 1982: Nicholas J. Belkin, Robert N. Oddy, and Helen M. Brooks proposed the ASK (Anomalous State of Knowledge) viewpoint for information retrieval. This was an important concept, though their automated analysis tool proved ultimately disappointing.

  - 1983: Salton (and Michael J. McGill) published Introduction to Modern Information Retrieval (McGraw-Hill), with heavy emphasis on vector models.

  - 1985: David Blair and Bill Maron published "An Evaluation of Retrieval Effectiveness for a Full-Text Document-Retrieval System".

  - mid-1980s: Efforts to develop end-user versions of commercial IR systems.

  - 1985–1993: Key papers on and experimental systems for visualization interfaces.

    - Work by Donald B. Crouch, Robert R. Korfhage, Matthew Chalmers, Anselm Spoerri and others.

  - 1989: First World Wide Web proposals by Tim Berners-Lee at CERN.

- 1990s

  - 1992: First TREC conference.

  - 1997: Publication of Korfhage's Information Storage and Retrieval[16] with emphasis on visualization and multi-reference point systems.

  - 1999: Publication of Ricardo Baeza-Yates and Berthier Ribeiro-Neto's Modern Information Retrieval by Addison Wesley, the first book that attempts to cover all IR.

  - late 1990s: Web search engines implemented many features formerly found only in experimental IR systems. Search engines became the most common and maybe best instantiation of IR models.

Major conferences

- SIGIR: Conference on Research and Development in Information Retrieval

- ECIR: European Conference on Information Retrieval

- CIKM: Conference on Information and Knowledge Management

- WWW: International World Wide Web Conference

- WSDM: Conference on Web Search and Data Mining

- ICTIR: International Conference on Theory of Information Retrieval

See also

- Adversarial information retrieval – Information retrieval strategies in datasets

- Computer memory – Computer component that stores information for immediate use

- Controlled vocabulary – Method of organizing knowledge

- Cross-language information retrieval – Retrieval of information in different languages

- Data mining – Process of extracting and discovering patterns in large data sets

- Data retrieval – Way to obtain data from a database

- European Summer School in Information Retrieval – ESSIR promotes research, innovation, and development of information access systems by educating junior and senior researchers, students, professionals, and developers on the latest developments in the field, both methodological and technological.

- Human–computer information retrieval (HCIR)

- Information extraction – Machine reading of unstructured documents

- Information seeking – Process or activity of attempting to obtain information in both human and technological contexts

  - Information seeking § Compared to information retrieval

  - Collaborative information seeking

  - Social information seeking – Field of research that involves studying situations, motivations, and methods for people seeking and sharing information in participatory online social sites

- Information Retrieval Facility – Organization in Vienna, Austria 2006–2012

- Knowledge visualization – Set of techniques for creating images, diagrams, or animations to communicate a message

- Multimedia information retrieval

- Personal information management – Tools and systems for managing one's own data

- Pearl growing – Type of search strategy

- Query understanding – Search engine processing step

- Relevance (information retrieval) – Measure of a document's applicability to a given subject or search query

- Relevance feedback – Type of feedback

- Rocchio classification – A classification model in machine learning based on centroids

- Search engine indexing – Method for data management

- Special Interest Group on Information Retrieval – Subgroup of the Association for Computing Machinery

- Subject indexing – Classifying a document by index terms

- Temporal information retrieval – Area of research related to information retrieval centered on timeliness

- tf–idf – Estimate of the importance of a word in a document

- XML retrieval – Content-based retrieval of XML documents

- Web mining – Process of extracting and discovering patterns in large data sets

References

- [1] Luk, R. W. P. (2022). "Why is information retrieval a scientific discipline?". Foundations of Science. 27 (2): 427–453. doi:10.1007/s10699-020-09685-x. hdl:10397/94873. S2CID 220506422.

- [2] Jansen, B. J.; Rieh, S. (2010). "The Seventeen Theoretical Constructs of Information Searching and Information Retrieval". Archived 2016-03-04 at the Wayback Machine. Journal of the American Society for Information Sciences and Technology. 61 (8): 1517–1534.

- [3] Goodrum, Abby A. (2000). "Image Information Retrieval: An Overview of Current Research". Informing Science. 3 (2).

- [4] Foote, Jonathan (1999). "An overview of audio information retrieval". Multimedia Systems. 7: 2–10. CiteSeerX 10.1.1.39.6339. doi:10.1007/s005300050106. S2CID 2000641.

- [5] Beel, Jöran; Gipp, Bela; Stiller, Jan-Olaf (2009). Information Retrieval On Mind Maps - What Could It Be Good For?. Proceedings of the 5th International Conference on Collaborative Computing: Networking, Applications and Worksharing (CollaborateCom'09). Washington, DC: IEEE. Archived from the original on 2011-05-13. Retrieved 2012-03-13.

- [6] Frakes, William B.; Baeza-Yates, Ricardo (1992). Information Retrieval Data Structures & Algorithms. Prentice-Hall, Inc. ISBN 978-0-13-463837-9. Archived from the original on 2013-09-28.

- [7] Singhal, Amit (2001). "Modern Information Retrieval: A Brief Overview" (PDF). Bulletin of the IEEE Computer Society Technical Committee on Data Engineering. 24 (4): 35–43.

- [8] Sanderson, Mark; Croft, W. Bruce (2012). "The History of Information Retrieval Research". Proceedings of the IEEE. 100: 1444–1451. doi:10.1109/jproc.2012.2189916.

- [9] Holmstrom, J. E. (1948). "Section III. Opening Plenary Session". The Royal Society Scientific Information Conference, 21 June-2 July 1948: Report and Papers Submitted: 85.

- [10] Mooers, Calvin N.; The Theory of Digital Handling of Non-numerical Information and its Implications to Machine Economics (Zator Technical Bulletin No. 48), cited in Fairthorne, R. A. (1958). "Automatic Retrieval of Recorded Information". The Computer Journal. 1 (1): 37. doi:10.1093/comjnl/1.1.36.

- [11] Doyle, Lauren; Becker, Joseph (1975). Information Retrieval and Processing. Melville. 410 pp. ISBN 978-0-471-22151-7.

- [12] Perry, James W.; Kent, Allen; Berry, Madeline M. (1955). "Machine literature searching X. Machine language; factors underlying its design and development". American Documentation. 6 (4): 242–254. doi:10.1002/asi.5090060411.

- [13] Maron, Melvin E. (2008). "An Historical Note on the Origins of Probabilistic Indexing" (PDF). Information Processing and Management. 44 (2): 971–972. doi:10.1016/j.ipm.2007.02.012.

- [14] Jardine, N.; van Rijsbergen, C. J. (December 1971). "The use of hierarchic clustering in information retrieval". Information Storage and Retrieval. 7 (5): 217–240. doi:10.1016/0020-0271(71)90051-9.

- [15] Doszkocs, T. E.; Rapp, B. A. (1979). "Searching MEDLINE in English: a Prototype User Interface with Natural Language Query, Ranked Output, and relevance feedback". In: Proceedings of the ASIS Annual Meeting, 16: 131–139.

- [16] Korfhage, Robert R. (1997). Information Storage and Retrieval. Wiley. 368 pp. ISBN 978-0-471-14338-3.

Further reading

- Ricardo Baeza-Yates, Berthier Ribeiro-Neto. Modern Information Retrieval: The Concepts and Technology behind Search (second edition). Archived 2017-09-18 at the Wayback Machine. Addison-Wesley, UK, 2011.

- Stefan Büttcher, Charles L. A. Clarke, and Gordon V. Cormack. Information Retrieval: Implementing and Evaluating Search Engines. Archived 2020-10-05 at the Wayback Machine. MIT Press, Cambridge, Massachusetts, 2010.

- "Information Retrieval System". Library & Information Science Network. 24 April 2015. Archived from the original on 11 May 2020. Retrieved 3 May 2020.

- Christopher D. Manning, Prabhakar Raghavan, and Hinrich Schütze. Introduction to Information Retrieval. Cambridge University Press, 2008.

- Yeo, ShinJoung. Behind the Search Box: Google and the Global Internet Industry. U of Illinois Press, 2023. ISBN-10: 0252087127.

External links

- ACM SIGIR: Information Retrieval Special Interest Group

- BCS IRSG: British Computer Society – Information Retrieval Specialist Group

- Text Retrieval Conference (TREC)

- Forum for Information Retrieval Evaluation (FIRE)

- Information Retrieval (online book) by C. J. van Rijsbergen

- Information Retrieval Wiki (archived 2015-11-24 at the Wayback Machine)

- Information Retrieval Facility (archived 2008-05-22 at the Wayback Machine)

- TREC report on information retrieval evaluation techniques

- How eBay measures search relevance

- Information retrieval performance evaluation tool @ Athena Research Centre