Lagrange multiplier

In mathematical optimization, the method of Lagrange multipliers is a strategy for finding the local maxima and minima of a function subject to equation constraints (i.e., subject to the condition that one or more equations have to be satisfied exactly by the chosen values of the variables).[1] It is named after the mathematician Joseph-Louis Lagrange.

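Summary and rationale

The basic idea is to convert a constrained problem into a form such that the derivative test of an unconstrained problem can still be applied. The relationship between the gradient of the function and gradients of the constraints rather naturally leads to a reformulation of the original problem, known as the Lagrangian function or Lagrangian.[2] In the general case, the Lagrangian is defined as

$$\mathcal{L}(x, \lambda) \equiv f(x) + \langle \lambda, g(x) \rangle$$

for functions $f, g$, where $\langle \cdot, \cdot \rangle$ denotes an inner product; $\lambda$ is called the Lagrange multiplier.

In simple cases, where the inner product is defined as the dot product, the Lagrangian is

$$\mathcal{L}(x, \lambda) \equiv f(x) + \lambda \cdot g(x).$$

The method can be summarized as follows: in order to find the maximum or minimum of a function $f$ subject to the equality constraint $g(x) = 0$, find the stationary points of $\mathcal{L}$ considered as a function of $x$ and the Lagrange multiplier $\lambda$. This means that all partial derivatives should be zero, including the partial derivative with respect to $\lambda$:[3]

$$\frac{\partial \mathcal{L}}{\partial x} = 0 \quad \text{and} \quad \frac{\partial \mathcal{L}}{\partial \lambda} = 0,$$

or equivalently

$$\frac{\partial f(x)}{\partial x} + \lambda \cdot \frac{\partial g(x)}{\partial x} = 0 \quad \text{and} \quad g(x) = 0.$$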

The solution corresponding to the original constrained optimization is always a saddle point of the Lagrangian function,[4][5] which can be identified among the stationary points from the definiteness of the bordered Hessian matrix.[6]

The great advantage of this method is that it allows the optimization to be solved without explicit parameterization in terms of the constraints. As a result, the method of Lagrange multipliers is widely used to solve challenging constrained optimization problems. Further, the method of Lagrange multipliers is generalized by the Karush–Kuhn–Tucker conditions, which can also take into account inequality constraints of the form $h(x) \le c$ for a given constant $c$.

Statement

The following is known as the Lagrange multiplier theorem.[7]

Let $f\colon \mathbb{R}^n \to \mathbb{R}$ be the objective function and $g\colon \mathbb{R}^n \to \mathbb{R}^c$ be the constraints function, both belonging to $C^1$ (that is, having continuous first derivatives). Let $x_\star$ be an optimal solution to the following optimization problem such that, for the matrix of partial derivatives $\bigl[\operatorname{D}g(x_\star)\bigr]_{j,k} = \frac{\partial g_j}{\partial x_k}$, $\operatorname{rank}(\operatorname{D}g(x_\star)) = c \le n$:

$$\text{maximize } f(x) \quad \text{subject to: } g(x) = 0.$$

Then there exists a unique Lagrange multiplier $\lambda_\star \in \mathbb{R}^c$ such that $\operatorname{D}f(x_\star) = \lambda_\star^{\mathsf{T}} \operatorname{D}g(x_\star)$. (By convention, $\lambda_\star$ is treated here as a column vector so that the dimensions match; it could equally be taken as a row vector, in which case no transpose is needed.)

The Lagrange multiplier theorem states that at any local maximum (or minimum) of the function evaluated under the equality constraints, if constraint qualification applies (explained below), then the gradient of the function (at that point) can be expressed as a linear combination of the gradients of the constraints (at that point), with the Lagrange multipliers acting as coefficients.[8] This is equivalent to saying that any direction perpendicular to all gradients of the constraints is also perpendicular to the gradient of the function, or equivalently that the directional derivative of the function is 0 in every feasible direction.

Single constraint

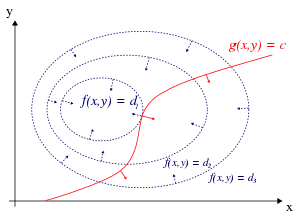

For the case of only one constraint and only two choice variables (as exemplified in Figure 1), consider the optimization problem

$$\underset{x,y}{\text{maximize}}\ f(x, y) \quad \text{subject to} \quad g(x, y) = 0.$$

(Sometimes an additive constant is shown separately rather than being included in $g$, in which case the constraint is written $g(x, y) = c$, as in Figure 1.) We assume that both $f$ and $g$ have continuous first partial derivatives. We introduce a new variable ($\lambda$) called a Lagrange multiplier (or Lagrange undetermined multiplier) and study the Lagrange function (or Lagrangian or Lagrangian expression) defined by

$$\mathcal{L}(x, y, \lambda) = f(x, y) + \lambda \cdot g(x, y),$$

where the $\lambda$ term may be either added or subtracted. If $f(x_0, y_0)$ is a maximum of $f(x, y)$ for the original constrained problem and $\nabla g(x_0, y_0) \neq 0$, then there exists $\lambda_0$ such that $(x_0, y_0, \lambda_0)$ is a stationary point for the Lagrange function (stationary points are those points where the first partial derivatives of $\mathcal{L}$ are zero). The assumption $\nabla g \neq 0$ is called constraint qualification. However, not all stationary points yield a solution of the original problem, as the method of Lagrange multipliers yields only a necessary condition for optimality in constrained problems.[9][10][11][12][13] Sufficient conditions for a minimum or maximum also exist, but if a particular candidate solution satisfies the sufficient conditions, it is only guaranteed that that solution is the best one locally, that is, it is better than any permissible nearby points. The global optimum can be found by comparing the values of the original objective function at the points satisfying the necessary and locally sufficient conditions.

The method of Lagrange multipliers relies on the intuition that at a maximum, $f(x, y)$ cannot be increasing in the direction of any neighboring point that also has $g = 0$. If it were, we could walk along $g = 0$ to get higher, meaning that the starting point wasn't actually the maximum. Viewed in this way, it is an exact analogue to testing if the derivative of an unconstrained function is 0, that is, we are verifying that the directional derivative is 0 in any relevant (viable) direction.

We can visualize contours of $f$ given by $f(x, y) = d$ for various values of $d$, and the contour of $g$ given by $g(x, y) = c$.

Suppose we walk along the contour line with $g = c$. We are interested in finding points where $f$ almost does not change as we walk, since these points might be maxima.

There are two ways this could happen:

- We could touch a contour line of $f$, since by definition $f$ does not change as we walk along its contour lines. This would mean that the tangents to the contour lines of $f$ and $g$ are parallel here.

- We have reached a "level" part of $f$, meaning that $f$ does not change in any direction.

To check the first possibility (we touch a contour line of $f$), notice that since the gradient of a function is perpendicular to the contour lines, the tangents to the contour lines of $f$ and $g$ are parallel if and only if the gradients of $f$ and $g$ are parallel. Thus we want points $(x, y)$ where $g(x, y) = c$ and

$$\nabla_{x,y} f = \lambda \, \nabla_{x,y} g$$

for some $\lambda$, where

$$\nabla_{x,y} f = \left( \frac{\partial f}{\partial x}, \frac{\partial f}{\partial y} \right), \qquad \nabla_{x,y} g = \left( \frac{\partial g}{\partial x}, \frac{\partial g}{\partial y} \right)$$

are the respective gradients. The constant $\lambda$ is required because although the two gradient vectors are parallel, the magnitudes of the gradient vectors are generally not equal. This constant is called the Lagrange multiplier. (In some conventions $\lambda$ is preceded by a minus sign.)

Notice that this method also solves the second possibility, that $f$ is level: if $f$ is level, then its gradient is zero, and setting $\lambda = 0$ is a solution regardless of $\nabla_{x,y} g$.

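To incorporate these conditions into one equation, we absorb the constant $c$ into $g$, so that the constraint reads $g(x, y) = 0$, introduce an auxiliary function

$$\mathcal{L}(x, y, \lambda) \equiv f(x, y) + \lambda \cdot g(x, y),$$

and solve

$$\nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) = 0.$$

Note that this amounts to solving three equations in three unknowns. This is the method of Lagrange multipliers.

Note that $\nabla_\lambda \mathcal{L}(x, y, \lambda) = 0$ implies $g(x, y) = 0$, as the partial derivative of $\mathcal{L}$ with respect to $\lambda$ is $g(x, y)$.

To summarize,

$$\nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) = 0 \iff \begin{cases} \nabla_{x,y} f(x, y) = -\lambda \, \nabla_{x,y} g(x, y) \\ g(x, y) = 0 \end{cases}$$

Because $\lambda \cdot g$ is added to $f$ in this auxiliary function, the multiplier appears with a minus sign in the gradient condition.

The method generalizes readily to functions on $n$ variables,

$$\nabla_{x_1, \dots, x_n, \lambda} \mathcal{L}(x_1, \dots, x_n, \lambda) = 0,$$

which amounts to solving $n + 1$ equations in $n + 1$ unknowns.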
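
As a rough illustration of "three equations in three unknowns", the sketch below applies Newton's method to the stationarity system of the Lagrangian. The objective $f = x + y$ and constraint $g = x^2 + y^2 - 1$ are borrowed from Example 1; the starting point, the iteration count and the helper names (`solve_3x3`, `newton`) are assumptions made for this example only.

```python
import math

def solve_3x3(a, b):
    """Solve the 3x3 linear system a @ d = b by Gaussian elimination with partial pivoting."""
    n = 3
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    d = [0.0] * n
    for r in reversed(range(n)):
        s = m[r][n] - sum(m[r][c] * d[c] for c in range(r + 1, n))
        d[r] = s / m[r][r]
    return d

def grad_lagrangian(x, y, lam):
    # L(x, y, lam) = x + y + lam * (x^2 + y^2 - 1)
    return [1 + 2 * lam * x, 1 + 2 * lam * y, x * x + y * y - 1]

def jacobian(x, y, lam):
    # Matrix of second partial derivatives of L with respect to (x, y, lam)
    return [[2 * lam, 0.0, 2 * x],
            [0.0, 2 * lam, 2 * y],
            [2 * x, 2 * y, 0.0]]

def newton(x, y, lam, steps=25):
    """Newton iteration on grad L = 0; it seeks stationary points, not extrema."""
    for _ in range(steps):
        rhs = [-v for v in grad_lagrangian(x, y, lam)]
        dx, dy, dlam = solve_3x3(jacobian(x, y, lam), rhs)
        x, y, lam = x + dx, y + dy, lam + dlam
    return x, y, lam

# Assumed starting point near the constrained maximum
x_s, y_s, lam_s = newton(1.0, 1.0, -1.0)
print(x_s, y_s, lam_s)
```

Newton's method is used here because it converges to stationary points of any type, which matters since the solutions are generally saddle points of the Lagrangian.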

The constrained extrema of $f$ are critical points of the Lagrangian $\mathcal{L}$, but they are not necessarily local extrema of $\mathcal{L}$ (see § Example 2 below).

One may reformulate the Lagrangian as a Hamiltonian, in which case the solutions are local minima for the Hamiltonian. This is done in optimal control theory, in the form of Pontryagin's minimum principle.

The fact that solutions of the method of Lagrange multipliers are not necessarily extrema of the Lagrangian also poses difficulties for numerical optimization. This can be addressed by minimizing the magnitude of the gradient of the Lagrangian, as these minima are the same as the zeros of the magnitude, as illustrated in Example 5: Numerical optimization.

Multiple constraints

The method of Lagrange multipliers can be extended to solve problems with multiple constraints using a similar argument. Consider a paraboloid subject to two line constraints that intersect at a single point. As the only feasible solution, this point is obviously a constrained extremum. However, the level set of $f$ is clearly not parallel to either constraint at the intersection point (see Figure 3); instead, the gradient of $f$ is a linear combination of the two constraints' gradients. In the case of multiple constraints, that will be what we seek in general: the method of Lagrange seeks points at which the gradient of $f$ is not necessarily a multiple of any single constraint's gradient, but a linear combination of all the constraints' gradients.

Concretely, suppose we have $M$ constraints and are walking along the set of points satisfying $g_i(\mathbf{x}) = 0$, $i = 1, \dots, M$. Every point $\mathbf{x}$ on the contour of a given constraint function $g_i$ has a space of allowable directions: the space of vectors perpendicular to $\nabla g_i(\mathbf{x})$. The set of directions that are allowed by all constraints is thus the space of directions perpendicular to all of the constraints' gradients. Denote this space of allowable moves by $A$ and denote the span of the constraints' gradients by $S$. Then $A = S^{\perp}$, the space of vectors perpendicular to every element of $S$.

We are still interested in finding points where $f$ does not change as we walk, since these points might be (constrained) extrema. We therefore seek $\mathbf{x}$ such that any allowable direction of movement away from $\mathbf{x}$ is perpendicular to $\nabla f(\mathbf{x})$ (otherwise we could increase $f$ by moving along that allowable direction). In other words, $\nabla f(\mathbf{x}) \in A^{\perp} = S$. Thus there are scalars $\lambda_1, \lambda_2, \dots, \lambda_M$ such that

$$\nabla f(\mathbf{x}) = \sum_{k=1}^{M} \lambda_k \, \nabla g_k(\mathbf{x}) \quad \iff \quad \nabla f(\mathbf{x}) - \sum_{k=1}^{M} \lambda_k \, \nabla g_k(\mathbf{x}) = 0.$$

These scalars are the Lagrange multipliers. We now have $M$ of them, one for every constraint.

As before, we introduce an auxiliary function

$$\mathcal{L}(x_1, \dots, x_n, \lambda_1, \dots, \lambda_M) = f(x_1, \dots, x_n) - \sum_{k=1}^{M} \lambda_k \, g_k(x_1, \dots, x_n)$$

and solve

$$\nabla_{x_1, \dots, x_n, \lambda_1, \dots, \lambda_M} \mathcal{L} = 0 \iff \begin{cases} \nabla f(\mathbf{x}) - \sum_{k=1}^{M} \lambda_k \, \nabla g_k(\mathbf{x}) = 0 \\ g_1(\mathbf{x}) = \cdots = g_M(\mathbf{x}) = 0 \end{cases}$$

which amounts to solving $n + M$ equations in $n + M$ unknowns.

The constraint qualification assumption when there are multiple constraints is that the constraint gradients at the relevant point are linearly independent.
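
The paraboloid-and-two-lines picture can be made concrete with a small sketch. The specific choices here are assumptions for illustration: $f(x, y) = x^2 + y^2$, with line constraints $g_1 = x + 2y - 3 = 0$ and $g_2 = x - y = 0$, which meet at $(1, 1)$. Because $f$ is quadratic and the constraints are linear, the $n + M = 4$ stationarity equations of $\mathcal{L} = f - \lambda_1 g_1 - \lambda_2 g_2$ form a linear system, solved below with a hypothetical helper `solve_linear`.

```python
def solve_linear(a, b):
    """Solve a @ sol = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    sol = [0.0] * n
    for r in reversed(range(n)):
        s = m[r][n] - sum(m[r][c] * sol[c] for c in range(r + 1, n))
        sol[r] = s / m[r][r]
    return sol

# Unknowns ordered as (x, y, lam1, lam2).
system = [
    [2.0, 0.0, -1.0, -1.0],   # dL/dx: 2x - lam1 - lam2 = 0
    [0.0, 2.0, -2.0, 1.0],    # dL/dy: 2y - 2*lam1 + lam2 = 0
    [1.0, 2.0, 0.0, 0.0],     # g1:    x + 2y = 3
    [1.0, -1.0, 0.0, 0.0],    # g2:    x - y = 0
]
rhs = [0.0, 0.0, 3.0, 0.0]
x, y, lam1, lam2 = solve_linear(system, rhs)

# grad f should equal lam1 * grad g1 + lam2 * grad g2 at the solution
grad_f = (2 * x, 2 * y)
combo = (lam1 * 1 + lam2 * 1, lam1 * 2 + lam2 * (-1))
print((x, y), (lam1, lam2), grad_f, combo)
```

In this example both multipliers are nonzero, so the gradient of $f$ is a genuine linear combination of the two constraint gradients rather than a multiple of either one.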

Modern formulation via differentiable manifolds

The problem of finding the local maxima and minima subject to constraints can be generalized to finding local maxima and minima on a differentiable manifold $M$.[14] In what follows, it is not necessary that $M$ be a Euclidean space, or even a Riemannian manifold. All appearances of the gradient $\nabla$ (which depends on a choice of Riemannian metric) can be replaced with the exterior derivative $d$.

Single constraint

Let $M$ be a smooth manifold of dimension $m$. Suppose that we wish to find the stationary points $x$ of a smooth function $f\colon M \to \mathbb{R}$ when restricted to the submanifold $N$ defined by $g(x) = 0$, where $g\colon M \to \mathbb{R}$ is a smooth function for which 0 is a regular value.

Let $df$ and $dg$ be the exterior derivatives of $f$ and $g$. Stationarity for the restriction $f|_N$ at $x \in N$ means $d(f|_N)_x = 0$. Equivalently, the kernel $\ker(df_x)$ contains $T_x N = \ker(dg_x)$. In other words, $df_x$ and $dg_x$ are proportional 1-forms. For this it is necessary and sufficient that the following system of $\tfrac{1}{2} m (m - 1)$ equations holds:

$$df_x \wedge dg_x = 0 \in \Lambda^2(T_x^* M),$$

where $\wedge$ denotes the exterior product. The stationary points $x$ are the solutions of the above system of equations plus the constraint $g(x) = 0$. Note that the $\tfrac{1}{2} m (m - 1)$ equations are not independent, since the left-hand side of the equation belongs to the subvariety of $\Lambda^2(T_x^* M)$ consisting of decomposable elements.

In this formulation, it is not necessary to explicitly find the Lagrange multiplier, a number $\lambda$ such that $df_x = \lambda \cdot dg_x$.
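
To see the multiplier-free condition at work, here is a minimal sketch in ordinary Euclidean coordinates, where a 1-form at a point is represented by its list of components. The example functions (assumed, not from the text) are $f = x + y + z$ and $g = x^2 + y^2 + z^2 - 1$ on $\mathbb{R}^3$, so $m = 3$ and the wedge product has $\tfrac{1}{2} m (m - 1) = 3$ components.

```python
import math
from itertools import combinations

def wedge(df, dg):
    """Components (df ^ dg)_{ij} = df_i * dg_j - df_j * dg_i for i < j."""
    return [df[i] * dg[j] - df[j] * dg[i]
            for i, j in combinations(range(len(df)), 2)]

def d_f(p):
    # f(x, y, z) = x + y + z
    return [1.0, 1.0, 1.0]

def d_g(p):
    # g(x, y, z) = x^2 + y^2 + z^2 - 1
    return [2 * c for c in p]

s = 1 / math.sqrt(3)
stationary_point = (s, s, s)     # lies on the sphere; df and dg are proportional here
other_point = (1.0, 0.0, 0.0)    # lies on the sphere; df and dg are not proportional

w_stationary = wedge(d_f(stationary_point), d_g(stationary_point))
w_other = wedge(d_f(other_point), d_g(other_point))
print(w_stationary, w_other)
```

All three components vanish at the first point and not at the second, and no value of $\lambda$ was needed to tell the two apart.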

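Multiple constraints

Let $M$ and $f$ be as in the above section regarding the case of a single constraint. Rather than the function $g$ described there, now consider a smooth function $G\colon M \to \mathbb{R}^p$ ($p > 1$), with component functions $g_i\colon M \to \mathbb{R}$, for which $0 \in \mathbb{R}^p$ is a regular value. Let $N$ be the submanifold of $M$ defined by $G(x) = 0$.

The point $x$ is a stationary point of $f|_N$ if and only if $\ker(df_x)$ contains $\ker(dG_x)$. For convenience let $L_x = df_x$ and $K_x = dG_x$, where $dG$ denotes the tangent map or Jacobian $TM \to T\mathbb{R}^p$. The subspace $\ker(K_x)$ has dimension smaller than that of $\ker(L_x)$, namely $\dim(\ker(L_x)) = m - 1$ and $\dim(\ker(K_x)) = m - p$. The subspace $\ker(K_x)$ belongs to $\ker(L_x)$ if and only if $L_x \in T_x^* M$ belongs to the image of $K_x^*\colon \mathbb{R}^{p*} \to T_x^* M$. Computationally speaking, the condition is that $L_x$ belongs to the row space of the matrix of $K_x$, or equivalently the column space of the matrix of $K_x^*$ (the transpose). If $\omega_x \in \Lambda^p(T_x^* M)$ denotes the exterior product of the columns of the matrix of $K_x^*$, the stationary condition for $f|_N$ at $x$ becomes

$$L_x \wedge \omega_x = 0 \in \Lambda^{p+1}(T_x^* M).$$

Once again, in this formulation it is not necessary to explicitly find the Lagrange multipliers, the numbers $\lambda_1, \dots, \lambda_p$ such that

$$df_x = \sum_{i=1}^{p} \lambda_i \, d(g_i)_x.$$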

Interpretation of the Lagrange multipliers

In this section, we modify the constraint equations from the form $g_i(\mathbf{x}) = 0$ to the form $g_i(\mathbf{x}) = c_i$, where the $c_i$ are $m$ real constants that are considered to be additional arguments of the Lagrangian expression $\mathcal{L}$.

Often the Lagrange multipliers have an interpretation as some quantity of interest. For example, parametrising the constraint's contour line in this way, that is, writing the Lagrangian expression as

$$\begin{aligned} &\mathcal{L}(x_1, x_2, \ldots; \lambda_1, \lambda_2, \ldots; c_1, c_2, \ldots) \\ ={}& f(x_1, x_2, \ldots) + \lambda_1 (c_1 - g_1(x_1, x_2, \ldots)) + \lambda_2 (c_2 - g_2(x_1, x_2, \ldots)) + \cdots \end{aligned}$$

gives

$$\frac{\partial \mathcal{L}}{\partial c_k} = \lambda_k.$$

So, $\lambda_k$ is the rate of change of the quantity being optimized as a function of the constraint parameter. As examples, in Lagrangian mechanics the equations of motion are derived by finding stationary points of the action, the time integral of the difference between kinetic and potential energy. Thus, the force on a particle due to a scalar potential, $F = -\nabla V$, can be interpreted as a Lagrange multiplier determining the change in action (transfer of potential to kinetic energy) following a variation in the particle's constrained trajectory. In control theory this is formulated instead as costate equations.

Moreover, by the envelope theorem the optimal value of a Lagrange multiplier has an interpretation as the marginal effect of the corresponding constraint constant upon the optimal attainable value of the original objective function: if we denote values at the optimum with a star ($\star$), then it can be shown that

$$\frac{\mathrm{d} f\left(x_{1\star}(c_1, c_2, \dots), x_{2\star}(c_1, c_2, \dots), \dots\right)}{\mathrm{d} c_k} = \lambda_{\star k}.$$

For example, in economics the optimal profit to a player is calculated subject to a constrained space of actions, where a Lagrange multiplier is the change in the optimal value of the objective function (profit) due to the relaxation of a given constraint (e.g. through a change in income); in such a context $\lambda_{\star k}$ is the marginal cost of the constraint, and is referred to as the shadow price.[15]
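
The marginal-effect interpretation can be checked numerically on a small case. The sketch below assumes the problem of maximizing $f = x + y$ subject to $x^2 + y^2 = c$ with $c > 0$ (the problem of Example 1 with an adjustable constant), uses the form $\mathcal{L} = f + \lambda (c - g)$, and compares the multiplier at the optimum with a finite-difference estimate of $\mathrm{d}f_\star / \mathrm{d}c$. The function name `optimum` and the step `dc` are illustrative choices.

```python
import math

def optimum(c):
    """Constrained maximum of f = x + y on x^2 + y^2 = c, with its multiplier.

    Stationarity of L = x + y + lam * (c - x^2 - y^2) gives
    1 - 2*lam*x = 0 and 1 - 2*lam*y = 0, so x = y = sqrt(c / 2) at the maximum.
    """
    x = y = math.sqrt(c / 2)
    lam = 1 / (2 * x)
    return x + y, lam

c, dc = 2.0, 1e-6
f_star, lam_star = optimum(c)

# Central finite difference of the optimal value with respect to the constant c
slope = (optimum(c + dc)[0] - optimum(c - dc)[0]) / (2 * dc)
print(lam_star, slope)
```

Both numbers should agree closely, which is the shadow-price reading of the multiplier: relaxing the constraint by a small amount changes the best attainable value of $f$ by about $\lambda_\star$ times that amount.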

Sufficient conditions

Sufficient conditions for a constrained local maximum or minimum can be stated in terms of a sequence of principal minors (determinants of upper-left-justified sub-matrices) of the bordered Hessian matrix of second derivatives of the Lagrangian expression.[6][16]
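
As a small sketch of how such a test looks in the simplest setting, consider two variables and one constraint, where the bordered Hessian is a single 3×3 matrix and the standard rule is that a positive determinant indicates a constrained local maximum and a negative one a constrained local minimum. The code assumes the Lagrangian of Example 1, $\mathcal{L} = x + y + \lambda (x^2 + y^2 - 1)$, and its two stationary points; the function name is hypothetical.

```python
import math

def bordered_hessian_det(x, y, lam):
    """Determinant of [[0, gx, gy], [gx, Lxx, Lxy], [gy, Lxy, Lyy]]
    for L = x + y + lam * (x^2 + y^2 - 1) and g = x^2 + y^2 - 1."""
    gx, gy = 2 * x, 2 * y
    lxx, lxy, lyy = 2 * lam, 0.0, 2 * lam
    # Cofactor expansion along the first row (the leading zero term drops out)
    return -gx * (gx * lyy - lxy * gy) + gy * (gx * lxy - lxx * gy)

s = 1 / math.sqrt(2)
det_at_max = bordered_hessian_det(s, s, -s)    # stationary point with f = sqrt(2)
det_at_min = bordered_hessian_det(-s, -s, s)   # stationary point with f = -sqrt(2)
print(det_at_max, det_at_min)
```

With more variables or constraints, a whole sequence of leading principal minors has to be checked for the appropriate sign pattern, as described in the cited references.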

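Examples

Example 1

Suppose we wish to maximize $f(x, y) = x + y$ subject to the constraint $x^2 + y^2 = 1$. The feasible set is the unit circle, and the level sets of $f$ are diagonal lines (with slope −1), so we can see graphically that the maximum occurs at $\left( \tfrac{1}{\sqrt{2}}, \tfrac{1}{\sqrt{2}} \right)$ and that the minimum occurs at $\left( -\tfrac{1}{\sqrt{2}}, -\tfrac{1}{\sqrt{2}} \right)$.

For the method of Lagrange multipliers, the constraint is

$$g(x, y) = x^2 + y^2 - 1 = 0,$$

hence the Lagrangian function,

$$\begin{aligned} \mathcal{L}(x, y, \lambda) &= f(x, y) + \lambda \cdot g(x, y) \\ &= x + y + \lambda (x^2 + y^2 - 1), \end{aligned}$$

is a function that is equivalent to $f(x, y)$ when $g(x, y)$ is set to 0.

Now we can calculate the gradient:

$$\begin{aligned} \nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) &= \left( \frac{\partial \mathcal{L}}{\partial x}, \frac{\partial \mathcal{L}}{\partial y}, \frac{\partial \mathcal{L}}{\partial \lambda} \right) \\ &= \left( 1 + 2 \lambda x,\ 1 + 2 \lambda y,\ x^2 + y^2 - 1 \right), \end{aligned}$$

and therefore:

$$\nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) = 0 \iff \begin{cases} 1 + 2 \lambda x = 0 \\ 1 + 2 \lambda y = 0 \\ x^2 + y^2 - 1 = 0 \end{cases}$$

Notice that the last equation is the original constraint.

The first two equations yield

$$x = y = -\frac{1}{2\lambda}, \qquad \lambda \neq 0.$$

By substituting into the last equation we have:

$$\frac{1}{4\lambda^2} + \frac{1}{4\lambda^2} - 1 = 0,$$

so

$$\lambda = \pm \frac{1}{\sqrt{2}},$$

which implies that the stationary points of $\mathcal{L}$ are

$$\left( \tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2}, -\tfrac{1}{\sqrt{2}} \right), \qquad \left( -\tfrac{\sqrt{2}}{2}, -\tfrac{\sqrt{2}}{2}, \tfrac{1}{\sqrt{2}} \right).$$

Evaluating the objective function $f$ at these points yields

$$f\left( \tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2} \right) = \sqrt{2}, \qquad f\left( -\tfrac{\sqrt{2}}{2}, -\tfrac{\sqrt{2}}{2} \right) = -\sqrt{2}.$$

Thus the constrained maximum is $\sqrt{2}$ and the constrained minimum is $-\sqrt{2}$.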
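
The result can be cross-checked without multipliers by parametrizing the unit circle and scanning it, which is exactly the explicit parameterization the method is meant to avoid. This is only a sanity check under an assumed grid resolution `n`, not part of the method itself.

```python
import math

def f(x, y):
    return x + y

n = 100_000  # assumed number of sample points on the circle
thetas = [2 * math.pi * k / n for k in range(n)]
values = [f(math.cos(t), math.sin(t)) for t in thetas]

f_max, f_min = max(values), min(values)
t_max = thetas[values.index(f_max)]
t_min = thetas[values.index(f_min)]

print(f_max, (math.cos(t_max), math.sin(t_max)))
print(f_min, (math.cos(t_min), math.sin(t_min)))
```

The scan should land near $\pm\sqrt{2}$ at the two points found above, up to the grid resolution.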

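Example 2

Now we modify the objective function of Example 1 so that we minimize $f(x, y) = (x + y)^2$ instead of $f(x, y) = x + y$, again along the circle $g(x, y) = x^2 + y^2 - 1 = 0$. Now the level sets of $f$ are still lines of slope −1, and the points on the circle tangent to these level sets are again $\left( \tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2} \right)$ and $\left( -\tfrac{\sqrt{2}}{2}, -\tfrac{\sqrt{2}}{2} \right)$. These tangency points are maxima of $f$.

On the other hand, the minima occur on the level set for $f = 0$ (since by its construction $f$ cannot take negative values), at $\left( \tfrac{\sqrt{2}}{2}, -\tfrac{\sqrt{2}}{2} \right)$ and $\left( -\tfrac{\sqrt{2}}{2}, \tfrac{\sqrt{2}}{2} \right)$, where the level curves of $f$ are not tangent to the constraint. The condition that $\nabla_{x,y,\lambda} \left( f(x, y) + \lambda \cdot g(x, y) \right) = 0$ correctly identifies all four points as extrema; the minima are characterized by $\lambda = 0$ and the maxima by $\lambda = -2$.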
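
A quick way to confirm that all four points satisfy the stationarity condition with the stated multipliers is to evaluate the gradient of the Lagrangian directly. The sketch below assumes $\mathcal{L} = (x + y)^2 + \lambda (x^2 + y^2 - 1)$; the dictionary layout is only for readability.

```python
import math

def grad_L(x, y, lam):
    """Gradient of L = (x + y)^2 + lam * (x^2 + y^2 - 1) with respect to (x, y, lam)."""
    return (2 * (x + y) + 2 * lam * x,
            2 * (x + y) + 2 * lam * y,
            x * x + y * y - 1)

s = math.sqrt(2) / 2
candidates = {
    "maxima (lam = -2)": [(s, s, -2.0), (-s, -s, -2.0)],
    "minima (lam = 0)": [(s, -s, 0.0), (-s, s, 0.0)],
}

residuals = []
for label, points in candidates.items():
    for x, y, lam in points:
        r = max(abs(c) for c in grad_L(x, y, lam))
        residuals.append(r)
        print(label, (x, y), "f =", (x + y) ** 2, "residual =", r)
```

Each residual should be zero up to rounding, with $f = 2$ at the maxima and $f = 0$ at the minima.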

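Example 3

This example deals with more strenuous calculations, but it is still a single constraint problem.

Suppose one wants to find the maximum values of

$$f(x, y) = x^2 y$$

with the condition that the $x$- and $y$-coordinates lie on the circle around the origin with radius $\sqrt{3}$. That is, subject to the constraint

$$g(x, y) = x^2 + y^2 - 3 = 0.$$

As there is just a single constraint, there is a single multiplier, say $\lambda$.

The constraint $g(x, y)$ is identically zero on the circle of radius $\sqrt{3}$. Any multiple of $g(x, y)$ may be added to $f(x, y)$ leaving $f(x, y)$ unchanged in the region of interest (on the circle where our original constraint is satisfied).

Applying the ordinary Lagrange multiplier method yields

$$\begin{aligned} \mathcal{L}(x, y, \lambda) &= f(x, y) + \lambda \cdot g(x, y) \\ &= x^2 y + \lambda (x^2 + y^2 - 3), \end{aligned}$$

from which the gradient can be calculated:

$$\nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) = \left( 2xy + 2\lambda x,\ x^2 + 2\lambda y,\ x^2 + y^2 - 3 \right).$$

And therefore:

$$\nabla_{x,y,\lambda} \mathcal{L}(x, y, \lambda) = 0 \iff \begin{cases} x (y + \lambda) = 0 & \text{(i)} \\ x^2 = -2\lambda y & \text{(ii)} \\ x^2 + y^2 = 3 & \text{(iii)} \end{cases}$$

(iii) is just the original constraint. (i) implies $x = 0$ or $\lambda = -y$. If $x = 0$ then $y = \pm\sqrt{3}$ by (iii) and consequently $\lambda = 0$ from (ii). If $\lambda = -y$, substituting this into (ii) yields $x^2 = 2y^2$. Substituting this into (iii) and solving for $y$ gives $y = \pm 1$. Thus there are six critical points of $\mathcal{L}$:

$$(\sqrt{2}, 1, -1); \quad (-\sqrt{2}, 1, -1); \quad (\sqrt{2}, -1, 1); \quad (-\sqrt{2}, -1, 1); \quad (0, \sqrt{3}, 0); \quad (0, -\sqrt{3}, 0).$$

Evaluating the objective at these points, one finds that

$$f(\pm\sqrt{2}, 1) = 2; \quad f(\pm\sqrt{2}, -1) = -2; \quad f(0, \pm\sqrt{3}) = 0.$$

Therefore, the objective function attains the global maximum (subject to the constraints) at $(\pm\sqrt{2}, 1)$ and the global minimum at $(\pm\sqrt{2}, -1)$. The point $(0, \sqrt{3})$ is a local minimum of $f$ and $(0, -\sqrt{3})$ is a local maximum of $f$, as may be determined by consideration of the Hessian matrix of $\mathcal{L}(x, y, 0)$.

Note that while $(\sqrt{2}, 1, -1)$ is a critical point of $\mathcal{L}$, it is not a local extremum of $\mathcal{L}$. We have

$$\mathcal{L}\left( \sqrt{2} + \varepsilon, 1, -1 + \delta \right) = 2 + \delta \left( \varepsilon^2 + 2\sqrt{2}\, \varepsilon \right).$$

Given any neighbourhood of $(\sqrt{2}, 1, -1)$, one can choose a small positive $\varepsilon$ and a small $\delta$ of either sign to get $\mathcal{L}$ values both greater and less than 2. This can also be seen from the Hessian matrix of $\mathcal{L}$ evaluated at this point (or indeed at any of the critical points), which is an indefinite matrix. Each of the critical points of $\mathcal{L}$ is a saddle point of $\mathcal{L}$.[4]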
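
The six critical points and the saddle behaviour near $(\sqrt{2}, 1, -1)$ can be checked numerically. The sketch below assumes $\mathcal{L} = x^2 y + \lambda (x^2 + y^2 - 3)$; the perturbation sizes `eps` and `delta` are arbitrary small values chosen for illustration.

```python
import math

def L(x, y, lam):
    return x * x * y + lam * (x * x + y * y - 3)

def grad_L(x, y, lam):
    return (2 * x * y + 2 * lam * x, x * x + 2 * lam * y, x * x + y * y - 3)

r2, r3 = math.sqrt(2), math.sqrt(3)
critical = [(r2, 1, -1), (-r2, 1, -1), (r2, -1, 1), (-r2, -1, 1),
            (0, r3, 0), (0, -r3, 0)]

# Largest gradient component at each critical point (zero up to rounding)
residuals = [max(abs(c) for c in grad_L(*p)) for p in critical]
# Objective f = x^2 * y at each critical point
objective = [x * x * y for x, y, _ in critical]
print(residuals)
print(objective)

# Saddle check: perturb lam in both directions with a small positive shift in x
eps, delta = 1e-2, 1e-2
above = L(r2 + eps, 1, -1 + delta)
below = L(r2 + eps, 1, -1 - delta)
print(above, below)
```

The two perturbed values fall on either side of 2, in line with the expansion $2 + \delta (\varepsilon^2 + 2\sqrt{2}\,\varepsilon)$.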

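Example 4 – Entropy

Suppose we wish to find the discrete probability distribution on the points $\{x_1, x_2, \ldots, x_n\}$ with maximal information entropy. This is the same as saying that we wish to find the least structured probability distribution on the points $\{x_1, x_2, \ldots, x_n\}$. In other words, we wish to maximize the Shannon entropy equation:

$$f(p_1, p_2, \ldots, p_n) = -\sum_{j=1}^{n} p_j \log_2 p_j.$$

For this to be a probability distribution the sum of the probabilities $p_i$ at each point $x_i$ must equal 1, so our constraint is:

$$g(p_1, p_2, \ldots, p_n) = \sum_{j=1}^{n} p_j = 1.$$

We use Lagrange multipliers to find the point of maximum entropy, $\vec{p}^{\,*}$, across all discrete probability distributions $\vec{p}$ on $\{x_1, x_2, \ldots, x_n\}$. We require that:

$$\left. \frac{\partial}{\partial \vec{p}} \bigl( f + \lambda (g - 1) \bigr) \right|_{\vec{p} = \vec{p}^{\,*}} = 0,$$

which gives a system of $n$ equations, $k = 1, \ldots, n$, such that:

$$\left. \frac{\partial}{\partial p_k} \left\{ -\left( \sum_{j=1}^{n} p_j \log_2 p_j \right) + \lambda \left( \sum_{j=1}^{n} p_j - 1 \right) \right\} \right|_{p_k = p_k^*} = 0.$$

Carrying out the differentiation of these $n$ equations, we get

$$-\left( \frac{1}{\ln 2} + \log_2 p_k^* \right) + \lambda = 0.$$

This shows that all $p_k^*$ are equal (because they depend on $\lambda$ only). By using the constraint $\sum_j p_j = 1$, we find

$$p_k^* = \frac{1}{n}.$$

Hence, the uniform distribution is the distribution with the greatest entropy, among distributions on $n$ points.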
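
A small numerical experiment can make this plausible. The sketch below compares the entropy of the uniform distribution with that of randomly generated distributions and checks the stationarity equation at $p_k^* = 1/n$. The choice $n = 5$, the random seed, the number of trials and the helper names are all assumptions for illustration; random sampling supports the result but does not prove it.

```python
import math
import random

def entropy(p):
    """Shannon entropy in bits; terms with zero probability contribute nothing."""
    return -sum(q * math.log2(q) for q in p if q > 0)

def random_distribution(n, rng):
    """A random point on the probability simplex (normalized uniform weights)."""
    w = [rng.random() for _ in range(n)]
    total = sum(w)
    return [v / total for v in w]

n = 5
uniform = [1 / n] * n

# Multiplier from the stationarity condition -(1/ln 2 + log2 p) + lam = 0 at p = 1/n
lam = 1 / math.log(2) + math.log2(1 / n)
stationarity_residual = -(1 / math.log(2) + math.log2(uniform[0])) + lam

rng = random.Random(0)
trials = [entropy(random_distribution(n, rng)) for _ in range(1000)]

print(entropy(uniform), max(trials), stationarity_residual)
```

None of the sampled distributions should exceed the entropy of the uniform one, which equals $\log_2 n$.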

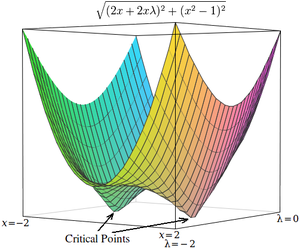

Example 5 – Numerical optimization

The critical points of Lagrangians occur at saddle points, rather than at local maxima (or minima).[4][17] Unfortunately, many numerical optimization techniques, such as hill climbing, gradient descent, some of the quasi-Newton methods, among others, are designed to find local maxima (or minima) and not saddle points. For this reason, one must either modify the formulation to ensure that it's a minimization problem (for example, by extremizing the square of the gradient of the Lagrangian as below), or else use an optimization technique that finds stationary points (such as Newton's method without an extremum seeking line search) and not necessarily extrema.

As a simple example, consider the problem of finding the value of $x$ that minimizes $f(x) = x^2$, constrained such that $x^2 = 1$. (This problem is somewhat untypical because there are only two values that satisfy this constraint, but it is useful for illustration purposes because the corresponding unconstrained function can be visualized in three dimensions.)

Using Lagrange multipliers, this problem can be converted into an unconstrained optimization problem:

$$\mathcal{L}(x, \lambda) = x^2 + \lambda (x^2 - 1).$$

The two critical points occur at saddle points where $x = 1$ and $x = -1$.

In order to solve this problem with a numerical optimization technique, we must first transform this problem such that the critical points occur at local minima. This is done by computing the magnitude of the gradient of the unconstrained optimization problem.

First, we compute the partial derivative of the unconstrained problem with respect to each variable:

$$\begin{aligned} &\frac{\partial \mathcal{L}}{\partial x} = 2x + 2x\lambda \\ &\frac{\partial \mathcal{L}}{\partial \lambda} = x^2 - 1. \end{aligned}$$

If the target function is not easily differentiable, the differential with respect to each variable can be approximated as

$$\begin{aligned} \frac{\partial \mathcal{L}}{\partial x} &\approx \frac{\mathcal{L}(x + \varepsilon, \lambda) - \mathcal{L}(x, \lambda)}{\varepsilon}, \\ \frac{\partial \mathcal{L}}{\partial \lambda} &\approx \frac{\mathcal{L}(x, \lambda + \varepsilon) - \mathcal{L}(x, \lambda)}{\varepsilon}, \end{aligned}$$

where $\varepsilon$ is a small value.

Next, we compute the magnitude of the gradient, which is the square root of the sum of the squares of the partial derivatives:

$$\begin{aligned} h(x, \lambda) &= \sqrt{(2x + 2x\lambda)^2 + (x^2 - 1)^2} \\ &\approx \sqrt{\left( \frac{\mathcal{L}(x + \varepsilon, \lambda) - \mathcal{L}(x, \lambda)}{\varepsilon} \right)^2 + \left( \frac{\mathcal{L}(x, \lambda + \varepsilon) - \mathcal{L}(x, \lambda)}{\varepsilon} \right)^2}. \end{aligned}$$

(Since magnitude is always non-negative, optimizing over the squared-magnitude is equivalent to optimizing over the magnitude. Thus, the "square root" may be omitted from these equations with no expected difference in the results of optimization.)

The critical points of $h$ occur at $x = 1$ and $x = -1$, just as in $\mathcal{L}$. Unlike the critical points in $\mathcal{L}$, however, the critical points in $h$ occur at local minima, so numerical optimization techniques can be used to find them.
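
A minimal sketch of this idea uses plain gradient descent on the squared magnitude $h^2 = (2x + 2x\lambda)^2 + (x^2 - 1)^2$, with derivatives written out by hand. The step size, iteration count and starting points are assumptions; the starting points are deliberately chosen reasonably close to the solutions, and convergence from arbitrary starting points is not guaranteed.

```python
def h_squared(x, lam):
    """Squared magnitude of the gradient of L = x^2 + lam * (x^2 - 1)."""
    return (2 * x + 2 * x * lam) ** 2 + (x * x - 1) ** 2

def grad_h_squared(x, lam):
    a = 2 * x + 2 * x * lam   # dL/dx
    b = x * x - 1             # dL/dlam
    d_x = 2 * a * (2 + 2 * lam) + 2 * b * (2 * x)
    d_lam = 2 * a * (2 * x)
    return d_x, d_lam

def descend(x, lam, step=0.01, iters=5000):
    """Fixed-step gradient descent on h^2 (a simple, non-adaptive scheme)."""
    for _ in range(iters):
        g_x, g_lam = grad_h_squared(x, lam)
        x, lam = x - step * g_x, lam - step * g_lam
    return x, lam

x_pos, lam_pos = descend(1.5, -0.5)
x_neg, lam_neg = descend(-1.5, -0.5)
print((x_pos, lam_pos), (x_neg, lam_neg))
```

Both runs should settle at a point where $h^2$ is essentially zero, that is, at $x = \pm 1$ with $\lambda = -1$, which a descent method could not reach by working on $\mathcal{L}$ directly.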

Applications

Control theory

In optimal control theory, the Lagrange multipliers are interpreted as costate variables, and Lagrange multipliers are reformulated as the minimization of the Hamiltonian, in Pontryagin's minimum principle.

Nonlinear programming

The Lagrange multiplier method has several generalizations. In nonlinear programming there are several multiplier rules, e.g. the Carathéodory–John Multiplier Rule and the Convex Multiplier Rule, for inequality constraints.[18]

Power systems

Methods based on Lagrange multipliers have applications in power systems, e.g. in distributed-energy-resources (DER) placement and load shedding.[19]

Safe Reinforcement Learning

The method of Lagrange multipliers applies to constrained Markov decision processes.[20] It naturally produces gradient-based primal-dual algorithms in safe reinforcement learning.[21]

See also

- Adjustment of observations

- Duality

- Gittins index

- Karush–Kuhn–Tucker conditions: generalization of the method of Lagrange multipliers

- Lagrange multipliers on Banach spaces: another generalization of the method of Lagrange multipliers

- Lagrange multiplier test in maximum likelihood estimation

- Lagrangian relaxation

References

- Hoffmann, Laurence D.; Bradley, Gerald L. (2004). Calculus for Business, Economics, and the Social and Life Sciences (8th ed.). pp. 575–588. ISBN 0-07-242432-X.

- Beavis, Brian; Dobbs, Ian M. (1990). "Static Optimization". Optimization and Stability Theory for Economic Analysis. New York: Cambridge University Press. p. 40. ISBN 0-521-33605-8.

- Protter, Murray H.; Morrey, Charles B. Jr. (1985). Intermediate Calculus (2nd ed.). New York, NY: Springer. p. 267. ISBN 0-387-96058-9.

- Walsh, G.R. (1975). "Saddle-point Property of Lagrangian Function". Methods of Optimization. New York, NY: John Wiley & Sons. pp. 39–44. ISBN 0-471-91922-5.

- Kalman, Dan (2009). "Leveling with Lagrange: An alternate view of constrained optimization". Mathematics Magazine. 82 (3): 186–196. doi:10.1080/0025570X.2009.11953617. JSTOR 27765899. S2CID 121070192.

- Silberberg, Eugene; Suen, Wing (2001). The Structure of Economics: A Mathematical Analysis (Third ed.). Boston: Irwin McGraw-Hill. pp. 134–141. ISBN 0-07-234352-4.

- de la Fuente, Angel (2000). Mathematical Methods and Models for Economists. Cambridge: Cambridge University Press. p. 285. doi:10.1017/CBO9780511810756. ISBN 978-0-521-58512-5.

- Luenberger, David G. (1969). Optimization by Vector Space Methods. New York: John Wiley & Sons. pp. 188–189.

- Bertsekas, Dimitri P. (1999). Nonlinear Programming (Second ed.). Cambridge, MA: Athena Scientific. ISBN 1-886529-00-0.

- Vapnyarskii, I.B. (2001) [1994], "Lagrange multipliers", Encyclopedia of Mathematics, EMS Press.

- Lasdon, Leon S. (2002) [1970]. Optimization Theory for Large Systems (reprint ed.). Mineola, New York, NY: Dover. ISBN 0-486-41999-1. MR 1888251.

- Hiriart-Urruty, Jean-Baptiste; Lemaréchal, Claude (1993). "Chapter XII: Abstract duality for practitioners". Convex analysis and minimization algorithms. Grundlehren der Mathematischen Wissenschaften [Fundamental Principles of Mathematical Sciences]. Vol. 306. Berlin, DE: Springer-Verlag. pp. 136–193 (and Bibliographical comments pp. 334–335). ISBN 3-540-56852-2. MR 1295240. Volume II: Advanced theory and bundle methods.

- Lemaréchal, Claude (15–19 May 2000). "Lagrangian relaxation". In Jünger, Michael; Naddef, Denis (eds.). Computational combinatorial optimization: Papers from the Spring School held in Schloß Dagstuhl. Spring School held in Schloß Dagstuhl, May 15–19, 2000. Lecture Notes in Computer Science. Vol. 2241. Berlin, DE: Springer-Verlag (published 2001). pp. 112–156. doi:10.1007/3-540-45586-8_4. ISBN 3-540-42877-1. MR 1900016. S2CID 9048698.

- Lafontaine, Jacques (2015). An Introduction to Differential Manifolds. Springer. p. 70. ISBN 978-3-319-20735-3.

- Dixit, Avinash K. (1990). "Shadow Prices". Optimization in Economic Theory (2nd ed.). New York: Oxford University Press. pp. 40–54. ISBN 0-19-877210-6.

- Chiang, Alpha C. (1984). Fundamental Methods of Mathematical Economics (Third ed.). McGraw-Hill. p. 386. ISBN 0-07-010813-7.

- Heath, Michael T. (2005). Scientific Computing: An introductory survey. McGraw-Hill. p. 203. ISBN 978-0-07-124489-3.

- Pourciau, Bruce H. (1980). "Modern multiplier rules". American Mathematical Monthly. 87 (6): 433–452. doi:10.2307/2320250. JSTOR 2320250.

- Gautam, Mukesh; Bhusal, Narayan; Benidris, Mohammed (2020). A sensitivity-based approach to adaptive under-frequency load shedding. 2020 IEEE Texas Power and Energy Conference (TPEC). Institute of Electrical and Electronics Engineers. pp. 1–5. doi:10.1109/TPEC48276.2020.9042569.

- Altman, Eitan (2021). Constrained Markov Decision Processes. Routledge.

- Ding, Dongsheng; Zhang, Kaiqing; Jovanovic, Mihailo; Basar, Tamer (2020). Natural policy gradient primal-dual method for constrained Markov decision processes. Advances in Neural Information Processing Systems.

Further reading

- Beavis, Brian; Dobbs, Ian M. (1990). "Static Optimization". Optimization and Stability Theory for Economic Analysis. New York, NY: Cambridge University Press. pp. 32–72. ISBN 0-521-33605-8.

- Bertsekas, Dimitri P. (1982). Constrained optimization and Lagrange multiplier methods. New York, NY: Academic Press. ISBN 0-12-093480-9.

- Beveridge, Gordon S.G.; Schechter, Robert S. (1970). "Lagrangian multipliers". Optimization: Theory and Practice. New York, NY: McGraw-Hill. pp. 244–259. ISBN 0-07-005128-3.

- Binger, Brian R.; Hoffman, Elizabeth (1998). "Constrained optimization". Microeconomics with Calculus (2nd ed.). Reading: Addison-Wesley. pp. 56–91. ISBN 0-321-01225-9.

- Carter, Michael (2001). "Equality constraints". Foundations of Mathematical Economics. Cambridge, MA: MIT Press. pp. 516–549. ISBN 0-262-53192-5.

- Hestenes, Magnus R. (1966). "Minima of functions subject to equality constraints". Calculus of Variations and Optimal Control Theory. New York, NY: Wiley. pp. 29–34.

- Wylie, C. Ray; Barrett, Louis C. (1995). "The extrema of integrals under constraint". Advanced Engineering Mathematics (Sixth ed.). New York, NY: McGraw-Hill. pp. 1096–1103. ISBN 0-07-072206-4.

External links

Exposition

- Steuard. "Conceptual introduction". slimy.com. Includes a brief discussion of Lagrange multipliers in the calculus of variations as used in physics.

- Carpenter, Kenneth H. "Lagrange multipliers for quadratic forms with linear constraints" (PDF). Kansas State University.

Additional text and interactive applets

- Resnik. "Simple explanation with an example of governments using taxes as Lagrange multipliers". umiacs.umd.edu. University of Maryland.

- Klein, Dan. "Lagrange multipliers without permanent scarring" (PDF). nlp.cs.berkeley.edu. University of California, Berkeley. Explanation with focus on the intuition.

- Sathyanarayana, Shashi. "Geometric representation of method of Lagrange multipliers". wolfram.com (Mathematica demonstration). Wolfram Research. Needs Internet Explorer / Firefox / Safari. Provides compelling insight in 2 dimensions that at a minimizing point, the direction of steepest descent must be perpendicular to the tangent of the constraint curve at that point.

- "Lagrange multipliers – two variables". MIT Open Courseware (ocw.mit.edu) (Applet). Massachusetts Institute of Technology.

- "Lagrange multipliers". MIT Open Courseware (ocw.mit.edu) (video lecture). Mathematics 18-02: Multivariable calculus. Massachusetts Institute of Technology. Fall 2007.

- Bertsekas. "Details on Lagrange multipliers" (PDF). athenasc.com (slides / course lecture). Non-Linear Programming. Course slides accompanying text on nonlinear optimization.

- Wyatt, John (7 April 2004) [19 November 2002]. "Legrange multipliers, constrained optimization, and the maximum entropy principle" (PDF). www-mtl.mit.edu. Elec E & C S / Mech E 6.050 – Information, entropy, and computation. Geometric idea behind Lagrange multipliers.

- "Using Lagrange multipliers in optimization". matlab.cheme.cmu.edu (MATLAB example). Pittsburgh, PA: Carnegie Mellon University. 24 December 2011.
