Matrix (mathematics)

In mathematics, a matrix (pl.: matrices) is a rectangular array or table of numbers, symbols, or expressions, with elements or entries arranged in rows and columns, which is used to represent a mathematical object or property of such an object.

For example,

$$\begin{bmatrix} 1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix}$$

is a matrix with two rows and three columns. This is often referred to as a "two-by-three matrix", a "$2 \times 3$ matrix", or a matrix of dimension $2 \times 3$.

Matrices are commonly related to linear algebra. Notable exceptions include incidence matrices and adjacency matrices in graph theory.[1] This article focuses on matrices related to linear algebra, and, unless otherwise specified, all matrices represent linear maps or may be viewed as such.

Square matrices, matrices with the same number of rows and columns, play a major role in matrix theory. Square matrices of a given dimension form a noncommutative ring, which is one of the most common examples of a noncommutative ring. The determinant of a square matrix is a number associated with the matrix, which is fundamental for the study of a square matrix; for example, a square matrix is invertible if and only if it has a nonzero determinant, and the eigenvalues of a square matrix are the roots of a polynomial given by a determinant (the characteristic polynomial).

In geometry, matrices are widely used for specifying and representing geometric transformations (for example rotations) and coordinate changes. In numerical analysis, many computational problems are solved by reducing them to a matrix computation, and this often involves computing with matrices of huge dimensions. Matrices are used in most areas of mathematics and scientific fields, either directly, or through their use in geometry and numerical analysis.

Matrix theory is the branch of mathematics that focuses on the study of matrices. It was initially a sub-branch of linear algebra, but soon grew to include subjects related to graph theory, algebra, combinatorics and statistics.

Definition

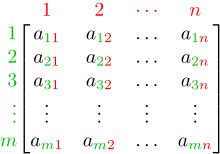

A matrix is a rectangular array of numbers (or other mathematical objects), called the entries of the matrix. Matrices are subject to standard operations such as addition and multiplication.[2] Most commonly, a matrix over a field $F$ is a rectangular array of elements of $F$.[3][4] A real matrix and a complex matrix are matrices whose entries are respectively real numbers or complex numbers. More general types of entries are discussed below. For instance, this is a real matrix:

$$\mathbf{A} = \begin{bmatrix} -1.3 & 0.6 \\ 20.4 & 5.5 \\ 9.7 & -6.2 \end{bmatrix}.$$

The numbers, symbols, or expressions in the matrix are called its entries or its elements. The horizontal and vertical lines of entries in a matrix are called rows and columns, respectively.

Size

The size of a matrix is defined by the number of rows and columns it contains. There is no limit to the number of rows and columns that a matrix (in the usual sense) can have, as long as they are positive integers. A matrix with $m$ rows and $n$ columns is called an $m \times n$ matrix, or $m$-by-$n$ matrix, where $m$ and $n$ are called its dimensions. For example, the matrix $\mathbf{A}$ above is a $3 \times 2$ matrix.

Matrices with a single row are called row vectors, and those with a single column are called column vectors. A matrix with the same number of rows and columns is called a square matrix.[5] A matrix with an infinite number of rows or columns (or both) is called an infinite matrix. In some contexts, such as computer algebra programs, it is useful to consider a matrix with no rows or no columns, called an empty matrix.

| Name | Size | Description |
|---|---|---|
| Row vector | $1 \times n$ | A matrix with one row, sometimes used to represent a vector |
| Column vector | $n \times 1$ | A matrix with one column, sometimes used to represent a vector |
| Square matrix | $n \times n$ | A matrix with the same number of rows and columns, sometimes used to represent a linear transformation from a vector space to itself, such as reflection, rotation, or shearing |

Notation

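The specifics of symbolic matrix notation vary widely, with some prevailing trends. Matrices are commonly written in square brackets or parentheses, so that an $m \times n$ matrix $\mathbf{A}$ is represented as

$$\mathbf{A} = \begin{bmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ a_{21} & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{m1} & a_{m2} & \cdots & a_{mn} \end{bmatrix} = \begin{pmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ a_{21} & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{m1} & a_{m2} & \cdots & a_{mn} \end{pmatrix}.$$

This may be abbreviated by writing only a single generic term, possibly along with indices, as in $\mathbf{A} = (a_{ij})$, $[a_{ij}]$, or $(a_{ij})_{1 \le i \le m,\; 1 \le j \le n}$, or $\mathbf{A} = (a_{i,j})_{1 \le i, j \le n}$ in the case that $n = m$.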

Matrices are usually symbolized using upper-case letters (such as $\mathbf{A}$ in the examples above), while the corresponding lower-case letters, with two subscript indices (e.g., $a_{11}$, or $a_{1,1}$), represent the entries. In addition to using upper-case letters to symbolize matrices, many authors use a special typographical style, commonly boldface Roman (non-italic), to further distinguish matrices from other mathematical objects. An alternative notation involves the use of a double-underline with the variable name, with or without boldface style, as in $\underline{\underline{A}}$.

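The entry in the $i$-th row and $j$-th column of a matrix $\mathbf{A}$ is sometimes referred to as the $i,j$ or $(i, j)$ entry of the matrix, and commonly denoted by $a_{i,j}$ or $a_{ij}$. Alternative notations for that entry are $\mathbf{A}[i, j]$ and $\mathbf{A}_{i,j}$. For example, the $(1, 3)$ entry of the following matrix $\mathbf{A}$ is 5 (also denoted $a_{13}$, $a_{1,3}$, $\mathbf{A}[1, 3]$, or $\mathbf{A}_{1,3}$):

$$\mathbf{A} = \begin{bmatrix} 4 & -7 & 5 & 0 \\ -2 & 0 & 11 & 8 \\ 19 & 1 & -3 & 12 \end{bmatrix}$$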

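Sometimes, the entries of a matrix can be defined by a formula such as $a_{i,j} = f(i, j)$. For example, each of the entries of the following matrix $\mathbf{A}$ is determined by the formula $a_{ij} = i - j$.

$$\mathbf{A} = \begin{bmatrix} 0 & -1 & -2 & -3 \\ 1 & 0 & -1 & -2 \\ 2 & 1 & 0 & -1 \end{bmatrix}$$

In this case, the matrix itself is sometimes defined by that formula, within square brackets or double parentheses. For example, the matrix above is defined as $\mathbf{A} = [i - j]$ or $\mathbf{A} = ((i - j))$. If the matrix size is $m \times n$, the above-mentioned formula $f(i, j)$ is valid for any $i = 1, \ldots, m$ and any $j = 1, \ldots, n$. This can be specified separately or indicated using $m \times n$ as a subscript. For instance, the matrix $\mathbf{A}$ above is $3 \times 4$, and can be defined as $\mathbf{A} = [i - j]$ $(i = 1, 2, 3;\ j = 1, \ldots, 4)$ or $\mathbf{A} = [i - j]_{3 \times 4}$.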

Some programming languages utilize doubly subscripted arrays (or arrays of arrays) to represent an $m$-by-$n$ matrix. Some programming languages start the numbering of array indexes at zero, in which case the entries of an $m$-by-$n$ matrix are indexed by $0 \le i \le m - 1$ and $0 \le j \le n - 1$.[6] This article follows the more common convention in mathematical writing where enumeration starts from 1.
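As a rough illustration of the two indexing conventions, the following Python sketch stores a matrix as a list of rows (an "array of arrays"). The helper names `matrix_from_formula` and `entry` are hypothetical, and the formula $a_{ij} = i - j$ is used only as an illustrative choice.

```python
def matrix_from_formula(m, n, f):
    """Build an m-by-n matrix (a list of rows) whose (i, j) entry is f(i, j),
    with i and j counted from 1 as in mathematical notation."""
    return [[f(i, j) for j in range(1, n + 1)] for i in range(1, m + 1)]


def entry(M, i, j):
    """Return the (i, j) entry for 1-based i, j; Python lists are 0-based,
    so the entry is stored at M[i - 1][j - 1]."""
    return M[i - 1][j - 1]


# Illustrative formula (an assumption of this sketch): a_ij = i - j.
A = matrix_from_formula(3, 4, lambda i, j: i - j)

for row in A:
    print(row)

# The mathematical (1, 3) entry lives at the zero-based position [0][2].
print(entry(A, 1, 3), A[0][2])
```

Keeping the conversion in one small accessor is only one possible design; many numerical libraries instead expose zero-based indices directly.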

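The set of all $m$-by-$n$ real matrices is often denoted $\mathcal{M}(m, n)$ or $\mathcal{M}_{m \times n}(\mathbb{R})$. The set of all $m$-by-$n$ matrices over another field, or over a ring $R$, is similarly denoted $\mathcal{M}(m, n, R)$ or $\mathcal{M}_{m \times n}(R)$. If $m = n$, such as in the case of square matrices, one does not repeat the dimension: $\mathcal{M}(n, R)$ or $\mathcal{M}_n(R)$.[7] Often, $M$, or $\operatorname{Mat}$, is used in place of $\mathcal{M}$.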

Basic operations

Several basic operations can be applied to matrices. Some, such as transposition and submatrix, do not depend on the nature of the entries. Others, such as matrix addition, scalar multiplication, matrix multiplication, and row operations, involve operations on matrix entries and therefore require that matrix entries are numbers or belong to a field or a ring.[8]

In this section, it is supposed that matrix entries belong to a fixed ring, which is typically a field of numbers.

Addition, scalar multiplication, subtraction and transposition

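The sum $\mathbf{A} + \mathbf{B}$ of two $m \times n$ matrices $\mathbf{A}$ and $\mathbf{B}$ is calculated entrywise:

$$(\mathbf{A} + \mathbf{B})_{i,j} = \mathbf{A}_{i,j} + \mathbf{B}_{i,j}, \quad 1 \le i \le m, \quad 1 \le j \le n.$$

For example,

$$\begin{bmatrix} 1 & 3 & 1 \\ 1 & 0 & 0 \end{bmatrix} + \begin{bmatrix} 0 & 0 & 5 \\ 7 & 5 & 0 \end{bmatrix} = \begin{bmatrix} 1+0 & 3+0 & 1+5 \\ 1+7 & 0+5 & 0+0 \end{bmatrix} = \begin{bmatrix} 1 & 3 & 6 \\ 8 & 5 & 0 \end{bmatrix}$$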

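The product $c\mathbf{A}$ of a number $c$ (also called a scalar in this context) and a matrix $\mathbf{A}$ is computed by multiplying every entry of $\mathbf{A}$ by $c$:

$$(c\mathbf{A})_{i,j} = c \cdot \mathbf{A}_{i,j}$$

This operation is called scalar multiplication, but its result is not named "scalar product" to avoid confusion, since "scalar product" is often used as a synonym for "inner product". For example:

$$2 \cdot \begin{bmatrix} 1 & 8 & -3 \\ 4 & -2 & 5 \end{bmatrix} = \begin{bmatrix} 2 \cdot 1 & 2 \cdot 8 & 2 \cdot (-3) \\ 2 \cdot 4 & 2 \cdot (-2) & 2 \cdot 5 \end{bmatrix} = \begin{bmatrix} 2 & 16 & -6 \\ 8 & -4 & 10 \end{bmatrix}$$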

The subtraction of two $m \times n$ matrices is defined by composing matrix addition with scalar multiplication by $-1$:

$$\mathbf{A} - \mathbf{B} = \mathbf{A} + (-1) \cdot \mathbf{B}$$

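The transpose of an $m \times n$ matrix $\mathbf{A}$ is the $n \times m$ matrix $\mathbf{A}^{\mathsf{T}}$ (also denoted $\mathbf{A}^{\mathrm{tr}}$ or ${}^{\mathsf{t}}\mathbf{A}$) formed by turning rows into columns and vice versa:

$$\left(\mathbf{A}^{\mathsf{T}}\right)_{i,j} = \mathbf{A}_{j,i}$$

For example:

$$\begin{bmatrix} 1 & 2 & 3 \\ 0 & -6 & 7 \end{bmatrix}^{\mathsf{T}} = \begin{bmatrix} 1 & 0 \\ 2 & -6 \\ 3 & 7 \end{bmatrix}$$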

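Familiar properties of numbers extend to these operations on matrices: for example, addition is commutative, that is, the matrix sum does not depend on the order of the summands: $\mathbf{A} + \mathbf{B} = \mathbf{B} + \mathbf{A}$.[9] The transpose is compatible with addition and scalar multiplication, as expressed by $(c\mathbf{A})^{\mathsf{T}} = c(\mathbf{A}^{\mathsf{T}})$ and $(\mathbf{A} + \mathbf{B})^{\mathsf{T}} = \mathbf{A}^{\mathsf{T}} + \mathbf{B}^{\mathsf{T}}$. Finally, $(\mathbf{A}^{\mathsf{T}})^{\mathsf{T}} = \mathbf{A}$.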
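The entrywise definitions above translate almost directly into code. The following Python sketch represents a matrix as a list of rows; the function names `add`, `scale`, `subtract`, and `transpose` are chosen for this illustration and do not refer to any particular library.

```python
def add(A, B):
    """Entrywise sum of two matrices of the same size."""
    if len(A) != len(B) or any(len(ra) != len(rb) for ra, rb in zip(A, B)):
        raise ValueError("matrices must have the same size")
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def scale(c, A):
    """Scalar multiplication: multiply every entry of A by c."""
    return [[c * a for a in row] for row in A]


def subtract(A, B):
    """A - B, defined as A + (-1) * B."""
    return add(A, scale(-1, B))


def transpose(A):
    """Turn rows into columns and vice versa."""
    return [list(column) for column in zip(*A)]


A = [[1, 3, 1], [1, 0, 0]]
B = [[0, 0, 5], [7, 5, 0]]

print(add(A, B))
print(scale(2, A))
print(subtract(A, B))
print(transpose(A))

# Spot checks of the stated properties on this one pair of matrices.
print(add(A, B) == add(B, A))
print(transpose(add(A, B)) == add(transpose(A), transpose(B)))
print(transpose(scale(2, A)) == scale(2, transpose(A)))
print(transpose(transpose(A)) == A)
```

Checking a property on a single example does not prove it; the checks merely illustrate what the identities say.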

Matrix multiplication


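Multiplication of two matrices is defined if and only if the number of columns of the left matrix is the same as the number of rows of the right matrix. If $\mathbf{A}$ is an $m \times n$ matrix and $\mathbf{B}$ is an $n \times p$ matrix, then their matrix product $\mathbf{AB}$ is the $m \times p$ matrix whose entries are given by the dot product of the corresponding row of $\mathbf{A}$ and the corresponding column of $\mathbf{B}$:[10]

$$[\mathbf{AB}]_{i,j} = a_{i,1} b_{1,j} + a_{i,2} b_{2,j} + \cdots + a_{i,n} b_{n,j} = \sum_{r=1}^{n} a_{i,r} b_{r,j},$$

where $1 \le i \le m$ and $1 \le j \le p$.[11] For example, the underlined entry 2340 in the product is calculated as $(2 \times 1000) + (3 \times 100) + (4 \times 10) = 2340$:

$$\begin{bmatrix} \underline{2} & \underline{3} & \underline{4} \\ 1 & 0 & 0 \end{bmatrix} \begin{bmatrix} 0 & \underline{1000} \\ 1 & \underline{100} \\ 0 & \underline{10} \end{bmatrix} = \begin{bmatrix} 3 & \underline{2340} \\ 0 & 1000 \end{bmatrix}$$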

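Matrix multiplication satisfies the rules $(\mathbf{AB})\mathbf{C} = \mathbf{A}(\mathbf{BC})$ (associativity), and $(\mathbf{A} + \mathbf{B})\mathbf{C} = \mathbf{AC} + \mathbf{BC}$ as well as $\mathbf{C}(\mathbf{A} + \mathbf{B}) = \mathbf{CA} + \mathbf{CB}$ (left and right distributivity), whenever the size of the matrices is such that the various products are defined.[12] The product $\mathbf{AB}$ may be defined without $\mathbf{BA}$ being defined, namely if $\mathbf{A}$ and $\mathbf{B}$ are $m \times n$ and $n \times k$ matrices, respectively, and $m \ne k$. Even if both products are defined, they generally need not be equal, that is:

$$\mathbf{AB} \ne \mathbf{BA}.$$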

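In other words, matrix multiplication is not commutative, in marked contrast to (rational, real, or complex) numbers, whose product is independent of the order of the factors.[10] An example of two matrices not commuting with each other is:

$$\begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} \begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix} = \begin{bmatrix} 0 & 1 \\ 0 & 3 \end{bmatrix},$$

whereas

$$\begin{bmatrix} 0 & 1 \\ 0 & 0 \end{bmatrix} \begin{bmatrix} 1 & 2 \\ 3 & 4 \end{bmatrix} = \begin{bmatrix} 3 & 4 \\ 0 & 0 \end{bmatrix}.$$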

Besides the ordinary matrix multiplication just described, other less frequently used operations on matrices that can be considered forms of multiplication also exist, such as the Hadamard product and the Kronecker product.[13] They arise in solving matrix equations such as the Sylvester equation.
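The row-by-column rule for the ordinary matrix product can be sketched in a few lines of Python. The function name `matmul` and the list-of-rows representation are assumptions of this sketch, which follows the definition directly and makes no attempt at efficiency.

```python
def matmul(A, B):
    """Product of an m-by-n matrix A and an n-by-p matrix B (lists of rows).
    Entry (i, j) is the dot product of row i of A with column j of B."""
    n = len(B)
    if any(len(row) != n for row in A):
        raise ValueError("columns of A must match rows of B")
    p = len(B[0])
    return [[sum(A[i][r] * B[r][j] for r in range(n)) for j in range(p)]
            for i in range(len(A))]


# A 2-by-3 matrix times a 3-by-2 matrix gives a 2-by-2 matrix.
print(matmul([[2, 3, 4], [1, 0, 0]], [[0, 1000], [1, 100], [0, 10]]))

# Two square matrices whose products in the two orders differ.
A = [[1, 2], [3, 4]]
B = [[0, 1], [0, 0]]
print(matmul(A, B))
print(matmul(B, A))

# Associativity holds for compatible sizes; checked here on one example.
C = [[1, 1], [0, 2]]
print(matmul(matmul(A, B), C) == matmul(A, matmul(B, C)))
```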

Row operations

There are three types of row operations:

- row addition, that is, adding a row to another;
- row multiplication, that is, multiplying all entries of a row by a non-zero constant;
- row switching, that is, interchanging two rows of a matrix.

These operations are used in several ways, including solving linear equations and finding matrix inverses.
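The three row operations can be sketched as small in-place functions. In the Python example below, the function names are hypothetical, row indices are zero-based, and row addition is written in the slightly more general form "add $c$ times one row to another" (with $c = 1$ giving plain row addition). The augmented matrix encodes a made-up system $2y = 4$, $x + y = 3$.

```python
from fractions import Fraction


def add_multiple(M, src, dst, c=1):
    """Row addition: add c times row src to row dst."""
    M[dst] = [d + c * s for d, s in zip(M[dst], M[src])]


def scale_row(M, i, c):
    """Row multiplication: multiply every entry of row i by a non-zero c."""
    if c == 0:
        raise ValueError("the constant must be non-zero")
    M[i] = [c * x for x in M[i]]


def swap_rows(M, i, j):
    """Row switching: interchange rows i and j."""
    M[i], M[j] = M[j], M[i]


# Augmented matrix [A | b] of the illustrative system 2y = 4, x + y = 3.
M = [[Fraction(0), Fraction(2), Fraction(4)],
     [Fraction(1), Fraction(1), Fraction(3)]]

swap_rows(M, 0, 1)                # bring a non-zero pivot to the top
scale_row(M, 1, Fraction(1, 2))   # make the second pivot equal to 1
add_multiple(M, 1, 0, -1)         # clear the entry above the second pivot

# The last column now holds the solution x = 1, y = 2.
print([[int(v) for v in row] for row in M])
```

Exact rational arithmetic (`fractions.Fraction`) is used here only to avoid rounding in the illustration.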

Submatrix

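A submatrix of a matrix is a matrix obtained by deleting any collection of rows and/or columns.[14][15][16] For example, from the following 3-by-4 matrix, we can construct a 2-by-3 submatrix by removing row 3 and column 2:

$$\mathbf{A} = \begin{bmatrix} 1 & 2 & 3 & 4 \\ 5 & 6 & 7 & 8 \\ 9 & 10 & 11 & 12 \end{bmatrix} \rightarrow \begin{bmatrix} 1 & 3 & 4 \\ 5 & 7 & 8 \end{bmatrix}.$$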

The minors and cofactors of a matrix are found by computing the determinant of certain submatrices.[16][17]
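Deleting rows and columns is straightforward to express in code. The sketch below uses a hypothetical helper `submatrix` that takes 1-based row and column numbers, matching the numbering used in this article; the 3-by-4 matrix is an illustrative example.

```python
def submatrix(M, drop_rows=(), drop_cols=()):
    """Return the matrix obtained from M by deleting the given rows and
    columns (numbered from 1)."""
    return [[x for j, x in enumerate(row, start=1) if j not in drop_cols]
            for i, row in enumerate(M, start=1) if i not in drop_rows]


A = [[1, 2, 3, 4],
     [5, 6, 7, 8],
     [9, 10, 11, 12]]

# Remove row 3 and column 2 to obtain a 2-by-3 submatrix.
print(submatrix(A, drop_rows={3}, drop_cols={2}))

# Keeping rows 1, 2 and columns 1, 2 gives a square submatrix whose row and
# column index sets agree.
print(submatrix(A, drop_rows={3}, drop_cols={3, 4}))
```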

A principal submatrix is a square submatrix obtained by removing certain rows and columns. The definition varies from author to author. According to some authors, a principal submatrix is a submatrix in which the set of row indices that remain is the same as the set of column indices that remain.[18][19] Other authors define a principal submatrix as one in which the first $k$ rows and columns, for some number $k$, are the ones that remain;[20] this type of submatrix has also been called a leading principal submatrix.[21]

Linear equations

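Matrices can be used to compactly write and work with multiple linear equations, that is, systems of linear equations. For example, if $\mathbf{A}$ is an $m \times n$ matrix, $\mathbf{x}$ designates a column vector (that is, an $n \times 1$ matrix) of $n$ variables $x_1, x_2, \ldots, x_n$, and $\mathbf{b}$ is an $m \times 1$ column vector, then the matrix equation

$$\mathbf{Ax} = \mathbf{b}$$

is equivalent to the system of linear equations[22]

$$\begin{aligned} a_{1,1} x_1 + a_{1,2} x_2 + \cdots + a_{1,n} x_n &= b_1 \\ &\ \ \vdots \\ a_{m,1} x_1 + a_{m,2} x_2 + \cdots + a_{m,n} x_n &= b_m \end{aligned}$$

Using matrices, this can be solved more compactly than would be possible by writing out all the equations separately. If $n = m$ and the equations are independent, then this can be done by writing

$$\mathbf{x} = \mathbf{A}^{-1} \mathbf{b},$$

where $\mathbf{A}^{-1}$ is the inverse matrix of $\mathbf{A}$. If $\mathbf{A}$ has no inverse, solutions, if any, can be found using its generalized inverse.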
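For a square system with independent equations, the solution can be computed without forming the inverse explicitly. The following Python sketch uses Gauss–Jordan elimination on the augmented matrix with exact rational arithmetic; the function name `solve` and the example system are assumptions made for this illustration, and practical numerical code would typically rely on an established linear algebra library instead.

```python
from fractions import Fraction


def solve(A, b):
    """Solve Ax = b for a square matrix A with independent rows, using
    Gauss-Jordan elimination on the augmented matrix [A | b]."""
    n = len(A)
    M = [[Fraction(v) for v in row] + [Fraction(rhs)]
         for row, rhs in zip(A, b)]
    for col in range(n):
        # Find a row at or below the diagonal with a non-zero pivot.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("A is not invertible")
        M[col], M[pivot] = M[pivot], M[col]
        p = M[col][col]
        M[col] = [v / p for v in M[col]]
        # Clear the pivot column in every other row.
        for r in range(n):
            if r != col:
                factor = M[r][col]
                M[r] = [v - factor * w for v, w in zip(M[r], M[col])]
    return [row[n] for row in M]


# Illustrative system: 2x + y = 3 and x + 3y = 5.
A = [[2, 1], [1, 3]]
b = [3, 5]
x = solve(A, b)
print([str(v) for v in x])

# Substituting back reproduces the right-hand side.
print([sum(a * xi for a, xi in zip(row, x)) for row in A] == b)
```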

Linear transformations


Matrices and matrix multiplication reveal their essential features when related to linear transformations, also known as linear maps. A real $m$-by-$n$ matrix $\mathbf{A}$ gives rise to a linear transformation $\mathbb{R}^n \to \mathbb{R}^m$ mapping each vector $\mathbf{x}$ in $\mathbb{R}^n$ to the (matrix) product $\mathbf{Ax}$, which is a vector in $\mathbb{R}^m$. Conversely, each linear transformation $f: \mathbb{R}^n \to \mathbb{R}^m$ arises from a unique $m$-by-$n$ matrix $\mathbf{A}$: explicitly, the $(i, j)$-entry of $\mathbf{A}$ is the $i$th coordinate of $f(\mathbf{e}_j)$, where $\mathbf{e}_j = (0, \ldots, 0, 1, 0, \ldots, 0)$ is the unit vector with 1 in the $j$th position and 0 elsewhere. The matrix $\mathbf{A}$ is said to represent the linear map $f$, and $\mathbf{A}$ is called the transformation matrix of $f$.

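For example, the 2×2 matrix

$$\mathbf{A} = \begin{bmatrix} a & c \\ b & d \end{bmatrix}$$

can be viewed as the transform of the unit square into a parallelogram with vertices at $(0, 0)$, $(a, b)$, $(a + c, b + d)$, and $(c, d)$. The parallelogram pictured at the right is obtained by multiplying $\mathbf{A}$ with each of the column vectors $\begin{bmatrix} 0 \\ 0 \end{bmatrix}$, $\begin{bmatrix} 1 \\ 0 \end{bmatrix}$, $\begin{bmatrix} 1 \\ 1 \end{bmatrix}$, and $\begin{bmatrix} 0 \\ 1 \end{bmatrix}$ in turn. These vectors define the vertices of the unit square.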

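The following table shows several 2×2 real matrices with the associated linear maps of $\mathbb{R}^2$. In the accompanying figures, the blue original is mapped to the green grid and shapes, and the origin $(0, 0)$ is marked with a black point.

| Horizontal shear with $m = 1.25$ | Reflection through the vertical axis | Squeeze mapping with $r = 3/2$ | Scaling by a factor of $3/2$ | Rotation by $\pi/6 = 30°$ |
|---|---|---|---|---|
| $\begin{bmatrix} 1 & 1.25 \\ 0 & 1 \end{bmatrix}$ | $\begin{bmatrix} -1 & 0 \\ 0 & 1 \end{bmatrix}$ | $\begin{bmatrix} 3/2 & 0 \\ 0 & 2/3 \end{bmatrix}$ | $\begin{bmatrix} 3/2 & 0 \\ 0 & 3/2 \end{bmatrix}$ | $\begin{bmatrix} \cos(\pi/6) & -\sin(\pi/6) \\ \sin(\pi/6) & \cos(\pi/6) \end{bmatrix}$ |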

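Under the 1-to-1 correspondence between matrices and linear maps, matrix multiplication corresponds to composition of maps:[23] if a $k$-by-$m$ matrix $\mathbf{B}$ represents another linear map $g: \mathbb{R}^m \to \mathbb{R}^k$, then the composition $g \circ f$ is represented by $\mathbf{BA}$ since

$$(g \circ f)(\mathbf{x}) = g(f(\mathbf{x})) = g(\mathbf{Ax}) = \mathbf{B}(\mathbf{Ax}) = (\mathbf{BA})\mathbf{x}.$$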

The last equality follows from the above-mentioned associativity of matrix multiplication.
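The correspondence between matrices and linear maps can be checked numerically on a small case. In the Python sketch below, the matrices `A` and `B` are arbitrary illustrative choices, and `apply` and `matmul` are hypothetical helper names. The sketch maps the vertices of the unit square and compares applying two maps in succession with applying the single product matrix.

```python
def apply(A, x):
    """Image of the vector x under the linear map represented by A."""
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]


def matmul(A, B):
    """Matrix product of A and B (lists of rows)."""
    return [[sum(A[i][r] * B[r][j] for r in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


# A has columns (a, b) = (2, 1) and (c, d) = (1, 3).
A = [[2, 1],
     [1, 3]]
unit_square = [[0, 0], [1, 0], [1, 1], [0, 1]]

# Vertices of the parallelogram: (0, 0), (a, b), (a + c, b + d), (c, d).
print([apply(A, v) for v in unit_square])

# B rotates the plane by 90 degrees; BA represents "first A, then B".
B = [[0, -1],
     [1, 0]]
BA = matmul(B, A)
print(all(apply(B, apply(A, v)) == apply(BA, v) for v in unit_square))
```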

The rank of a matrix $\mathbf{A}$ is the maximum number of linearly independent row vectors of the matrix, which is the same as the maximum number of linearly independent column vectors.[24] Equivalently it is the dimension of the image of the linear map represented by $\mathbf{A}$.[25] The rank–nullity theorem states that the dimension of the kernel of a matrix plus the rank equals the number of columns of the matrix.[26]
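One way to compute the rank in exact arithmetic is to bring the matrix to row echelon form and count the pivots. The sketch below is a minimal illustration of that approach, not a numerically robust algorithm; the function name `rank` and the example matrix are assumptions of this sketch.

```python
from fractions import Fraction


def rank(A):
    """Number of pivots found when reducing A to row echelon form."""
    M = [[Fraction(v) for v in row] for row in A]
    rows, cols = len(M), len(M[0])
    r = 0  # index of the next pivot row
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [v - factor * w for v, w in zip(M[i], M[r])]
        r += 1
        if r == rows:
            break
    return r


# The second row is twice the first, so only two rows are independent.
A = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]
A_transposed = [list(col) for col in zip(*A)]

print(rank(A), rank(A_transposed))  # row rank equals column rank

# Rank-nullity: the kernel has dimension 3 - rank(A) = 1; the vector
# (1, 1, -1) spans it, as multiplying by A confirms.
kernel_vector = [1, 1, -1]
print([sum(a * x for a, x in zip(row, kernel_vector)) for row in A])
```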

Square matrix

A square matrix is a matrix with the same number of rows and columns.[5] An $n$-by-$n$ matrix is known as a square matrix of order $n$. Any two square matrices of the same order can be added and multiplied. The entries $a_{ii}$ form the main diagonal of a square matrix. They lie on the imaginary line that runs from the top left corner to the bottom right corner of the matrix.

Main types

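| Name | Example with $n = 3$ |
|---|---|
| Diagonal matrix | $\begin{bmatrix} a_{11} & 0 & 0 \\ 0 & a_{22} & 0 \\ 0 & 0 & a_{33} \end{bmatrix}$ |
| Lower triangular matrix | $\begin{bmatrix} a_{11} & 0 & 0 \\ a_{21} & a_{22} & 0 \\ a_{31} & a_{32} & a_{33} \end{bmatrix}$ |
| Upper triangular matrix | $\begin{bmatrix} a_{11} & a_{12} & a_{13} \\ 0 & a_{22} & a_{23} \\ 0 & 0 & a_{33} \end{bmatrix}$ |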

Diagonal and triangular matrix

If all entries of $\mathbf{A}$ below the main diagonal are zero, $\mathbf{A}$ is called an upper triangular matrix. Similarly, if all entries of $\mathbf{A}$ above the main diagonal are zero, $\mathbf{A}$ is called a lower triangular matrix. If all entries outside the main diagonal are zero, $\mathbf{A}$ is called a diagonal matrix.

Identity matrix

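The identity matrix $\mathbf{I}_n$ of size $n$ is the $n$-by-$n$ matrix in which all the elements on the main diagonal are equal to 1 and all other elements are equal to 0, for example,

$$\mathbf{I}_1 = \begin{bmatrix} 1 \end{bmatrix}, \quad \mathbf{I}_2 = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix}, \quad \ldots, \quad \mathbf{I}_n = \begin{bmatrix} 1 & 0 & \cdots & 0 \\ 0 & 1 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & 1 \end{bmatrix}.$$

It is a square matrix of order $n$, and also a special kind of diagonal matrix. It is called an identity matrix because multiplication with it leaves a matrix unchanged: $\mathbf{A}\mathbf{I}_n = \mathbf{I}_m \mathbf{A} = \mathbf{A}$ for any $m$-by-$n$ matrix $\mathbf{A}$.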

A nonzero scalar multiple of an identity matrix is called a scalar matrix. If the matrix entries come from a field, the scalar matrices form a group, under matrix multiplication, that is isomorphic to the multiplicative group of nonzero elements of the field.

Symmetric or skew-symmetric matrix

A square matrix $\mathbf{A}$ that is equal to its transpose, that is, $\mathbf{A} = \mathbf{A}^{\mathsf{T}}$, is a symmetric matrix. If instead $\mathbf{A}$ is equal to the negative of its transpose, that is, $\mathbf{A} = -\mathbf{A}^{\mathsf{T}}$, then $\mathbf{A}$ is a skew-symmetric matrix. In complex matrices, symmetry is often replaced by the concept of Hermitian matrices, which satisfy $\mathbf{A}^{*} = \mathbf{A}$, where the star or asterisk denotes the conjugate transpose of the matrix, that is, the transpose of the complex conjugate of $\mathbf{A}$.

By the spectral theorem, real symmetric matrices and complex Hermitian matrices have an eigenbasis; that is, every vector is expressible as a linear combination of eigenvectors. In both cases, all eigenvalues are real.[27] This theorem can be generalized to infinite-dimensional situations related to matrices with infinitely many rows and columns; see below.

Invertible matrix and its inverse

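A square matrix $\mathbf{A}$ is called invertible or non-singular if there exists a matrix $\mathbf{B}$ such that[28][29]

$$\mathbf{AB} = \mathbf{BA} = \mathbf{I}_n,$$

where $\mathbf{I}_n$ is the $n \times n$ identity matrix with 1s on the main diagonal and 0s elsewhere. If $\mathbf{B}$ exists, it is unique and is called the inverse matrix of $\mathbf{A}$, denoted $\mathbf{A}^{-1}$.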

Definite matrix

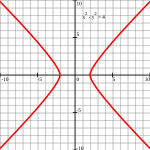

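| Positive definite matrix | Indefinite matrix |
|---|---|
| $\begin{bmatrix} 1/4 & 0 \\ 0 & 1 \end{bmatrix}$ | $\begin{bmatrix} 1/4 & 0 \\ 0 & -1/4 \end{bmatrix}$ |
| $f(x, y) = \tfrac{1}{4} x^2 + y^2$ | $f(x, y) = \tfrac{1}{4} x^2 - \tfrac{1}{4} y^2$ |
| Points such that $f(x, y) = 1$ (Ellipse) | Points such that $f(x, y) = 1$ (Hyperbola) |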

A symmetric real matrix $\mathbf{A}$ is called positive-definite if the associated quadratic form

$$f(\mathbf{x}) = \mathbf{x}^{\mathsf{T}} \mathbf{A} \mathbf{x}$$

has a positive value for every nonzero vector $\mathbf{x}$ in $\mathbb{R}^n$. If $f(\mathbf{x})$ only yields negative values then $\mathbf{A}$ is negative-definite; if $f$ does produce both negative and positive values then $\mathbf{A}$ is indefinite.[30] If the quadratic form $f$ yields only non-negative values (positive or zero), the symmetric matrix is called positive-semidefinite (or if only non-positive values, then negative-semidefinite); hence the matrix is indefinite precisely when it is neither positive-semidefinite nor negative-semidefinite.

A symmetric matrix is positive-definite if and only if all its eigenvalues are positive, that is, the matrix is positive-semidefinite and it is invertible.[31] The table at the right shows two possibilities for 2-by-2 matrices.

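Allowing as input two different vectors instead yields the bilinear form associated to $\mathbf{A}$:[32]

$$B_{\mathbf{A}}(\mathbf{x}, \mathbf{y}) = \mathbf{x}^{\mathsf{T}} \mathbf{A} \mathbf{y}.$$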

In the case of complex matrices, the same terminology and result apply, with symmetric matrix, quadratic form, bilinear form, and transpose $\mathbf{x}^{\mathsf{T}}$ replaced respectively by Hermitian matrix, Hermitian form, sesquilinear form, and conjugate transpose $\mathbf{x}^{\mathsf{H}}$.

Orthogonal matrix

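An orthogonal matrix is a square matrix with real entries whose columns and rows are orthogonal unit vectors (that is, orthonormal vectors). Equivalently, a matrix $\mathbf{A}$ is orthogonal if its transpose is equal to its inverse:

$$\mathbf{A}^{\mathsf{T}} = \mathbf{A}^{-1},$$

which entails

$$\mathbf{A}^{\mathsf{T}} \mathbf{A} = \mathbf{A} \mathbf{A}^{\mathsf{T}} = \mathbf{I}_n,$$

where $\mathbf{I}_n$ is the identity matrix of size $n$.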

An orthogonal matrix $\mathbf{A}$ is necessarily invertible (with inverse $\mathbf{A}^{-1} = \mathbf{A}^{\mathsf{T}}$), unitary ($\mathbf{A}^{-1} = \mathbf{A}^{*}$), and normal ($\mathbf{A}^{*}\mathbf{A} = \mathbf{A}\mathbf{A}^{*}$). The determinant of any orthogonal matrix is either $+1$ or $-1$. A special orthogonal matrix is an orthogonal matrix with determinant $+1$. As a linear transformation, every orthogonal matrix with determinant $+1$ is a pure rotation without reflection, i.e., the transformation preserves the orientation of the transformed structure, while every orthogonal matrix with determinant $-1$ reverses the orientation, i.e., is a composition of a pure reflection and a (possibly null) rotation. The identity matrices have determinant 1 and are pure rotations by an angle zero.

The complex analog of an orthogonal matrix is a unitary matrix.

Main operations

Trace

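The trace, $\operatorname{tr}(\mathbf{A})$, of a square matrix $\mathbf{A}$ is the sum of its diagonal entries. While matrix multiplication is not commutative as mentioned above, the trace of the product of two matrices is independent of the order of the factors:

$$\operatorname{tr}(\mathbf{AB}) = \operatorname{tr}(\mathbf{BA}).$$

This is immediate from the definition of matrix multiplication:

$$\operatorname{tr}(\mathbf{AB}) = \sum_{i=1}^{m} \sum_{j=1}^{n} a_{ij} b_{ji} = \operatorname{tr}(\mathbf{BA}).$$

It follows that the trace of the product of more than two matrices is independent of cyclic permutations of the matrices; however, this does not in general apply for arbitrary permutations (for example, $\operatorname{tr}(\mathbf{ABC}) \ne \operatorname{tr}(\mathbf{BAC})$, in general). Also, the trace of a matrix is equal to that of its transpose, that is,

$$\operatorname{tr}(\mathbf{A}) = \operatorname{tr}(\mathbf{A}^{\mathsf{T}}).$$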
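The behaviour of the trace under reordering of factors can be illustrated on small matrices. In the Python sketch below, the three matrices are arbitrary choices made for this example, and `trace` and `matmul` are hypothetical helper names.

```python
def matmul(A, B):
    """Matrix product of A and B (lists of rows)."""
    return [[sum(A[i][r] * B[r][j] for r in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def trace(A):
    """Sum of the diagonal entries of a square matrix."""
    return sum(A[i][i] for i in range(len(A)))


A = [[1, 2], [3, 4]]
B = [[0, 1], [0, 0]]
C = [[1, 0], [0, 0]]

# AB and BA differ as matrices, yet their traces agree.
print(matmul(A, B), matmul(B, A))
print(trace(matmul(A, B)), trace(matmul(B, A)))

# Cyclic permutations of a triple product leave the trace unchanged ...
abc = trace(matmul(matmul(A, B), C))
bca = trace(matmul(matmul(B, C), A))
cab = trace(matmul(matmul(C, A), B))
print(abc, bca, cab)

# ... but a non-cyclic reordering may change it.
bac = trace(matmul(matmul(B, A), C))
print(bac)
```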

Determinant


The determinant of a square matrix $\mathbf{A}$ (denoted $\det(\mathbf{A})$ or $|\mathbf{A}|$) is a number encoding certain properties of the matrix. A matrix is invertible if and only if its determinant is nonzero. Its absolute value equals the area (in $\mathbb{R}^2$) or volume (in $\mathbb{R}^3$) of the image of the unit square (or cube), while its sign corresponds to the orientation of the corresponding linear map: the determinant is positive if and only if the orientation is preserved.

The determinant of 2-by-2 matrices is given by

$$\det \begin{bmatrix} a & b \\ c & d \end{bmatrix} = ad - bc.$$

The determinant of 3-by-3 matrices involves 6 terms (rule of Sarrus). The more lengthy Leibniz formula generalizes these two formulae to all dimensions.[34]

The determinant of a product of square matrices equals the product of their determinants:

$$\det(\mathbf{AB}) = \det(\mathbf{A}) \cdot \det(\mathbf{B}),$$

or using alternate notation:[35]

$$|\mathbf{AB}| = |\mathbf{A}| \cdot |\mathbf{B}|.$$

Adding a multiple of any row to another row, or a multiple of any column to another column, does not change the determinant. Interchanging two rows or two columns affects the determinant by multiplying it by −1.[36] Using these operations, any matrix can be transformed to a lower (or upper) triangular matrix, and for such matrices, the determinant equals the product of the entries on the main diagonal; this provides a method to calculate the determinant of any matrix. Finally, the Laplace expansion expresses the determinant in terms of minors, that is, determinants of smaller matrices.[37] This expansion can be used for a recursive definition of determinants (taking as starting case the determinant of a 1-by-1 matrix, which is its unique entry, or even the determinant of a 0-by-0 matrix, which is 1), that can be seen to be equivalent to the Leibniz formula. Determinants can be used to solve linear systems using Cramer's rule, where the division of the determinants of two related square matrices equates to the value of each of the system's variables.[38]
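The recursive definition via Laplace expansion can be written down compactly. The following Python sketch expands along the first row and takes the determinant of the 0-by-0 matrix to be 1; the function names and the example matrices are assumptions of this illustration. The approach takes a number of steps that grows factorially with the size, so it is suitable only for very small matrices.

```python
def det(A):
    """Determinant by Laplace expansion along the first row.
    The empty (0-by-0) matrix has determinant 1."""
    n = len(A)
    if n == 0:
        return 1
    total = 0
    for j in range(n):
        # Minor: delete the first row and column j.
        minor = [row[:j] + row[j + 1:] for row in A[1:]]
        total += (-1) ** j * A[0][j] * det(minor)
    return total


def matmul(A, B):
    """Matrix product of A and B (lists of rows)."""
    return [[sum(A[i][r] * B[r][j] for r in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


A = [[2, 0, 1], [1, 3, 2], [1, 1, 4]]
B = [[1, 2, 0], [0, 1, 0], [3, 0, 2]]

print(det([[1, 2], [3, 4]]))         # ad - bc for a 2-by-2 matrix
print(det(A), det(B), det(matmul(A, B)))

# Interchanging two rows multiplies the determinant by -1.
print(det([A[1], A[0], A[2]]))

# Adding a multiple of one row to another leaves it unchanged.
row_added = [A[0], [x + 5 * y for x, y in zip(A[1], A[0])], A[2]]
print(det(row_added))
```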

Eigenvalues and eigenvectors

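A number $\lambda$ and a non-zero vector $\mathbf{v}$ satisfying

$$\mathbf{Av} = \lambda \mathbf{v}$$

are called an eigenvalue and an eigenvector of $\mathbf{A}$, respectively.[39][40] The number $\lambda$ is an eigenvalue of an $n \times n$ matrix $\mathbf{A}$ if and only if $(\mathbf{A} - \lambda \mathbf{I}_n)$ is not invertible, which is equivalent to

$$\det(\mathbf{A} - \lambda \mathbf{I}_n) = 0.$$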

The polynomial $p_{\mathbf{A}}$ in an indeterminate $X$ given by evaluation of the determinant $\det(X \mathbf{I}_n - \mathbf{A})$ is called the characteristic polynomial of $\mathbf{A}$. It is a monic polynomial of degree $n$. Therefore the polynomial equation $p_{\mathbf{A}}(\lambda) = 0$ has at most $n$ different solutions, that is, eigenvalues of the matrix.[42] They may be complex even if the entries of $\mathbf{A}$ are real. According to the Cayley–Hamilton theorem, $p_{\mathbf{A}}(\mathbf{A}) = \mathbf{0}$, that is, the result of substituting the matrix itself into its characteristic polynomial yields the zero matrix.
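For a 2-by-2 matrix the characteristic polynomial can be written out by hand as $X^2 - \operatorname{tr}(\mathbf{A}) X + \det(\mathbf{A})$, which makes the statements above easy to check. The Python sketch below is restricted to the 2-by-2 case; the function names and the two example matrices are assumptions of this illustration.

```python
import cmath


def char_poly_2x2(A):
    """Coefficients (1, p, q) of det(X I - A) = X^2 + p X + q,
    where p = -tr(A) and q = det(A)."""
    (a, b), (c, d) = A
    return (1, -(a + d), a * d - b * c)


def eigenvalues_2x2(A):
    """Roots of the characteristic polynomial via the quadratic formula.
    cmath.sqrt is used so that complex roots are handled as well."""
    _, p, q = char_poly_2x2(A)
    root = cmath.sqrt(p * p - 4 * q)
    return ((-p + root) / 2, (-p - root) / 2)


def char_poly_at_matrix(A):
    """Evaluate p_A(A) = A^2 + p A + q I, which Cayley-Hamilton says is 0."""
    _, p, q = char_poly_2x2(A)
    (a, b), (c, d) = A
    A2 = [[a * a + b * c, a * b + b * d],
          [c * a + d * c, c * b + d * d]]
    identity = [[1, 0], [0, 1]]
    return [[A2[i][j] + p * A[i][j] + q * identity[i][j] for j in range(2)]
            for i in range(2)]


S = [[2, 1], [1, 2]]    # real symmetric: real eigenvalues
R = [[0, -1], [1, 0]]   # rotation by 90 degrees: no real eigenvalues

print(eigenvalues_2x2(S))
print(eigenvalues_2x2(R))
print(char_poly_at_matrix(S), char_poly_at_matrix(R))
```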

Computational aspects

Matrix calculations can often be performed with different techniques. Many problems can be solved by both direct algorithms and iterative approaches. For example, the eigenvectors of a square matrix can be obtained by finding a sequence of vectors $\mathbf{x}_n$ converging to an eigenvector when $n$ tends to infinity.[43]

To choose the most appropriate algorithm for each specific problem, it is important to determine both the effectiveness and precision of all the available algorithms. The domain studying these matters is called numerical linear algebra.[44] As with other numerical situations, two main aspects are the complexity of algorithms and their numerical stability.

Determining the complexity of an algorithm means finding upper bounds or estimates of how many elementary operations such as additions and multiplications of scalars are necessary to perform some algorithm, for example, multiplication of matrices. Calculating the matrix product of two $n$-by-$n$ matrices using the definition given above needs $n^3$ multiplications, since for any of the $n^2$ entries of the product, $n$ multiplications are necessary. The Strassen algorithm outperforms this "naive" algorithm; it needs only $n^{2.807}$ multiplications.[45] A refined approach also incorporates specific features of the computing devices.

In many practical situations, additional information about the matrices involved is known. An important case is sparse matrices, that is, matrices most of whose entries are zero. There are specifically adapted algorithms for, say, solving linear systems $\mathbf{Ax} = \mathbf{b}$ for sparse matrices $\mathbf{A}$, such as the conjugate gradient method.[46]

An algorithm is, roughly speaking, numerically stable if small deviations in the input values do not lead to large deviations in the result. For example, calculating the inverse of a matrix via Laplace expansion ($\operatorname{adj}(\mathbf{A})$ denotes the adjugate matrix of $\mathbf{A}$)

$$\mathbf{A}^{-1} = \operatorname{adj}(\mathbf{A}) / \det(\mathbf{A})$$

may lead to significant rounding errors if the determinant of the matrix is very small. The norm of a matrix can be used to capture the conditioning of linear algebraic problems, such as computing a matrix's inverse.[47]

Most computer programming languages support arrays but are not designed with built-in commands for matrices. Instead, available external libraries provide matrix operations on arrays, in nearly all currently used programming languages. Matrix manipulation was among the earliest numerical applications of computers.[48] The original Dartmouth BASIC had built-in commands for matrix arithmetic on arrays from its second edition implementation in 1964. As early as the 1970s, some engineering desktop computers such as the HP 9830 had ROM cartridges to add BASIC commands for matrices. Some computer languages such as APL were designed to manipulate matrices, and various mathematical programs can be used to aid computing with matrices.[49] As of 2023, most computers have some form of built-in matrix operations at a low level implementing the standard BLAS specification, upon which most higher-level matrix and linear algebra libraries (e.g., EISPACK, LINPACK, LAPACK) rely. While most of these libraries require a professional level of coding, LAPACK can be accessed by higher-level (and user-friendly) bindings such as NumPy/SciPy, R, GNU Octave, and MATLAB.

Decomposition

There are several methods to render matrices into a more easily accessible form. They are generally referred to as matrix decomposition or matrix factorization techniques. The interest of all these techniques is that they preserve certain properties of the matrices in question, such as determinant, rank, or inverse, so that these quantities can be calculated after applying the transformation, or that certain matrix operations are algorithmically easier to carry out for some types of matrices.

The LU decomposition factors matrices as a product of a lower ($\mathbf{L}$) and an upper ($\mathbf{U}$) triangular matrix.[50] Once this decomposition is calculated, linear systems can be solved more efficiently, by a simple technique called forward and back substitution. Likewise, inverses of triangular matrices are algorithmically easier to calculate. Gaussian elimination is a similar algorithm; it transforms any matrix to row echelon form.[51] Both methods proceed by multiplying the matrix by suitable elementary matrices, which correspond to permuting rows or columns and adding multiples of one row to another row. Singular value decomposition expresses any matrix $\mathbf{A}$ as a product $\mathbf{UDV}^{*}$, where $\mathbf{U}$ and $\mathbf{V}$ are unitary matrices and $\mathbf{D}$ is a diagonal matrix.

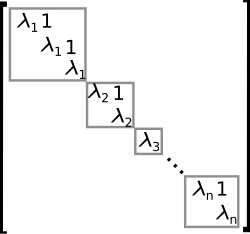

The eigendecomposition or diagonalization expresses $\mathbf{A}$ as a product $\mathbf{VDV}^{-1}$, where $\mathbf{D}$ is a diagonal matrix and $\mathbf{V}$ is a suitable invertible matrix.[52] If $\mathbf{A}$ can be written in this form, it is called diagonalizable. More generally, and applicable to all matrices, the Jordan decomposition transforms a matrix into Jordan normal form, that is to say matrices whose only nonzero entries are the eigenvalues $\lambda_1$ to $\lambda_n$ of $\mathbf{A}$, placed on the main diagonal, and possibly entries equal to one directly above the main diagonal, as shown at the right.[53] Given the eigendecomposition, the $n$th power of $\mathbf{A}$ (that is, $n$-fold iterated matrix multiplication) can be calculated via

$$\mathbf{A}^n = (\mathbf{VDV}^{-1})^n = \mathbf{VDV}^{-1}\mathbf{VDV}^{-1} \cdots \mathbf{VDV}^{-1} = \mathbf{VD}^n\mathbf{V}^{-1},$$

and the power of a diagonal matrix can be calculated by taking the corresponding powers of the diagonal entries, which is much easier than doing the exponentiation for $\mathbf{A}$ instead. This can be used to compute the matrix exponential $e^{\mathbf{A}}$, a need frequently arising in solving linear differential equations, matrix logarithms and square roots of matrices.[54] To avoid numerically ill-conditioned situations, further algorithms such as the Schur decomposition can be employed.[55]
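The shortcut for matrix powers can be illustrated on a small diagonalizable matrix. In the Python sketch below, the matrix $\begin{bmatrix} 2 & 1 \\ 1 & 2 \end{bmatrix}$ is an illustrative choice whose eigenvalues 3 and 1 and eigenvectors $(1, 1)$ and $(1, -1)$ were worked out by hand and are hard-coded; the helper names are hypothetical. The result is compared with plain repeated multiplication.

```python
from fractions import Fraction


def matmul(A, B):
    """Matrix product of A and B (lists of rows)."""
    return [[sum(A[i][r] * B[r][j] for r in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def diagonal_power(diagonal, n):
    """n-th power of a diagonal matrix: raise each diagonal entry to n."""
    size = len(diagonal)
    return [[diagonal[i] ** n if i == j else 0 for j in range(size)]
            for i in range(size)]


# Hand-computed eigendecomposition A = V D V^{-1} of the example matrix.
A = [[2, 1], [1, 2]]
V = [[1, 1], [1, -1]]                       # columns are eigenvectors
V_inv = [[Fraction(1, 2), Fraction(1, 2)],
         [Fraction(1, 2), Fraction(-1, 2)]]
eigenvalues = [3, 1]                        # diagonal of D


def power_via_eigendecomposition(n):
    """Compute A^n as V D^n V^{-1}."""
    return matmul(matmul(V, diagonal_power(eigenvalues, n)), V_inv)


def power_by_repeated_multiplication(M, n):
    """Compute M^n by n successive multiplications."""
    result = [[1, 0], [0, 1]]
    for _ in range(n):
        result = matmul(result, M)
    return result


print(power_via_eigendecomposition(1) == A)   # sanity check of V, D, V^{-1}
print(power_by_repeated_multiplication(A, 5))
print(power_via_eigendecomposition(5) == power_by_repeated_multiplication(A, 5))
```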

Abstract algebraic aspects and generalizations

Matrices can be generalized in different ways. Abstract algebra uses matrices with entries in more general fields or even rings, while linear algebra codifies properties of matrices in the notion of linear maps. It is possible to consider matrices with infinitely many columns and rows. Another extension is tensors, which can be seen as higher-dimensional arrays of numbers, as opposed to vectors, which can often be realized as sequences of numbers, while matrices are rectangular or two-dimensional arrays of numbers.[56] Matrices, subject to certain requirements, tend to form groups known as matrix groups. Similarly, under certain conditions matrices form rings known as matrix rings. Though the product of matrices is not in general commutative, certain matrices form fields known as matrix fields. In general, matrices and their multiplication also form a category, the category of matrices.

Matrices with more general entries

This article focuses on matrices whose entries are real or complex numbers. However, matrices can be considered with much more general types of entries than real or complex numbers. As a first step of generalization, any field, that is, a set where addition, subtraction, multiplication, and division operations are defined and well-behaved, may be used instead of $\mathbb{R}$ or $\mathbb{C}$, for example rational numbers or finite fields. For example, coding theory makes use of matrices over finite fields. Wherever eigenvalues are considered, as these are roots of a polynomial they may exist only in a larger field than that of the entries of the matrix; for instance, they may be complex in the case of a matrix with real entries. The possibility to reinterpret the entries of a matrix as elements of a larger field (for example, to view a real matrix as a complex matrix whose entries happen to be all real) then allows considering each square matrix to possess a full set of eigenvalues. Alternatively one can consider only matrices with entries in an algebraically closed field, such as $\mathbb{C}$, from the outset.

More generally, matrices with entries in a ring $R$ are widely used in mathematics.[57] Rings are a more general notion than fields in that a division operation need not exist. The very same addition and multiplication operations of matrices extend to this setting, too. The set $M(n, R)$ (also denoted $M_n(R)$[7]) of all square $n$-by-$n$ matrices over $R$ is a ring called matrix ring, isomorphic to the endomorphism ring of the left $R$-module $R^n$.[58] If the ring $R$ is commutative, that is, its multiplication is commutative, then the ring $M(n, R)$ is also an associative algebra over $R$. The determinant of square matrices over a commutative ring $R$ can still be defined using the Leibniz formula; such a matrix is invertible if and only if its determinant is invertible in $R$, generalizing the situation over a field $F$, where every nonzero element is invertible.[59] Matrices over superrings are called supermatrices.[60]

Matrices do not always have all their entries in the same ring, or even in any ring at all. One special but common case is block matrices, which may be considered as matrices whose entries themselves are matrices. The entries need not be square matrices, and thus need not be members of any ring; but their sizes must fulfill certain compatibility conditions.

Relationship to linear maps

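Linear maps $\mathbb{R}^n \to \mathbb{R}^m$ are equivalent to $m$-by-$n$ matrices, as described above. More generally, any linear map $f: V \to W$ between finite-dimensional vector spaces can be described by a matrix $\mathbf{A} = (a_{ij})$, after choosing bases $\mathbf{v}_1, \ldots, \mathbf{v}_n$ of $V$, and $\mathbf{w}_1, \ldots, \mathbf{w}_m$ of $W$ (so $n$ is the dimension of $V$ and $m$ is the dimension of $W$), which is such that

$$f(\mathbf{v}_j) = \sum_{i=1}^{m} a_{i,j} \mathbf{w}_i \quad \text{for } j = 1, \ldots, n.$$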

In other words, column $j$ of $\mathbf{A}$ expresses the image of $\mathbf{v}_j$ in terms of the basis vectors $\mathbf{w}_i$ of $W$; thus this relation uniquely determines the entries of the matrix $\mathbf{A}$. The matrix depends on the choice of the bases: different choices of bases give rise to different, but equivalent matrices.[61] Many of the above concrete notions can be reinterpreted in this light, for example, the transpose matrix $\mathbf{A}^{\mathsf{T}}$ describes the transpose of the linear map given by $\mathbf{A}$, concerning the dual bases.[62]

These properties can be restated more naturally: the category of matrices with entries in a field $k$ with multiplication as composition is equivalent to the category of finite-dimensional vector spaces and linear maps over this field.[63]

More generally, the set of $m \times n$ matrices can be used to represent the $R$-linear maps between the free modules $R^m$ and $R^n$ for an arbitrary ring $R$ with unity. When $n = m$, composition of these maps is possible, and this gives rise to the matrix ring of $n \times n$ matrices representing the endomorphism ring of $R^n$.

Matrix groups

A group is a mathematical structure consisting of a set of objects together with a binary operation, that is, an operation combining any two objects to a third, subject to certain requirements.[64] A group in which the objects are matrices and the group operation is matrix multiplication is called a matrix group.[65][66] Since in a group every element must be invertible, the most general matrix groups are the groups of all invertible matrices of a given size, called the general linear groups.

Any property of matrices that is preserved under matrix products and inverses can be used to define further matrix groups. For example, matrices with a given size and with a determinant of 1 form a subgroup of (that is, a smaller group contained in) their general linear group, called a special linear group.[67] Orthogonal matrices, determined by the condition

$$\mathbf{M}^{\mathsf{T}} \mathbf{M} = \mathbf{I},$$

form the orthogonal group.[68] Every orthogonal matrix has determinant 1 or −1. Orthogonal matrices with determinant 1 form a subgroup called special orthogonal group.

Every finite group is isomorphic to a matrix group, as one can see by considering the regular representation of the symmetric group.[69] General groups can be studied using matrix groups, which are comparatively well understood, using representation theory.[70]

Infinite matrices

It is also possible to consider matrices with infinitely many rows and/or columns,[71] even though, being infinite objects, one cannot write down such matrices explicitly. All that matters is that for every element in the set indexing rows, and every element in the set indexing columns, there is a well-defined entry (these index sets need not even be subsets of the natural numbers). The basic operations of addition, subtraction, scalar multiplication, and transposition can still be defined without problem; however, matrix multiplication may involve infinite summations to define the resulting entries, and these are not defined in general.

If R is any ring with unity, then the ring of endomorphisms of $M = \bigoplus_{i \in I} R$ as a right R-module is isomorphic to the ring of column finite matrices whose entries are indexed by $I \times I$, and whose columns each contain only finitely many nonzero entries. The endomorphisms of M considered as a left R-module result in an analogous object, the row finite matrices whose rows each only have finitely many nonzero entries.

If infinite matrices are used to describe linear maps, then only those matrices can be used all of whose columns have only a finite number of nonzero entries, for the following reason. For a matrix A to describe a linear map f : V → W, bases for both spaces must have been chosen; recall that by definition this means that every vector in the space can be written uniquely as a (finite) linear combination of basis vectors, so that written as a (column) vector v of coefficients, only finitely many entries $v_i$ are nonzero. Now the columns of A describe the images by f of individual basis vectors of V in the basis of W, which is only meaningful if these columns have only finitely many nonzero entries. There is no restriction on the rows of A, however: in the product A·v there are only finitely many nonzero coefficients of v involved, so every one of its entries, even if it is given as an infinite sum of products, involves only finitely many nonzero terms and is therefore well defined. Moreover, this amounts to forming a linear combination of the columns of A that effectively involves only finitely many of them, whence the result has only finitely many nonzero entries because each of those columns does. Products of two matrices of the given type are well defined (provided that the column-index and row-index sets match), are of the same type, and correspond to the composition of linear maps.

If R is a normed ring, then the condition of row or column finiteness can be relaxed. With the norm in place, absolutely convergent series can be used instead of finite sums. For example, the matrices whose column sums are convergent sequences form a ring. Analogously, the matrices whose row sums are convergent series also form a ring.

Infinite matrices can also be used to describe operators on Hilbert spaces, where convergence and continuity questions arise, which again results in certain constraints that must be imposed. However, the explicit point of view of matrices tends to obfuscate the matter,[72] and the abstract and more powerful tools of functional analysis can be used instead.

Empty matrix

An empty matrix is a matrix in which the number of rows or columns (or both) is zero.[73][74] Empty matrices help to deal with maps involving the zero vector space. For example, if A is a 3-by-0 matrix and B is a 0-by-3 matrix, then AB is the 3-by-3 zero matrix corresponding to the null map from a 3-dimensional space V to itself, while BA is a 0-by-0 matrix. There is no common notation for empty matrices, but most computer algebra systems allow creating and computing with them. The determinant of the 0-by-0 matrix is 1, which follows from regarding the empty product occurring in the Leibniz formula for the determinant as 1. This value is also consistent with the fact that the identity map from any finite-dimensional space to itself has determinant 1, a fact that is often used as a part of the characterization of determinants.
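The two claims above (AB is the 3-by-3 zero matrix, and the 0-by-0 determinant is 1) can be traced through a direct implementation. This is a sketch in plain Python, assuming matrices are stored as lists of rows; because such a list cannot record the width of a matrix with zero rows, the hypothetical `matmul` helper takes the inner and column dimensions explicitly. The determinant is computed by the Leibniz formula as a sum over permutations.

```python
from itertools import permutations
from math import prod

def matmul(a, b, inner, cols):
    """Multiply a (rows x inner) by b (inner x cols).

    Shapes are passed explicitly because a list of rows cannot record
    the width of a matrix that has zero rows.
    """
    return [[sum(a[i][k] * b[k][j] for k in range(inner))
             for j in range(cols)] for i in range(len(a))]

def sign(perm):
    """Sign of a permutation, computed by counting inversions."""
    inversions = sum(1 for i in range(len(perm))
                     for j in range(i + 1, len(perm)) if perm[i] > perm[j])
    return -1 if inversions % 2 else 1

def leibniz_det(m):
    """Determinant via the Leibniz formula (sum over all permutations)."""
    n = len(m)
    return sum(sign(p) * prod(m[i][p[i]] for i in range(n))
               for p in permutations(range(n)))

a = [[], [], []]   # 3-by-0 matrix: three rows, each with no entries
b = []             # 0-by-3 matrix: no rows at all

ab = matmul(a, b, inner=0, cols=3)   # 3-by-3; every entry is an empty sum
ba = matmul(b, a, inner=3, cols=0)   # 0-by-0

print(ab)
print(ba, leibniz_det(ba))
```

For the 0-by-0 case there is exactly one permutation of the empty index set, and its term is an empty product, so the sum has a single term equal to 1.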

Applications

There are numerous applications of matrices, both in mathematics and other sciences. Some of them merely take advantage of the compact representation of a set of numbers in a matrix. For example, in game theory and economics, the payoff matrix encodes the payoff for two players, depending on which out of a given (finite) set of strategies the players choose.[75] Text mining and automated thesaurus compilation make use of document-term matrices such as tf-idf to track frequencies of certain words in several documents.[76]

Complex numbers can be represented by particular real 2-by-2 matrices via

$$a + ib \leftrightarrow \begin{bmatrix} a & -b \\ b & a \end{bmatrix},$$

under which addition and multiplication of complex numbers and matrices correspond to each other. For example, 2-by-2 rotation matrices represent the multiplication with some complex number of absolute value 1, as above. A similar interpretation is possible for quaternions[77] and Clifford algebras in general.
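The correspondence between complex arithmetic and matrix arithmetic can be verified on a concrete pair of numbers. The sketch below assumes the standard representation of a + ib by the real matrix with rows (a, −b) and (b, a); the helper names are illustrative only.

```python
def to_matrix(z):
    """Represent a + ib as the real 2-by-2 matrix [[a, -b], [b, a]]."""
    return [[z.real, -z.imag], [z.imag, z.real]]

def matmul2(p, q):
    """Product of two 2-by-2 matrices."""
    return [[sum(p[i][k] * q[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def matadd2(p, q):
    """Entrywise sum of two 2-by-2 matrices."""
    return [[p[i][j] + q[i][j] for j in range(2)] for i in range(2)]

z, w = complex(1, 2), complex(3, -1)

# Multiplying (or adding) the matrices gives the matrix of the
# product (or sum) of the complex numbers.
print(matmul2(to_matrix(z), to_matrix(w)) == to_matrix(z * w))
print(matadd2(to_matrix(z), to_matrix(w)) == to_matrix(z + w))
```

The example uses small integer-valued parts so that the floating-point comparison is exact; for general inputs a tolerance would be needed.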

Early encryption techniques such as the Hill cipher also used matrices. However, due to the linear nature of matrices, these codes are comparatively easy to break.[78] Computer graphics uses matrices to represent objects; to calculate transformations of objects using affine rotation matrices to accomplish tasks such as projecting a three-dimensional object onto a two-dimensional screen, corresponding to a theoretical camera observation; and to apply image convolutions such as sharpening, blurring, edge detection, and more.[79] Matrices over a polynomial ring are important in the study of control theory.

Chemistry makes use of matrices in various ways, particularly since the use of quantum theory to discuss molecular bonding and spectroscopy. Examples are the overlap matrix and the Fock matrix used in solving the Roothaan equations to obtain the molecular orbitals of the Hartree–Fock method.

Graph theory


The adjacency matrix of a finite graph is a basic notion of graph theory.[80] It records which vertices of the graph are connected by an edge. Matrices containing just two different values (1 and 0 meaning for example "yes" and "no", respectively) are called logical matrices. The distance (or cost) matrix contains information about the distances of the edges.[81] These concepts can be applied to websites connected by hyperlinks or cities connected by roads etc., in which case (unless the connection network is extremely dense) the matrices tend to be sparse, that is, contain few nonzero entries. Therefore, specifically tailored matrix algorithms can be used in network theory.
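As a small illustration, the sketch below builds the adjacency matrix of a hypothetical four-vertex path graph (0 – 1 – 2 – 3) and multiplies it with itself. It relies on the standard fact that entry (i, j) of the k-th power of an adjacency matrix counts the walks of length k from vertex i to vertex j; the graph and all names are made up for the example.

```python
def matmul(a, b):
    """Product of two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

# Undirected path graph 0 - 1 - 2 - 3 (an assumed toy example).
edges = [(0, 1), (1, 2), (2, 3)]
n = 4
adjacency = [[0] * n for _ in range(n)]
for u, v in edges:
    adjacency[u][v] = adjacency[v][u] = 1   # symmetric: edges are undirected

walks2 = matmul(adjacency, adjacency)   # walks of length 2
walks3 = matmul(walks2, adjacency)      # walks of length 3

# Only 6 of the 16 entries are nonzero, so the matrix is already sparse.
nonzero = sum(1 for row in adjacency for x in row if x)

print(walks2[0][2], walks3[0][3], nonzero)
```

A dense list-of-rows layout is used here only for readability; for large sparse graphs a representation that stores just the nonzero entries would be the more natural choice.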

Analysis and geometry

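The Hessian matrix of a differentiable function $f\colon \mathbb{R}^n \to \mathbb{R}$ consists of the second derivatives of f with respect to the several coordinate directions, that is,[82]

$$H(f) = \left[\frac{\partial^2 f}{\partial x_i \, \partial x_j}\right].$$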

It encodes information about the local growth behavior of the function: given a critical point $x = (x_1, \ldots, x_n)$, that is, a point where the first partial derivatives of f vanish, the function has a local minimum if the Hessian matrix is positive definite. Quadratic programming can be used to find global minima or maxima of quadratic functions closely related to the ones attached to matrices (see above).[83]

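Another matrix frequently used in geometrical situations is the Jacobi matrix of a differentiable map $f\colon \mathbb{R}^n \to \mathbb{R}^m$. If $f_1, \ldots, f_m$ denote the components of f, then the Jacobi matrix is defined as[84]

$$J(f) = \left[\frac{\partial f_i}{\partial x_j}\right]_{1 \le i \le m,\ 1 \le j \le n}.$$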

If n > m, and if the rank of the Jacobi matrix attains its maximal value m, f is locally invertible at that point, by the implicit function theorem.[85]

Partial differential equations can be classified by considering the matrix of coefficients of the highest-order differential operators of the equation. For elliptic partial differential equations this matrix is positive definite, which has a decisive influence on the set of possible solutions of the equation in question.[86]

The finite element method is an important numerical method to solve partial differential equations, widely applied in simulating complex physical systems. It attempts to approximate the solution to some equation by piecewise linear functions, where the pieces are chosen with respect to a sufficiently fine grid, which in turn can be recast as a matrix equation.[87]

Probability theory and statistics


Stochastic matrices are square matrices whose rows are probability vectors, that is, whose entries are non-negative and sum up to one. Stochastic matrices are used to define Markov chains with finitely many states.[88] A row of the stochastic matrix gives the probability distribution for the next position of some particle currently in the state that corresponds to the row. Properties of the Markov chain, like absorbing states, that is, states that any particle attains eventually, can be read off the eigenvectors of the transition matrices.[89]
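A short simulation shows how a row-stochastic matrix drives a Markov chain towards an absorbing state. The three-state transition matrix below is an invented example (state 2 is absorbing), and `step` is a hypothetical helper that multiplies a row vector of probabilities by the matrix.

```python
def step(dist, p):
    """One step of the chain: row vector times transition matrix."""
    n = len(p)
    return [sum(dist[i] * p[i][j] for i in range(n)) for j in range(n)]

# Each row is a probability vector: the distribution of the next state.
P = [
    [0.5,  0.5, 0.0],
    [0.25, 0.5, 0.25],
    [0.0,  0.0, 1.0],   # state 2 is absorbing: it only returns to itself
]

dist = [1.0, 0.0, 0.0]  # start in state 0 with certainty
for _ in range(200):
    dist = step(dist, P)

print([round(x, 6) for x in dist])
```

The limiting distribution (0, 0, 1) is left unchanged by a further step, which is the sense in which it is a (left) eigenvector of the transition matrix for the eigenvalue 1.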

Statistics also makes use of matrices in many different forms.[90] Descriptive statistics is concerned with describing data sets, which can often be represented as data matrices, which may then be subjected to dimensionality reduction techniques. The covariance matrix encodes the mutual variance of several random variables.[91] Another technique using matrices is linear least squares, a method that approximates a finite set of pairs $(x_1, y_1), (x_2, y_2), \ldots, (x_N, y_N)$ by a linear function

$$y_i \approx a x_i + b, \quad i = 1, \ldots, N,$$

which can be formulated in terms of matrices, related to the singular value decomposition of matrices.[92]
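One way to see the matrix formulation is through the normal equations. The sketch below fits a line y ≈ a·x + b by solving the 2-by-2 system (XᵀX)β = Xᵀy, where the design matrix X is assumed to have rows (x_i, 1). Note that this uses the normal equations with Cramer's rule rather than the singular value decomposition mentioned above, purely because it fits in a few lines; `fit_line` is a made-up name.

```python
def fit_line(points):
    """Least-squares line y = a*x + b through a list of (x, y) pairs.

    Solves the normal equations (X^T X) [a, b]^T = X^T y, where each
    row of the design matrix X is (x_i, 1).
    """
    n = len(points)
    sx = sum(x for x, _ in points)
    sy = sum(y for _, y in points)
    sxx = sum(x * x for x, _ in points)
    sxy = sum(x * y for x, y in points)

    # X^T X = [[sxx, sx], [sx, n]] and X^T y = [sxy, sy].
    det = sxx * n - sx * sx
    if det == 0:
        raise ValueError("all x values coincide; the line is not determined")

    # Cramer's rule for the 2-by-2 system.
    a = (sxy * n - sx * sy) / det
    b = (sxx * sy - sx * sxy) / det
    return a, b

exact = fit_line([(0, 1), (1, 3), (2, 5), (3, 7)])   # points on y = 2x + 1
noisy = fit_line([(0, 1), (1, 2), (2, 2)])

print(exact, noisy)
```

For ill-conditioned data, forming XᵀX explicitly loses accuracy, which is one reason decompositions of X itself are generally preferred in numerical practice.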

Random matrices are matrices whose entries are random numbers, subject to suitable probability distributions, such as the matrix normal distribution. Beyond probability theory, they are applied in domains ranging from number theory to physics.[93][94]

Symmetries and transformations in physics

Linear transformations and the associated symmetries play a key role in modern physics. For example, elementary particles in quantum field theory are classified as representations of the Lorentz group of special relativity and, more specifically, by their behavior under the spin group. Concrete representations involving the Pauli matrices and more general gamma matrices are an integral part of the physical description of fermions, which behave as spinors.[95] For the three lightest quarks, there is a group-theoretical representation involving the special unitary group SU(3); for their calculations, physicists use a convenient matrix representation known as the Gell-Mann matrices, which are also used for the SU(3) gauge group that forms the basis of the modern description of strong nuclear interactions, quantum chromodynamics. The Cabibbo–Kobayashi–Maskawa matrix, in turn, expresses the fact that the basic quark states that are important for weak interactions are not the same as, but linearly related to, the basic quark states that define particles with specific and distinct masses.[96]

Linear combinations of quantum states

The first model of quantum mechanics (Heisenberg, 1925) represented the theory's operators by infinite-dimensional matrices acting on quantum states.[97] This is also referred to as matrix mechanics. One particular example is the density matrix that characterizes the "mixed" state of a quantum system as a linear combination of elementary, "pure" eigenstates.[98]

Another matrix serves as a key tool for describing the scattering experiments that form the cornerstone of experimental particle physics: collision reactions such as occur in particle accelerators, where non-interacting particles head towards each other and collide in a small interaction zone, with a new set of non-interacting particles as the result, can be described as the scalar product of outgoing particle states and a linear combination of ingoing particle states. The linear combination is given by a matrix known as the S-matrix, which encodes all information about the possible interactions between particles.[99]

Normal modes

A general application of matrices in physics is the description of linearly coupled harmonic systems. The equations of motion of such systems can be described in matrix form, with a mass matrix multiplying a generalized velocity to give the kinetic term, and a force matrix multiplying a displacement vector to characterize the interactions. The best way to obtain solutions is to determine the system's eigenvectors, its normal modes, by diagonalizing the matrix equation. Techniques like this are crucial when it comes to the internal dynamics of molecules: the internal vibrations of systems consisting of mutually bound component atoms.[100] They are also needed for describing mechanical vibrations, and oscillations in electrical circuits.[101]
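To make the diagonalization step concrete, the sketch below treats a hypothetical textbook system: two equal masses m joined to each other and to two fixed walls by three identical springs of stiffness k. Under that assumption the force matrix is [[2k, −k], [−k, 2k]] and the mass matrix is m times the identity, so the squared angular frequencies are the eigenvalues of the force matrix divided by m. The closed-form 2-by-2 eigen-solver is an illustrative helper, not a general routine.

```python
import math

def symmetric_2x2_eigen(a, b, d):
    """Eigenvalues and eigenvectors of [[a, b], [b, d]], assuming b != 0."""
    mean = (a + d) / 2
    radius = math.hypot((a - d) / 2, b)
    values = (mean - radius, mean + radius)
    # (b, lam - a) solves (a - lam) * x + b * y = 0 for each eigenvalue lam.
    vectors = [(b, lam - a) for lam in values]
    return values, vectors

k, m = 1.0, 1.0   # assumed spring stiffness and mass (arbitrary units)

# Entries of (mass matrix)^-1 * (force matrix) for the two-mass system.
a, b, d = 2 * k / m, -k / m, 2 * k / m

omega_squared, modes = symmetric_2x2_eigen(a, b, d)
frequencies = [math.sqrt(w2) for w2 in omega_squared]

print(frequencies)
print(modes)
```

The lower-frequency mode has both components of equal sign (the masses move together), while the higher-frequency mode has components of opposite sign (the masses move against each other).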

Geometrical optics

Geometrical optics provides further matrix applications. In this approximative theory, the wave nature of light is neglected. The result is a model in which light rays are indeed geometrical rays. If the deflection of light rays by optical elements is small, the action of a lens or reflective element on a given light ray can be expressed as multiplication of a two-component vector with a two-by-two matrix, a technique called ray transfer matrix analysis: the vector's components are the light ray's slope and its distance from the optical axis, while the matrix encodes the properties of the optical element. There are two kinds of matrices, viz. a refraction matrix describing the refraction at a lens surface, and a translation matrix, describing the translation of the plane of reference to the next refracting surface, where another refraction matrix applies. The optical system, consisting of a combination of lenses and/or reflective elements, is simply described by the matrix resulting from the product of the components' matrices.[102]
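The composition rule can be illustrated with two standard paraxial matrices. The sketch below assumes the ray is stored as (distance from the axis, slope) and, instead of a single refracting surface, uses the common thin-lens matrix [[1, 0], [−1/f, 1]] together with the translation matrix [[1, d], [0, 1]]; the ordering convention, the focal length, and the helper names are assumptions made for this example.

```python
def matmul2(p, q):
    """Product of two 2-by-2 matrices."""
    return [[sum(p[i][k] * q[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]

def apply(m, ray):
    """Apply a ray transfer matrix to a ray (height, slope)."""
    y, slope = ray
    return (m[0][0] * y + m[0][1] * slope, m[1][0] * y + m[1][1] * slope)

def translation(d):
    """Free propagation over a distance d along the optical axis."""
    return [[1.0, d], [0.0, 1.0]]

def thin_lens(f):
    """Thin lens of focal length f (paraxial approximation)."""
    return [[1.0, 0.0], [-1.0 / f, 1.0]]

f = 0.5
# The element met first stands rightmost in the product.
system = matmul2(translation(f), thin_lens(f))

ray_in = (0.02, 0.0)             # parallel to the axis, 0.02 above it
ray_out = apply(system, ray_in)  # after the lens and a distance f

print(ray_out)
```

A ray entering parallel to the axis crosses the axis one focal length behind the lens, so its height component comes out as zero.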

Electronics

Traditional mesh analysis and nodal analysis in electronics lead to a system of linear equations that can be described with a matrix.

The behavior of many electronic components can be described using matrices. Let A be a 2-dimensional vector with the component's input voltage $v_1$ and input current $I_1$ as its elements, and let B be a 2-dimensional vector with the component's output voltage $v_2$ and output current $I_2$ as its elements. Then the behavior of the electronic component can be described by B = H · A, where H is a 2 × 2 matrix containing one impedance element ($h_{12}$), one admittance element ($h_{21}$), and two dimensionless elements ($h_{11}$ and $h_{22}$). Calculating a circuit now reduces to multiplying matrices.

History

Matrices have a long history of application in solving linear equations, but they were known as arrays until the 1800s. The Chinese text The Nine Chapters on the Mathematical Art, written in the 10th–2nd century BCE, is the first example of the use of array methods to solve simultaneous equations,[103] including the concept of determinants. In 1545 Italian mathematician Gerolamo Cardano introduced the method to Europe when he published Ars Magna.[104] The Japanese mathematician Seki used the same array methods to solve simultaneous equations in 1683.[105] The Dutch mathematician Jan de Witt represented transformations using arrays in his 1659 book Elements of Curves.[106] Between 1700 and 1710 Gottfried Wilhelm Leibniz publicized the use of arrays for recording information or solutions and experimented with over 50 different systems of arrays.[104] Cramer presented his rule in 1750.

The term "matrix" (Latin for "womb", "dam" (non-human female animal kept for breeding), "source", "origin", "list", and "register", all derived from mater, "mother"[107]) was coined by James Joseph Sylvester in 1850,[108] who understood a matrix as an object giving rise to several determinants today called minors, that is to say, determinants of smaller matrices that derive from the original one by removing columns and rows. In an 1851 paper, Sylvester explains:[109]

I have in previous papers defined a "Matrix" as a rectangular array of terms, out of which different systems of determinants may be engendered from the womb of a common parent.

Arthur Cayley published a treatise on geometric transformations using matrices that were not rotated versions of the coefficients being investigated as had previously been done. Instead, he defined operations such as addition, subtraction, multiplication, and division as transformations of those matrices and showed the associative and distributive properties held. Cayley investigated and demonstrated the non-commutative property of matrix multiplication as well as the commutative property of matrix addition.[104] Early matrix theory had limited the use of arrays almost exclusively to determinants, and Arthur Cayley's abstract matrix operations were revolutionary. He was instrumental in proposing a matrix concept independent of equation systems. In 1858 Cayley published his A memoir on the theory of matrices,[110][111] in which he proposed and demonstrated the Cayley–Hamilton theorem.[104]

The English mathematician Cuthbert Edmund Cullis was the first to use modern bracket notation for matrices in 1913, and he simultaneously demonstrated the first significant use of the notation $A = [a_{i,j}]$ to represent a matrix where $a_{i,j}$ refers to the ith row and the jth column.[104]

The modern study of determinants sprang from several sources.[112] Number-theoretical problems led Gauss to relate coefficients of quadratic forms, that is, expressions such as $x^2 + xy - 2y^2$, and linear maps in three dimensions to matrices. Eisenstein further developed these notions, including the remark that, in modern parlance, matrix products are non-commutative. Cauchy was the first to prove general statements about determinants, using as the definition of the determinant of a matrix $A = [a_{i,j}]$ the following: replace the powers $a_j^k$ by $a_{jk}$ in the polynomial

$$a_1 a_2 \cdots a_n \prod_{i<j} (a_j - a_i),$$

where $\prod$ denotes the product of the indicated terms. He also showed, in 1829, that the eigenvalues of symmetric matrices are real.[113] Jacobi studied "functional determinants" (later called Jacobi determinants by Sylvester), which can be used to describe geometric transformations at a local (or infinitesimal) level, see above. Kronecker's Vorlesungen über die Theorie der Determinanten[114] and Weierstrass' Zur Determinantentheorie,[115] both published in 1903, first treated determinants axiomatically, as opposed to previous more concrete approaches such as the mentioned formula of Cauchy. At that point, determinants were firmly established.

Many theorems were first established for small matrices only; for example, the Cayley–Hamilton theorem was proved for 2×2 matrices by Cayley in the aforementioned memoir, and by Hamilton for 4×4 matrices. Frobenius, working on bilinear forms, generalized the theorem to all dimensions (1898). Also at the end of the 19th century, the Gauss–Jordan elimination (generalizing a special case now known as Gauss elimination) was established by Wilhelm Jordan. In the early 20th century, matrices attained a central role in linear algebra,[116] partially due to their use in the classification of the hypercomplex number systems of the previous century.

The inception of matrix mechanics by Heisenberg, Born and Jordan led to studying matrices with infinitely many rows and columns.[117] Later, von Neumann carried out the mathematical formulation of quantum mechanics, by further developing functional analytic notions such as linear operators on Hilbert spaces, which, very roughly speaking, correspond to Euclidean space, but with an infinity of independent directions.

Other historical usages of the word "matrix" in mathematics

The word has been used in unusual ways by at least two authors of historical importance.

Bertrand Russell and Alfred North Whitehead in their Principia Mathematica (1910–1913) use the word "matrix" in the context of their axiom of reducibility. They proposed this axiom as a means to reduce any function to one of lower type, successively, so that at the "bottom" (0 order) the function is identical to its extension:[118]

Let us give the name of matrix to any function, of however many variables, that does not involve any apparent variables. Then, any possible function other than a matrix derives from a matrix using generalization, that is, by considering the proposition that the function in question is true with all possible values or with some value of one of the arguments, the other argument or arguments remaining undetermined.

For example, a function Φ(x, y) of two variables x and y can be reduced to a collection of functions of a single variable, for example, y, by "considering" the function for all possible values of "individuals" $a_i$ substituted in place of a variable x. And then the resulting collection of functions of the single variable y, that is, $\forall a_i\colon \Phi(a_i, y)$, can be reduced to a "matrix" of values by "considering" the function for all possible values of "individuals" $b_i$ substituted in place of variable y:

$$\forall b_j\, \forall a_i\colon \Phi(a_i, b_j).$$

Alfred Tarski in his 1946 Introduction to Logic used the word "matrix" synonymously with the notion of truth table as used in mathematical logic.[119]

See also

- List of named matrices

- Algebraic multiplicity – Multiplicity of an eigenvalue as a root of the characteristic polynomial

- Geometric multiplicity – Dimension of the eigenspace associated with an eigenvalue

- Gram–Schmidt process – Orthonormalization of a set of vectors

- Irregular matrix

- Matrix calculus – Specialized notation for multivariable calculus

- Matrix function – Function that maps matrices to matrices

- Matrix multiplication algorithm

- Tensor – A generalization of matrices with any number of indices

- Bohemian matrices – Set of matrices

- Category of matrices – The algebraic structure formed by matrices and their multiplication

Notes

- ^However, in the case of adjacency matrices, matrix multiplication or a variant of it allows the simultaneous computation of the number of paths between any two vertices, and of the shortest length of a path between two vertices.

- ^Lang2002

- ^Fraleigh (1976,p. 209)

- ^Nering (1970,p. 37)

- ^abWeisstein, Eric W."Matrix".mathworld.wolfram.com.Retrieved2020-08-19.

- ^Oualline2003, Ch. 5

- ^abPop; Furdui (2017).Square Matrices of Order 2.Springer International Publishing.ISBN978-3-319-54938-5.

- ^Brown1991, Definition I.2.1 (addition), Definition I.2.4 (scalar multiplication), and Definition I.2.33 (transpose)

- ^Brown1991, Theorem I.2.6

- ^ab"How to Multiply Matrices".www.mathsisfun.com.Retrieved2020-08-19.

- ^Brown1991, Definition I.2.20

- ^Brown1991, Theorem I.2.24

- ^Horn & Johnson1985, Ch. 4 and 5

- ^Bronson (1970,p. 16)

- ^Kreyszig (1972,p. 220)

- ^abProtter & Morrey (1970,p. 869)

- ^Kreyszig (1972,pp. 241, 244)

- ^Schneider, Hans; Barker, George Phillip (2012),Matrices and Linear Algebra,Dover Books on Mathematics, Courier Dover Corporation, p. 251,ISBN978-0-486-13930-2.

- ^Perlis, Sam (1991),Theory of Matrices,Dover books on advanced mathematics, Courier Dover Corporation, p. 103,ISBN978-0-486-66810-9.

- ^Anton, Howard (2010),Elementary Linear Algebra(10th ed.), John Wiley & Sons, p. 414,ISBN978-0-470-45821-1.

- ^Horn, Roger A.; Johnson, Charles R. (2012),Matrix Analysis(2nd ed.), Cambridge University Press, p. 17,ISBN978-0-521-83940-2.

- ^Brown1991, I.2.21 and 22

- ^Greub1975, Section III.2

- ^Brown1991, Definition II.3.3

- ^Greub1975, Section III.1

- ^Brown1991, Theorem II.3.22

- ^Horn & Johnson1985, Theorem 2.5.6

- ^Brown1991, Definition I.2.28

- ^Brown1991, Definition I.5.13

- ^Horn & Johnson1985, Chapter 7

- ^Horn & Johnson1985, Theorem 7.2.1

- ^Horn & Johnson1985, Example 4.0.6, p. 169

- ^"Matrix | mathematics".Encyclopedia Britannica.Retrieved2020-08-19.

- ^Brown1991, Definition III.2.1

- ^Brown1991, Theorem III.2.12

- ^Brown1991, Corollary III.2.16

- ^Mirsky1990, Theorem 1.4.1

- ^Brown1991, Theorem III.3.18

- ^Eigenmeans "own" inGermanand inDutch.

- ^Brown1991, Definition III.4.1

- ^Brown1991, Definition III.4.9

- ^Brown1991, Corollary III.4.10

- ^Householder1975, Ch. 7

- ^Bau III & Trefethen1997

- ^Golub & Van Loan1996, Algorithm 1.3.1

- ^Golub & Van Loan1996, Chapters 9 and 10, esp. section 10.2

- ^Golub & Van Loan1996, Chapter 2.3

- ^Grcar, Joseph F. (2011-01-01)."John von Neumann's Analysis of Gaussian Elimination and the Origins of Modern Numerical Analysis".SIAM Review.53(4): 607–682.doi:10.1137/080734716.ISSN0036-1445.

- ^For example,Mathematica,see Wolfram2003, Ch. 3.7

- ^Press, Flannery & Teukolsky et al.1992

- ^Stoer & Bulirsch2002, Section 4.1

- ^Horn & Johnson1985, Theorem 2.5.4

- ^Horn & Johnson1985, Ch. 3.1, 3.2

- ^Arnold & Cooke1992, Sections 14.5, 7, 8

- ^Bronson1989, Ch. 15

- ^Coburn1955, Ch. V

- ^Lang2002, Chapter XIII

- ^Lang2002, XVII.1, p. 643

- ^Lang2002, Proposition XIII.4.16

- ^Reichl2004, Section L.2

- ^Greub1975, Section III.3

- ^Greub1975, Section III.3.13

- ^Perrone (2024),pp. 99–100

- ^See any standard reference on group theory.

- ^Additionally, the group must beclosedin the general linear group.

- ^Baker2003, Def. 1.30

- ^Baker2003, Theorem 1.2

- ^Artin1991, Chapter 4.5

- ^Rowen2008, Example 19.2, p. 198

- ^See any reference in representation theory orgroup representation.

- ^See the item "Matrix" in Itô, ed. 1987

- ^"Not much of matrix theory carries over to infinite-dimensional spaces, and what does is not so useful, but it sometimes helps." Halmos1982, p. 23, Chapter 5

- ^"Empty Matrix: A matrix is empty if either its row or column dimension is zero",GlossaryArchived2009-04-29 at theWayback Machine,O-Matrix v6 User Guide

- ^"A matrix having at least one dimension equal to zero is called an empty matrix",MATLAB Data StructuresArchived2009-12-28 at theWayback Machine

- ^Fudenberg & Tirole1983, Section 1.1.1

- ^Manning1999, Section 15.3.4

- ^Ward1997, Ch. 2.8

- ^Stinson2005, Ch. 1.1.5 and 1.2.4

- ^Association for Computing Machinery1979, Ch. 7

- ^Godsil & Royle2004, Ch. 8.1

- ^Punnen2002

- ^Lang1987a, Ch. XVI.6

- ^Nocedal2006, Ch. 16

- ^Lang1987a, Ch. XVI.1

- ^Lang1987a, Ch. XVI.5. For a more advanced, and more general statement see Lang1969, Ch. VI.2

- ^Gilbarg & Trudinger2001

- ^Šolin2005, Ch. 2.5. See alsostiffness method.

- ^Latouche & Ramaswami1999

- ^Mehata & Srinivasan1978, Ch. 2.8

- ^Healy, Michael(1986),Matrices for Statistics,Oxford University Press,ISBN978-0-19-850702-4

- ^Krzanowski1988, Ch. 2.2., p. 60

- ^Krzanowski1988, Ch. 4.1

- ^Conrey2007

- ^Zabrodin, Brezin & Kazakov et al.2006

- ^Itzykson & Zuber1980, Ch. 2

- ^see Burgess & Moore2007, section 1.6.3. (SU(3)), section 2.4.3.2. (Kobayashi–Maskawa matrix)

- ^Schiff1968, Ch. 6

- ^Bohm2001, sections II.4 and II.8

- ^Weinberg1995, Ch. 3

- ^Wherrett1987, part II

- ^Riley, Hobson & Bence1997, 7.17

- ^Guenther1990, Ch. 5

- ^Shen, Crossley & Lun1999cited by Bretscher2005, p. 1

- ^abcdeDiscrete Mathematics4th Ed. Dossey, Otto, Spense, Vanden Eynden, Published by Addison Wesley, October 10, 2001ISBN978-0-321-07912-1,p. 564-565

- ^Needham, Joseph;Wang Ling(1959).Science and Civilisation in China.Vol. III. Cambridge: Cambridge University Press. p. 117.ISBN978-0-521-05801-8.

- ^Discrete Mathematics4th Ed. Dossey, Otto, Spense, Vanden Eynden, Published by Addison Wesley, October 10, 2001ISBN978-0-321-07912-1,p. 564

- ^Merriam-Webster dictionary,Merriam-Webster,retrievedApril 20,2009

- ^Although many sources state that J. J. Sylvester coined the mathematical term "matrix" in 1848, Sylvester published nothing in 1848. (For proof that Sylvester published nothing in 1848, see J. J. Sylvester with H. F. Baker, ed., The Collected Mathematical Papers of James Joseph Sylvester (Cambridge, England: Cambridge University Press, 1904), vol. 1.) His earliest use of the term "matrix" occurs in 1850 in J. J. Sylvester (1850) "Additions to the articles in the September number of this journal, 'On a new class of theorems,' and on Pascal's theorem," The London, Edinburgh, and Dublin Philosophical Magazine and Journal of Science, 37: 363–370. From page 369: "For this purpose, we must commence, not with a square, but with an oblong arrangement of terms consisting, suppose, of m lines and n columns. This does not in itself represent a determinant, but is, as it were, a Matrix out of which we may form various systems of determinants..."

- ^The Collected Mathematical Papers of James Joseph Sylvester: 1837–1853,Paper 37,p. 247

- ^Phil.Trans.1858, vol.148, pp.17-37Math. Papers II475-496

- ^Dieudonné, ed.1978, Vol. 1, Ch. III, p. 96

- ^Knobloch1994

- ^Hawkins1975

- ^Kronecker1897

- ^Weierstrass1915, pp. 271–286

- ^Bôcher2004

- ^Mehra & Rechenberg1987

- ^Whitehead, Alfred North; and Russell, Bertrand (1913)Principia Mathematica to *56,Cambridge at the University Press, Cambridge UK (republished 1962) cf page 162ff.

- ^Tarski, Alfred; (1946)Introduction to Logic and the Methodology of Deductive Sciences,Dover Publications, Inc, New York NY,ISBN0-486-28462-X.

References

- Anton, Howard (1987), Elementary Linear Algebra (5th ed.), New York: Wiley, ISBN 0-471-84819-0

- Arnold, Vladimir I.;Cooke, Roger(1992),Ordinary differential equations,Berlin, DE; New York, NY:Springer-Verlag,ISBN978-3-540-54813-3

- Artin, Michael(1991),Algebra,Prentice Hall,ISBN978-0-89871-510-1

- Association for Computing Machinery (1979),Computer Graphics,Tata McGraw–Hill,ISBN978-0-07-059376-3

- Baker, Andrew J. (2003),Matrix Groups: An Introduction to Lie Group Theory,Berlin, DE; New York, NY: Springer-Verlag,ISBN978-1-85233-470-3

- Bau III, David;Trefethen, Lloyd N.(1997),Numerical linear algebra,Philadelphia, PA: Society for Industrial and Applied Mathematics,ISBN978-0-89871-361-9

- Beauregard, Raymond A.; Fraleigh, John B. (1973),A First Course In Linear Algebra: with Optional Introduction to Groups, Rings, and Fields,Boston:Houghton Mifflin Co.,ISBN0-395-14017-X

- Bretscher, Otto (2005),Linear Algebra with Applications(3rd ed.), Prentice Hall

- Bronson, Richard (1970),Matrix Methods: An Introduction,New York:Academic Press,LCCN70097490

- Bronson, Richard (1989),Schaum's outline of theory and problems of matrix operations,New York:McGraw–Hill,ISBN978-0-07-007978-6

- Brown, William C. (1991),Matrices and vector spaces,New York, NY:Marcel Dekker,ISBN978-0-8247-8419-5

- Coburn, Nathaniel (1955),Vector and tensor analysis,New York, NY: Macmillan,OCLC1029828

- Conrey, J. Brian (2007),Ranks of elliptic curves and random matrix theory,Cambridge University Press,ISBN978-0-521-69964-8

- Fraleigh, John B. (1976),A First Course In Abstract Algebra(2nd ed.), Reading:Addison-Wesley,ISBN0-201-01984-1

- Fudenberg, Drew;Tirole, Jean(1983),Game Theory,MIT Press

- Gilbarg, David;Trudinger, Neil S.(2001),Elliptic partial differential equations of second order(2nd ed.), Berlin, DE; New York, NY: Springer-Verlag,ISBN978-3-540-41160-4

- Godsil, Chris;Royle, Gordon(2004),Algebraic Graph Theory,Graduate Texts in Mathematics, vol. 207, Berlin, DE; New York, NY: Springer-Verlag,ISBN978-0-387-95220-8

- Golub, Gene H.;Van Loan, Charles F.(1996),Matrix Computations(3rd ed.), Johns Hopkins,ISBN978-0-8018-5414-9

- Greub, Werner Hildbert (1975),Linear algebra,Graduate Texts in Mathematics, Berlin, DE; New York, NY: Springer-Verlag,ISBN978-0-387-90110-7

- Halmos, Paul Richard(1982),A Hilbert space problem book,Graduate Texts in Mathematics, vol. 19 (2nd ed.), Berlin, DE; New York, NY: Springer-Verlag,ISBN978-0-387-90685-0,MR0675952

- Horn, Roger A.;Johnson, Charles R.(1985),Matrix Analysis,Cambridge University Press,ISBN978-0-521-38632-6

- Householder, Alston S. (1975),The theory of matrices in numerical analysis,New York, NY:Dover Publications,MR0378371

- Kreyszig, Erwin (1972),Advanced Engineering Mathematics(3rd ed.), New York:Wiley,ISBN0-471-50728-8.

- Krzanowski, Wojtek J. (1988),Principles of multivariate analysis,Oxford Statistical Science Series, vol. 3, The Clarendon Press Oxford University Press,ISBN978-0-19-852211-9,MR0969370

- Itô, Kiyosi, ed. (1987),Encyclopedic dictionary of mathematics. Vol. I-IV(2nd ed.), MIT Press,ISBN978-0-262-09026-1,MR0901762

- Lang, Serge (1969), Analysis II, Addison-Wesley

- Lang, Serge (1987a), Calculus of several variables (3rd ed.), Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-0-387-96405-8

- Lang, Serge (1987b), Linear algebra, Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-0-387-96412-6

- Lang, Serge (2002), Algebra, Graduate Texts in Mathematics, vol. 211 (Revised third ed.), New York: Springer-Verlag, ISBN 978-0-387-95385-4, MR 1878556

- Latouche, Guy; Ramaswami, Vaidyanathan (1999), Introduction to matrix analytic methods in stochastic modeling (1st ed.), Philadelphia, PA: Society for Industrial and Applied Mathematics, ISBN 978-0-89871-425-8

- Manning, Christopher D.; Schütze, Hinrich (1999), Foundations of statistical natural language processing, MIT Press, ISBN 978-0-262-13360-9

- Mehata, K. M.; Srinivasan, S. K. (1978), Stochastic processes, New York, NY: McGraw–Hill, ISBN 978-0-07-096612-3

- Mirsky, Leonid (1990), An Introduction to Linear Algebra, Courier Dover Publications, ISBN 978-0-486-66434-7

- Nering, Evar D. (1970), Linear Algebra and Matrix Theory (2nd ed.), New York: Wiley, LCCN 76-91646

- Nocedal, Jorge; Wright, Stephen J. (2006), Numerical Optimization (2nd ed.), Berlin, DE; New York, NY: Springer-Verlag, p. 449, ISBN 978-0-387-30303-1

- Oualline, Steve (2003), Practical C++ programming, O'Reilly, ISBN 978-0-596-00419-4

- Perrone, Paolo (2024), Starting Category Theory, World Scientific, doi:10.1142/9789811286018_0005, ISBN 978-981-12-8600-1

- Press, William H.; Flannery, Brian P.; Teukolsky, Saul A.; Vetterling, William T. (1992), "LU Decomposition and Its Applications" (PDF), Numerical Recipes in FORTRAN: The Art of Scientific Computing (2nd ed.), Cambridge University Press, pp. 34–42, archived from the original on 2009-09-06

- Protter, Murray H.; Morrey, Charles B. Jr. (1970), College Calculus with Analytic Geometry (2nd ed.), Reading: Addison-Wesley, LCCN 76087042

- Punnen, Abraham P.; Gutin, Gregory (2002), The traveling salesman problem and its variations, Boston, MA: Kluwer Academic Publishers, ISBN 978-1-4020-0664-7

- Reichl, Linda E. (2004), The transition to chaos: conservative classical systems and quantum manifestations, Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-0-387-98788-0

- Rowen, Louis Halle (2008), Graduate Algebra: noncommutative view, Providence, RI: American Mathematical Society, ISBN 978-0-8218-4153-2

- Šolin, Pavel (2005), Partial Differential Equations and the Finite Element Method, Wiley-Interscience, ISBN 978-0-471-76409-0

- Stinson, Douglas R. (2005), Cryptography, Discrete Mathematics and its Applications, Chapman & Hall/CRC, ISBN 978-1-58488-508-5

- Stoer, Josef; Bulirsch, Roland (2002), Introduction to Numerical Analysis (3rd ed.), Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-0-387-95452-3

- Ward, J. P. (1997), Quaternions and Cayley numbers, Mathematics and its Applications, vol. 403, Dordrecht, NL: Kluwer Academic Publishers Group, doi:10.1007/978-94-011-5768-1, ISBN 978-0-7923-4513-8, MR 1458894

- Wolfram, Stephen (2003), The Mathematica Book (5th ed.), Champaign, IL: Wolfram Media, ISBN 978-1-57955-022-6

Physics references

- Bohm, Arno (2001), Quantum Mechanics: Foundations and Applications, Springer, ISBN 0-387-95330-2

- Burgess, Cliff; Moore, Guy (2007), The Standard Model. A Primer, Cambridge University Press, ISBN 978-0-521-86036-9

- Guenther, Robert D. (1990), Modern Optics, John Wiley, ISBN 0-471-60538-7

- Itzykson, Claude; Zuber, Jean-Bernard (1980), Quantum Field Theory, McGraw–Hill, ISBN 0-07-032071-3

- Riley, Kenneth F.; Hobson, Michael P.; Bence, Stephen J. (1997), Mathematical methods for physics and engineering, Cambridge University Press, ISBN 0-521-55506-X

- Schiff, Leonard I. (1968), Quantum Mechanics (3rd ed.), McGraw–Hill

- Weinberg, Steven (1995), The Quantum Theory of Fields. Volume I: Foundations, Cambridge University Press, ISBN 0-521-55001-7

- Wherrett, Brian S. (1987), Group Theory for Atoms, Molecules and Solids, Prentice–Hall International, ISBN 0-13-365461-3

- Zabrodin, Anton; Brezin, Édouard; Kazakov, Vladimir; Serban, Didina; Wiegmann, Paul (2006), Applications of Random Matrices in Physics (NATO Science Series II: Mathematics, Physics and Chemistry), Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-1-4020-4530-1

Historical references

- A. Cayley, A memoir on the theory of matrices. Phil. Trans. 148 1858 17–37; Math. Papers II 475–496

- Bôcher, Maxime (2004), Introduction to higher algebra, New York, NY: Dover Publications, ISBN 978-0-486-49570-5, reprint of the 1907 original edition

- Cayley, Arthur (1889), The collected mathematical papers of Arthur Cayley, vol. I (1841–1853), Cambridge University Press, pp. 123–126

- Dieudonné, Jean, ed. (1978), Abrégé d'histoire des mathématiques 1700-1900, Paris, FR: Hermann

- Hawkins, Thomas (1975), "Cauchy and the spectral theory of matrices", Historia Mathematica, 2: 1–29, doi:10.1016/0315-0860(75)90032-4, ISSN 0315-0860, MR 0469635

- Knobloch, Eberhard (1994), "From Gauss to Weierstrass: determinant theory and its historical evaluations", The intersection of history and mathematics, Science Networks Historical Studies, vol. 15, Basel, Boston, Berlin: Birkhäuser, pp. 51–66, MR 1308079

- Kronecker, Leopold (1897), Hensel, Kurt (ed.), Leopold Kronecker's Werke, Teubner

- Mehra, Jagdish; Rechenberg, Helmut (1987), The Historical Development of Quantum Theory (1st ed.), Berlin, DE; New York, NY: Springer-Verlag, ISBN 978-0-387-96284-9

- Shen, Kangshen; Crossley, John N.; Lun, Anthony Wah-Cheung (1999), Nine Chapters of the Mathematical Art, Companion and Commentary (2nd ed.), Oxford University Press, ISBN 978-0-19-853936-0

- Weierstrass, Karl (1915), Collected works, vol. 3

Further reading

- "Matrix", Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- The Matrix Cookbook (PDF), retrieved 24 March 2014

- Brookes, Mike (2005), The Matrix Reference Manual, London: Imperial College, archived from the original on 16 December 2008, retrieved 10 Dec 2008


