Probability density function

In probability theory, a probability density function (PDF), density function, or density of an absolutely continuous random variable, is a function whose value at any given sample (or point) in the sample space (the set of possible values taken by the random variable) can be interpreted as providing a relative likelihood that the value of the random variable would be equal to that sample.[2][3] Probability density is the probability per unit length. In other words, while the absolute likelihood for a continuous random variable to take on any particular value is 0 (since there is an infinite set of possible values to begin with), the value of the PDF at two different samples can be used to infer, in any particular draw of the random variable, how much more likely it is that the random variable would be close to one sample compared to the other sample.

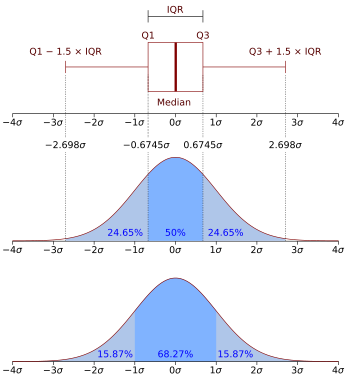

More precisely, the PDF is used to specify the probability of the random variable falling within a particular range of values, as opposed to taking on any one value. This probability is given by the integral of this variable's PDF over that range; that is, it is given by the area under the density function but above the horizontal axis and between the lowest and greatest values of the range. The probability density function is nonnegative everywhere, and the area under the entire curve is equal to 1.
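As a rough numerical illustration of "probability as area under the density", the sketch below integrates a density over a range with a simple midpoint rule. The choice of the standard normal density and the helper name `integrate` are assumptions made for this example only.

```python
import math


def standard_normal_pdf(x: float) -> float:
    """Density of the standard normal distribution (used here only as an example)."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def integrate(f, a: float, b: float, n: int = 10_000) -> float:
    """Approximate the integral of f over [a, b] with the composite midpoint rule."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


# Probability that the variable falls in the range [-1, 1]: area under the PDF.
prob_in_range = integrate(standard_normal_pdf, -1.0, 1.0)

# Area under (numerically) the entire curve; the tails beyond +/-10 are negligible.
total_area = integrate(standard_normal_pdf, -10.0, 10.0)

print(f"P(-1 <= X <= 1) ~ {prob_in_range:.4f}")
print(f"total area      ~ {total_area:.4f}")
```

The first number should be close to 0.68 and the second close to 1, in line with the two properties stated above.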

The terms probability distribution function and probability function have also sometimes been used to denote the probability density function. However, this use is not standard among probabilists and statisticians. In other sources, "probability distribution function" may be used when the probability distribution is defined as a function over general sets of values, or it may refer to the cumulative distribution function, or it may be a probability mass function (PMF) rather than the density. "Density function" itself is also used for the probability mass function, leading to further confusion.[4] In general though, the PMF is used in the context of discrete random variables (random variables that take values on a countable set), while the PDF is used in the context of continuous random variables.

Example

Suppose bacteria of a certain species typically live 20 to 30 hours. The probability that a bacterium lives exactly 5 hours is equal to zero. A lot of bacteria live for approximately 5 hours, but there is no chance that any given bacterium dies at exactly 5.00... hours. However, the probability that the bacterium dies between 5 hours and 5.01 hours is quantifiable. Suppose the answer is 0.02 (i.e., 2%). Then, the probability that the bacterium dies between 5 hours and 5.001 hours should be about 0.002, since this time interval is one-tenth as long as the previous. The probability that the bacterium dies between 5 hours and 5.0001 hours should be about 0.0002, and so on.

In this example, the ratio (probability of dying during an interval) / (duration of the interval) is approximately constant, and equal to 2 per hour (or 2 hour⁻¹). For example, there is 0.02 probability of dying in the 0.01-hour interval between 5 and 5.01 hours, and (0.02 probability / 0.01 hours) = 2 hour⁻¹. This quantity 2 hour⁻¹ is called the probability density for dying at around 5 hours. Therefore, the probability that the bacterium dies at 5 hours can be written as (2 hour⁻¹) dt. This is the probability that the bacterium dies within an infinitesimal window of time around 5 hours, where dt is the duration of this window. For example, the probability that it lives longer than 5 hours, but shorter than (5 hours + 1 nanosecond), is (2 hour⁻¹) × (1 nanosecond) ≈ 6×10⁻¹³ (using the unit conversion 3.6×10¹² nanoseconds = 1 hour).

There is a probability density function f with f(5 hours) = 2 hour⁻¹. The integral of f over any window of time (not only infinitesimal windows but also large windows) is the probability that the bacterium dies in that window.
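The arithmetic of this example can be reproduced with a few lines of code. This is only a sketch: it assumes the density stays at roughly 2 per hour across each short window, so it applies to small windows near 5 hours and not to large ones. The names `DENSITY_PER_HOUR` and `approx_probability` are made up for the illustration.

```python
# Assumed from the example: the density is about 2 per hour near t = 5 hours.
DENSITY_PER_HOUR = 2.0
NANOSECONDS_PER_HOUR = 3.6e12


def approx_probability(window_hours: float) -> float:
    """Approximate P(death within a short window near 5 hours) as density * width."""
    return DENSITY_PER_HOUR * window_hours


for width in (0.01, 0.001, 0.0001, 1.0 / NANOSECONDS_PER_HOUR):
    print(f"window = {width:.3e} h -> probability ~ {approx_probability(width):.3e}")
```

Each tenfold reduction of the window reduces the probability tenfold, and the one-nanosecond window gives a value of roughly 6×10⁻¹³.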

Absolutely continuous univariate distributions

A probability density function is most commonly associated with absolutely continuous univariate distributions. A random variable $X$ has density $f_X$, where $f_X$ is a non-negative Lebesgue-integrable function, if:

$$\Pr[a \leq X \leq b] = \int_a^b f_X(x)\,dx.$$

Hence, if $F_X$ is the cumulative distribution function of $X$, then:

$$F_X(x) = \int_{-\infty}^{x} f_X(u)\,du,$$

and (if $f_X$ is continuous at $x$)

$$f_X(x) = \frac{d}{dx} F_X(x).$$

Intuitively, one can think of $f_X(x)\,dx$ as being the probability of $X$ falling within the infinitesimal interval $[x, x+dx]$.

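Formal definition

(This definition may be extended to any probability distribution using the measure-theoretic definition of probability.)

A random variable $X$ with values in a measurable space $(\mathcal{X}, \mathcal{A})$ (usually $\mathbb{R}^n$ with the Borel sets as measurable subsets) has as probability distribution the measure $X_*P$ on $(\mathcal{X}, \mathcal{A})$: the density of $X$ with respect to a reference measure $\mu$ on $(\mathcal{X}, \mathcal{A})$ is the Radon–Nikodym derivative:

$$f = \frac{dX_*P}{d\mu}.$$

That is, $f$ is any measurable function with the property that:

$$\Pr[X \in A] = \int_{X^{-1}A} dP = \int_A f\,d\mu$$

for any measurable set $A \in \mathcal{A}$.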

Discussion

In the continuous univariate case above, the reference measure is the Lebesgue measure. The probability mass function of a discrete random variable is the density with respect to the counting measure over the sample space (usually the set of integers, or some subset thereof).

It is not possible to define a density with reference to an arbitrary measure (e.g. one cannot choose the counting measure as a reference for a continuous random variable). Furthermore, when it does exist, the density is almost unique, meaning that any two such densities coincide almost everywhere.

Further details

Unlike a probability, a probability density function can take on values greater than one; for example, the continuous uniform distribution on the interval [0, 1/2] has probability density f(x) = 2 for 0 ≤ x ≤ 1/2 and f(x) = 0 elsewhere.
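A short check of this uniform example is sketched below: the density value exceeds one, yet the area under the curve, and hence every probability, stays at or below one. The helper names are illustrative.

```python
def uniform_pdf(x: float, a: float = 0.0, b: float = 0.5) -> float:
    """Density of the continuous uniform distribution on [a, b]."""
    return 1.0 / (b - a) if a <= x <= b else 0.0


def integrate(f, a: float, b: float, n: int = 10_000) -> float:
    """Composite midpoint rule for the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


density_value = uniform_pdf(0.25)              # a density, not a probability
total_area = integrate(uniform_pdf, 0.0, 0.5)  # area under the whole density
prob_slice = integrate(uniform_pdf, 0.1, 0.2)  # P(0.1 <= X <= 0.2)

print(density_value, total_area, prob_slice)
```

The three printed values should be approximately 2, 1 and 0.2.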

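The standard normal distribution has probability density

$$f(x) = \frac{1}{\sqrt{2\pi}}\, e^{-x^2/2}.$$

If a random variable $X$ is given and its distribution admits a probability density function $f$, then the expected value of $X$ (if the expected value exists) can be calculated as

$$\operatorname{E}[X] = \int_{-\infty}^{\infty} x\,f(x)\,dx.$$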
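The expected value, the integral of x·f(x), can be approximated numerically. The sketch below does so for the two densities mentioned in this section, the uniform density on [0, 1/2] and the standard normal density. The function names and the truncation of the normal integral to [−10, 10] are assumptions of the example.

```python
import math


def integrate(f, a: float, b: float, n: int = 10_000) -> float:
    """Composite midpoint rule for the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def expected_value(pdf, lower: float, upper: float) -> float:
    """Approximate E[X] as the integral of x * pdf(x) over [lower, upper]."""
    return integrate(lambda x: x * pdf(x), lower, upper)


def uniform_pdf(x: float) -> float:
    """Uniform density on [0, 1/2]."""
    return 2.0 if 0.0 <= x <= 0.5 else 0.0


def standard_normal_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


mean_uniform = expected_value(uniform_pdf, 0.0, 0.5)
mean_normal = expected_value(standard_normal_pdf, -10.0, 10.0)

print(f"uniform on [0, 1/2]: E[X] ~ {mean_uniform:.6f}")
print(f"standard normal:     E[X] ~ {mean_normal:.6f}")
```

The results should be close to 0.25 and 0, the midpoints of the two symmetric densities.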

Not every probability distribution has a density function: the distributions of discrete random variables do not; nor does the Cantor distribution, even though it has no discrete component, i.e., does not assign positive probability to any individual point.

A distribution has a density function if and only if its cumulative distribution function F(x) is absolutely continuous. In this case, F is almost everywhere differentiable, and its derivative can be used as probability density:

$$\frac{d}{dx} F(x) = f(x).$$
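The relation between the derivative of the cumulative distribution function and the density can be checked numerically. The sketch below assumes the standard normal distribution, whose CDF is available in closed form through `math.erf`, and compares a central finite difference of the CDF with the density.

```python
import math


def standard_normal_cdf(x: float) -> float:
    """CDF of the standard normal distribution, written with the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def standard_normal_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def numerical_derivative(F, x: float, h: float = 1e-5) -> float:
    """Central finite-difference approximation of F'(x)."""
    return (F(x + h) - F(x - h)) / (2.0 * h)


for x in (-1.5, 0.0, 0.7):
    slope = numerical_derivative(standard_normal_cdf, x)
    print(f"x = {x:+.1f}: dF/dx ~ {slope:.8f}, f(x) = {standard_normal_pdf(x):.8f}")
```

The two columns should agree to many decimal places. The step size `h` is a compromise between truncation and rounding error and is not tuned.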

If a probability distribution admits a density, then the probability of every one-point set {a} is zero; the same holds for finite and countable sets.

Two probability densities f and g represent the same probability distribution precisely if they differ only on a set of Lebesgue measure zero.

In the field of statistical physics, a non-formal reformulation of the relation above between the derivative of the cumulative distribution function and the probability density function is generally used as the definition of the probability density function. This alternate definition is the following:

If dt is an infinitely small number, the probability that X is included within the interval (t, t + dt) is equal to f(t) dt, or:

$$\Pr(t < X < t + dt) = f(t)\,dt.$$

Link between discrete and continuous distributions

It is possible to represent certain discrete random variables as well as random variables involving both a continuous and a discrete part with a generalized probability density function using the Dirac delta function. (This is not possible with a probability density function in the sense defined above; it may be done with a distribution.) For example, consider a binary discrete random variable having the Rademacher distribution, that is, taking −1 or 1 for values, with probability 1⁄2 each. The density of probability associated with this variable is:

$$f(t) = \frac{1}{2}\bigl(\delta(t+1) + \delta(t-1)\bigr).$$

More generally, if a discrete variable can take $n$ different values among real numbers, then the associated probability density function is:

$$f(t) = \sum_{i=1}^{n} p_i\,\delta(t - x_i),$$

where $x_1, \ldots, x_n$ are the discrete values accessible to the variable and $p_1, \ldots, p_n$ are the probabilities associated with these values.

This substantially unifies the treatment of discrete and continuous probability distributions. The above expression allows for determining statistical characteristics of such a discrete variable (such as the mean, variance, and kurtosis), starting from the formulas given for a continuous distribution of the probability.
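To make this concrete: integrating a function g(t) against a generalized density of the form Σ pᵢ δ(t − xᵢ) picks out the values g(xᵢ) and leaves the weighted sum Σ pᵢ g(xᵢ). The sketch below applies this to the Rademacher example. The function name `moments` is hypothetical, and kurtosis here means the fourth central moment divided by the squared variance (not excess kurtosis).

```python
def moments(values, probs):
    """Mean, variance and kurtosis of a discrete variable whose generalized
    density is sum_i p_i * delta(t - x_i)."""

    def expect(g):
        # Integrating g against the delta sum leaves sum_i p_i * g(x_i).
        return sum(p * g(x) for x, p in zip(values, probs))

    mean = expect(lambda x: x)
    variance = expect(lambda x: (x - mean) ** 2)
    kurtosis = expect(lambda x: (x - mean) ** 4) / variance ** 2
    return mean, variance, kurtosis


# Rademacher distribution: values -1 and +1, each with probability 1/2.
rademacher = moments([-1.0, 1.0], [0.5, 0.5])
print(rademacher)  # (0.0, 1.0, 1.0)
```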

Families of densities[edit]

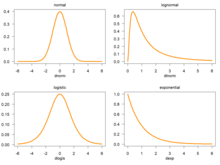

It is common for probability density functions (andprobability mass functions) to be parametrized—that is, to be characterized by unspecifiedparameters.For example, thenormal distributionis parametrized in terms of themeanand thevariance,denoted byandrespectively, giving the family of densities Different values of the parameters describe different distributions of differentrandom variableson the samesample space(the same set of all possible values of the variable); this sample space is the domain of the family of random variables that this family of distributions describes. A given set of parameters describes a single distribution within the family sharing the functional form of the density. From the perspective of a given distribution, the parameters are constants, and terms in a density function that contain only parameters, but not variables, are part of thenormalization factorof a distribution (the multiplicative factor that ensures that the area under the density—the probability ofsomethingin the domain occurring— equals 1). This normalization factor is outside thekernelof the distribution.

Since the parameters are constants, reparametrizing a density in terms of different parameters to give a characterization of a different random variable in the family, means simply substituting the new parameter values into the formula in place of the old ones.
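The split between kernel and normalization factor can be sketched in code for the normal family. The function names below are illustrative, and the integration range of 12 standard deviations on either side of the mean is an arbitrary cut-off chosen so that the neglected tails are negligible.

```python
import math


def normal_kernel(x: float, mu: float, sigma2: float) -> float:
    """Kernel: the part of the density that depends on the variable x."""
    return math.exp(-((x - mu) ** 2) / (2.0 * sigma2))


def normalization_factor(sigma2: float) -> float:
    """Factor containing only parameters; it makes the total area equal 1."""
    return 1.0 / math.sqrt(2.0 * math.pi * sigma2)


def normal_pdf(x: float, mu: float, sigma2: float) -> float:
    """One member of the normal family, selected by (mu, sigma2)."""
    return normalization_factor(sigma2) * normal_kernel(x, mu, sigma2)


def area_under(mu: float, sigma2: float, n: int = 20_000) -> float:
    """Midpoint-rule area under the density over mu +/- 12 standard deviations."""
    sigma = math.sqrt(sigma2)
    a, b = mu - 12.0 * sigma, mu + 12.0 * sigma
    h = (b - a) / n
    return h * sum(normal_pdf(a + (i + 0.5) * h, mu, sigma2) for i in range(n))


# Substituting different parameter values selects different family members.
for mu, sigma2 in ((0.0, 1.0), (2.0, 0.25), (-1.0, 9.0)):
    print(f"mu={mu}, sigma^2={sigma2}: area ~ {area_under(mu, sigma2):.6f}")
```

Every parameter choice should give an area close to 1, since the normalization factor changes with the variance while the functional form stays the same.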

Densities associated with multiple variables

For continuous random variables $X_1, \ldots, X_n$, it is also possible to define a probability density function associated to the set as a whole, often called joint probability density function. This density function is defined as a function of the $n$ variables, such that, for any domain $D$ in the $n$-dimensional space of the values of the variables $X_1, \ldots, X_n$, the probability that a realisation of the set variables falls inside the domain $D$ is

$$\Pr\left(X_1, \ldots, X_n \in D\right) = \int_D f_{X_1, \ldots, X_n}(x_1, \ldots, x_n)\,dx_1 \cdots dx_n.$$

If $F(x_1, \ldots, x_n) = \Pr(X_1 \leq x_1, \ldots, X_n \leq x_n)$ is the cumulative distribution function of the vector $(X_1, \ldots, X_n)$, then the joint probability density function can be computed as a partial derivative

$$f(x) = \left.\frac{\partial^n F}{\partial x_1 \cdots \partial x_n}\right|_x.$$

Marginal densities

For $i = 1, 2, \ldots, n$, let $f_{X_i}(x_i)$ be the probability density function associated with variable $X_i$ alone. This is called the marginal density function, and can be deduced from the probability density associated with the random variables $X_1, \ldots, X_n$ by integrating over all values of the other $n - 1$ variables:

$$f_{X_i}(x_i) = \int f(x_1, \ldots, x_n)\,dx_1 \cdots dx_{i-1}\,dx_{i+1} \cdots dx_n.$$

Independence

Continuous random variables $X_1, \ldots, X_n$ admitting a joint density are all independent from each other if and only if

$$f_{X_1, \ldots, X_n}(x_1, \ldots, x_n) = f_{X_1}(x_1) \cdots f_{X_n}(x_n).$$

Corollary

If the joint probability density function of a vector of $n$ random variables can be factored into a product of $n$ functions of one variable

$$f_{X_1, \ldots, X_n}(x_1, \ldots, x_n) = f_1(x_1) \cdots f_n(x_n),$$

(where each $f_i$ is not necessarily a density) then the $n$ variables in the set are all independent from each other, and the marginal probability density function of each of them is given by

$$f_{X_i}(x_i) = \frac{f_i(x_i)}{\int f_i(x)\,dx}.$$
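As a small illustration of marginal densities and the independence criterion, the sketch below uses a hypothetical joint density f(x, y) = x + y on the unit square (an assumption of this example, not taken from the text). Its marginals are x + 1/2 and y + 1/2, and their product differs from the joint density, so the two variables are not independent.

```python
def joint_pdf(x: float, y: float) -> float:
    """Hypothetical joint density f(x, y) = x + y on the unit square, 0 elsewhere."""
    return x + y if (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0) else 0.0


def integrate(f, a: float, b: float, n: int = 1_000) -> float:
    """Composite midpoint rule for the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def marginal_x(x: float) -> float:
    """Marginal density of X: integrate the joint density over all y."""
    return integrate(lambda y: joint_pdf(x, y), 0.0, 1.0)


def marginal_y(y: float) -> float:
    """Marginal density of Y: integrate the joint density over all x."""
    return integrate(lambda x: joint_pdf(x, y), 0.0, 1.0)


x0, y0 = 0.2, 0.9
joint_value = joint_pdf(x0, y0)
product_of_marginals = marginal_x(x0) * marginal_y(y0)

print(f"f_X({x0}) ~ {marginal_x(x0):.4f}, f_Y({y0}) ~ {marginal_y(y0):.4f}")
print(f"joint = {joint_value:.4f}, product of marginals ~ {product_of_marginals:.4f}")
```

For independent variables the last two numbers would coincide at every point; here they do not.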

Example

This elementary example illustrates the above definition of multidimensional probability density functions in the simple case of a function of a set of two variables. Let us call $\vec{R}$ a 2-dimensional random vector of coordinates $(X, Y)$: the probability to obtain $\vec{R}$ in the quarter plane of positive $x$ and $y$ is

$$\Pr\left(X > 0, Y > 0\right) = \int_0^\infty \int_0^\infty f_{X,Y}(x, y)\,dx\,dy.$$

Function of random variables and change of variables in the probability density function

If the probability density function of a random variable (or vector) $X$ is given as $f_X(x)$, it is possible (but often not necessary; see below) to calculate the probability density function of some variable $Y = g(X)$. This is also called a "change of variable" and is in practice used to generate a random variable of arbitrary shape $f_{g(X)} = f_Y$ using a known (for instance, uniform) random number generator.

It is tempting to think that in order to find the expected value $\operatorname{E}(g(X))$, one must first find the probability density $f_{g(X)}$ of the new random variable $Y = g(X)$. However, rather than computing

$$\operatorname{E}\bigl(g(X)\bigr) = \int_{-\infty}^{\infty} y\,f_{g(X)}(y)\,dy,$$

one may find instead

$$\operatorname{E}\bigl(g(X)\bigr) = \int_{-\infty}^{\infty} g(x)\,f_X(x)\,dx.$$

The values of the two integrals are the same in all cases in which both $X$ and $g(X)$ actually have probability density functions. It is not necessary that $g$ be a one-to-one function. In some cases the latter integral is computed much more easily than the former. See Law of the unconscious statistician.
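The agreement of the two routes to E(g(X)) can be checked on a simple case. The sketch below assumes X uniform on [0, 1] and g(x) = x². Then Y = X² has CDF √y on [0, 1] and therefore density 1/(2√y) there; these choices are made only for the illustration.

```python
import math


def integrate(f, a: float, b: float, n: int = 100_000) -> float:
    """Composite midpoint rule; midpoints avoid the endpoint y = 0."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def f_X(x: float) -> float:
    """Assumed density of X: uniform on [0, 1]."""
    return 1.0 if 0.0 <= x <= 1.0 else 0.0


def g(x: float) -> float:
    return x * x


def f_Y(y: float) -> float:
    """Density of Y = X^2, obtained by differentiating P(Y <= y) = sqrt(y)."""
    return 1.0 / (2.0 * math.sqrt(y)) if 0.0 < y <= 1.0 else 0.0


# Route 1: first find the density of Y, then integrate y * f_Y(y).
via_density_of_y = integrate(lambda y: y * f_Y(y), 0.0, 1.0)

# Route 2: integrate g(x) * f_X(x) directly, without ever finding f_Y.
via_original_density = integrate(lambda x: g(x) * f_X(x), 0.0, 1.0)

print(via_density_of_y, via_original_density)
```

Both values should be close to 1/3.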

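Scalar to scalar

Let $g : \mathbb{R} \to \mathbb{R}$ be a monotonic function, then the resulting density function is[5]

$$f_Y(y) = f_X\bigl(g^{-1}(y)\bigr) \left|\frac{d}{dy}\bigl(g^{-1}(y)\bigr)\right|.$$

Here $g^{-1}$ denotes the inverse function.

This follows from the fact that the probability contained in a differential area must be invariant under change of variables. That is,

$$\left|f_Y(y)\,dy\right| = \left|f_X(x)\,dx\right|,$$

or

$$f_Y(y) = \left|\frac{dx}{dy}\right| f_X(x) = \left|\frac{d}{dy}\bigl(g^{-1}(y)\bigr)\right| f_X\bigl(g^{-1}(y)\bigr).$$

For functions that are not monotonic, the probability density function for $y$ is

$$\sum_{k=1}^{n(y)} \left|\frac{d}{dy} g_k^{-1}(y)\right| \cdot f_X\bigl(g_k^{-1}(y)\bigr),$$

where $n(y)$ is the number of solutions in $x$ for the equation $g(x) = y$, and $g_k^{-1}(y)$ are these solutions.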
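Both the monotonic and the non-monotonic change-of-variable rules can be checked numerically. The sketch below assumes X is standard normal and considers Y = exp(X) (monotonic, one solution x = ln y) and Y = X² (two solutions x = ±√y). As an independent check, each resulting density is compared with a finite-difference derivative of the corresponding CDF. All function names are illustrative.

```python
import math


def f_X(x: float) -> float:
    """Assumed density of X: standard normal."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def Phi(x: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def density_exp(y: float) -> float:
    """Y = exp(X): f_Y(y) = f_X(ln y) * |d/dy ln y| = f_X(ln y) / y, for y > 0."""
    return f_X(math.log(y)) / y


def density_square(y: float) -> float:
    """Y = X^2: sum over both solutions x = +sqrt(y) and x = -sqrt(y), for y > 0.
    Each solution contributes the factor |d/dy (+/- sqrt(y))| = 1 / (2 sqrt(y))."""
    r = math.sqrt(y)
    return (f_X(r) + f_X(-r)) / (2.0 * r)


def cdf_exp(y: float) -> float:
    return Phi(math.log(y))                      # P(exp(X) <= y)


def cdf_square(y: float) -> float:
    r = math.sqrt(y)
    return Phi(r) - Phi(-r)                      # P(-sqrt(y) <= X <= sqrt(y))


def numerical_derivative(F, y: float, h: float = 1e-6) -> float:
    return (F(y + h) - F(y - h)) / (2.0 * h)


y0 = 1.5
print(density_exp(y0), numerical_derivative(cdf_exp, y0))
print(density_square(y0), numerical_derivative(cdf_square, y0))
```

On each line the two numbers should agree closely.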

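Vector to vector

Suppose $\mathbf{x}$ is an $n$-dimensional random variable with joint density $f$. If $\mathbf{y} = G(\mathbf{x})$, where $G$ is a bijective, differentiable function, then $\mathbf{y}$ has density $p_Y$:

$$p_Y(\mathbf{y}) = f\Bigl(G^{-1}(\mathbf{y})\Bigr) \left|\det\left[\left.\frac{dG^{-1}(\mathbf{z})}{d\mathbf{z}}\right|_{\mathbf{z}=\mathbf{y}}\right]\right|$$

with the differential regarded as the Jacobian of the inverse of $G(\cdot)$, evaluated at $\mathbf{y}$.[6]

For example, in the 2-dimensional case $\mathbf{x} = (x_1, x_2)$, suppose the transform $G$ is given as $y_1 = G_1(x_1, x_2)$, $y_2 = G_2(x_1, x_2)$ with inverses $x_1 = G_1^{-1}(y_1, y_2)$, $x_2 = G_2^{-1}(y_1, y_2)$. The joint distribution for $\mathbf{y} = (y_1, y_2)$ has density[7]

$$p_{Y_1, Y_2}(y_1, y_2) = f_{X_1, X_2}\bigl(G_1^{-1}(y_1, y_2), G_2^{-1}(y_1, y_2)\bigr) \left|\frac{\partial G_1^{-1}}{\partial y_1}\frac{\partial G_2^{-1}}{\partial y_2} - \frac{\partial G_1^{-1}}{\partial y_2}\frac{\partial G_2^{-1}}{\partial y_1}\right|.$$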

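Vector to scalar

Let $V : \mathbb{R}^n \to \mathbb{R}$ be a differentiable function and $X$ be a random vector taking values in $\mathbb{R}^n$, $f_X$ be the probability density function of $X$ and $\delta(\cdot)$ be the Dirac delta function. It is possible to use the formulas above to determine $f_Y$, the probability density function of $Y = V(X)$, which will be given by

$$f_Y(y) = \int_{\mathbb{R}^n} f_X(\mathbf{x})\,\delta\bigl(y - V(\mathbf{x})\bigr)\,d\mathbf{x}.$$

This result leads to the law of the unconscious statistician:

$$\operatorname{E}_Y[Y] = \int_{\mathbb{R}} y f_Y(y)\,dy = \int_{\mathbb{R}} y \int_{\mathbb{R}^n} f_X(\mathbf{x})\,\delta\bigl(y - V(\mathbf{x})\bigr)\,d\mathbf{x}\,dy = \int_{\mathbb{R}^n} \int_{\mathbb{R}} y f_X(\mathbf{x})\,\delta\bigl(y - V(\mathbf{x})\bigr)\,dy\,d\mathbf{x} = \int_{\mathbb{R}^n} V(\mathbf{x}) f_X(\mathbf{x})\,d\mathbf{x} = \operatorname{E}_X[V(X)].$$

Proof:

Let $Z$ be a collapsed random variable with probability density function $p_Z(z) = \delta(z)$ (i.e., a constant equal to zero). Let the random vector $\tilde{X}$ and the transform $H$ be defined as

$$H(Z, X) = \begin{bmatrix} Z + V(X) \\ X \end{bmatrix} = \begin{bmatrix} Y \\ \tilde{X} \end{bmatrix}.$$

It is clear that $H$ is a bijective mapping, and the Jacobian of $H^{-1}$ is given by:

$$\frac{dH^{-1}(y, \tilde{\mathbf{x}})}{dy\,d\tilde{\mathbf{x}}} = \begin{bmatrix} 1 & -\dfrac{dV(\tilde{\mathbf{x}})}{d\tilde{\mathbf{x}}} \\ \mathbf{0}_{n \times 1} & \mathbf{I}_{n \times n} \end{bmatrix},$$

which is an upper triangular matrix with ones on the main diagonal, therefore its determinant is 1. Applying the change of variable theorem from the previous section we obtain that

$$f_{Y,X}(y, \mathbf{x}) = f_X(\mathbf{x})\,\delta\bigl(y - V(\mathbf{x})\bigr),$$

which if marginalized over $\mathbf{x}$ leads to the desired probability density function.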

Sums of independent random variables

The probability density function of the sum of two independent random variables $U$ and $V$, each of which has a probability density function, is the convolution of their separate density functions:

$$f_{U+V}(x) = \int_{-\infty}^{\infty} f_U(y)\,f_V(x - y)\,dy = \left(f_U * f_V\right)(x).$$

It is possible to generalize the previous relation to a sum of $N$ independent random variables $U_1, \ldots, U_N$, with densities $f_{U_1}, \ldots, f_{U_N}$:

$$f_{U_1 + \cdots + U_N}(x) = \left(f_{U_1} * \cdots * f_{U_N}\right)(x).$$

This can be derived from a two-way change of variables involving $Y = U + V$ and $Z = V$, similarly to the example below for the quotient of independent random variables.
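The convolution formula can be tried on a case with a known answer. The sketch below assumes U and V are independent and uniform on [0, 1]; their sum then has the triangular density on [0, 2] that peaks at x = 1. The function names are illustrative.

```python
def uniform_pdf(x: float) -> float:
    """Assumed density of U and of V: uniform on [0, 1]."""
    return 1.0 if 0.0 <= x <= 1.0 else 0.0


def integrate(f, a: float, b: float, n: int = 10_000) -> float:
    """Composite midpoint rule for the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def sum_density(x: float, f_u, f_v, lower: float, upper: float) -> float:
    """Density of U + V at x: the convolution integral of f_u(y) * f_v(x - y) dy.
    [lower, upper] must cover the support of f_u."""
    return integrate(lambda y: f_u(y) * f_v(x - y), lower, upper)


def triangular_pdf(x: float) -> float:
    """Known closed form for the sum of two independent uniform(0, 1) variables."""
    return max(0.0, 1.0 - abs(x - 1.0))


for x in (0.5, 1.0, 1.5):
    numeric = sum_density(x, uniform_pdf, uniform_pdf, 0.0, 1.0)
    print(f"x = {x}: convolution ~ {numeric:.4f}, triangular = {triangular_pdf(x):.4f}")
```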

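Products and quotients of independent random variables

Given two independent random variables $U$ and $V$, each of which has a probability density function, the density of the product $Y = UV$ and quotient $Y = U/V$ can be computed by a change of variables.

Example: Quotient distribution

To compute the quotient $Y = U/V$ of two independent random variables $U$ and $V$, define the following transformation:

$$\begin{aligned} Y &= U/V \\ Z &= V \end{aligned}$$

Then, the joint density $p(y, z)$ can be computed by a change of variables from $U, V$ to $Y, Z$, and $Y$ can be derived by marginalizing out $Z$ from the joint density.

The inverse transformation is

$$\begin{aligned} U &= YZ \\ V &= Z \end{aligned}$$

The absolute value of the Jacobian matrix determinant $J(U, V \mid Y, Z)$ of this transformation is:

$$\left|\det \begin{bmatrix} \dfrac{\partial u}{\partial y} & \dfrac{\partial u}{\partial z} \\ \dfrac{\partial v}{\partial y} & \dfrac{\partial v}{\partial z} \end{bmatrix}\right| = \left|\det \begin{bmatrix} z & y \\ 0 & 1 \end{bmatrix}\right| = |z|.$$

Thus:

$$p(y, z) = p(u, v)\,J(u, v \mid y, z) = p(u)\,p(v)\,J(u, v \mid y, z) = p_U(yz)\,p_V(z)\,|z|.$$

And the distribution of $Y$ can be computed by marginalizing out $Z$:

$$p(y) = \int_{-\infty}^{\infty} p_U(yz)\,p_V(z)\,|z|\,dz.$$

This method crucially requires that the transformation from $U, V$ to $Y, Z$ be bijective. The above transformation meets this because $Z$ can be mapped directly back to $V$, and for a given $V$ the quotient $U/V$ is monotonic. This is similarly the case for the sum $U + V$, difference $U - V$ and product $UV$.

Exactly the same method can be used to compute the distribution of other functions of multiple independent random variables.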

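Example: Quotient of two standard normals

Given two standard normal variables $U$ and $V$, the quotient can be computed as follows. First, the variables have the following density functions:

$$\begin{aligned} p(u) &= \frac{1}{\sqrt{2\pi}}\, e^{-u^2/2} \\ p(v) &= \frac{1}{\sqrt{2\pi}}\, e^{-v^2/2} \end{aligned}$$

We transform as described above:

$$\begin{aligned} Y &= U/V \\ Z &= V \end{aligned}$$

This leads to:

$$\begin{aligned}
p(y) &= \int_{-\infty}^{\infty} p_U(yz)\,p_V(z)\,|z|\,dz \\
&= \int_{-\infty}^{\infty} \frac{1}{\sqrt{2\pi}}\, e^{-\frac{1}{2}y^2z^2}\, \frac{1}{\sqrt{2\pi}}\, e^{-\frac{1}{2}z^2}\, |z|\,dz \\
&= \int_{-\infty}^{\infty} \frac{1}{2\pi}\, e^{-\frac{1}{2}\left(y^2+1\right)z^2}\, |z|\,dz \\
&= 2\int_{0}^{\infty} \frac{1}{2\pi}\, e^{-\frac{1}{2}\left(y^2+1\right)z^2}\, z\,dz \\
&= \int_{0}^{\infty} \frac{1}{\pi}\, e^{-\left(y^2+1\right)u}\,du && u = \tfrac{1}{2}z^2 \\
&= \left. -\frac{1}{\pi\left(y^2+1\right)}\, e^{-\left(y^2+1\right)u} \right|_{u=0}^{\infty} \\
&= \frac{1}{\pi\left(y^2+1\right)}
\end{aligned}$$

This is the density of a standard Cauchy distribution.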
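The marginalization integral for the quotient can also be evaluated numerically and compared with the Cauchy density. The sketch below assumes two independent standard normal variables and truncates the integral over z to [−12, 12], where the neglected tails are negligible. The function names are illustrative.

```python
import math


def standard_normal_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def integrate(f, a: float, b: float, n: int = 20_000) -> float:
    """Composite midpoint rule for the integral of f over [a, b]."""
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def quotient_density(y: float, z_max: float = 12.0) -> float:
    """p(y) = integral of p_U(y z) * p_V(z) * |z| dz, truncated to [-z_max, z_max]."""
    return integrate(
        lambda z: standard_normal_pdf(y * z) * standard_normal_pdf(z) * abs(z),
        -z_max,
        z_max,
    )


def cauchy_pdf(y: float) -> float:
    """Density of the standard Cauchy distribution."""
    return 1.0 / (math.pi * (1.0 + y * y))


for y in (0.0, 1.0, -2.5):
    print(f"y = {y:+.1f}: integral ~ {quotient_density(y):.6f}, Cauchy = {cauchy_pdf(y):.6f}")
```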

See also

- Density estimation – Estimate of an unobservable underlying probability density function

- Kernel density estimation – Estimator

- Likelihood function – Function related to statistics and probability theory

- List of probability distributions

- Probability amplitude – Complex number whose squared absolute value is a probability

- Probability mass function – Discrete-variable probability distribution

- Secondary measure

- Uses as position probability density:

  - Atomic orbital – Function describing an electron in an atom

  - Home range – The area in which an animal lives and moves on a periodic basis

References

1. "AP Statistics Review - Density Curves and the Normal Distributions". Archived from the original on 2 April 2015. Retrieved 16 March 2015.

2. Grinstead, Charles M.; Snell, J. Laurie (2009). "Conditional Probability - Discrete Conditional" (PDF). Grinstead & Snell's Introduction to Probability. Orange Grove Texts. ISBN 978-1616100469. Archived (PDF) from the original on 2003-04-25. Retrieved 2019-07-25.

3. "probability - Is a uniformly random number over the real line a valid distribution?". Cross Validated. Retrieved 2021-10-06.

4. Ord, J.K. (1972). Families of Frequency Distributions. Griffin. ISBN 0-85264-137-0 (for example, Table 5.1 and Example 5.4).

5. Siegrist, Kyle. "Transformations of Random Variables". LibreTexts Statistics. Retrieved 22 December 2023.

6. Devore, Jay L.; Berk, Kenneth N. (2007). Modern Mathematical Statistics with Applications. Cengage. p. 263. ISBN 978-0-534-40473-4.

7. Stirzaker, David (2007-01-01). Elementary Probability. Cambridge University Press. ISBN 978-0521534284. OCLC 851313783.

Further reading

- Billingsley, Patrick (1979). Probability and Measure. New York, Toronto, London: John Wiley and Sons. ISBN 0-471-00710-2.

- Casella, George; Berger, Roger L. (2002). Statistical Inference (Second ed.). Thomson Learning. pp. 34–37. ISBN 0-534-24312-6.

- Stirzaker, David (2003). Elementary Probability. Cambridge University Press. ISBN 0-521-42028-8. Chapters 7 to 9 are about continuous variables.

External links

- Ushakov, N.G. (2001) [1994], "Density of a probability distribution", Encyclopedia of Mathematics, EMS Press

- Weisstein, Eric W. "Probability density function". MathWorld.
