Student's t-distribution

|

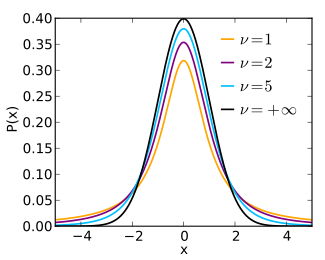

Probability density function  | |||

|

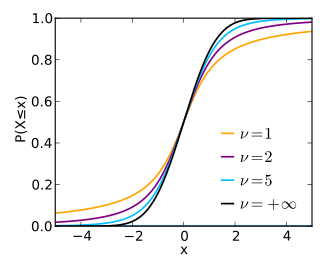

Cumulative distribution function  | |||

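| Property | Value |
|---|---|
| Parameters | $\nu > 0$ degrees of freedom (real, almost always a positive integer) |
| Support | $x \in (-\infty, \infty)$ |
| CDF | $\frac{1}{2} + x\,\Gamma\!\left(\frac{\nu+1}{2}\right) \frac{{}_2F_1\!\left(\frac{1}{2}, \frac{\nu+1}{2}; \frac{3}{2}; -\frac{x^2}{\nu}\right)}{\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)}$, where ${}_2F_1$ is the hypergeometric function |
| Mean | $0$ for $\nu > 1$, otherwise undefined |
| Median | $0$ |
| Mode | $0$ |
| Variance | $\frac{\nu}{\nu-2}$ for $\nu > 2$, $\infty$ for $1 < \nu \le 2$, otherwise undefined |
| Skewness | $0$ for $\nu > 3$, otherwise undefined |
| Excess kurtosis | $\frac{6}{\nu-4}$ for $\nu > 4$, $\infty$ for $2 < \nu \le 4$, otherwise undefined |
| Entropy | $\frac{\nu+1}{2}\left[\psi\!\left(\frac{\nu+1}{2}\right) - \psi\!\left(\frac{\nu}{2}\right)\right] + \ln\!\left[\sqrt{\nu}\,\mathrm{B}\!\left(\frac{\nu}{2}, \frac{1}{2}\right)\right]$ (nats), where $\psi$ is the digamma function and $\mathrm{B}$ is the beta function |
| MGF | undefined |
| CF | $\frac{\left(\sqrt{\nu}\,\lvert t\rvert\right)^{\nu/2} K_{\nu/2}\!\left(\sqrt{\nu}\,\lvert t\rvert\right)}{\Gamma(\nu/2)\,2^{\nu/2-1}}$ for $\nu > 0$, where $K_{\nu/2}$ is the modified Bessel function of the second kind |
| Expected shortfall | $\frac{\nu + \left(T^{-1}(p)\right)^2}{(\nu-1)(1-p)}\,\tau\!\left(T^{-1}(p)\right)$ for $\nu > 1$ (mean of the upper tail beyond the $p$-quantile), where $T^{-1}$ is the inverse standardized Student t CDF and $\tau$ is the standardized Student t PDF.[2] |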

In probability and statistics, Student's t distribution (or simply the t distribution) $t_\nu$ is a continuous probability distribution that generalizes the standard normal distribution. Like the latter, it is symmetric around zero and bell-shaped.

However, $t_\nu$ has heavier tails, and the amount of probability mass in the tails is controlled by the parameter $\nu$. For $\nu = 1$ the Student's t distribution $t_\nu$ becomes the standard Cauchy distribution, which has very "fat" tails; whereas for $\nu \to \infty$ it becomes the standard normal distribution $\mathcal{N}(0, 1)$, which has very "thin" tails.

The Student's t distribution plays a role in a number of widely used statistical analyses, including Student's t test for assessing the statistical significance of the difference between two sample means, the construction of confidence intervals for the difference between two population means, and linear regression analysis.

In the form of the location-scale t distribution $\ell st(\mu, \tau^2, \nu)$ it generalizes the normal distribution and also arises in the Bayesian analysis of data from a normal family as a compound distribution when marginalizing over the variance parameter.

History and etymology

In statistics, the t distribution was first derived as a posterior distribution in 1876 by Helmert[3][4][5] and Lüroth.[6][7][8] As such, Student's t-distribution is an example of Stigler's law of eponymy. The t distribution also appeared in a more general form as the Pearson type IV distribution in Karl Pearson's 1895 paper.[9]

In the English-language literature, the distribution takes its name from William Sealy Gosset's 1908 paper in Biometrika under the pseudonym "Student".[10] One version of the origin of the pseudonym is that Gosset's employer preferred staff to use pen names when publishing scientific papers instead of their real name, so he used the name "Student" to hide his identity. Another version is that Guinness did not want their competitors to know that they were using the t test to determine the quality of raw material.[11][12]

Gosset worked at the Guinness Brewery in Dublin, Ireland, and was interested in the problems of small samples, for example the chemical properties of barley, where sample sizes might be as few as 3. Gosset's paper refers to the distribution as the "frequency distribution of standard deviations of samples drawn from a normal population". It became well known through the work of Ronald Fisher, who called the distribution "Student's distribution" and represented the test value with the letter t.[13][14]

Definition

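Probability density function

Student's t distribution has the probability density function (PDF) given by

$$f(t) = \frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)} \left(1 + \frac{t^2}{\nu}\right)^{-(\nu+1)/2},$$

where $\nu$ is the number of degrees of freedom and $\Gamma$ is the gamma function. This may also be written as

$$f(t) = \frac{1}{\sqrt{\nu}\,\mathrm{B}\!\left(\frac{1}{2}, \frac{\nu}{2}\right)} \left(1 + \frac{t^2}{\nu}\right)^{-(\nu+1)/2},$$

where $\mathrm{B}$ is the beta function. In particular, for integer-valued degrees of freedom $\nu$ we have:

For $\nu > 1$ and even,

$$\frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)} = \frac{1}{2\sqrt{\nu}} \cdot \frac{(\nu-1)(\nu-3)\cdots 5 \cdot 3}{(\nu-2)(\nu-4)\cdots 4 \cdot 2}.$$

For $\nu > 1$ and odd,

$$\frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)} = \frac{1}{\pi\sqrt{\nu}} \cdot \frac{(\nu-1)(\nu-3)\cdots 4 \cdot 2}{(\nu-2)(\nu-4)\cdots 5 \cdot 3}.$$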

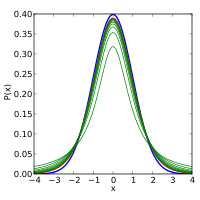

The probability density function is symmetric, and its overall shape resembles the bell shape of a normally distributed variable with mean 0 and variance 1, except that it is a bit lower and wider. As the number of degrees of freedom grows, the t distribution approaches the normal distribution with mean 0 and variance 1. For this reason $\nu$ is also known as the normality parameter.[15]

The following images show the density of the t distribution for increasing values of $\nu$. The normal distribution is shown as a blue line for comparison. Note that the t distribution (red line) becomes closer to the normal distribution as $\nu$ increases.
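The convergence toward the normal density can be checked numerically. The following is a minimal Python sketch, assuming only the standard library, that evaluates $f(t) = \Gamma\!\left(\frac{\nu+1}{2}\right) / \left(\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)\right) \cdot (1 + t^2/\nu)^{-(\nu+1)/2}$ through log-gamma to avoid overflow for large $\nu$. The function names `t_pdf` and `normal_pdf` are illustrative choices, not part of any library.

```python
import math


def t_pdf(t: float, nu: float) -> float:
    """Density of Student's t distribution with nu > 0 degrees of freedom."""
    if nu <= 0:
        raise ValueError("nu must be positive")
    # Log of the normalizing constant Gamma((nu+1)/2) / (sqrt(pi*nu) * Gamma(nu/2)).
    log_c = (math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
             - 0.5 * math.log(math.pi * nu))
    # Log of the kernel (1 + t^2/nu)^(-(nu+1)/2).
    return math.exp(log_c - (nu + 1) / 2 * math.log1p(t * t / nu))


def normal_pdf(t: float) -> float:
    """Standard normal density, used here only for comparison."""
    return math.exp(-0.5 * t * t) / math.sqrt(2 * math.pi)


if __name__ == "__main__":
    # The tail value at t = 3 shrinks toward the normal value as nu grows.
    for nu in (1, 2, 5, 30, 1000):
        print(f"nu={nu:5d}  f(0)={t_pdf(0.0, nu):.5f}  f(3)={t_pdf(3.0, nu):.5f}")
    print(f"normal    f(0)={normal_pdf(0.0):.5f}  f(3)={normal_pdf(3.0):.5f}")
```

For $\nu = 1$ the value at zero is $1/\pi$, the peak of the standard Cauchy density.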

Cumulative distribution function

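The cumulative distribution function (CDF) can be written in terms of $I$, the regularized incomplete beta function. For $t > 0$,

$$F(t) = \int_{-\infty}^{t} f(u)\,du = 1 - \frac{1}{2} I_{x(t)}\!\left(\frac{\nu}{2}, \frac{1}{2}\right),$$

where

$$x(t) = \frac{\nu}{t^2 + \nu}.$$

Other values would be obtained by symmetry. An alternative formula, valid for $t^2 < \nu$, is

$$\int_{-\infty}^{t} f(u)\,du = \frac{1}{2} + t\,\frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\sqrt{\pi\nu}\,\Gamma\!\left(\frac{\nu}{2}\right)}\;{}_2F_1\!\left(\frac{1}{2}, \frac{\nu+1}{2}; \frac{3}{2}; -\frac{t^2}{\nu}\right),$$

where ${}_2F_1$ is a particular instance of the hypergeometric function.

For information on its inverse cumulative distribution function, see quantile function § Student's t-distribution.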
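When neither the incomplete beta function nor the hypergeometric function is at hand, the CDF can be approximated by integrating the density directly. The sketch below is not the closed form; it assumes composite Simpson integration over $[0, |t|]$ plus symmetry about zero, uses only the Python standard library, and the names `t_pdf` and `t_cdf` are illustrative.

```python
import math


def t_pdf(t: float, nu: float) -> float:
    """Student's t density with nu > 0 degrees of freedom."""
    log_c = (math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
             - 0.5 * math.log(math.pi * nu))
    return math.exp(log_c - (nu + 1) / 2 * math.log1p(t * t / nu))


def t_cdf(t: float, nu: float, steps: int = 2000) -> float:
    """Approximate F(t) = 1/2 +/- integral of the density over [0, |t|]."""
    if steps % 2:
        steps += 1  # composite Simpson needs an even number of subintervals
    a = abs(t)
    h = a / steps
    total = t_pdf(0.0, nu) + t_pdf(a, nu)
    for i in range(1, steps):
        # Simpson weights alternate 4, 2, 4, ... on interior points.
        total += (4 if i % 2 else 2) * t_pdf(i * h, nu)
    half = total * h / 3.0
    return 0.5 + half if t >= 0 else 0.5 - half


if __name__ == "__main__":
    # nu = 1 is the Cauchy case, whose CDF is 1/2 + arctan(t)/pi.
    print(t_cdf(1.0, 1), 0.5 + math.atan(1.0) / math.pi)
```

A fixed step count is adequate for moderate $|t|$; far in the tails, more steps or a change of variable would be needed.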

Special cases

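Certain values of $\nu$ give a simple form for Student's t-distribution.

| $\nu$ | PDF | CDF | Notes |
|---|---|---|---|
| 1 | $\frac{1}{\pi\,(1+t^2)}$ | $\frac{1}{2} + \frac{1}{\pi}\arctan(t)$ | See Cauchy distribution |
| 2 | $\frac{1}{2\sqrt{2}\,\left(1+\frac{t^2}{2}\right)^{3/2}}$ | $\frac{1}{2} + \frac{t}{2\sqrt{2}\,\sqrt{1+\frac{t^2}{2}}}$ | |
| 3 | $\frac{2}{\pi\sqrt{3}\,\left(1+\frac{t^2}{3}\right)^{2}}$ | $\frac{1}{2} + \frac{1}{\pi}\left[\frac{t/\sqrt{3}}{1+\frac{t^2}{3}} + \arctan\!\left(\frac{t}{\sqrt{3}}\right)\right]$ | |
| 4 | $\frac{3}{8\,\left(1+\frac{t^2}{4}\right)^{5/2}}$ | $\frac{1}{2} + \frac{3}{8}\left[\frac{t}{\sqrt{1+\frac{t^2}{4}}}\right]\left[1 - \frac{t^2}{12\left(1+\frac{t^2}{4}\right)}\right]$ | |
| 5 | $\frac{8}{3\pi\sqrt{5}\,\left(1+\frac{t^2}{5}\right)^{3}}$ | $\frac{1}{2} + \frac{1}{\pi}\left[\frac{t}{\sqrt{5}\left(1+\frac{t^2}{5}\right)}\left(1 + \frac{2}{3\left(1+\frac{t^2}{5}\right)}\right) + \arctan\!\left(\frac{t}{\sqrt{5}}\right)\right]$ | |
| $\infty$ | $\frac{1}{\sqrt{2\pi}}\,e^{-t^2/2}$ | $\frac{1}{2}\left[1 + \operatorname{erf}\!\left(\frac{t}{\sqrt{2}}\right)\right]$ | See Normal distribution, Error function |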

Moments

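For $\nu > 1$, the raw moments of the t distribution are

$$\operatorname{E}\{T^k\} = \begin{cases} 0 & k \text{ odd}, \quad 0 < k < \nu, \\[4pt] \dfrac{1}{\sqrt{\pi}\,\Gamma\!\left(\frac{\nu}{2}\right)} \left[\Gamma\!\left(\frac{k+1}{2}\right) \Gamma\!\left(\frac{\nu-k}{2}\right) \nu^{k/2}\right] & k \text{ even}, \quad 0 < k < \nu. \end{cases}$$

Moments of order $\nu$ or higher do not exist.[16]

The term for $0 < k < \nu$, $k$ even, may be simplified using the properties of the gamma function to

$$\operatorname{E}\{T^k\} = \nu^{k/2} \prod_{j=1}^{k/2} \frac{2j-1}{\nu-2j}, \qquad k \text{ even}, \quad 0 < k < \nu.$$

For a t distribution with $\nu$ degrees of freedom, the expected value is $0$ if $\nu > 1$, and its variance is $\frac{\nu}{\nu-2}$ if $\nu > 2$. The skewness is 0 if $\nu > 3$ and the excess kurtosis is $\frac{6}{\nu-4}$ if $\nu > 4$.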
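The variance and kurtosis values can be recovered from the gamma-function expression for the even raw moments, $\operatorname{E}\{T^k\} = \Gamma\!\left(\frac{k+1}{2}\right)\Gamma\!\left(\frac{\nu-k}{2}\right)\nu^{k/2} / \left(\sqrt{\pi}\,\Gamma\!\left(\frac{\nu}{2}\right)\right)$. The following Python sketch assumes only the standard library; `t_raw_moment` is an illustrative name.

```python
import math


def t_raw_moment(k: int, nu: float) -> float:
    """Raw moment E[T^k] of Student's t distribution, defined for 0 < k < nu."""
    if not 0 < k < nu:
        raise ValueError("the moment of order k exists only for 0 < k < nu")
    if k % 2 == 1:
        return 0.0  # odd moments vanish by symmetry
    log_m = (math.lgamma((k + 1) / 2) + math.lgamma((nu - k) / 2)
             + (k / 2) * math.log(nu)
             - 0.5 * math.log(math.pi) - math.lgamma(nu / 2))
    return math.exp(log_m)


if __name__ == "__main__":
    nu = 10
    m2 = t_raw_moment(2, nu)   # variance, since the mean is 0
    m4 = t_raw_moment(4, nu)
    print("variance       ", m2, "vs", nu / (nu - 2))
    print("excess kurtosis", m4 / m2 ** 2 - 3, "vs", 6 / (nu - 4))
```

Because the mean is zero, the second raw moment is the variance and the excess kurtosis is $\operatorname{E}\{T^4\}/\operatorname{E}\{T^2\}^2 - 3$.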

Location-scale t distribution

Location-scale transformation

Student's t distribution generalizes to the three-parameter location-scale t distribution $\ell st(\mu, \tau^2, \nu)$ by introducing a location parameter $\mu$ and a scale parameter $\tau$. With

$$T \sim t_\nu$$

and location-scale family transformation

$$X = \mu + \tau\,T$$

we get

$$X \sim \ell st(\mu, \tau^2, \nu).$$

The resulting distribution is also called the non-standardized Student's t distribution.

Density and first two moments

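The location-scale t distribution has a density defined by:[17]

$$p(x \mid \nu, \mu, \tau) = \frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\Gamma\!\left(\frac{\nu}{2}\right)\sqrt{\pi\nu}\,\tau} \left(1 + \frac{1}{\nu}\left(\frac{x-\mu}{\tau}\right)^2\right)^{-(\nu+1)/2}.$$

Equivalently, the density can be written in terms of $\tau^2$:

$$p(x \mid \nu, \mu, \tau^2) = \frac{\Gamma\!\left(\frac{\nu+1}{2}\right)}{\Gamma\!\left(\frac{\nu}{2}\right)\sqrt{\pi\nu\tau^2}} \left(1 + \frac{(x-\mu)^2}{\nu\tau^2}\right)^{-(\nu+1)/2}.$$

Other properties of this version of the distribution are:[17]

$$\operatorname{E}\{X\} = \mu \quad \text{for } \nu > 1, \qquad \operatorname{var}\{X\} = \tau^2\,\frac{\nu}{\nu-2} \quad \text{for } \nu > 2, \qquad \operatorname{mode}\{X\} = \mu.$$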

Special cases

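- If $X$ follows a location-scale t distribution $X \sim \ell st(\mu, \tau^2, \nu)$, then for $\nu \to \infty$, $X$ is normally distributed $X \sim \mathcal{N}(\mu, \tau^2)$ with mean $\mu$ and variance $\tau^2$.

- The location-scale t distribution $\ell st(\mu, \tau^2, \nu = 1)$ with degree of freedom $\nu = 1$ is equivalent to the Cauchy distribution $\operatorname{Cau}(\mu, \tau)$.

- The location-scale t distribution $\ell st(\mu = 0, \tau^2 = 1, \nu)$ with $\mu = 0$ and $\tau^2 = 1$ reduces to the Student's t distribution $t_\nu$.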

How the t distribution arises (characterization)

As the distribution of a test statistic

Student's t-distribution with $\nu$ degrees of freedom can be defined as the distribution of the random variable $T$ with[18][19]

$$T = \frac{Z}{\sqrt{V/\nu}} = Z\,\sqrt{\frac{\nu}{V}},$$

where

- $Z$ is a standard normal with expected value 0 and variance 1;

- $V$ has a chi-squared distribution ($\chi^2$-distribution) with $\nu$ degrees of freedom;

- $Z$ and $V$ are independent.

A different distribution is defined as that of the random variable defined, for a given constant $\mu$, by

$$(Z + \mu)\,\sqrt{\frac{\nu}{V}}.$$

This random variable has a noncentral t-distribution with noncentrality parameter $\mu$. This distribution is important in studies of the power of Student's t-test.
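The defining ratio $T = Z / \sqrt{V/\nu}$ of an independent standard normal $Z$ and a chi-squared $V$ can be simulated directly. The following is a minimal Python sketch under the assumption of integer $\nu$, so that $V$ can be built as a sum of $\nu$ squared standard normals. The name `sample_t_ratio` and the seed are illustrative.

```python
import math
import random


def sample_t_ratio(nu: int, rng: random.Random) -> float:
    """Draw one value of T = Z / sqrt(V / nu) for integer nu >= 1."""
    z = rng.gauss(0.0, 1.0)
    # A chi-squared variable with nu degrees of freedom is a sum of nu
    # squared independent standard normals.
    v = sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(nu))
    return z / math.sqrt(v / nu)


if __name__ == "__main__":
    rng = random.Random(2024)
    nu, n = 10, 40_000
    draws = [sample_t_ratio(nu, rng) for _ in range(n)]
    # 2.228 is the two-sided 95% critical value for 10 degrees of freedom.
    inside = sum(abs(t) < 2.228 for t in draws) / n
    print(f"fraction with |T| < 2.228: {inside:.3f}")
```

The printed fraction should land close to 0.95, up to Monte Carlo noise.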

Derivation

Suppose $X_1, \ldots, X_n$ are independent realizations of the normally distributed random variable $X$, which has an expected value $\mu$ and variance $\sigma^2$. Let

$$\bar{X}_n = \frac{1}{n}(X_1 + \cdots + X_n)$$

be the sample mean, and

$$S_n^2 = \frac{1}{n-1} \sum_{i=1}^{n} \left(X_i - \bar{X}_n\right)^2$$

be an unbiased estimate of the variance from the sample. It can be shown that the random variable

$$V = (n-1)\,\frac{S_n^2}{\sigma^2}$$

has a chi-squared distribution with $\nu = n - 1$ degrees of freedom (by Cochran's theorem).[20] It is readily shown that the quantity

$$Z = \left(\bar{X}_n - \mu\right) \frac{\sqrt{n}}{\sigma}$$

is normally distributed with mean 0 and variance 1, since the sample mean $\bar{X}_n$ is normally distributed with mean $\mu$ and variance $\sigma^2/n$. Moreover, it is possible to show that these two random variables (the normally distributed one $Z$ and the chi-squared-distributed one $V$) are independent. Consequently, the pivotal quantity

$$T \equiv \frac{Z}{\sqrt{V/\nu}} = \left(\bar{X}_n - \mu\right) \frac{\sqrt{n}}{S_n},$$

which differs from $Z$ in that the exact standard deviation $\sigma$ is replaced by the random variable $S_n$, has a Student's t-distribution as defined above. Notice that the unknown population variance $\sigma^2$ does not appear in $T$, since it was in both the numerator and the denominator, so it canceled. Gosset intuitively obtained the probability density function stated above, with $\nu$ equal to $n - 1$, and Fisher proved it in 1925.[13]

The distribution of the test statistic $T$ depends on $\nu$, but not $\mu$ or $\sigma$; the lack of dependence on $\mu$ and $\sigma$ is what makes the t-distribution important in both theory and practice.

Sampling distribution of t-statistic

The t distribution arises as the sampling distribution of the t statistic. Below the one-sample t statistic is discussed; for the corresponding two-sample t statistic see Student's t-test.

Unbiased variance estimate

Let $x_1, \ldots, x_n \sim \mathcal{N}(\mu, \sigma^2)$ be independent and identically distributed samples from a normal distribution with mean $\mu$ and variance $\sigma^2$. The sample mean and unbiased sample variance are given by:

$$\begin{aligned} \bar{x} &= \frac{x_1 + \cdots + x_n}{n}, \\[5pt] s^2 &= \frac{1}{n-1} \sum_{i=1}^{n} (x_i - \bar{x})^2. \end{aligned}$$

The resulting (one sample) t statistic is given by

$$t = \frac{\bar{x} - \mu}{s/\sqrt{n}} \sim t_{n-1}$$

and is distributed according to a Student's t distribution with $n - 1$ degrees of freedom.

Thus for inference purposes the t statistic is a useful "pivotal quantity" in the case when the mean and variance $(\mu, \sigma^2)$ are unknown population parameters, in the sense that the t statistic then has a probability distribution that depends on neither $\mu$ nor $\sigma^2$.
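As an illustration, the one-sample statistic $t = (\bar{x} - \mu)/(s/\sqrt{n})$ can be computed with the Python standard library. This is a sketch; the function name `one_sample_t` and the data are made up for the example.

```python
import math
import statistics


def one_sample_t(xs: list[float], mu: float) -> tuple[float, int]:
    """Return the one-sample t statistic for mean mu and its degrees of freedom."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two observations")
    x_bar = statistics.fmean(xs)
    s = statistics.stdev(xs)  # square root of the unbiased sample variance
    return (x_bar - mu) / (s / math.sqrt(n)), n - 1


if __name__ == "__main__":
    data = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # hypothetical sample
    t, df = one_sample_t(data, mu=4.0)
    print(f"t = {t:.4f} with {df} degrees of freedom")
```

Note that `statistics.stdev` divides by $n - 1$, matching the unbiased estimate $s^2$, whereas `statistics.pstdev` divides by $n$.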

ML variance estimate

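Instead of the unbiased estimate $s^2$ we may also use the maximum likelihood estimate

$$s^2_{\mathrm{ML}} = \frac{1}{n} \sum_{i=1}^{n} (x_i - \bar{x})^2,$$

yielding the statistic

$$t_{\mathrm{ML}} = \frac{\bar{x} - \mu}{\sqrt{s^2_{\mathrm{ML}}/n}} = \sqrt{\frac{n}{n-1}}\;t.$$

This is distributed according to the location-scale t distribution:

$$t_{\mathrm{ML}} \sim \ell st\!\left(0,\ \tau^2 = \frac{n}{n-1},\ n-1\right).$$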
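The scale of this distribution follows because the two variance estimates differ only in their divisor. A short derivation, writing $s^2$ for the unbiased estimate (divisor $n-1$), $s^2_{\mathrm{ML}}$ for the maximum likelihood estimate (divisor $n$), and $t$, $t_{\mathrm{ML}}$ for the corresponding statistics:

```latex
\begin{aligned}
s^2_{\mathrm{ML}} &= \frac{1}{n}\sum_{i=1}^{n}(x_i-\bar{x})^2 = \frac{n-1}{n}\,s^2, \\[4pt]
t_{\mathrm{ML}} &= \frac{\bar{x}-\mu}{\sqrt{s^2_{\mathrm{ML}}/n}}
  = \sqrt{\frac{n}{n-1}}\;\frac{\bar{x}-\mu}{s/\sqrt{n}}
  = \sqrt{\frac{n}{n-1}}\;t, \qquad t \sim t_{n-1}, \\[4pt]
\Longrightarrow\quad t_{\mathrm{ML}} &\sim \ell st\!\left(0,\ \tau^2=\tfrac{n}{n-1},\ n-1\right).
\end{aligned}
```

The last step uses the location-scale transformation with location $0$ and scale $\tau = \sqrt{n/(n-1)}$ applied to a $t_{n-1}$ variable.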

Compound distribution of normal with inverse gamma distribution

The location-scale t distribution results from compounding a Gaussian distribution (normal distribution) with mean $\mu$ and unknown variance, with an inverse gamma distribution placed over the variance with parameters $a = \frac{\nu}{2}$ and $b = \frac{\nu\tau^2}{2}$. In other words, the random variable $X$ is assumed to have a Gaussian distribution with an unknown variance distributed as inverse gamma, and then the variance is marginalized out (integrated out).

Equivalently, this distribution results from compounding a Gaussian distribution with a scaled-inverse-chi-squared distribution with parameters $\nu$ and $\tau^2$. The scaled-inverse-chi-squared distribution is exactly the same distribution as the inverse gamma distribution, but with a different parameterization, i.e. $\nu = 2a$, $\tau^2 = \frac{b}{a}$.

The reason for the usefulness of this characterization is that in Bayesian statistics the inverse gamma distribution is the conjugate prior distribution of the variance of a Gaussian distribution. As a result, the location-scale t distribution arises naturally in many Bayesian inference problems.[21]
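The compounding construction can be mimicked by simulation: draw a variance from an inverse gamma distribution with shape $a = \nu/2$ and scale $b = \nu\tau^2/2$, then draw a normal value with that variance. The Python sketch below assumes only the standard library; the function name `sample_compound` and the parameter values are illustrative.

```python
import math
import random


def sample_compound(mu: float, tau2: float, nu: float, rng: random.Random) -> float:
    """Draw X ~ N(mu, sigma2) with sigma2 ~ InverseGamma(a=nu/2, b=nu*tau2/2)."""
    a = nu / 2.0
    b = nu * tau2 / 2.0
    # If G ~ Gamma(shape=a, rate=b), then 1/G ~ InverseGamma(a, b).
    # random.gammavariate takes (shape, scale), so the scale is 1/b.
    sigma2 = 1.0 / rng.gammavariate(a, 1.0 / b)
    return rng.gauss(mu, math.sqrt(sigma2))


if __name__ == "__main__":
    rng = random.Random(99)
    mu, tau2, nu, n = 3.0, 4.0, 10, 40_000
    xs = [sample_compound(mu, tau2, nu, rng) for _ in range(n)]
    # Standardize with the location and scale; 2.228 is the two-sided 95%
    # critical value of the t distribution with 10 degrees of freedom.
    inside = sum(abs((x - mu) / math.sqrt(tau2)) < 2.228 for x in xs) / n
    print(f"fraction within mu +/- 2.228*tau: {inside:.3f}")
```

If the marginal is indeed a location-scale t distribution with $\nu = 10$, the printed fraction should be near 0.95.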

Maximum entropy distribution

Student's t distribution is the maximum entropy probability distribution for a random variate $X$ for which $\operatorname{E}\{\ln(\nu + X^2)\}$ is fixed.[22]

Further properties

Monte Carlo sampling

There are various approaches to constructing random samples from the Student's t distribution. The choice depends on whether the samples are required on a stand-alone basis, or are to be constructed by application of a quantile function to uniform samples, e.g. in multi-dimensional applications based on copula dependency. In the case of stand-alone sampling, an extension of the Box–Muller method and its polar form is easily deployed.[23] It has the merit that it applies equally well to all real positive degrees of freedom, $\nu$, while many other candidate methods fail if $\nu$ is close to zero.[23]
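A polar method of this kind can be sketched as follows. Draw a point $(U, V)$ uniformly in the unit disc, set $W = U^2 + V^2$, and return $U\sqrt{\nu\,(W^{-2/\nu} - 1)/W}$. This is my reading of the polar construction, using the facts that $W$ is uniform on $(0, 1)$ and that $U/\sqrt{W}$ is the cosine of a uniform angle. It is an illustrative sketch rather than a transcription of the cited paper, and the name `sample_t_polar` is hypothetical.

```python
import math
import random


def sample_t_polar(nu: float, rng: random.Random) -> float:
    """Draw one Student's t variate with real nu > 0 by a polar rejection method."""
    if nu <= 0:
        raise ValueError("nu must be positive")
    while True:
        u = rng.uniform(-1.0, 1.0)
        v = rng.uniform(-1.0, 1.0)
        w = u * u + v * v
        if 0.0 < w <= 1.0:  # keep only points inside the unit disc
            break
    # The squared radius nu * (w**(-2/nu) - 1) has survival function
    # (1 + r/nu)**(-nu/2); u / sqrt(w) supplies the random direction.
    return u * math.sqrt(nu * (w ** (-2.0 / nu) - 1.0) / w)


if __name__ == "__main__":
    rng = random.Random(12345)
    n = 50_000
    draws = [sample_t_polar(10, rng) for _ in range(n)]
    # 1.812 is the two-sided 90% critical value for 10 degrees of freedom.
    print(sum(abs(t) < 1.812 for t in draws) / n)
    # Non-integer degrees of freedom are handled the same way.
    print(sample_t_polar(2.5, rng))
```

For very small $\nu$ the power $W^{-2/\nu}$ can overflow floating-point range, so a production implementation would need extra care there.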

Integral of Student's probability density function and p-value

The function $A(t \mid \nu)$ is the integral of Student's probability density function, $f(t)$, between $-t$ and $t$, for $t \ge 0$. It thus gives the probability that a value of t less than that calculated from observed data would occur by chance. Therefore, the function $A(t \mid \nu)$ can be used when testing whether the difference between the means of two sets of data is statistically significant, by calculating the corresponding value of t and the probability of its occurrence if the two sets of data were drawn from the same population. This is used in a variety of situations, particularly in t tests. For the statistic t, with $\nu$ degrees of freedom, $A(t \mid \nu)$ is the probability that t would be less than the observed value if the two means were the same (provided that the smaller mean is subtracted from the larger, so that $t \ge 0$). It can be easily calculated from the cumulative distribution function $F_\nu(t)$ of the t distribution:

$$A(t \mid \nu) = F_\nu(t) - F_\nu(-t) = 1 - I_{\frac{\nu}{\nu + t^2}}\!\left(\frac{\nu}{2}, \frac{1}{2}\right),$$

where $I_x(a, b)$ is the regularized incomplete beta function.

For statistical hypothesis testing this function is used to construct the p-value.
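Since $A(t \mid \nu)$ is the probability mass between $-t$ and $t$, a two-sided p-value is $1 - A(t \mid \nu)$. The Python sketch below assumes numerical integration of the density (composite Simpson) instead of the incomplete beta function, which the standard library does not provide. The names `central_mass` and `two_sided_p_value` are illustrative.

```python
import math


def t_pdf(t: float, nu: float) -> float:
    """Student's t density with nu > 0 degrees of freedom."""
    log_c = (math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
             - 0.5 * math.log(math.pi * nu))
    return math.exp(log_c - (nu + 1) / 2 * math.log1p(t * t / nu))


def central_mass(t: float, nu: float, steps: int = 2000) -> float:
    """A(t | nu): integral of the density from -|t| to |t| (steps must be even)."""
    a = abs(t)
    h = a / steps
    total = t_pdf(0.0, nu) + t_pdf(a, nu)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, nu)
    # By symmetry the mass on [-a, a] is twice the mass on [0, a].
    return 2.0 * total * h / 3.0


def two_sided_p_value(t: float, nu: float) -> float:
    """Probability of a statistic at least as extreme as t in absolute value."""
    return 1.0 - central_mass(t, nu)


if __name__ == "__main__":
    print(f"A(2.228 | 10) = {central_mass(2.228, 10):.4f}")
    print(f"p-value       = {two_sided_p_value(2.228, 10):.4f}")
```

The example uses the two-sided 95% critical value for 10 degrees of freedom, so the p-value should come out close to 0.05.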

Related distributions

- The noncentral t distribution generalizes the t distribution to include a noncentrality parameter. Unlike the nonstandardized t distributions, the noncentral distributions are not symmetric (the median is not the same as the mode).

- The discrete Student's t distribution is defined by its probability mass function at $r$ being proportional to:[24] $\prod_{j=1}^{k} \frac{1}{(r + j + a)^2 + b^2}$, $r = \ldots, -1, 0, 1, \ldots$. Here $a$, $b$, and $k$ are parameters. This distribution arises from the construction of a system of discrete distributions similar to that of the Pearson distributions for continuous distributions.[25]

- One can generate Student $A(t \mid \nu)$ samples by taking the ratio of variables from the normal distribution and the square root of the $\chi^2$ distribution. If we use, instead of the normal distribution, e.g. the Irwin–Hall distribution, we obtain overall a symmetric four-parameter distribution, which includes the normal, the uniform, the triangular, the Student t and the Cauchy distribution. This is also more flexible than some other symmetric generalizations of the normal distribution.

- The t distribution is an instance of ratio distributions.

Uses

In frequentist statistical inference

Student's t distribution arises in a variety of statistical estimation problems where the goal is to estimate an unknown parameter, such as a mean value, in a setting where the data are observed with additive errors. If (as in nearly all practical statistical work) the population standard deviation of these errors is unknown and has to be estimated from the data, the t distribution is often used to account for the extra uncertainty that results from this estimation. In most such problems, if the standard deviation of the errors were known, a normal distribution would be used instead of the t distribution.

Confidence intervals and hypothesis tests are two statistical procedures in which the quantiles of the sampling distribution of a particular statistic (e.g. the standard score) are required. In any situation where this statistic is a linear function of the data, divided by the usual estimate of the standard deviation, the resulting quantity can be rescaled and centered to follow Student's t distribution. Statistical analyses involving means, weighted means, and regression coefficients all lead to statistics having this form.

Quite often, textbook problems will treat the population standard deviation as if it were known and thereby avoid the need to use the Student's t distribution. These problems are generally of two kinds: (1) those in which the sample size is so large that one may treat a data-based estimate of the variance as if it were certain, and (2) those that illustrate mathematical reasoning, in which the problem of estimating the standard deviation is temporarily ignored because that is not the point that the author or instructor is then explaining.

Hypothesis testing

A number of statistics can be shown to have t distributions for samples of moderate size under null hypotheses that are of interest, so that the t distribution forms the basis for significance tests. For example, the distribution of Spearman's rank correlation coefficient $\rho$, in the null case (zero correlation), is well approximated by the t distribution for sample sizes above about 20.

Confidence intervals

Suppose the number $A$ is so chosen that

$$\operatorname{P}\{-A < T < A\} = 0.9,$$

when $T$ has a t distribution with $n - 1$ degrees of freedom. By symmetry, this is the same as saying that $A$ satisfies

$$\operatorname{P}\{T < A\} = 0.95,$$

so $A$ is the "95th percentile" of this probability distribution, or $A = t_{(0.05,\,n-1)}$. Then

$$\operatorname{P}\left\{-A < \frac{\bar{X}_n - \mu}{S_n/\sqrt{n}} < A\right\} = 0.9,$$

and this is equivalent to

$$\operatorname{P}\left\{\bar{X}_n - A\,\frac{S_n}{\sqrt{n}} < \mu < \bar{X}_n + A\,\frac{S_n}{\sqrt{n}}\right\} = 0.9.$$

Therefore, the interval whose endpoints are

$$\bar{X}_n \pm A\,\frac{S_n}{\sqrt{n}}$$

is a 90% confidence interval for $\mu$. Therefore, if we find the mean of a set of observations that we can reasonably expect to have a normal distribution, we can use the t distribution to examine whether the confidence limits on that mean include some theoretically predicted value, such as the value predicted on a null hypothesis.

It is this result that is used in the Student's t tests: since the difference between the means of samples from two normal distributions is itself distributed normally, the t distribution can be used to examine whether that difference can reasonably be supposed to be zero.

If the data are normally distributed, the one-sided $(1 - \alpha)$ upper confidence limit (UCL) of the mean can be calculated using the following equation:

$$\mathrm{UCL}_{1-\alpha} = \bar{X}_n + t_{\alpha,\,n-1}\,\frac{S_n}{\sqrt{n}}.$$

The resulting UCL will be the greatest average value that will occur for a given confidence interval and population size. In other words, $\bar{X}_n$ being the mean of the set of observations, the probability that the mean of the distribution is inferior to $\mathrm{UCL}_{1-\alpha}$ is equal to the confidence level $1 - \alpha$.
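The interval $\bar{X}_n \pm A\,S_n/\sqrt{n}$ needs the quantile $A$ of the t distribution with $n - 1$ degrees of freedom. The Python sketch below assumes only the standard library and finds the quantile by bisection on a numerically integrated CDF. All function names are illustrative, and the approach is meant for moderate confidence levels, not extreme tails.

```python
import math
import statistics


def t_pdf(t: float, nu: float) -> float:
    """Student's t density with nu > 0 degrees of freedom."""
    log_c = (math.lgamma((nu + 1) / 2) - math.lgamma(nu / 2)
             - 0.5 * math.log(math.pi * nu))
    return math.exp(log_c - (nu + 1) / 2 * math.log1p(t * t / nu))


def t_cdf_nonneg(t: float, nu: float, steps: int = 1000) -> float:
    """F(t) for t >= 0 via composite Simpson on [0, t] (steps must be even)."""
    h = t / steps
    total = t_pdf(0.0, nu) + t_pdf(t, nu)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, nu)
    return 0.5 + total * h / 3.0


def t_quantile(p: float, nu: float) -> float:
    """Quantile for 0.5 <= p < 1, found by bracketing and bisection."""
    if not 0.5 <= p < 1.0:
        raise ValueError("this sketch handles 0.5 <= p < 1 only")
    lo, hi = 0.0, 1.0
    while t_cdf_nonneg(hi, nu) < p:  # grow the bracket until it contains p
        hi *= 2.0
    for _ in range(60):
        mid = (lo + hi) / 2.0
        if t_cdf_nonneg(mid, nu) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def mean_confidence_interval(xs: list[float], level: float = 0.90) -> tuple[float, float]:
    """Two-sided confidence interval for the mean of normally distributed data."""
    n = len(xs)
    x_bar = statistics.fmean(xs)
    s = statistics.stdev(xs)  # unbiased sample standard deviation
    a = t_quantile(0.5 + level / 2.0, n - 1)
    half_width = a * s / math.sqrt(n)
    return x_bar - half_width, x_bar + half_width


if __name__ == "__main__":
    print(t_quantile(0.95, 10))  # compare with the tabulated 1.812
    print(mean_confidence_interval([9.0, 10.0, 11.0], level=0.90))
```

A one-sided upper confidence limit at level $1 - \alpha$ is obtained the same way, using `t_quantile(1 - alpha, n - 1)` and only the upper endpoint.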

Prediction intervals

The t distribution can be used to construct a prediction interval for an unobserved sample from a normal distribution with unknown mean and variance.

In Bayesian statistics

The Student's t distribution, especially in its three-parameter (location-scale) version, arises frequently in Bayesian statistics as a result of its connection with the normal distribution. Whenever the variance of a normally distributed random variable is unknown and a conjugate prior following an inverse gamma distribution is placed over it, the resulting marginal distribution of the variable will follow a Student's t distribution. Equivalent constructions with the same results involve a conjugate scaled-inverse-chi-squared distribution over the variance, or a conjugate gamma distribution over the precision. If an improper prior proportional to $1/\sigma^2$ is placed over the variance, the t distribution also arises. This is the case regardless of whether the mean of the normally distributed variable is known, is unknown and distributed according to a conjugate normally distributed prior, or is unknown and distributed according to an improper constant prior.

Related situations that also produce a t distribution are:

- The marginal posterior distribution of the unknown mean of a normally distributed variable, with unknown prior mean and variance following the above model.

- The prior predictive distribution and posterior predictive distribution of a new normally distributed data point when a series of independent identically distributed normally distributed data points have been observed, with prior mean and variance as in the above model.

Robust parametric modeling

The t distribution is often used as an alternative to the normal distribution as a model for data, which often has heavier tails than the normal distribution allows for; see e.g. Lange et al.[26] The classical approach was to identify outliers (e.g., using Grubbs's test) and exclude or downweight them in some way. However, it is not always easy to identify outliers (especially in high dimensions), and the t distribution is a natural choice of model for such data and provides a parametric approach to robust statistics.

A Bayesian account can be found in Gelman et al.[27] The degrees of freedom parameter controls the kurtosis of the distribution and is correlated with the scale parameter. The likelihood can have multiple local maxima and, as such, it is often necessary to fix the degrees of freedom at a fairly low value and estimate the other parameters taking this as given. Some authors report that values between 3 and 9 are often good choices. Venables and Ripley suggest that a value of 5 is often a good choice.

Student's t process

For practical regression and prediction needs, Student's t processes were introduced, which are generalisations of the Student t distributions for functions. A Student's t process is constructed from the Student t distributions in the same way that a Gaussian process is constructed from the Gaussian distributions. For a Gaussian process, all sets of values have a multidimensional Gaussian distribution. Analogously, $X(t)$ is a Student t process on an interval $I = [a, b]$ if the corresponding values of the process $X(t_1), \ldots, X(t_n)$ ($t_i \in I$) have a joint multivariate Student t distribution.[28] These processes are used for regression, prediction, Bayesian optimization and related problems. For multivariate regression and multi-output prediction, the multivariate Student t processes are introduced and used.[29]

Table of selected values

The following table lists values for t distributions with $\nu$ degrees of freedom for a range of one-sided or two-sided critical regions. The first column is $\nu$, the percentages along the top are confidence levels, and the numbers in the body of the table are the $t_{\alpha,\,n-1}$ factors described in the section on confidence intervals.

The last row with infinite $\nu$ gives critical points for a normal distribution, since a t distribution with infinitely many degrees of freedom is a normal distribution. (See Related distributions above.)

| One-sided | 75% | 80% | 85% | 90% | 95% | 97.5% | 99% | 99.5% | 99.75% | 99.9% | 99.95% |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Two-sided | 50% | 60% | 70% | 80% | 90% | 95% | 98% | 99% | 99.5% | 99.8% | 99.9% |
| 1 | 1.000 | 1.376 | 1.963 | 3.078 | 6.314 | 12.706 | 31.821 | 63.657 | 127.321 | 318.309 | 636.619 |
| 2 | 0.816 | 1.061 | 1.386 | 1.886 | 2.920 | 4.303 | 6.965 | 9.925 | 14.089 | 22.327 | 31.599 |
| 3 | 0.765 | 0.978 | 1.250 | 1.638 | 2.353 | 3.182 | 4.541 | 5.841 | 7.453 | 10.215 | 12.924 |
| 4 | 0.741 | 0.941 | 1.190 | 1.533 | 2.132 | 2.776 | 3.747 | 4.604 | 5.598 | 7.173 | 8.610 |
| 5 | 0.727 | 0.920 | 1.156 | 1.476 | 2.015 | 2.571 | 3.365 | 4.032 | 4.773 | 5.893 | 6.869 |
| 6 | 0.718 | 0.906 | 1.134 | 1.440 | 1.943 | 2.447 | 3.143 | 3.707 | 4.317 | 5.208 | 5.959 |
| 7 | 0.711 | 0.896 | 1.119 | 1.415 | 1.895 | 2.365 | 2.998 | 3.499 | 4.029 | 4.785 | 5.408 |
| 8 | 0.706 | 0.889 | 1.108 | 1.397 | 1.860 | 2.306 | 2.896 | 3.355 | 3.833 | 4.501 | 5.041 |
| 9 | 0.703 | 0.883 | 1.100 | 1.383 | 1.833 | 2.262 | 2.821 | 3.250 | 3.690 | 4.297 | 4.781 |
| 10 | 0.700 | 0.879 | 1.093 | 1.372 | 1.812 | 2.228 | 2.764 | 3.169 | 3.581 | 4.144 | 4.587 |
| 11 | 0.697 | 0.876 | 1.088 | 1.363 | 1.796 | 2.201 | 2.718 | 3.106 | 3.497 | 4.025 | 4.437 |
| 12 | 0.695 | 0.873 | 1.083 | 1.356 | 1.782 | 2.179 | 2.681 | 3.055 | 3.428 | 3.930 | 4.318 |
| 13 | 0.694 | 0.870 | 1.079 | 1.350 | 1.771 | 2.160 | 2.650 | 3.012 | 3.372 | 3.852 | 4.221 |
| 14 | 0.692 | 0.868 | 1.076 | 1.345 | 1.761 | 2.145 | 2.624 | 2.977 | 3.326 | 3.787 | 4.140 |
| 15 | 0.691 | 0.866 | 1.074 | 1.341 | 1.753 | 2.131 | 2.602 | 2.947 | 3.286 | 3.733 | 4.073 |
| 16 | 0.690 | 0.865 | 1.071 | 1.337 | 1.746 | 2.120 | 2.583 | 2.921 | 3.252 | 3.686 | 4.015 |
| 17 | 0.689 | 0.863 | 1.069 | 1.333 | 1.740 | 2.110 | 2.567 | 2.898 | 3.222 | 3.646 | 3.965 |
| 18 | 0.688 | 0.862 | 1.067 | 1.330 | 1.734 | 2.101 | 2.552 | 2.878 | 3.197 | 3.610 | 3.922 |
| 19 | 0.688 | 0.861 | 1.066 | 1.328 | 1.729 | 2.093 | 2.539 | 2.861 | 3.174 | 3.579 | 3.883 |
| 20 | 0.687 | 0.860 | 1.064 | 1.325 | 1.725 | 2.086 | 2.528 | 2.845 | 3.153 | 3.552 | 3.850 |
| 21 | 0.686 | 0.859 | 1.063 | 1.323 | 1.721 | 2.080 | 2.518 | 2.831 | 3.135 | 3.527 | 3.819 |
| 22 | 0.686 | 0.858 | 1.061 | 1.321 | 1.717 | 2.074 | 2.508 | 2.819 | 3.119 | 3.505 | 3.792 |
| 23 | 0.685 | 0.858 | 1.060 | 1.319 | 1.714 | 2.069 | 2.500 | 2.807 | 3.104 | 3.485 | 3.767 |
| 24 | 0.685 | 0.857 | 1.059 | 1.318 | 1.711 | 2.064 | 2.492 | 2.797 | 3.091 | 3.467 | 3.745 |
| 25 | 0.684 | 0.856 | 1.058 | 1.316 | 1.708 | 2.060 | 2.485 | 2.787 | 3.078 | 3.450 | 3.725 |
| 26 | 0.684 | 0.856 | 1.058 | 1.315 | 1.706 | 2.056 | 2.479 | 2.779 | 3.067 | 3.435 | 3.707 |
| 27 | 0.684 | 0.855 | 1.057 | 1.314 | 1.703 | 2.052 | 2.473 | 2.771 | 3.057 | 3.421 | 3.690 |
| 28 | 0.683 | 0.855 | 1.056 | 1.313 | 1.701 | 2.048 | 2.467 | 2.763 | 3.047 | 3.408 | 3.674 |
| 29 | 0.683 | 0.854 | 1.055 | 1.311 | 1.699 | 2.045 | 2.462 | 2.756 | 3.038 | 3.396 | 3.659 |
| 30 | 0.683 | 0.854 | 1.055 | 1.310 | 1.697 | 2.042 | 2.457 | 2.750 | 3.030 | 3.385 | 3.646 |
| 40 | 0.681 | 0.851 | 1.050 | 1.303 | 1.684 | 2.021 | 2.423 | 2.704 | 2.971 | 3.307 | 3.551 |
| 50 | 0.679 | 0.849 | 1.047 | 1.299 | 1.676 | 2.009 | 2.403 | 2.678 | 2.937 | 3.261 | 3.496 |
| 60 | 0.679 | 0.848 | 1.045 | 1.296 | 1.671 | 2.000 | 2.390 | 2.660 | 2.915 | 3.232 | 3.460 |
| 80 | 0.678 | 0.846 | 1.043 | 1.292 | 1.664 | 1.990 | 2.374 | 2.639 | 2.887 | 3.195 | 3.416 |
| 100 | 0.677 | 0.845 | 1.042 | 1.290 | 1.660 | 1.984 | 2.364 | 2.626 | 2.871 | 3.174 | 3.390 |
| 120 | 0.677 | 0.845 | 1.041 | 1.289 | 1.658 | 1.980 | 2.358 | 2.617 | 2.860 | 3.160 | 3.373 |
| ∞ | 0.674 | 0.842 | 1.036 | 1.282 | 1.645 | 1.960 | 2.326 | 2.576 | 2.807 | 3.090 | 3.291 |

Calculating the confidence interval

Let's say we have a sample with size 11, sample mean 10, and sample variance 2. For 90% confidence with 10 degrees of freedom, the one-sided t value from the table is 1.372. Then with the confidence interval calculated from

$$\bar{X}_n \pm t_{\alpha,\nu}\,\frac{S_n}{\sqrt{n}},$$

we determine that with 90% confidence we have a true mean lying below

$$10 + 1.372\,\frac{\sqrt{2}}{\sqrt{11}} \approx 10.585.$$

In other words, 90% of the times that an upper threshold is calculated by this method from particular samples, this upper threshold exceeds the true mean.

And with 90% confidence we have a true mean lying above

$$10 - 1.372\,\frac{\sqrt{2}}{\sqrt{11}} \approx 9.415.$$

In other words, 90% of the times that a lower threshold is calculated by this method from particular samples, this lower threshold lies below the true mean.

So that at 80% confidence (calculated from 100% − 2 × (1 − 90%) = 80%), we have a true mean lying within the interval

$$\left(10 - 1.372\,\frac{\sqrt{2}}{\sqrt{11}},\ 10 + 1.372\,\frac{\sqrt{2}}{\sqrt{11}}\right) \approx (9.415,\ 10.585).$$

Saying that 80% of the times that upper and lower thresholds are calculated by this method from a given sample, the true mean is both below the upper threshold and above the lower threshold is not the same as saying that there is an 80% probability that the true mean lies between a particular pair of upper and lower thresholds that have been calculated by this method; see confidence interval and prosecutor's fallacy.

Nowadays, statistical software, such as the R programming language, and functions available in many spreadsheet programs compute values of the t distribution and its inverse without tables.
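The arithmetic of the worked example (sample size 11, sample mean 10, sample variance 2, tabulated value 1.372) can be reproduced in a few lines of Python. This sketch takes the critical value from the table instead of computing it; the function name `interval_from_table_value` is illustrative.

```python
import math


def interval_from_table_value(n: int, sample_mean: float,
                              sample_variance: float, t_crit: float) -> tuple[float, float]:
    """Return (lower, upper) = mean -/+ t_crit * sqrt(variance / n)."""
    half_width = t_crit * math.sqrt(sample_variance / n)
    return sample_mean - half_width, sample_mean + half_width


if __name__ == "__main__":
    # 1.372 is the one-sided 90% (two-sided 80%) value for 10 degrees of freedom.
    lower, upper = interval_from_table_value(11, 10.0, 2.0, 1.372)
    print(f"{lower:.3f} {upper:.3f}")  # prints "9.415 10.585"
```

The half-width is $1.372 \times \sqrt{2/11} \approx 0.585$, so the lower limit rounds to 9.415 and the upper limit to 10.585.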

See also

- F-distribution

- Folded t and half t distributions

- Hotelling's T² distribution

- Multivariate Student distribution

- Standard normal table (Z-distribution table)

- t statistic

- Tau distribution, for internally studentized residuals

- Wilks' lambda distribution

- Wishart distribution

- Modified half-normal distribution,[30] with the pdf on $(0, \infty)$ given as $f(x) = \frac{2\beta^{\alpha/2} x^{\alpha-1} \exp(-\beta x^2 + \gamma x)}{\Psi\!\left(\frac{\alpha}{2}, \frac{\gamma}{\sqrt{\beta}}\right)}$, where $\Psi$ denotes the Fox–Wright Psi function.

Notes

1. Hurst, Simon. "The characteristic function of the Student t distribution". Financial Mathematics Research Report. Statistics Research Report No. SRR044-95. Archived from the original on February 18, 2010.

2. Norton, Matthew; Khokhlov, Valentyn; Uryasev, Stan (2019). "Calculating CVaR and bPOE for common probability distributions with application to portfolio optimization and density estimation" (PDF). Annals of Operations Research. 299 (1–2). Springer: 1281–1315. arXiv:1811.11301. doi:10.1007/s10479-019-03373-1. S2CID 254231768. Retrieved 2023-02-27.

3. Helmert FR (1875). "Über die Berechnung des wahrscheinlichen Fehlers aus einer endlichen Anzahl wahrer Beobachtungsfehler". Zeitschrift für Angewandte Mathematik und Physik (in German). 20: 300–303.

4. Helmert FR (1876). "Über die Wahrscheinlichkeit der Potenzsummen der Beobachtungsfehler und über einige damit in Zusammenhang stehende Fragen". Zeitschrift für Angewandte Mathematik und Physik (in German). 21: 192–218.

5. Helmert FR (1876). "Die Genauigkeit der Formel von Peters zur Berechnung des wahrscheinlichen Beobachtungsfehlers directer Beobachtungen gleicher Genauigkeit" [The accuracy of Peters' formula for calculating the probable observation error of direct observations of the same accuracy]. Astronomische Nachrichten (in German). 88 (8–9): 113–132. Bibcode:1876AN.....88..113H. doi:10.1002/asna.18760880802.

6. Lüroth J (1876). "Vergleichung von zwei Werten des wahrscheinlichen Fehlers". Astronomische Nachrichten (in German). 87 (14): 209–220. Bibcode:1876AN.....87..209L. doi:10.1002/asna.18760871402.

7. Pfanzagl J, Sheynin O (1996). "Studies in the history of probability and statistics. XLIV. A forerunner of the t distribution". Biometrika. 83 (4): 891–898. doi:10.1093/biomet/83.4.891. MR 1766040.

8. Sheynin O (1995). "Helmert's work in the theory of errors". Archive for History of Exact Sciences. 49 (1): 73–104. doi:10.1007/BF00374700. S2CID 121241599.

9. Pearson, K. (1895). "Contributions to the Mathematical Theory of Evolution. II. Skew Variation in Homogeneous Material" (PDF). Philosophical Transactions of the Royal Society A: Mathematical, Physical and Engineering Sciences. 186 (374): 343–414. Bibcode:1895RSPTA.186..343P. doi:10.1098/rsta.1895.0010. ISSN 1364-503X.

10. "Student" [pseud. William Sealy Gosset] (1908). "The probable error of a mean" (PDF). Biometrika. 6 (1): 1–25. doi:10.1093/biomet/6.1.1. hdl:10338.dmlcz/143545. JSTOR 2331554.

11. Wendl MC (2016). "Pseudonymous fame". Science. 351 (6280): 1406. Bibcode:2016Sci...351.1406W. doi:10.1126/science.351.6280.1406. PMID 27013722.

12. Mortimer RG (2005). Mathematics for Physical Chemistry (3rd ed.). Burlington, MA: Elsevier. p. 326. ISBN 9780080492889. OCLC 156200058.

13. Fisher RA (1925). "Applications of 'Student's' distribution" (PDF). Metron. 5: 90–104. Archived from the original (PDF) on 5 March 2016.

14. Walpole RE, Myers R, Myers S, Ye K (2006). Probability & Statistics for Engineers & Scientists (7th ed.). New Delhi, IN: Pearson. p. 237. ISBN 9788177584042. OCLC 818811849.

15. Kruschke JK (2015). Doing Bayesian Data Analysis (2nd ed.). Academic Press. ISBN 9780124058880. OCLC 959632184.

16. Casella G, Berger RL (1990). Statistical Inference. Duxbury Resource Center. p. 56. ISBN 9780534119584.

17. Jackman, S. (2009). Bayesian Analysis for the Social Sciences. Wiley Series in Probability and Statistics. Wiley. p. 507. doi:10.1002/9780470686621. ISBN 9780470011546.

18. Johnson NL, Kotz S, Balakrishnan N (1995). "Chapter 28". Continuous Univariate Distributions. Vol. 2 (2nd ed.). Wiley. ISBN 9780471584940.

19. Hogg RV, Craig AT (1978). Introduction to Mathematical Statistics (4th ed.). New York: Macmillan. ASIN B010WFO0SA. Sections 4.4 and 4.8.

20. Cochran WG (1934). "The distribution of quadratic forms in a normal system, with applications to the analysis of covariance". Math. Proc. Camb. Philos. Soc. 30 (2): 178–191. Bibcode:1934PCPS...30..178C. doi:10.1017/S0305004100016595. S2CID 122547084.

21. Gelman AB, Carlin JS, Rubin DB, Stern HS (1997). Bayesian Data Analysis (2nd ed.). Boca Raton, FL: Chapman & Hall. p. 68. ISBN 9780412039911.

22. Park SY, Bera AK (2009). "Maximum entropy autoregressive conditional heteroskedasticity model". J. Econom. 150 (2): 219–230. doi:10.1016/j.jeconom.2008.12.014.

23. Bailey RW (1994). "Polar generation of random variates with the t distribution". Mathematics of Computation. 62 (206): 779–781. Bibcode:1994MaCom..62..779B. doi:10.2307/2153537. JSTOR 2153537. S2CID 120459654.

24. Ord JK (1972). Families of Frequency Distributions. London, UK: Griffin. Table 5.1. ISBN 9780852641378.

25. Ord JK (1972). Families of Frequency Distributions. London, UK: Griffin. Chapter 5. ISBN 9780852641378.

26. Lange KL, Little RJ, Taylor JM (1989). "Robust Statistical Modeling Using the t Distribution" (PDF). J. Am. Stat. Assoc. 84 (408): 881–896. doi:10.1080/01621459.1989.10478852. JSTOR 2290063.

27. Gelman AB, Carlin JB, Stern HS, et al. (2014). "Computationally efficient Markov chain simulation". Bayesian Data Analysis. Boca Raton, Florida: CRC Press. p. 293. ISBN 9781439898208.

28. Shah, Amar; Wilson, Andrew Gordon; Ghahramani, Zoubin (2014). "Student t processes as alternatives to Gaussian processes" (PDF). JMLR. 33 (Proceedings of the 17th International Conference on Artificial Intelligence and Statistics (AISTATS) 2014, Reykjavik, Iceland): 877–885. arXiv:1402.4306.

29. Chen, Zexun; Wang, Bo; Gorban, Alexander N. (2019). "Multivariate Gaussian and Student t process regression for multi-output prediction". Neural Computing and Applications. 32 (8): 3005–3028. arXiv:1703.04455. doi:10.1007/s00521-019-04687-8.

30. Sun, Jingchao; Kong, Maiying; Pal, Subhadip (22 June 2021). "The Modified-Half-Normal distribution: Properties and an efficient sampling scheme". Communications in Statistics - Theory and Methods. 52 (5): 1591–1613. doi:10.1080/03610926.2021.1934700. ISSN 0361-0926. S2CID 237919587.

References

- Senn, S.; Richardson, W. (1994). "The first t test". Statistics in Medicine. 13 (8): 785–803. doi:10.1002/sim.4780130802. PMID 8047737.

- Hogg RV, Craig AT (1978). Introduction to Mathematical Statistics (4th ed.). New York: Macmillan. ASIN B010WFO0SA.

- Venables, W. N.; Ripley, B. D. (2002). Modern Applied Statistics with S (Fourth ed.). Springer.

- Gelman, Andrew; John B. Carlin; Hal S. Stern; Donald B. Rubin (2003). Bayesian Data Analysis (Second ed.). CRC/Chapman & Hall. ISBN 1-58488-388-X.

External links

- "Student distribution", Encyclopedia of Mathematics, EMS Press, 2001 [1994]

- Earliest Known Uses of Some of the Words of Mathematics (S) (Remarks on the history of the term "Student's distribution")

- Rouaud, M. (2013), Probability, Statistics and Estimation (PDF) (short ed.). First Students on page 112.

- Student's t-Distribution, archived 2021-04-10 at the Wayback Machine

![{\displaystyle {\begin{matrix}\ {\frac {\ 1\ }{2}}+x\ \Gamma \left({\frac {\ \nu +1\ }{2}}\right)\times \\[0.5em]{\frac {\ {{}_{2}F_{1}}\!\left(\ {\frac {\ 1\ }{2}},\ {\frac {\ \nu +1\ }{2}};\ {\frac {3}{\ 2\ }};\ -{\frac {~x^{2}\ }{\nu }}\ \right)\ }{\ {\sqrt {\pi \nu }}\ \Gamma \left({\frac {\ \nu \ }{2}}\right)\ }}\ ,\end{matrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/32b4a3af11d075b054f564e60b7aea14bf2a95f3)

![{\displaystyle \ {\begin{matrix}{\frac {\ \nu +1\ }{2}}\left[\ \psi \left({\frac {\ \nu +1\ }{2}}\right)-\psi \left({\frac {\ \nu \ }{2}}\right)\ \right]\\[0.5em]+\ln \left[{\sqrt {\nu \ }}\ {\mathrm {B} }\left(\ {\frac {\ \nu \ }{2}},\ {\frac {\ 1\ }{2}}\ \right)\right]\ {\scriptstyle {\text{(nats)}}}\ \end{matrix}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/f248820e946d0f06d1d132fb0d1473d3bed1736c)

![{\displaystyle \ {\frac {\ 1\ }{2}}+{\frac {\ 1\ }{\pi }}\ \left[{\frac {\left(\ {\frac {t}{\ {\sqrt {3\ }}\ }}\ \right)}{\left(\ 1+{\frac {~t^{2}\ }{3}}\ \right)}}+\arctan \left(\ {\frac {t}{\ {\sqrt {3\ }}\ }}\ \right)\ \right]\ }](https://wikimedia.org/api/rest_v1/media/math/render/svg/8354c1a9905eca443735c8edd6f2ff7e30338284)

![{\displaystyle \ {\frac {\ 1\ }{2}}+{\frac {\ 3\ }{8}}\left[\ {\frac {t}{\ {\sqrt {1+{\frac {~t^{2}\ }{4}}~}}\ }}\right]\left[\ 1-{\frac {~t^{2}\ }{\ 12\ \left(\ 1+{\frac {~t^{2}\ }{4}}\ \right)\ }}\ \right]\ }](https://wikimedia.org/api/rest_v1/media/math/render/svg/593d1fbbf85d9c6eca1216081674478751fb9474)

![{\displaystyle \ {\frac {\ 1\ }{2}}+{\frac {\ 1\ }{\pi }}{\left[{\frac {t}{\ {\sqrt {5\ }}\left(1+{\frac {\ t^{2}\ }{5}}\right)\ }}\left(1+{\frac {2}{\ 3\left(1+{\frac {\ t^{2}\ }{5}}\right)\ }}\right)+\arctan \left({\frac {t}{\ {\sqrt {\ 5\ }}\ }}\right)\right]}\ }](https://wikimedia.org/api/rest_v1/media/math/render/svg/e4b4a5988d5b6d89997077e3ef32bda4bdf6ba5e)

![{\displaystyle \ {\frac {\ 1\ }{2}}\ {\left[1+\operatorname {erf} \left({\frac {t}{\ {\sqrt {2\ }}\ }}\right)\right]}\ }](https://wikimedia.org/api/rest_v1/media/math/render/svg/a55ea64b38c1617eea3674d914c315fdb9fab27c)

![{\displaystyle \operatorname {\mathbb {E} } \left\{\ T^{k}\ \right\}={\begin{cases}\quad 0&k{\text{ odd }},\quad 0<k<\nu \ ,\\{}\\{\frac {1}{\ {\sqrt {\pi \ }}\ \Gamma \left({\frac {\ \nu \ }{2}}\right)}}\ \left[\ \Gamma \!\left({\frac {\ k+1\ }{2}}\right)\ \Gamma \!\left({\frac {\ \nu -k\ }{2}}\right)\ \nu ^{\frac {\ k\ }{2}}\ \right]&k{\text{ even }},\quad 0<k<\nu ~.\\\end{cases}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/bede1b3de92cfea26b16817b52f40f76d35d1ceb)

![{\displaystyle {\begin{aligned}{\bar {x}}&={\frac {\ x_{1}+\cdots +x_{n}\ }{n}}\ ,\\[5pt]s^{2}&={\frac {1}{\ n-1\ }}\ \sum _{i=1}^{n}(x_{i}-{\bar {x}})^{2}~.\end{aligned}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/d888648788b273c89dbb57cb5a936e7824fa31d2)

![{\displaystyle I=[a,b]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/6d6214bb3ce7f00e496c0706edd1464ac60b73b5)