Designed for simplicity, customization, and developer productivity.

Important

✨ See the Official Documentation for more details.

Objective

README-AI is a developer tool for automatically generating README markdown files using a robust repository processor engine and generative AI. Simply provide a repository URL or local path to your codebase, and a well-structured and detailed README file will be generated for you.

Motivation

This project aims to streamline the documentation process for developers, ensuring projects are properly documented and easy to understand. Whether you're working on an open-source project, enterprise software, or a personal project, README-AI is here to help you create high-quality documentation quickly and efficiently.

Running from the command line:

readmeai-cli-demo.mov

Running directly in your browser:

readmeai-streamlit-demo.mov

- Automated Documentation: Synchronizes data from third-party sources and generates documentation automatically.

- Customizable Output: Dozens of options for styling and formatting, badges, header designs, and more.

- Language Agnostic: Works across a wide range of programming languages and project types.

- Multi-LLM Support: Compatible with OpenAI, Ollama, Anthropic, Google Gemini, and Offline Mode.

- Offline Mode: Generates a boilerplate README without calling an external API.

- Markdown Best Practices: Leverages best practices in Markdown formatting for clean, professional-looking docs.

A few combinations of README styles and configurations:

See the Configuration section for a complete list of CLI options.

📍 Overview

Overview ◎ High-level introduction of the project, focused on the value proposition and use cases rather than technical aspects.

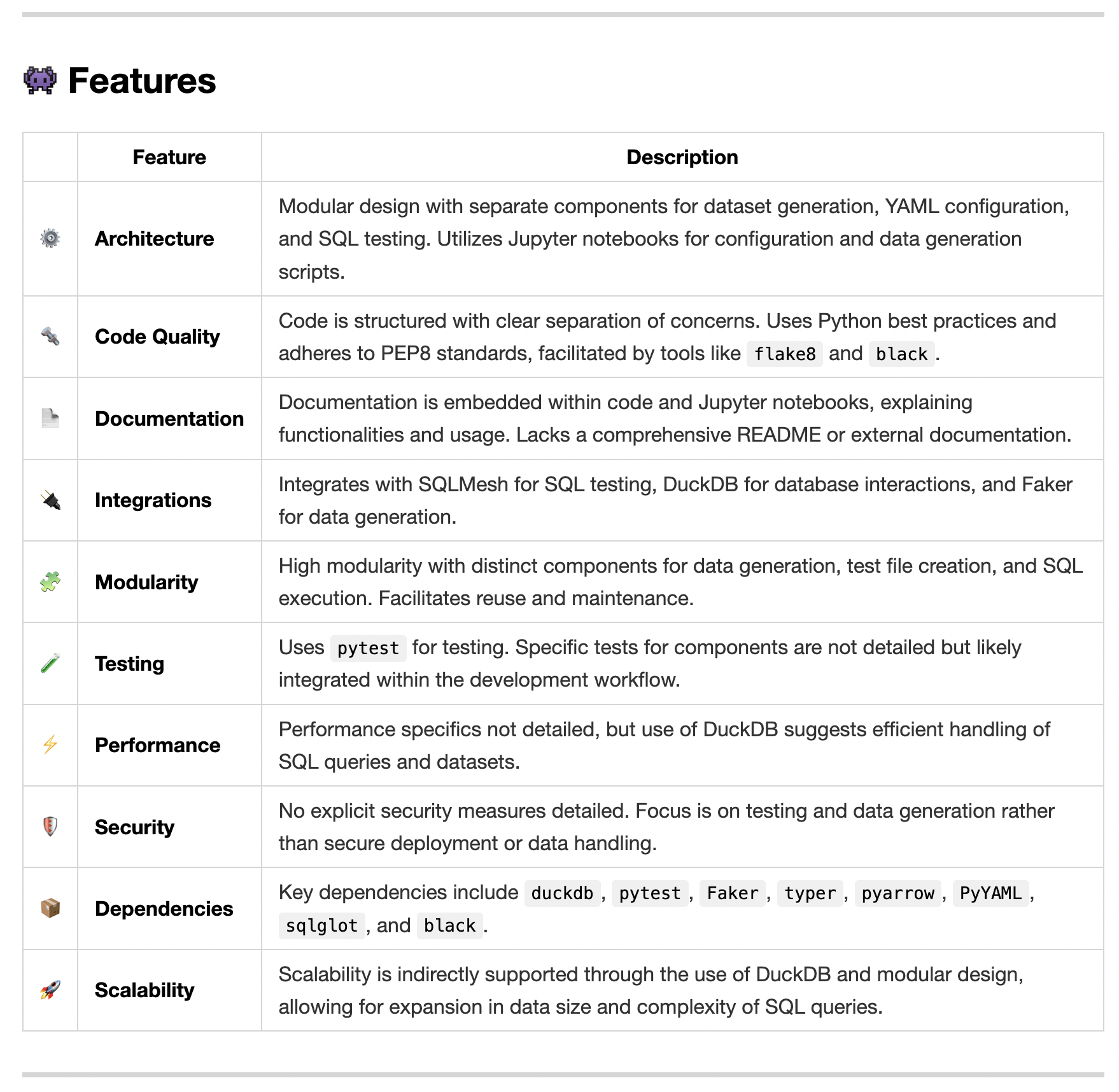

✨ Features

Features Table ◎ Generated Markdown table that highlights the key technical features and components of the codebase. This table is generated using a structured prompt template.

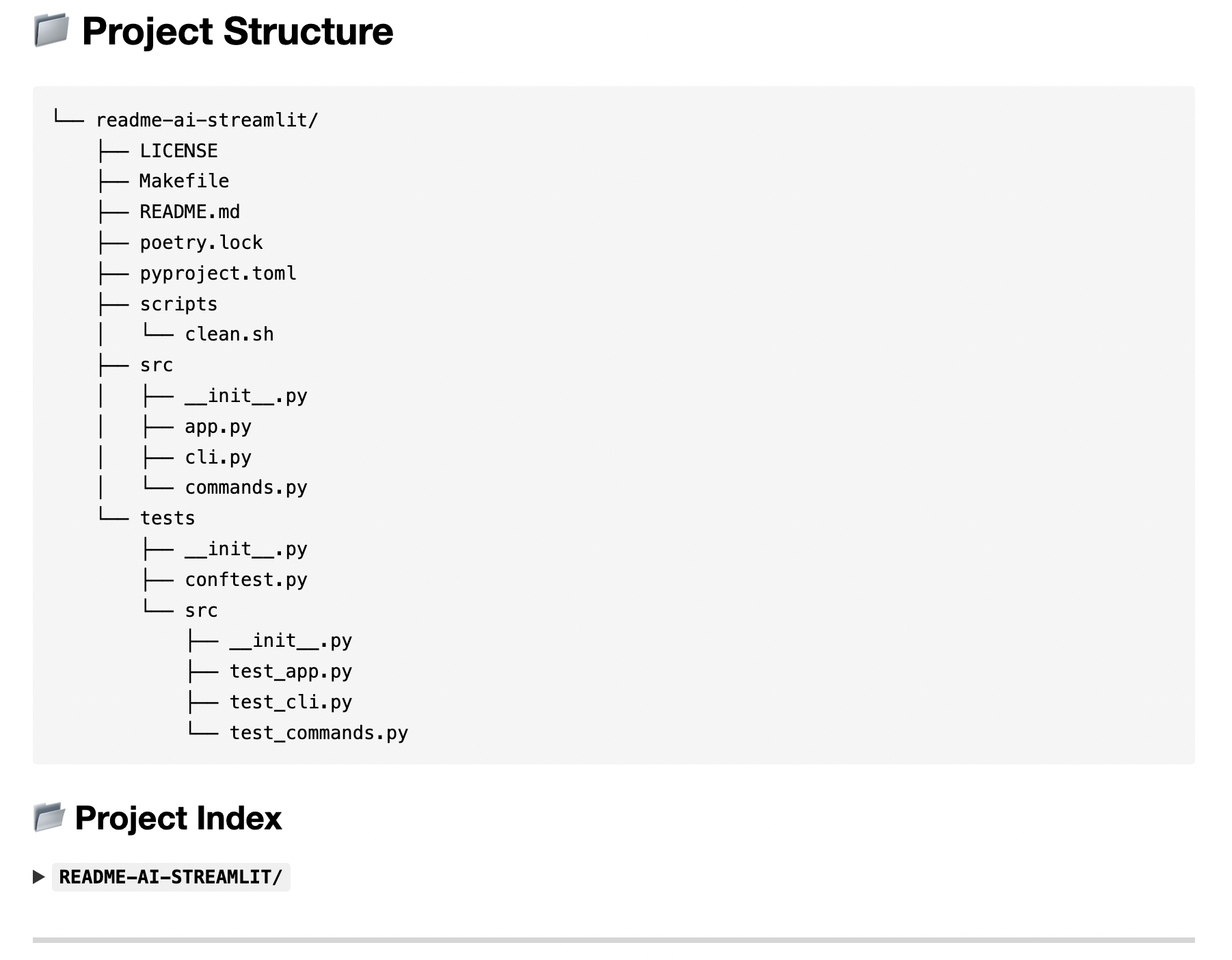

📃 Codebase Documentation

Directory Tree ◎ The project's directory structure is generated using pure Python and embedded in the README. See readmeai.generators.tree for more details.

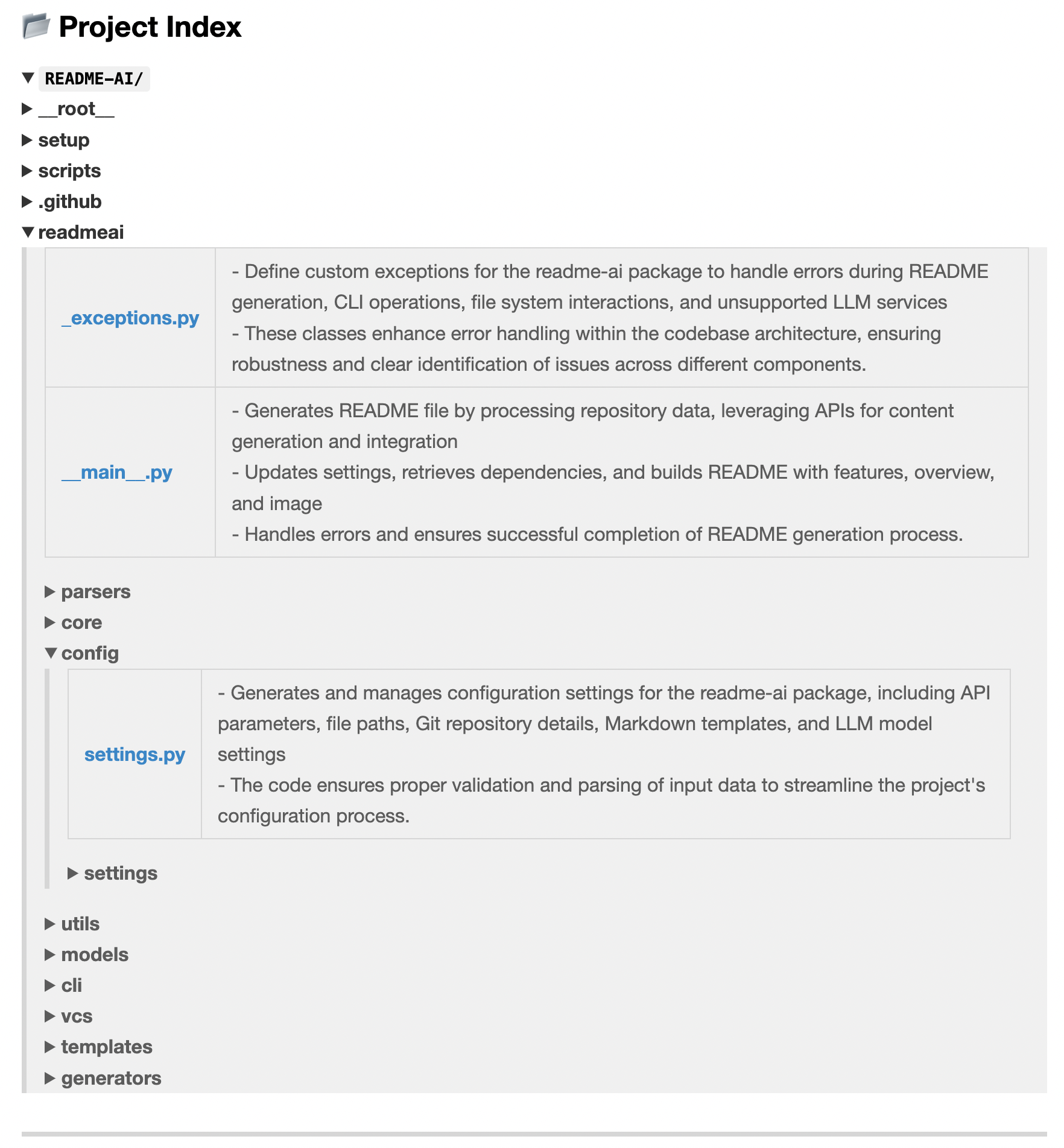
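To illustrate what generating a directory tree "in pure Python" can look like, here is a minimal sketch. It is not the code in readmeai.generators.tree; the `render_tree` function is hypothetical, lists directories before files, and stops descending once `max_depth` levels are shown, which is assumed to correspond to the `--tree-depth` option.

```python
from pathlib import Path
from typing import List


def render_tree(root: Path, max_depth: int = 2) -> str:
    """Hypothetical helper: render `root` as a text tree, descending at most `max_depth` levels."""
    lines: List[str] = [f"{root.name}/"]

    def walk(directory: Path, prefix: str, depth: int) -> None:
        if depth >= max_depth:
            return  # depth limit reached: do not list this directory's contents
        # Directories first, then files, each alphabetically, for stable output.
        entries = sorted(directory.iterdir(), key=lambda p: (p.is_file(), p.name.lower()))
        for index, entry in enumerate(entries):
            is_last = index == len(entries) - 1
            connector = "└── " if is_last else "├── "
            lines.append(f"{prefix}{connector}{entry.name}{'/' if entry.is_dir() else ''}")
            if entry.is_dir():
                # Continue the vertical guide only if more siblings follow.
                walk(entry, prefix + ("    " if is_last else "│   "), depth + 1)

    walk(root, "", 0)
    return "\n".join(lines)
```

A real generator would likely also skip ignored paths such as `.git` or virtual environments; the sketch lists everything it finds.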

File Summaries ◎ Summarizes key modules of the project, which are also used as context for downstream prompts.

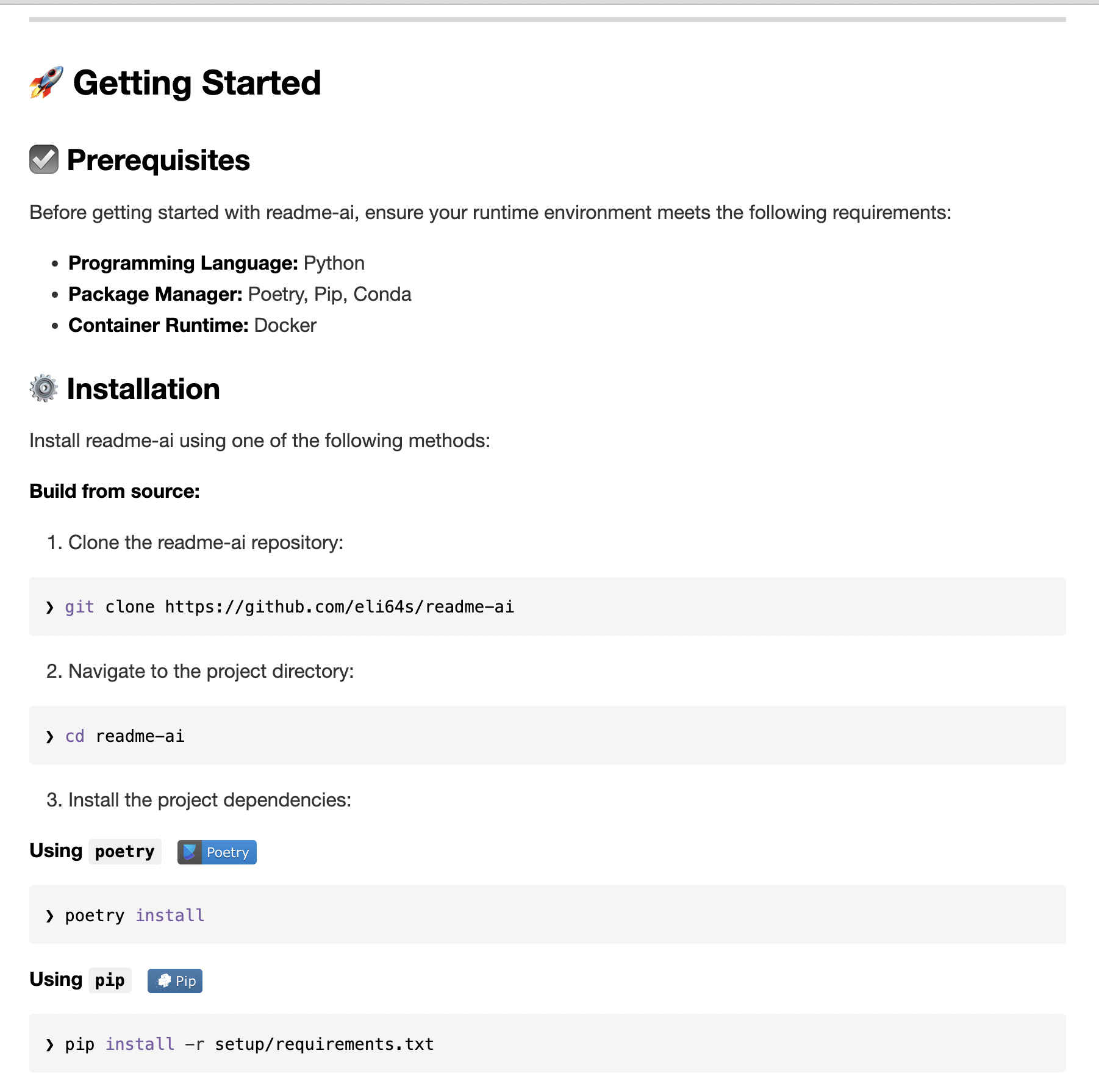
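Because file summaries are reused as context for later prompts, they have to fit inside the model's context window (the `--context-window` option defaults to 3900). The sketch below shows one way summaries could be packed under such a budget. The `pack_summaries` helper is hypothetical, and it estimates tokens crudely as one per whitespace-separated word instead of using a real tokenizer.

```python
from typing import Dict, List


def approx_tokens(text: str) -> int:
    """Crude token estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def pack_summaries(summaries: Dict[str, str], budget: int = 3900) -> str:
    """Hypothetical helper: join per-file summaries in order until the budget would be exceeded."""
    parts: List[str] = []
    used = 0
    for path, summary in summaries.items():
        entry = f"{path}: {summary}"
        cost = approx_tokens(entry)
        if used + cost > budget:
            break  # stop before exceeding the context window
        parts.append(entry)
        used += cost
    return "\n".join(parts)
```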

🚀 Quickstart Instructions

Getting Started Guides ◎ Prerequisites and system requirements are extracted from the codebase during preprocessing. The parsers currently handle the majority of this logic.

Installation Guide ◎
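As an illustration of extracting prerequisites from a codebase, the sketch below pulls package names out of `requirements.txt`-style content. It is not readme-ai's parser; `parse_requirements` is a hypothetical helper that only handles simple PEP 508 lines and ignores comments and pip options such as `-r` or `-e`.

```python
import re
from typing import List

# Leading distribution name of a requirement line,
# e.g. "requests>=2.31" -> "requests", "uvicorn[standard]==0.29" -> "uvicorn".
_NAME = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def parse_requirements(text: str) -> List[str]:
    """Hypothetical helper: return lowercased package names from requirements.txt content."""
    names: List[str] = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop full-line and inline comments
        if not line or line.startswith("-"):  # skip blank lines and pip options
            continue
        match = _NAME.match(line)
        if match:
            names.append(match.group(1).lower())
    return names
```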

🔰 Contributing Guidelines

System Requirements:

- Python: 3.9+

- Package Manager/Container: pip, pipx, docker

- LLM API Service: OpenAI, Ollama, Anthropic, Google Gemini, Offline Mode

Repository URL or Path:

Make sure to have a repository URL or local directory path ready for the CLI.
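Since the CLI accepts either a repository URL or a local directory, a tool has to tell the two apart. The sketch below shows one simple approach; `classify_repository` is hypothetical, treats only `http`/`https` URLs as remote (SSH remotes such as `git@...` are not handled), and is not how readme-ai necessarily does it.

```python
from pathlib import Path
from urllib.parse import urlparse


def classify_repository(value: str) -> str:
    """Hypothetical helper: return "remote" for http(s) URLs, "local" for existing directories."""
    parsed = urlparse(value)
    if parsed.scheme in ("http", "https") and parsed.netloc:
        return "remote"
    if Path(value).expanduser().is_dir():
        return "local"
    raise ValueError(f"not a URL or an existing directory: {value!r}")
```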

LLM API Service:

- OpenAI: Recommended, requires an account setup and API key.

- Ollama: Free and open-source, potentially slower and more resource-intensive.

- Anthropic: Requires an Anthropic account and API key.

- Google Gemini: Requires a Google Cloud account and API key.

- Offline Mode: Generates a boilerplate README without making API calls.

Install readme-ai using your preferred package manager, container, or directly from the source.

❯ pip install readmeai

❯ pipx install readmeai

Tip

Use pipx to install and run Python command-line applications without causing dependency conflicts with other packages!

Pull the latest Docker image from the Docker Hub repository.

❯ docker pull zeroxeli/readme-ai:latest

Build readme-ai

❯ bash setup/setup.sh

- Clone the repository:

❯ git clone https://github.com/eli64s/readme-ai

- Navigate to the readme-ai directory:

❯ cd readme-ai

- Install dependencies using poetry:

❯ poetry install

- Enter the poetry shell environment:

❯ poetry shell

To use the Anthropic and Google Gemini clients, install the optional dependencies.

Anthropic:

❯ pip install "readmeai[anthropic]"

Google Gemini:

❯ pip install "readmeai[gemini]"

OpenAI

Generate an OpenAI API key and set it as the environment variable OPENAI_API_KEY.

# Using Linux or macOS

❯ export OPENAI_API_KEY=<your_api_key>

# Using Windows

❯ set OPENAI_API_KEY=<your_api_key>

Ollama

Pull your model of choice from the Ollama repository:

❯ ollama pull mistral:latest

Start the Ollama server:

❯ export OLLAMA_HOST=127.0.0.1 && ollama serve

See all available models from Ollama here.

Anthropic

Generate an Anthropic API key and set the following environment variable:

❯ export ANTHROPIC_API_KEY=<your_api_key>

Google Gemini

Generate a Google API key and set the following environment variable:

❯ export GOOGLE_API_KEY=<your_api_key>

With OpenAI:

❯ readmeai --api openai --repository https://github.com/eli64s/readme-ai

Important

By default, the gpt-3.5-turbo model is used. Higher costs may be incurred when using more advanced models.

With Ollama:

❯ readmeai --api ollama --model llama3 --repository https://github.com/eli64s/readme-ai

With Anthropic:

❯ readmeai --api anthropic -m claude-3-5-sonnet-20240620 -r https://github.com/eli64s/readme-ai

With Gemini:

❯ readmeai --api gemini -m gemini-1.5-flash -r https://github.com/eli64s/readme-ai

Adding more customization options:

❯ readmeai --repository https://github.com/eli64s/readme-ai \
    --output readmeai.md \
    --api openai \
    --model gpt-4 \
    --badge-color A931EC \
    --badge-style flat-square \
    --header-style compact \
    --toc-style fold \
    --temperature 0.9 \
    --tree-depth 2 \
    --image LLM \
    --emojis

Running the Docker container with the OpenAI API:

❯ docker run -it \
    -e OPENAI_API_KEY=$OPENAI_API_KEY \
    -v "$(pwd)":/app zeroxeli/readme-ai:latest \
    -r https://github.com/eli64s/readme-ai

Try readme-ai directly in your browser, no installation required. See the readme-ai-streamlit repository for more details.

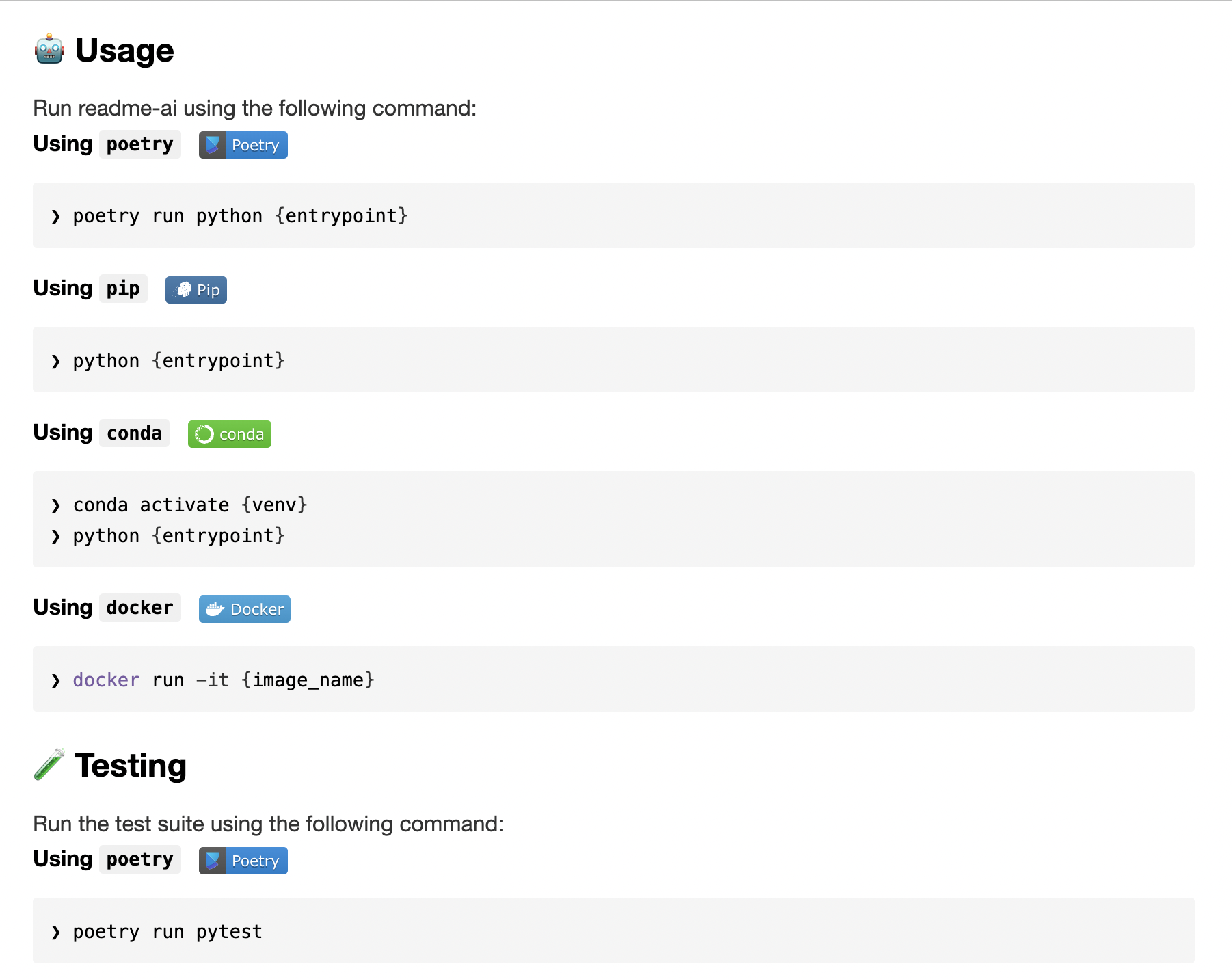

Using readme-ai

❯ conda activate readmeai

❯ python -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

❯ poetry shell

❯ poetry run python -m readmeai.cli.main -r https://github.com/eli64s/readme-ai

The pytest framework and nox automation tool are used for testing the application.

❯ make test

❯ make test-nox

Tip

Use nox to test the application against multiple Python environments and dependencies!

Customize your README generation using these CLI options:

| Option | Description | Default |
|---|---|---|
| --align | Text alignment in the header | center |
| --api | LLM API service provider | offline |
| --badge-color | Badge color name or hex code | 0080ff |
| --badge-style | Badge icon style type | flat |
| --base-url | Base URL for the LLM API | v1/chat/completions |
| --context-window | Maximum context window of the LLM API | 3900 |
| --emojis | Adds emojis to the README header sections | False |
| --header-style | Header template style | classic |
| --image | Project logo image | blue |
| --model | Specific LLM model to use | gpt-3.5-turbo |
| --output | Output filename | readme-ai.md |
| --rate-limit | Maximum API requests per minute | 10 |
| --repository | Repository URL or local directory path | None |
| --temperature | Creativity level for content generation | 0.1 |
| --toc-style | Table of contents template style | bullet |
| --top-p | Cumulative probability threshold for top-p (nucleus) sampling | 0.9 |
| --tree-depth | Maximum depth of the directory tree structure | 2 |

Tip

For a full list of options, run readmeai --help in your terminal.
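How readme-ai enforces `--rate-limit` internally is not described here. The sketch below shows one common technique for a "maximum requests per minute" cap, a sliding-window limiter; the `RequestsPerMinuteLimiter` class is hypothetical, and its clock is injectable so the behavior can be checked without waiting.

```python
import time
from collections import deque
from typing import Callable, Deque


class RequestsPerMinuteLimiter:
    """Hypothetical sliding-window limiter: at most `limit` requests in any 60-second window."""

    def __init__(self, limit: int = 10, clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.clock = clock
        self.window = 60.0
        self.sent: Deque[float] = deque()  # timestamps of requests inside the window

    def try_acquire(self) -> bool:
        """Record a request and return True if it fits the budget; otherwise return False."""
        now = self.clock()
        # Forget requests that have aged out of the 60-second window.
        while self.sent and now - self.sent[0] >= self.window:
            self.sent.popleft()
        if len(self.sent) < self.limit:
            self.sent.append(now)
            return True
        return False
```

A caller that receives False could sleep briefly and retry; the limiter itself never blocks.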

To see the full list of customization options, check out the Configuration section in the official documentation. This section provides a detailed overview of all available CLI options and how to use them, including badge styles, header templates, and more.
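The `--temperature` and `--top-p` values are typically passed through to the LLM provider, which performs the sampling itself. To show what the two knobs mean, here is a minimal sketch of the standard technique, temperature scaling followed by top-p (nucleus) filtering, over a toy token distribution; `nucleus_distribution` is a hypothetical helper, with defaults copied from the options table.

```python
import math
from typing import Dict, List, Tuple


def nucleus_distribution(
    logits: Dict[str, float], temperature: float = 0.1, top_p: float = 0.9
) -> Dict[str, float]:
    """Hypothetical helper: temperature-scaled softmax, then keep the smallest set of
    most-probable tokens whose cumulative probability reaches top_p, renormalized."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Lower temperature sharpens the distribution; subtract the max for numerical stability.
    scaled = {tok: value / temperature for tok, value in logits.items()}
    peak = max(scaled.values())
    weights = {tok: math.exp(v - peak) for tok, v in scaled.items()}
    total = sum(weights.values())
    probs = sorted(((tok, w / total) for tok, w in weights.items()), key=lambda kv: kv[1], reverse=True)

    # Top-p filter: take tokens in descending probability until the mass reaches top_p.
    kept: List[Tuple[str, float]] = []
    cumulative = 0.0
    for tok, p in probs:
        kept.append((tok, p))
        cumulative += p
        if cumulative >= top_p:
            break
    mass = sum(p for _, p in kept)
    return {tok: p / mass for tok, p in kept}
```

With the low default temperature of 0.1, nearly all probability mass lands on the top token, so top-p keeps only that token; raising the temperature (as in the `--temperature 0.9` example) lets more candidates survive.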

| Language/Framework | Output File | Input Repository | Description |
|---|---|---|---|
| Python | readme-python.md | readme-ai | Core readme-ai project |
| TypeScript & React | readme-typescript.md | ChatGPT App | React Native ChatGPT app |
| PostgreSQL & DuckDB | readme-postgres.md | Buenavista | Postgres proxy server |
| Kotlin & Android | readme-kotlin.md | file.io Client | Android file sharing app |
| Streamlit | readme-streamlit.md | readme-ai-streamlit | Streamlit UI for readme-ai app |
| Rust & C | readme-rust-c.md | CallMon | System call monitoring tool |
| Docker & Go | readme-go.md | docker-gs-ping | Dockerized Go app |
| Java | readme-java.md | Minimal-Todo | Minimalist todo Java app |
| FastAPI & Redis | readme-fastapi-redis.md | async-ml-inference | Async ML inference service |
| Jupyter Notebook | readme-mlops.md | mlops-course | MLOps course repository |
| Apache Flink | readme-local.md | Local Directory | Example using a local directory |

See additional README files generated by readme-ai here.

- Release readmeai 1.0.0 with enhanced documentation management features.

- Develop a VS Code extension to generate README files directly in the editor.

- Develop GitHub Actions to automate documentation updates.

- Add badge packs to provide additional badge styles and options.
  - Code coverage, CI/CD status, project version, and more.

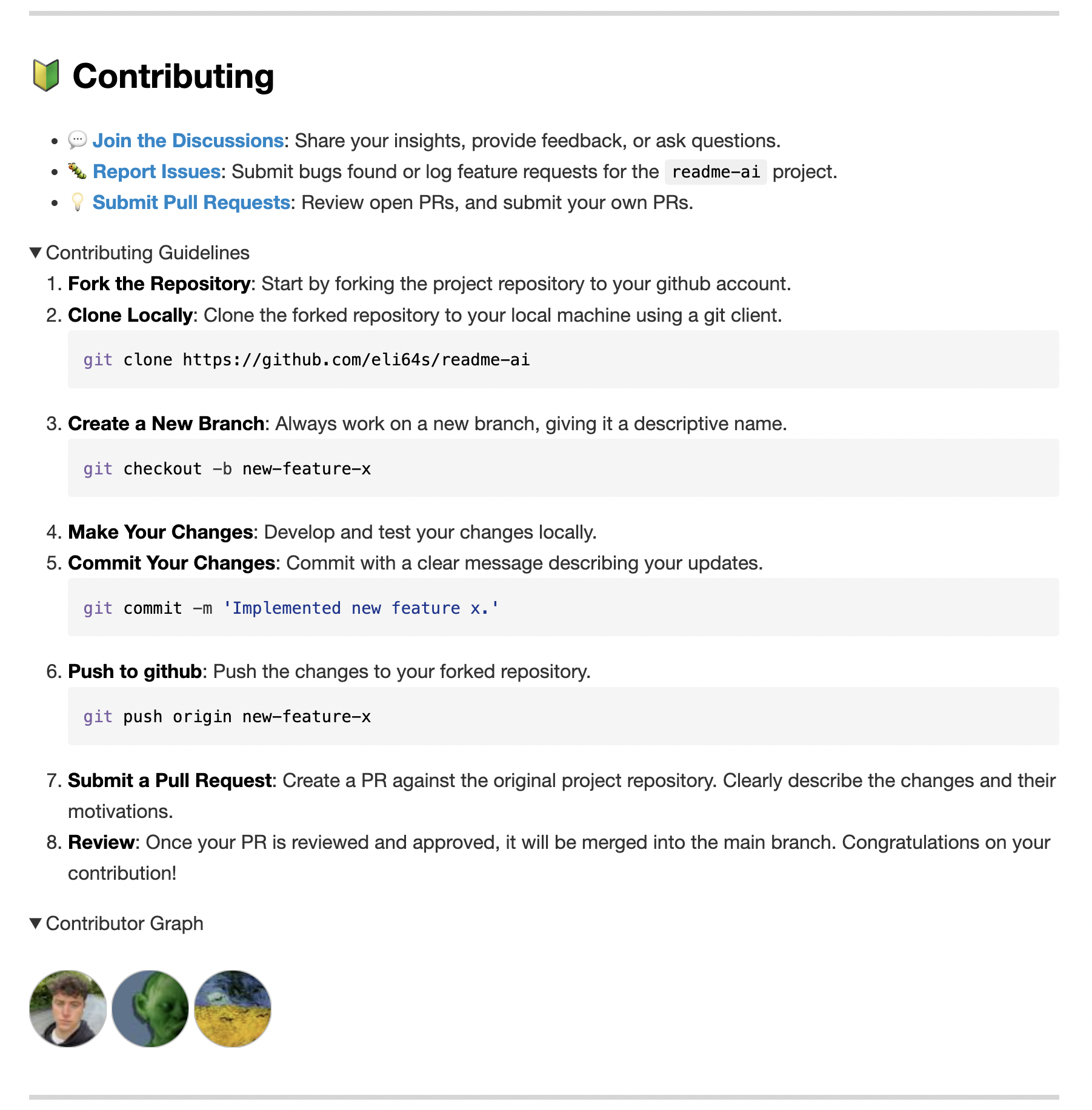

Contributions are welcome and encouraged! If interested, please begin by reviewing the resources below:

- 💡 Contributing Guide: Learn about our contribution process, coding standards, and how to submit your ideas.

- 💬 Start a Discussion: Have questions or suggestions? Join our community discussions to share your thoughts and engage with others.

- 🐛 Report an Issue: Found a bug or have a feature request? Let us know by opening an issue so we can address it promptly.