High Performance Machine Learning Distribution

We are currently rebuilding SHARK to take advantage of Turbine. Until that is complete, make sure you use an .exe release or a checkout of the `SHARK-1.0` branch for a working SHARK.

Prerequisites - Drivers

- [AMD RDNA Users] Download the latest driver (23.2.1 is the oldest supported) here.

- [macOS Users] Download and install the 1.3.216 Vulkan SDK from here. Newer versions of the SDK will not work.

- [Nvidia Users] Download and install the latest CUDA / Vulkan drivers from here.

- MESA / RADV drivers won't work with FP16. Please use the latest AMDGPU-PRO drivers (the non-pro OSS drivers also won't work) or the latest Nvidia Linux drivers.

Other users, please ensure you have your latest vendor drivers and the Vulkan SDK from here. If you are using Vulkan, check that `vulkaninfo` works in a terminal window.
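The `vulkaninfo` check can also be scripted, for example before launching SHARK from a wrapper. The following is a minimal sketch, not part of SHARK: the helper name `vulkaninfo_works` and the 30-second timeout are assumptions made for illustration.

```python
import shutil
import subprocess


def vulkaninfo_works(timeout_s: float = 30.0) -> bool:
    """Return True if `vulkaninfo` is on PATH and exits with status 0."""
    exe = shutil.which("vulkaninfo")
    if exe is None:
        # Vulkan tools are not installed, or not on PATH.
        return False
    try:
        completed = subprocess.run(
            [exe],
            stdout=subprocess.DEVNULL,  # the report is long; only the exit code matters here
            stderr=subprocess.DEVNULL,
            timeout=timeout_s,
            check=False,
        )
    except (OSError, subprocess.TimeoutExpired):
        return False
    return completed.returncode == 0


if __name__ == "__main__":
    print("vulkaninfo OK" if vulkaninfo_works() else "vulkaninfo missing or failing")
```

A `False` result only says that the tool is missing or failed; running `vulkaninfo` by hand in a terminal shows the actual error.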

Install the driver from [Prerequisites](https://github.com/nod-ai/SHARK#install-your-hardware-drivers) above.

Download the stable release or the most recent SHARK 1.0 pre-release.

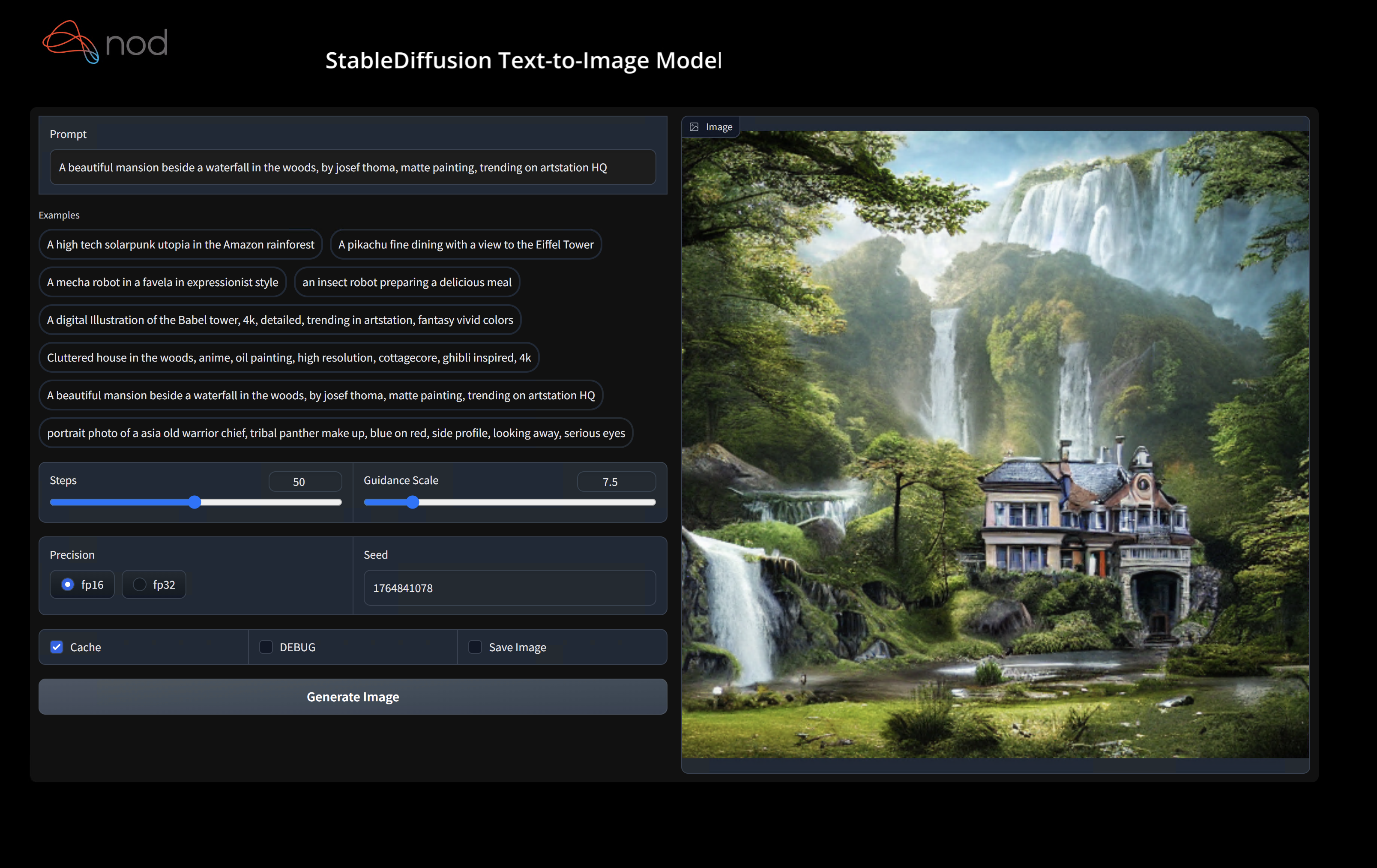

Double-click the .exe, or run from the command line (recommended), and you should have the UI in the browser.

If you have custom models, put them in a `models/` directory where the .exe is.

Enjoy.

More installation notes

* We recommend that you download the EXE into a new folder whenever you download a new EXE version. If you download it into the same folder as a previous install, you must delete the old `*.vmfb` files with `rm *.vmfb`. You can also use the `--clear_all` flag once to clean all the old files.

* If you recently updated the driver or this binary (EXE file), we recommend you clear all the local artifacts with `--clear_all`.

- Open a Command Prompt or PowerShell terminal and change folder (`cd`) to the .exe folder. Then run the EXE from the command prompt. That way, if an error occurs, you'll be able to cut-and-paste it to ask for help. (If it always works for you without error, you may simply double-click the EXE.)

- The first run may take a few minutes while the models are downloaded and compiled. Your patience is appreciated. The download could be about 5GB.

- You will likely see a Windows Defender message asking you to give permission to open a web server port. Accept it.

- Open a browser to access the Stable Diffusion web server. By default, the port is 8080, so you can go to http://localhost:8080/.

- If you prefer to always run in the browser, use the `--ui=web` command argument when running the EXE.

- To stop SHARK, select the command prompt that's running the EXE. Press CTRL-C and wait a moment, or close the terminal.
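Two of the notes above lend themselves to a small script: removing old `*.vmfb` files from the EXE folder, and waiting for the web server on port 8080 to accept connections. This is a rough sketch using only the Python standard library. The function names are hypothetical and not part of SHARK, and the 60-second default timeout is an arbitrary choice (a first run that downloads and compiles models can take longer).

```python
import socket
import time
from pathlib import Path


def remove_stale_vmfb(folder: str) -> list[str]:
    """Delete every *.vmfb file directly inside `folder`; return the removed names."""
    removed = []
    for path in sorted(Path(folder).glob("*.vmfb")):
        path.unlink()
        removed.append(path.name)
    return removed


def wait_for_port(host: str = "localhost", port: int = 8080, timeout_s: float = 60.0) -> bool:
    """Poll until a TCP connection to host:port succeeds or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            time.sleep(0.5)  # server not up yet; retry shortly
    return False
```

For example, `remove_stale_vmfb(".")` mirrors `rm *.vmfb` in the current folder, and `wait_for_port()` returns `True` once http://localhost:8080/ is reachable.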

Advanced Installation (Only for developers)

- Install Git for Windows from here if you don't already have it.

git clone https://github.com/nod-ai/SHARK.git

cd SHARK

Currently SHARK is being rebuilt for Turbine on the `main` branch. For now you are strongly discouraged from using `main` unless you are working on the rebuild effort, and you should not expect the code there to produce a working application for image generation. For now, you'll need to switch over to the `SHARK-1.0` branch and use the stable code.

git checkout SHARK-1.0

The following setup instructions assume you are on this branch.

- Install the latest Python 3.11.x version from here.

Windows (PowerShell):

set-executionpolicy remotesigned

./setup_venv.ps1 #You can re-run this script to get the latest version

Linux / macOS:

./setup_venv.sh

source shark1.venv/bin/activate

To run the web UI on Windows:

(shark1.venv) PS C:\g\shark> cd .\apps\stable_diffusion\web\

(shark1.venv) PS C:\g\shark\apps\stable_diffusion\web> python .\index.py

On Linux / macOS:

(shark1.venv) > cd apps/stable_diffusion/web

(shark1.venv) > python index.py

Access Stable Diffusion on http://localhost:8080/?__theme=dark

To run from the command line on Windows:

(shark1.venv) PS C:\g\shark> python .\apps\stable_diffusion\scripts\main.py --app="txt2img" --precision="fp16" --prompt="tajmahal, snow, sunflowers, oil on canvas" --device="vulkan"

On Linux / macOS:

python3.11 apps/stable_diffusion/scripts/main.py --app=txt2img --precision=fp16 --device=vulkan --prompt="tajmahal, oil on canvas, sunflowers, 4k, uhd"

You can replace `vulkan` with `cpu` to run on your CPU or with `cuda` to run on CUDA devices. If you have multiple Vulkan devices, you can address them with `--device=vulkan://1` etc.

The output on an AMD 7900XTX would look something like:

Average step time: 47.19188690185547ms/it

Clip Inference time (ms) = 109.531

VAE Inference time (ms): 78.590

Total image generation time: 2.5788655281066895sec

Here are some samples generated:

Find us on the SHARK Discord server if you have any trouble running it on your hardware.
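When comparing runs or reporting performance, it can help to pull the numbers out of the timing lines shown in the sample output above. The sketch below parses those four lines with regular expressions. The sample text is copied from this document; the helper name `parse_timings` and the dictionary keys are illustrative choices, and the patterns assume the log format matches the sample.

```python
import re

SAMPLE_LOG = """\
Average step time: 47.19188690185547ms/it
Clip Inference time (ms) = 109.531
VAE Inference time (ms): 78.590
Total image generation time: 2.5788655281066895sec
"""

# Patterns tolerate missing spaces and either "=" or ":" before the value.
_PATTERNS = {
    "step_ms": r"Average step time:\s*([\d.]+)\s*ms/it",
    "clip_ms": r"Clip Inference\s*time\s*\(ms\)\s*[=:]\s*([\d.]+)",
    "vae_ms": r"VAE Inference\s*time\s*\(ms\)\s*[=:]\s*([\d.]+)",
    "total_s": r"Total image generation time:\s*([\d.]+)\s*sec",
}


def parse_timings(log_text: str) -> dict[str, float]:
    """Extract the timing values that are present in `log_text`."""
    timings = {}
    for key, pattern in _PATTERNS.items():
        match = re.search(pattern, log_text)
        if match:
            timings[key] = float(match.group(1))
    return timings


timings = parse_timings(SAMPLE_LOG)
# Time not spent in CLIP or VAE, expressed as a number of average-length steps.
other_ms = timings["total_s"] * 1000 - timings["clip_ms"] - timings["vae_ms"]
print(timings)
print(f"approx. steps: {other_ms / timings['step_ms']:.1f}")
```

The step estimate is only a rough consistency check, since the total also includes overhead outside the denoising loop.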

Binary Installation

This step sets up a new VirtualEnv for Python

python --version #Check you have 3.11 on Linux, macOS or Windows Powershell

python -m venv shark_venv

source shark_venv/bin/activate #Use shark_venv/Scripts/activate on Windows

#If you are using conda create and activate a new conda env

#Some older pip installs may not be able to handle the recent PyTorch deps

python -m pip install --upgrade pip

macOS Metal users, please install https://sdk.lunarg.com/sdk/download/latest/mac/vulkan-sdk.dmg and enable "System wide install".

This step pip installs SHARK and related packages on Linux Python 3.8, 3.10 and 3.11 and macOS / Windows Python 3.11

pip install nodai-shark -f https://nod-ai.github.io/SHARK/package-index/ -f https://llvm.github.io/torch-mlir/package-index/ -f https://nod-ai.github.io/SRT/pip-release-links.html --extra-index-url https://download.pytorch.org/whl/nightly/cpu

pytest tank/test_models.py

See tank/README.md for a more detailed walkthrough of our pytest suite and CLI.
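The Python versions listed for the pip install step can be checked before installing. This is a small sketch that encodes the version matrix exactly as stated above (Linux 3.8, 3.10 and 3.11; macOS and Windows 3.11). The table and the `is_supported` helper are illustrative and are not read from the package metadata.

```python
import platform
import sys

# (major, minor) versions per OS, as returned by platform.system().
SUPPORTED = {
    "Linux": {(3, 8), (3, 10), (3, 11)},
    "Darwin": {(3, 11)},  # macOS
    "Windows": {(3, 11)},
}


def is_supported(system: str, version: tuple[int, int]) -> bool:
    """Return True if `version` is listed for `system` in the table above."""
    return version in SUPPORTED.get(system, set())


if __name__ == "__main__":
    current = (sys.version_info.major, sys.version_info.minor)
    verdict = "listed" if is_supported(platform.system(), current) else "not listed"
    print(f"{platform.system()} Python {current[0]}.{current[1]}: {verdict}")
```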

curl -O https://raw.githubusercontent.com/nod-ai/SHARK/main/shark/examples/shark_inference/resnet50_script.py

#Install deps for test script

pip install --pre torch torchvision torchaudio tqdm pillow gsutil --extra-index-url https://download.pytorch.org/whl/nightly/cpu

python ./resnet50_script.py --device="cpu" #use cuda or vulkan or metal

curl -O https://raw.githubusercontent.com/nod-ai/SHARK/main/shark/examples/shark_inference/minilm_jit.py

#Install deps for test script

pip install transformers torch --extra-index-url https://download.pytorch.org/whl/nightly/cpu

python ./minilm_jit.py --device="cpu" #use cuda or vulkan or metal

Development, Testing and Benchmarks

If you want to use Python 3.11 with the TF import tools, you can use environment variables like this:

Set `USE_IREE=1` to use upstream IREE.

# PYTHON=python3.11 VENV_DIR=0617_venv IMPORTER=1 ./setup_venv.sh
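The same environment variables can be set from a Python driver script instead of the shell. The sketch below only builds the command and environment; it does not run anything. The variable names (`PYTHON`, `VENV_DIR`, `IMPORTER`, `USE_IREE`) come from the text above, while the helper `setup_venv_invocation` is a hypothetical name introduced here.

```python
import os
import shlex


def setup_venv_invocation(python="python3.11", venv_dir="0617_venv",
                          importer=True, use_iree=False):
    """Return (argv, env) for running ./setup_venv.sh with the documented variables."""
    env = dict(os.environ)  # start from the current environment
    env["PYTHON"] = python
    env["VENV_DIR"] = venv_dir
    if importer:
        env["IMPORTER"] = "1"
    if use_iree:
        env["USE_IREE"] = "1"
    return ["./setup_venv.sh"], env


argv, env = setup_venv_invocation()
shown = {k: env[k] for k in ("PYTHON", "VENV_DIR", "IMPORTER", "USE_IREE") if k in env}
print(" ".join(f"{k}={shlex.quote(v)}" for k, v in shown.items()), *argv)
# To actually run it from the SHARK checkout:
#   import subprocess; subprocess.run(argv, env=env, check=True)
```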

python -m shark.examples.shark_inference.resnet50_script --device="cpu" #Use gpu | vulkan

#Or a pytest

pytest tank/test_models.py -k "MiniLM"

If you are a Torch-MLIR developer or an IREE developer and want to test local changes, you can uninstall the provided packages with `pip uninstall torch-mlir` and / or `pip uninstall iree-compiler iree-runtime`, build locally with Python bindings, and set your PYTHONPATH as mentioned here for IREE and here for Torch-MLIR.

How to use your locally built Torch-MLIR with SHARK:

1.) Run `./setup_venv.sh` in SHARK and activate the `shark.venv` virtual env.

2.) Run `pip uninstall torch-mlir`.

3.) Go to your local Torch-MLIR directory.

4.) Activate the mlir_venv virtual environment.

5.) Run `pip uninstall -r requirements.txt`.

6.) Run `pip install -r requirements.txt`.

7.) Build Torch-MLIR.

8.) Activate the shark.venv virtual environment from the Torch-MLIR directory.

9.) Run ``export PYTHONPATH=`pwd`/build/tools/torch-mlir/python_packages/torch_mlir:`pwd`/examples`` in the Torch-MLIR directory.

10.) Go to the SHARK directory.

Now SHARK will use your locally built Torch-MLIR repo.
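To double-check the `PYTHONPATH` step, the value can be built and inspected from Python. This sketch reconstructs the two entries from the export command above for a given Torch-MLIR checkout, and reports where `torch_mlir` would be imported from. Both helper names are hypothetical, and the directory layout is assumed to match the export command.

```python
import importlib.util
import os
from pathlib import Path


def torch_mlir_pythonpath(torch_mlir_dir: str) -> str:
    """Build the PYTHONPATH value for a local Torch-MLIR checkout."""
    root = Path(torch_mlir_dir).resolve()
    entries = [
        root / "build" / "tools" / "torch-mlir" / "python_packages" / "torch_mlir",
        root / "examples",
    ]
    return os.pathsep.join(str(p) for p in entries)


def torch_mlir_location():
    """Return the file torch_mlir would be imported from, or None if it is not importable."""
    spec = importlib.util.find_spec("torch_mlir")
    return None if spec is None else spec.origin


if __name__ == "__main__":
    print("PYTHONPATH =", torch_mlir_pythonpath("."))
    print("torch_mlir resolves to:", torch_mlir_location())
```

If `torch_mlir_location()` still points into the virtual environment's `site-packages`, the pip-installed package is shadowing the local build.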

To produce benchmarks of individual dispatches, you can add `--dispatch_benchmarks=All --dispatch_benchmarks_dir=<output_dir>` to your pytest command line arguments.

If you only want to compile specific dispatches, you can specify them with a space-separated string instead of `"All"`, e.g. `--dispatch_benchmarks="0 1 2 10"`.
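To make the two accepted forms concrete, here is a sketch of how such a value could be interpreted: either the word "All" or a space-separated list of dispatch indices. This is not SHARK's actual parsing code. The function `parse_dispatch_spec` is hypothetical, and treating "All" case-insensitively is an assumption.

```python
def parse_dispatch_spec(spec: str):
    """Return "all", or a sorted list of unique dispatch indices."""
    text = spec.strip()
    if text.lower() == "all":
        return "all"
    indices = set()
    for token in text.split():
        if not token.isdigit():
            raise ValueError(f"not a dispatch index: {token!r}")
        indices.add(int(token))
    if not indices:
        raise ValueError("empty dispatch specification")
    return sorted(indices)


print(parse_dispatch_spec("All"))
print(parse_dispatch_spec("0 1 2 10"))
```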

For example, to generate and run dispatch benchmarks for MiniLM on CUDA:

pytest -k "MiniLM and torch and static and cuda" --dispatch_benchmarks=All -s --dispatch_benchmarks_dir=./my_dispatch_benchmarks

The given command will populate `<dispatch_benchmarks_dir>/<model_name>/` with an `ordered_dispatches.txt` that lists and orders the dispatches and their latencies, as well as a folder for each dispatch that contains the .mlir, the .vmfb, and the benchmark results for that dispatch.

If you want to instead incorporate this into a Python script, you can pass the `dispatch_benchmarks` and `dispatch_benchmarks_dir` arguments when initializing `SharkInference`, and the benchmarks will be generated when the module is compiled. For example:

shark_module = SharkInference(
    mlir_model,
    device=args.device,
    mlir_dialect="tm_tensor",
    dispatch_benchmarks="all",
    dispatch_benchmarks_dir="results",
)

Output will include:

- An ordered list ordered-dispatches.txt of all the dispatches with their runtime

- Inside the specified directory, there will be a directory for each dispatch (there will be .mlir files for all dispatches, but only compiled binaries and benchmark data for the specified dispatches)

  - An .mlir file containing the dispatch benchmark

  - A compiled .vmfb file containing the dispatch benchmark

  - An .mlir file containing just the hal executable

  - A compiled .vmfb file of the hal executable

  - A .txt file containing benchmark output
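A quick way to see which dispatches actually received compiled binaries and benchmark output is to walk the output directory and count files by extension. The sketch below assumes only the layout described in the list above (one sub-directory per dispatch containing `.mlir`, `.vmfb`, and `.txt` files). The function names are hypothetical, and no particular file or directory naming is assumed.

```python
from collections import Counter
from pathlib import Path


def summarize_dispatch_dirs(model_dir: str) -> dict[str, Counter]:
    """Map each sub-directory name to a count of its files by extension."""
    summary = {}
    for entry in sorted(Path(model_dir).iterdir()):
        if entry.is_dir():
            summary[entry.name] = Counter(
                f.suffix for f in entry.iterdir() if f.is_file()
            )
    return summary


def fully_benchmarked(summary: dict[str, Counter]) -> list[str]:
    """Names of sub-directories that contain .mlir, .vmfb and .txt files."""
    needed = {".mlir", ".vmfb", ".txt"}
    return [name for name, counts in summary.items() if needed <= set(counts)]
```

For example, `fully_benchmarked(summarize_dispatch_dirs("results/<model_name>"))` would list the dispatches that were compiled and benchmarked, as opposed to those that only have `.mlir` files.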

See tank/README.md for further instructions on how to run model tests and benchmarks from the SHARK tank.

API Reference

from shark.shark_importer import SharkImporter

# SharkImporter imports mlir file from the torch, tensorflow or tf-lite module.
mlir_importer = SharkImporter(
    torch_module,
    (input,),  # example inputs, passed as a tuple
    frontend="torch",  # tf, tf-lite
)
torch_mlir, func_name = mlir_importer.import_mlir(tracing_required=True)

# SharkInference accepts mlir in linalg, mhlo, and tosa dialect.
from shark.shark_inference import SharkInference

shark_module = SharkInference(torch_mlir, device="cpu", mlir_dialect="linalg")
shark_module.compile()
result = shark_module.forward((input,))

from shark.shark_inference import SharkInference
import numpy as np

mhlo_ir = r"""builtin.module {
      func.func @forward(%arg0: tensor<1x4xf32>, %arg1: tensor<4x1xf32>) -> tensor<4x4xf32> {
        %0 = chlo.broadcast_add %arg0, %arg1 : (tensor<1x4xf32>, tensor<4x1xf32>) -> tensor<4x4xf32>
        %1 = "mhlo.abs"(%0) : (tensor<4x4xf32>) -> tensor<4x4xf32>
        return %1 : tensor<4x4xf32>
      }
}"""

arg0 = np.ones((1, 4)).astype(np.float32)
arg1 = np.ones((4, 1)).astype(np.float32)

shark_module = SharkInference(mhlo_ir, device="cpu", mlir_dialect="mhlo")
shark_module.compile()
result = shark_module.forward((arg0, arg1))
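For reference, the value this MHLO module should produce can be computed with NumPy alone: `chlo.broadcast_add` broadcasts the (1, 4) and (4, 1) inputs to (4, 4), and `mhlo.abs` takes the element-wise absolute value. The sketch below does not call SHARK. The commented comparison at the end assumes that `result` can be converted to a NumPy array.

```python
import numpy as np

arg0 = np.ones((1, 4)).astype(np.float32)
arg1 = np.ones((4, 1)).astype(np.float32)

# chlo.broadcast_add: (1, 4) + (4, 1) broadcasts to (4, 4).
# mhlo.abs: element-wise absolute value.
expected = np.abs(arg0 + arg1)

print(expected.shape, expected.dtype)
# To compare with the SHARK output (assuming `result` is array-like):
#   np.testing.assert_allclose(np.asarray(result), expected)
```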

SHARK is maintained to support the latest innovations in ML Models:

| TF HuggingFace Models | SHARK-CPU | SHARK-CUDA | SHARK-METAL |
|---|---|---|---|
| BERT | 💚 | 💚 | 💚 |
| DistilBERT | 💚 | 💚 | 💚 |
| GPT2 | 💚 | 💚 | 💚 |
| BLOOM | 💚 | 💚 | 💚 |
| Stable Diffusion | 💚 | 💚 | 💚 |
| Vision Transformer | 💚 | 💚 | 💚 |
| ResNet50 | 💚 | 💚 | 💚 |

For a complete list of the models supported in SHARK, please refer to tank/README.md.

- SHARK Discord server: Real time discussions with the SHARK team and other users

- GitHub issues: Feature requests, bugs etc.

IREE Project Channels

- Upstream IREE issues: Feature requests, bugs, and other work tracking

- Upstream IREE Discord server: Daily development discussions with the core team and collaborators

- iree-discuss email list: Announcements, general and low-priority discussion

MLIR and Torch-MLIR Project Channels

- `#torch-mlir` channel on the LLVM Discord - this is the most active communication channel

- Torch-MLIR GitHub issues here

- torch-mlir section of LLVM Discourse

- Weekly meetings on Mondays 9AM PST. See here for more information.

- MLIR topic within LLVM Discourse

SHARK and IREE are enabled by and heavily rely on MLIR.

nod.ai SHARK is licensed under the terms of the Apache 2.0 License with LLVM Exceptions. See LICENSE for more information.